AI Assistant: Ask Your Metrics Questions in Natural Language

Dashboards are great — until you need an answer that doesn't fit any pre-built chart. "What was our average PR review time last month, excluding the infra team?" That query doesn't have a button. It requires filtering, grouping, and calculating across multiple dimensions.

PanDev's AI assistant lets you ask questions like this in plain English. Powered by Google Gemini, it understands your engineering data and returns answers, charts, and tables in seconds. Gartner predicts that by 2026, over 80% of enterprises will have used generative AI APIs or deployed AI-augmented applications — natural-language analytics is part of that shift.

{/* truncate */}

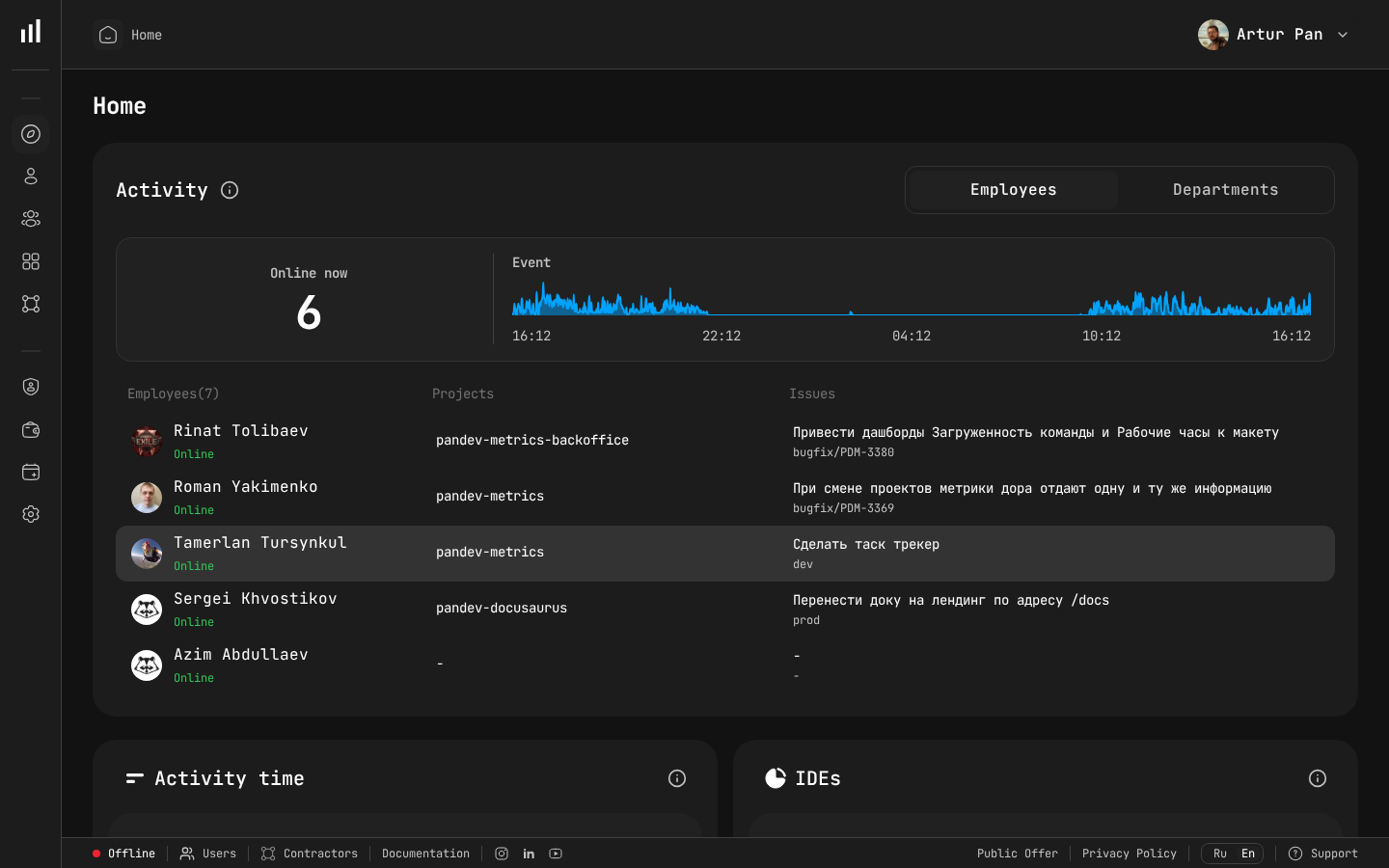

PanDev dashboard — the data the AI assistant queries in natural language.

How It Works

The AI assistant sits between you and PanDev's data layer:

1. You type a question in natural language.

2. Google Gemini (the language model) understands your intent and generates a structured query.

3. PanDev's query engine runs that structured query against your data.

4. The response comes back formatted as tables, charts, or summaries.

The AI model never sees your raw data. It translates your question into a structured query, PanDev's engine executes it against your database, and the results come back formatted.
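The following is a minimal Python sketch of that data flow. Everything in it is hypothetical. `StructuredQuery`, `translate_question`, and `run_query` are illustrative names, not PanDev's API, and a stub stands in for the language model. The sketch shows that only the question text is handed to the model, while the rows are filtered and aggregated locally.

```python
from dataclasses import dataclass, field
from statistics import mean

# Hypothetical structured query: the only artifact the language model produces.
@dataclass
class StructuredQuery:
    dataset: str                                  # e.g. "pull_requests"
    metric: str                                   # column to aggregate
    aggregate: str                                # "count" or "avg"
    filters: dict = field(default_factory=dict)   # exact-match conditions

def translate_question(question: str) -> StructuredQuery:
    """Stand-in for the LLM call: it receives the question text, never the data."""
    # A real system would send `question` plus a schema description to the model
    # and parse its reply; here the reply is hard-coded.
    return StructuredQuery(dataset="pull_requests", metric="cycle_hours",
                           aggregate="avg", filters={"state": "merged"})

def run_query(query: StructuredQuery, tables: dict) -> float:
    """Execute the query locally; the rows never leave this function."""
    rows = [row for row in tables[query.dataset]
            if all(row.get(k) == v for k, v in query.filters.items())]
    if query.aggregate == "count":
        return float(len(rows))
    return mean(row[query.metric] for row in rows)

tables = {"pull_requests": [
    {"state": "merged", "cycle_hours": 12.0},
    {"state": "merged", "cycle_hours": 20.0},
    {"state": "open", "cycle_hours": 99.0},
]}
query = translate_question("What was our average cycle time last month?")
print(run_query(query, tables))  # 16.0
```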

What the AI Can Access

The AI assistant queries the same data available in your PanDev dashboards:

- Commits, branches, and code changes

- Pull requests / merge requests — open, merged, closed, review activity

- IDE activity — coding time per developer, project, language

- Task data from Jira, ClickUp, or Yandex.Tracker

- Sprint and epic metrics

- DORA metrics

- Team structure and user profiles

It cannot access source code, file contents, or anything outside PanDev's metrics database.
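One way to enforce a boundary like this is to validate every generated query against an allowlist before it runs. The sketch below is an assumption about how that could work, not a description of PanDev's internals. The dataset names, `QueryRejected`, and `validate_query` are all hypothetical.

```python
# Hypothetical allowlist of metric datasets the assistant may read.
ALLOWED_DATASETS = {
    "commits", "pull_requests", "ide_activity", "tasks",
    "sprints", "dora", "teams",
}
# Read-only operations; anything that writes is rejected.
READ_ONLY_OPERATIONS = {"select", "count", "avg", "sum"}

class QueryRejected(Exception):
    pass

def validate_query(dataset: str, operation: str) -> None:
    """Reject anything outside the metrics allowlist or any non-read operation."""
    if dataset not in ALLOWED_DATASETS:
        raise QueryRejected(f"dataset not allowed: {dataset}")
    if operation not in READ_ONLY_OPERATIONS:
        raise QueryRejected(f"operation not allowed: {operation}")

validate_query("pull_requests", "count")      # passes silently
try:
    validate_query("source_files", "select")  # source code is out of scope
except QueryRejected as err:
    print(err)  # dataset not allowed: source_files
```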

Getting Started

Access the Assistant

- Log in to PanDev Metrics

- Click the AI Assistant icon in the top navigation bar (or press Ctrl+K, or Cmd+K on Mac)

- Type your question and press Enter

That's it. No setup, no API keys, no configuration.

Your First Questions

Start simple to see how the assistant works:

You: "How many PRs did we merge last week?"

AI: Last week (Mar 3–9), your organization merged 47 pull requests across 8 repositories.

Top repositories:

| Repository | PRs Merged |
|---|---|
| backend-api | 14 |
| frontend-app | 11 |
| mobile-ios | 8 |
| infrastructure | 6 |
| shared-libs | 5 |
| other (3 repos) | 3 |

You: "What was our average cycle time this month?"

AI: Average PR cycle time for March 2026: 18.4 working hours.

Breakdown:

- Coding time (first commit → PR open): 6.2h avg
- Time to first review: 4.8h avg
- Review and iteration: 5.1h avg
- Merge after approval: 2.3h avg

Compared to February, cycle time decreased by 12% (from 20.9h).
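The breakdown above divides each PR's cycle time into four consecutive phases, so the phases add up to the total (6.2 + 4.8 + 5.1 + 2.3 = 18.4h). Below is a minimal sketch of that decomposition. It assumes each PR record carries five timestamps; the field names are hypothetical, and the sample times were chosen so the phases match the averages above. The sketch uses plain elapsed hours. A real working-hours calculation would also subtract nights, weekends, and holidays, which this sketch omits.

```python
from datetime import datetime

# Hypothetical timestamps for one PR; field names are illustrative.
pr = {
    "first_commit": datetime(2026, 3, 2, 9, 0),
    "pr_opened":    datetime(2026, 3, 2, 15, 12),
    "first_review": datetime(2026, 3, 2, 20, 0),
    "approved":     datetime(2026, 3, 3, 1, 6),
    "merged":       datetime(2026, 3, 3, 3, 24),
}

def hours(start: datetime, end: datetime) -> float:
    return (end - start).total_seconds() / 3600

def cycle_phases(pr: dict) -> dict:
    """Split cycle time (first commit -> merge) into four consecutive phases."""
    return {
        "coding": hours(pr["first_commit"], pr["pr_opened"]),
        "time_to_first_review": hours(pr["pr_opened"], pr["first_review"]),
        "review_and_iteration": hours(pr["first_review"], pr["approved"]),
        "merge_after_approval": hours(pr["approved"], pr["merged"]),
    }

phases = cycle_phases(pr)
total = sum(phases.values())  # equals hours(first_commit, merged): the phases are contiguous
print({name: round(h, 1) for name, h in phases.items()}, round(total, 1))

# Month-over-month change: 20.9h in February -> 18.4h in March
change_pct = (18.4 - 20.9) / 20.9 * 100
print(f"{change_pct:.0f}%")  # -12%
```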

Real-World Examples

Here are questions real PanDev users ask the AI assistant, organized by role.

For Engineering Managers

Team performance overview:

You: "Show me each developer's coding time and PR count for the last sprint"

AI: Sprint 24 (Feb 24 – Mar 7):

| Developer | Coding Time | PRs Merged | Avg PR Size |
|---|---|---|---|
| Anna Chen | 28h 15m | 8 | +142 lines |
| Boris Kim | 22h 30m | 6 | +203 lines |
| Carlos Diaz | 31h 45m | 11 | +87 lines |
| Diana Ray | 19h 20m | 5 | +312 lines |
| Erik Svensson | 25h 10m | 7 | +156 lines |

Identifying bottlenecks:

You: "Which PRs have been open for more than 5 days?"

AI: 3 PRs have been open for more than 5 days:

1. backend-api#342: "Refactor auth middleware" (Anna Chen)
   - Opened: Mar 4 | Last activity: Mar 6 | Reviews: 1 comment, no approval
   - ⚠️ Waiting for review from Boris Kim

2. frontend-app#891: "Migrate to React 19" (Carlos Diaz)
   - Opened: Feb 28 | Last activity: Mar 7 | Reviews: 3 rounds, changes requested
   - ⚠️ Large PR (+2,341 lines); consider splitting

3. infrastructure#156: "Upgrade PostgreSQL to 16" (Erik Svensson)
   - Opened: Mar 3 | Last activity: Mar 3 | Reviews: none
   - ⚠️ No reviewer assigned
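This check combines an age filter with a few warning rules. Below is a minimal sketch of that logic. It assumes hypothetical PR records with `opened`, `reviewers`, and `lines_added` fields and a reference date of March 10. The sample PRs are made up, and the 1,000-line threshold for "consider splitting" is an arbitrary assumption, not a PanDev default.

```python
from datetime import date

LARGE_PR_LINES = 1000  # assumed threshold for the "consider splitting" warning

def stale_prs(prs: list[dict], today: date, max_age_days: int = 5) -> list[tuple[str, list[str]]]:
    """Return (id, warnings) for open PRs older than max_age_days, oldest first."""
    result = []
    for pr in sorted(prs, key=lambda p: p["opened"]):
        if (today - pr["opened"]).days <= max_age_days:
            continue  # still within the allowed age
        warnings = []
        if not pr["reviewers"]:
            warnings.append("No reviewer assigned")
        if pr["lines_added"] > LARGE_PR_LINES:
            warnings.append("Large PR, consider splitting")
        result.append((pr["id"], warnings))
    return result

sample = [  # hypothetical open PRs
    {"id": "PR-1", "opened": date(2026, 3, 4), "reviewers": ["reviewer-a"], "lines_added": 310},
    {"id": "PR-2", "opened": date(2026, 2, 28), "reviewers": ["reviewer-b"], "lines_added": 2341},
    {"id": "PR-3", "opened": date(2026, 3, 3), "reviewers": [], "lines_added": 95},
    {"id": "PR-4", "opened": date(2026, 3, 8), "reviewers": [], "lines_added": 40},
]
for pr_id, warnings in stale_prs(sample, today=date(2026, 3, 10)):
    print(pr_id, warnings)
```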

Review load balance:

You: "Who does the most code reviews on the backend team?"

AI: Code review distribution for the Backend team (last 30 days):

| Reviewer | Reviews | Avg Time to Review | Approval Rate |
|---|---|---|---|
| Boris Kim | 23 | 3.2h | 78% |
| Anna Chen | 14 | 5.7h | 85% |
| Diana Ray | 6 | 8.4h | 92% |

Boris Kim handles 53% of all backend reviews. Consider distributing review load more evenly to reduce the bottleneck risk.
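The 53% figure is one reviewer's count divided by the team total (23 of 43 reviews). Below is a small sketch of that calculation. The 50% threshold for flagging a possible bottleneck is an illustrative assumption, not something PanDev documents.

```python
def review_shares(review_counts: dict[str, int]) -> dict[str, float]:
    """Each reviewer's share of the team's reviews, as a percentage."""
    total = sum(review_counts.values())
    return {name: 100 * n / total for name, n in review_counts.items()}

BOTTLENECK_SHARE = 50  # assumed threshold, in percent

backend = {"Boris Kim": 23, "Anna Chen": 14, "Diana Ray": 6}
shares = review_shares(backend)
for name, pct in shares.items():
    note = "  <- possible review bottleneck" if pct > BOTTLENECK_SHARE else ""
    print(f"{name}: {pct:.0f}%{note}")
```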

For Project Managers

Sprint progress:

You: "Are we on track for the current sprint?"

AI: Sprint 25 progress (Mar 10–21, day 4 of 10):

- Planned: 34 story points (18 tasks)
- Completed: 11 points (6 tasks), 32%
- In Progress: 13 points (7 tasks)
- Not Started: 10 points (5 tasks)
- Pace: 2.75 points/day (the plan assumed 3.4/day; the remaining 23 points now need about 3.8/day over the last 6 days)

⚠️ Behind pace. 3 tasks in "In Progress" have no commits in the last 48 hours; worth checking in standup.
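The pace check compares two rates: points completed per elapsed day, and points still open per remaining day. With 23 of 34 points left and 6 of 10 days to go, the required rate is about 3.8 points/day. That is higher than the 3.4/day average the plan assumed. Below is a minimal sketch of that arithmetic; the function name is illustrative.

```python
def sprint_pace(planned: float, completed: float, days_elapsed: int, sprint_days: int) -> dict:
    """Current burn rate, planned average rate, and rate needed to finish on time."""
    days_left = sprint_days - days_elapsed
    return {
        "current": completed / days_elapsed,
        "planned": planned / sprint_days,
        # With no days left, no finite rate finishes the remaining scope.
        "required": (planned - completed) / days_left if days_left > 0 else float("inf"),
    }

pace = sprint_pace(planned=34, completed=11, days_elapsed=4, sprint_days=10)
print({k: round(v, 2) for k, v in pace.items()})
# {'current': 2.75, 'planned': 3.4, 'required': 3.83}
```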

Estimation accuracy:

You: "How accurate were our estimates last quarter?"

AI: Q4 2025 Estimation Accuracy:

| Story Points | Tasks | Avg Actual Time | Accuracy |
|---|---|---|---|
| 1 point | 42 | 3.1h coding | ±15% |
| 2 points | 38 | 6.8h coding | ±22% |
| 3 points | 29 | 12.4h coding | ±31% |
| 5 points | 17 | 22.1h coding | ±45% |
| 8 points | 8 | 41.3h coding | ±62% |

Smaller tasks are estimated more accurately. Tasks rated 5+ points have high variance; consider breaking them down further.
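The article does not define the ± figure. One plausible reading is the spread of actual coding time within each story-point bucket. The sketch below assumes that reading and measures spread as the coefficient of variation (population standard deviation divided by the mean). It also assumes hypothetical (story points, actual hours) task records. Both the metric choice and the sample data are illustrative.

```python
from collections import defaultdict
from statistics import mean, pstdev

def estimation_spread(tasks: list[tuple[int, float]]) -> dict[int, tuple[int, float, float]]:
    """Group (story_points, actual_hours) pairs by points.

    Returns {points: (task_count, mean_hours, spread_pct)}, where spread_pct is the
    coefficient of variation: population std dev / mean * 100.
    """
    buckets: dict[int, list[float]] = defaultdict(list)
    for points, hours in tasks:
        buckets[points].append(hours)
    return {
        p: (len(h), mean(h), 100 * pstdev(h) / mean(h))
        for p, h in sorted(buckets.items())
    }

# Hypothetical tasks: (story points, actual coding hours)
tasks = [(1, 2.5), (1, 3.5), (3, 8.0), (3, 16.0), (3, 12.0)]
for points, (n, avg, spread) in estimation_spread(tasks).items():
    print(f"{points} pt: {n} tasks, {avg:.1f}h avg, ±{spread:.0f}%")
```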

For CTOs and VPs

DORA metrics:

You: "What are our DORA metrics for Q1?"

AI: DORA Metrics for Q1 2026:

| Metric | Value | Rating |
|---|---|---|
| Deployment Frequency | 4.2/week | ⭐ High |
| Lead Time for Changes | 2.1 days | ⭐ High |
| Mean Time to Recovery | 4.3 hours | ⭐ High |
| Change Failure Rate | 8.7% | ⭐ Medium |

Trend: All metrics improved from Q4 2025 except Change Failure Rate, which increased from 6.2% to 8.7%. This correlates with the frontend migration project (12 reverts in February).
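Three of the four DORA metrics can be derived from a list of production deployments:

- Deployment frequency is the number of deployments per week over the window.
- Change failure rate is failed deployments divided by all deployments.
- Time to recovery is averaged over failed deployments only.

Below is a minimal sketch, assuming hypothetical deployment records that note whether each deployment failed and, if so, when service was restored. Lead time for changes also needs commit timestamps, so it is left out here. The record shape is not PanDev's schema.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean
from typing import Optional

@dataclass
class Deployment:
    deployed_at: datetime
    failed: bool = False
    restored_at: Optional[datetime] = None  # set only for failed deployments

def dora_summary(deploys: list[Deployment], window: timedelta) -> dict:
    failures = [d for d in deploys if d.failed]
    recovery_hours = [
        (d.restored_at - d.deployed_at).total_seconds() / 3600
        for d in failures if d.restored_at is not None
    ]
    return {
        "deploys_per_week": len(deploys) / (window / timedelta(weeks=1)),
        "change_failure_rate_pct": 100 * len(failures) / len(deploys) if deploys else 0.0,
        "mean_time_to_recovery_h": mean(recovery_hours) if recovery_hours else None,
    }

# Hypothetical two-week window: 8 clean deployments and 2 that failed
start = datetime(2026, 1, 5)
deploys = [Deployment(start + timedelta(days=i)) for i in range(8)]
deploys.append(Deployment(start + timedelta(days=9), failed=True,
                          restored_at=start + timedelta(days=9, hours=4)))
deploys.append(Deployment(start + timedelta(days=12), failed=True,
                          restored_at=start + timedelta(days=12, hours=2)))
print(dora_summary(deploys, window=timedelta(weeks=2)))
# {'deploys_per_week': 5.0, 'change_failure_rate_pct': 20.0, 'mean_time_to_recovery_h': 3.0}
```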

Cross-team comparison:

You: "Compare cycle time across all teams for the last month"

AI: Average PR cycle time by team (February 2026):

| Team | Cycle Time | PRs | Trend vs Jan |
|---|---|---|---|
| Backend | 14.2h | 68 | ↓ 18% |
| Frontend | 22.7h | 45 | ↑ 31% |
| Mobile | 16.8h | 32 | ↓ 5% |
| Infrastructure | 28.4h | 19 | ↑ 12% |
| Data | 11.3h | 24 | ↓ 22% |

Frontend cycle time spiked due to the React 19 migration: large PRs averaged +890 lines (vs. a team norm of +210).
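Team averages like these should be weighted by PR count when rolled up into an organization-wide figure. Otherwise a small team counts as much as a large one. Below is a short sketch using the February numbers from the table above; only the arithmetic is added here.

```python
# (team, average cycle time in hours, PR count), February 2026
teams = [
    ("Backend", 14.2, 68),
    ("Frontend", 22.7, 45),
    ("Mobile", 16.8, 32),
    ("Infrastructure", 28.4, 19),
    ("Data", 11.3, 24),
]

total_prs = sum(n for _, _, n in teams)
weighted = sum(avg * n for _, avg, n in teams) / total_prs   # every PR counts once
unweighted = sum(avg for _, avg, _ in teams) / len(teams)    # every team counts once

print(f"PR-weighted: {weighted:.1f}h across {total_prs} PRs")  # PR-weighted: 17.7h across 188 PRs
print(f"Naive mean of team averages: {unweighted:.1f}h")       # Naive mean of team averages: 18.7h
```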

For Individual Developers

Personal stats:

You: "How much did I code last week?"

AI: Your activity for Mar 3–9:

- Total coding time: 22h 45m
- Projects: backend-api (14h), shared-libs (6h), docs (2h 45m)
- Languages: Go (12h), TypeScript (8h), Markdown (2h 45m)
- PRs opened: 4 | PRs merged: 3 | Reviews given: 6
- Most active day: Wednesday (5h 20m)
- Most active time: 10:00–12:00
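A summary like this is a set of grouped sums over IDE activity. Below is a minimal sketch, assuming hypothetical session records of (day, project, language, minutes). The sample data is made up and does not reproduce the week shown above.

```python
from collections import Counter
from datetime import date

WEEKDAYS = ("Monday", "Tuesday", "Wednesday", "Thursday", "Friday", "Saturday", "Sunday")

# Hypothetical IDE sessions: (day, project, language, minutes)
sessions = [
    (date(2026, 3, 3), "backend-api", "Go", 150),
    (date(2026, 3, 4), "backend-api", "Go", 200),
    (date(2026, 3, 4), "shared-libs", "TypeScript", 120),
    (date(2026, 3, 5), "docs", "Markdown", 45),
]

def fmt(minutes: int) -> str:
    """Render minutes as e.g. '2h 05m'."""
    return f"{minutes // 60}h {minutes % 60:02d}m"

by_project, by_language, by_day = Counter(), Counter(), Counter()
for day, project, language, minutes in sessions:
    by_project[project] += minutes
    by_language[language] += minutes
    by_day[WEEKDAYS[day.weekday()]] += minutes

print("Total coding time:", fmt(sum(by_project.values())))
print("Projects:", ", ".join(f"{p} ({fmt(m)})" for p, m in by_project.most_common()))
print("Languages:", ", ".join(f"{lang} ({fmt(m)})" for lang, m in by_language.most_common()))
print("Most active day:", by_day.most_common(1)[0][0])
```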

Advanced Queries

The AI handles complex, multi-condition questions:

- "Show me developers who reviewed more than 10 PRs last month but wrote fewer than 5 PRs themselves"

- "What percentage of our PRs are merged without any review comments?"

- "List all tasks from Sprint 23 that were carried over to Sprint 24, and show how much coding time was spent on each before carry-over"

- "Compare our Monday vs Friday deployment frequency over the last quarter"

- "Which repositories have the highest code churn rate?"

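A question like the first one becomes two aggregations combined by a filter. Below is a minimal Python sketch of that logic, assuming hypothetical (author, reviewers) PR records for the period. The thresholds mirror the wording of the question ("more than 10", "fewer than 5"), and the function name is illustrative.

```python
from collections import Counter

def reviewers_not_authors(prs: list[tuple[str, list[str]]],
                          min_reviews: int = 10, max_authored: int = 5) -> list[str]:
    """Developers with more than `min_reviews` reviews but fewer than `max_authored` authored PRs."""
    authored = Counter(author for author, _ in prs)
    # set() so a reviewer listed twice on one PR is counted once for that PR
    reviewed = Counter(r for _, reviewers in prs for r in set(reviewers))
    return sorted(
        dev for dev, n in reviewed.items()
        if n > min_reviews and authored[dev] < max_authored
    )

# Hypothetical month: dev-a writes 12 PRs, all reviewed by dev-b, who writes 2
prs = [("dev-a", ["dev-b"])] * 12 + [("dev-b", ["dev-a"])] * 2
print(reviewers_not_authors(prs))  # ['dev-b']
```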
Follow-Up Questions

The assistant maintains context within a conversation. You can drill down:

You: "What was our cycle time last month?"

AI: Average cycle time in February: 18.4 working hours.

You: "Break that down by PR size"

AI: Cycle time by PR size (February):

| PR Size (lines) | Cycle Time | Count |
|---|---|---|
| Small (under 50) | 8.2h | 42 |
| Medium (50–200) | 16.7h | 35 |
| Large (200–500) | 28.9h | 18 |
| XL (500+) | 52.3h | 7 |

You: "What about only the backend team?"

AI: Backend team cycle time by PR size (February):

| PR Size (lines) | Cycle Time | Count |
|---|---|---|
| Small (under 50) | 6.1h | 22 |
| Medium (50–200) | 13.4h | 19 |
| Large (200–500) | 24.2h | 8 |
| XL (500+) | 38.7h | 2 |
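One simple way to keep context across turns is to treat each follow-up as a change to the previous structured query rather than a fresh one. The sketch below is an assumption about the mechanism, not a description of PanDev's implementation. `Query` and `refine` are hypothetical names.

```python
from dataclasses import dataclass, replace

@dataclass(frozen=True)
class Query:
    metric: str
    period: str
    group_by: tuple[str, ...] = ()
    filters: tuple[tuple[str, str], ...] = ()

def refine(previous: Query, **changes) -> Query:
    """Apply a follow-up on top of the previous query, keeping everything not mentioned."""
    group_by = previous.group_by + tuple(changes.pop("add_group_by", ()))
    filters = previous.filters + tuple(changes.pop("add_filters", ()))
    return replace(previous, group_by=group_by, filters=filters, **changes)

q1 = Query(metric="cycle_time", period="2026-02")      # "What was our cycle time last month?"
q2 = refine(q1, add_group_by=["pr_size"])              # "Break that down by PR size"
q3 = refine(q2, add_filters=[("team", "backend")])     # "What about only the backend team?"
print(q3)
```

Because `Query` is frozen, each turn produces a new object. This leaves earlier turns intact if the user backs up to a previous question.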

Tips for Better Results

Be Specific About Time Ranges

❌ "How are we doing?"

✅ "What was our PR throughput for the last 2 weeks?"

Name Teams and Projects Explicitly

❌ "Show review stats"

✅ "Show review stats for the backend team in the frontend-app repo"

Ask for Comparisons

✅ "Compare this sprint's velocity with the previous 3 sprints"

✅ "How does our cycle time this quarter compare to last quarter?"

Request Specific Formats

✅ "Show me a table of coding time by developer and language"

✅ "Give me a weekly trend of PR merge count for the last 8 weeks"
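Specific phrases like "last month" or "last 2 weeks" map onto one unambiguous date range, whereas "How are we doing?" does not. Below is a minimal sketch of that mapping under assumed conventions: weeks start on Monday, and ranges are half-open [start, end). The function and the set of supported phrases are illustrative only.

```python
from datetime import date, timedelta

def resolve_period(phrase: str, today: date) -> tuple[date, date]:
    """Map a relative time phrase to a half-open [start, end) date range."""
    if phrase == "last week":  # previous Monday-to-Sunday week
        this_monday = today - timedelta(days=today.weekday())
        return this_monday - timedelta(weeks=1), this_monday
    if phrase == "last month":  # previous calendar month
        first_of_month = today.replace(day=1)
        prev_month_start = (first_of_month - timedelta(days=1)).replace(day=1)
        return prev_month_start, first_of_month
    if phrase.startswith("last ") and phrase.endswith(" weeks"):  # e.g. "last 2 weeks"
        n = int(phrase.split()[1])
        return today - timedelta(weeks=n), today  # the n*7 days before today
    raise ValueError(f"unrecognized period: {phrase!r}")

today = date(2026, 3, 12)
print(resolve_period("last month", today))  # (datetime.date(2026, 2, 1), datetime.date(2026, 3, 1))
```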

Privacy and Security

The AI assistant is designed with data boundaries:

| What the AI sees | What the AI never sees |
|---|---|
| Aggregated metrics | Source code |
| Developer names (within your org) | File contents |
| Repository names | Commit diffs |
| Task titles and IDs | Credentials or tokens |
| Time and count data | Data from other organizations |

The Google Gemini model processes your natural language question and generates a structured query. Your actual metrics data stays within PanDev's infrastructure — it's never sent to the language model.

For on-premises deployments, the AI assistant connects to Google Gemini's API for language processing only. If your security policy doesn't allow external AI API calls, contact us about self-hosted LLM options.

What the AI Can't Do (Yet)

To set expectations:

- Can't modify data — it's read-only. You can't create tasks, close PRs, or reassign reviews through the assistant

- Can't predict the future — it can show trends and patterns, but won't forecast sprint completion dates (yet)

- Can't explain "why" — it can tell you that cycle time increased 30%, but the root cause analysis is still on you

- Can't access external tools — it only queries PanDev's database, not Jira or GitHub directly

These limitations will shrink over time as we expand the assistant's capabilities. Forrester's research on AI in software engineering shows that AI-assisted analytics tools are evolving rapidly — predictive capabilities and root-cause analysis are the next frontier.

Getting Started

The AI assistant is available on all PanDev Metrics plans. Open it with Ctrl+K (or Cmd+K on Mac) from any PanDev page, type your question, and get answers in seconds.

No dashboards to configure. No SQL to write. Just ask.

Ask your data anything. Try the AI assistant in PanDev Metrics — powered by Google Gemini, built for engineering teams.