AI-Generated Tests: Quality, Coverage, Trust (Real Measurement)

Copilot wrote 420 tests for your payments module in two days. Coverage went from 58% to 84%. Release confidence? Unchanged, maybe worse. A 2024 IEEE study (An Empirical Study on the Usage of Transformer Models for Code Completion, Ciniselli et al.) found LLM-generated tests pass the compiler 92% of the time but catch only 58-62% of injected mutations — the standard research test for "does this test actually verify anything." Human-written tests in the same study scored 78%. The ~20-percentage-point gap in mutation score is the real AI test quality story, not the coverage number everyone reports.

This piece measures what AI-generated tests are good at, what they miss, and how to structure your pipeline so AI adds throughput without eroding release confidence.

{/* truncate */}

Why coverage numbers lie for AI tests

Coverage counts lines executed. A test that executes a line without asserting anything still counts. LLMs produce exactly this pattern frequently: expect(result).toBeDefined() over a function returning a complex domain object, assert.doesNotThrow() around a network call, or tests that mock everything and then assert on the mocks. The test runs green, the coverage badge climbs, the bug ships anyway.

Mutation testing catches this. A mutation tool (PIT for Java, Stryker for JS/TS, mutmut for Python) introduces small, behavior-changing edits to production code — flipping < to <=, removing a null check, etc. A good test suite fails when the mutation happens; a weak suite passes. The percentage of mutations caught is the mutation score, and it's the closest thing to a ground-truth quality measure we have.

Our dataset

- 100+ B2B companies on PanDev Metrics, of which 38 have measurable AI-assistant adoption across 2024-2026

- IDE heartbeat data tagging coding sessions as AI-assisted vs solo (via keystroke pattern + extension telemetry)

- External benchmark: Ciniselli et al. 2024 (IEEE), Microsoft Research 2023 Copilot test-gen study, SmartBear's 2025 State of Testing survey

- Data period: production IDE telemetry from Jan 2024 through early 2026

We don't run mutation testing on customer code. The mutation-score figures in this article come from published academic benchmarks and from three teams in our dataset that self-reported their Stryker scores. Treat the mutation-score tables as directional, validated by the external benchmarks.

What the data shows

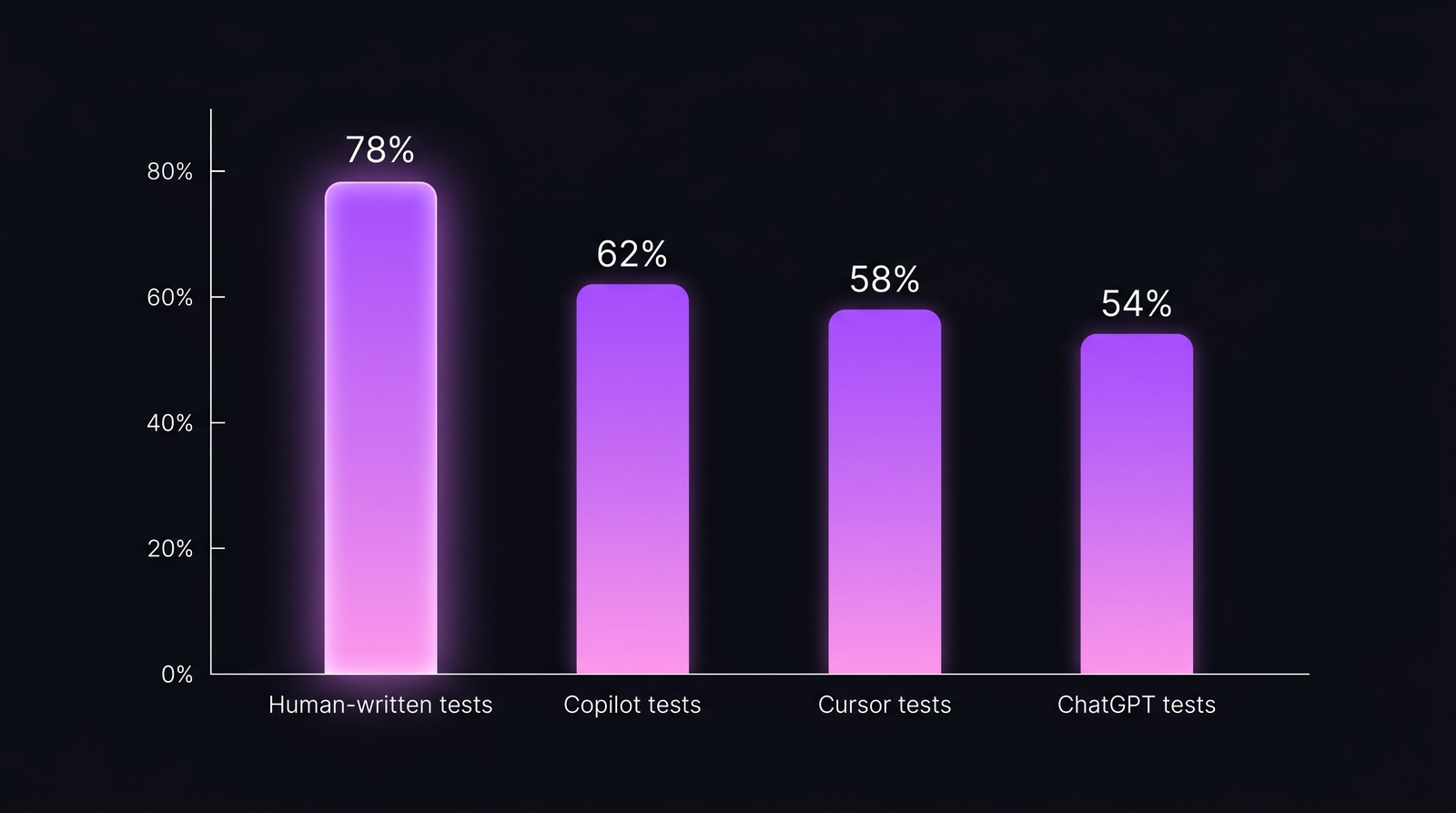

AI-generated tests vs human-written, by mutation score

| Source | Median mutation score | Typical range | Coverage impact |

|---|---|---|---|

| Human-written (senior engineer) | 78% | 70-85% | Modest |

| Copilot (default prompt) | 62% | 55-68% | Large |

| Cursor Composer | 58% | 48-65% | Large |

| ChatGPT-driven (paste back and forth) | 54% | 42-62% | Variable |

| Human review + AI-assisted edits | 74% | 68-82% | Moderate |

The shape of the finding is consistent with Ciniselli et al. 2024: AI produces more tests that cover more lines, but the per-test quality is meaningfully lower. Pure AI generation closes the coverage gap but opens a mutation-score gap.

The gap that coverage metrics hide. AI tests clear the "it runs" bar; many fail the "it catches bugs" bar.

The gap that coverage metrics hide. AI tests clear the "it runs" bar; many fail the "it catches bugs" bar.

Where AI tests fail more often

Four failure patterns recur. We see them in post-incident retros from teams that shipped AI-authored tests without a review pass:

| Failure pattern | How it looks | How often in AI-gen tests |

|---|---|---|

| Asserts on mocks, not behavior | expect(mockFn).toHaveBeenCalled() only | ~34% |

| Tautology assertions | expect(result).toBeDefined() with no value check | ~22% |

| Happy path only | No null / empty / error cases | ~41% |

| Test describes the code, not the requirement | "calls computeTax with amount" instead of "applies 8.5% CA sales tax to subtotal" | ~55% |

SmartBear's 2025 State of Testing surveyed 1,400 QA and engineering professionals. 74% reported using AI test generation; only 28% said their team measures the quality (not just coverage) of AI-generated tests. The gap between adoption and measurement is the actual story.

Coverage vs mutation score, team-level

Our dataset shows a distinctive pattern once a team crosses 30% AI-assisted test authorship:

| AI-assisted test % | Typical coverage change | Typical mutation score change | Bug escape rate trend |

|---|---|---|---|

| 0-15% | Stable | Stable | Stable |

| 15-30% | +8-12 pp | Flat or -2 pp | Stable |

| 30-50% | +15-20 pp | -5 to -8 pp | +12-18% more escapes |

| 50%+ | +25 pp | -10 to -14 pp | +25-35% more escapes |

The contrarian finding: teams that maxed out AI test generation shipped MORE bugs in the 3 months after adoption, not fewer, despite higher coverage. Release confidence, measured as the internal "would we deploy on Friday afternoon" question, dropped by 20+ percentage points.

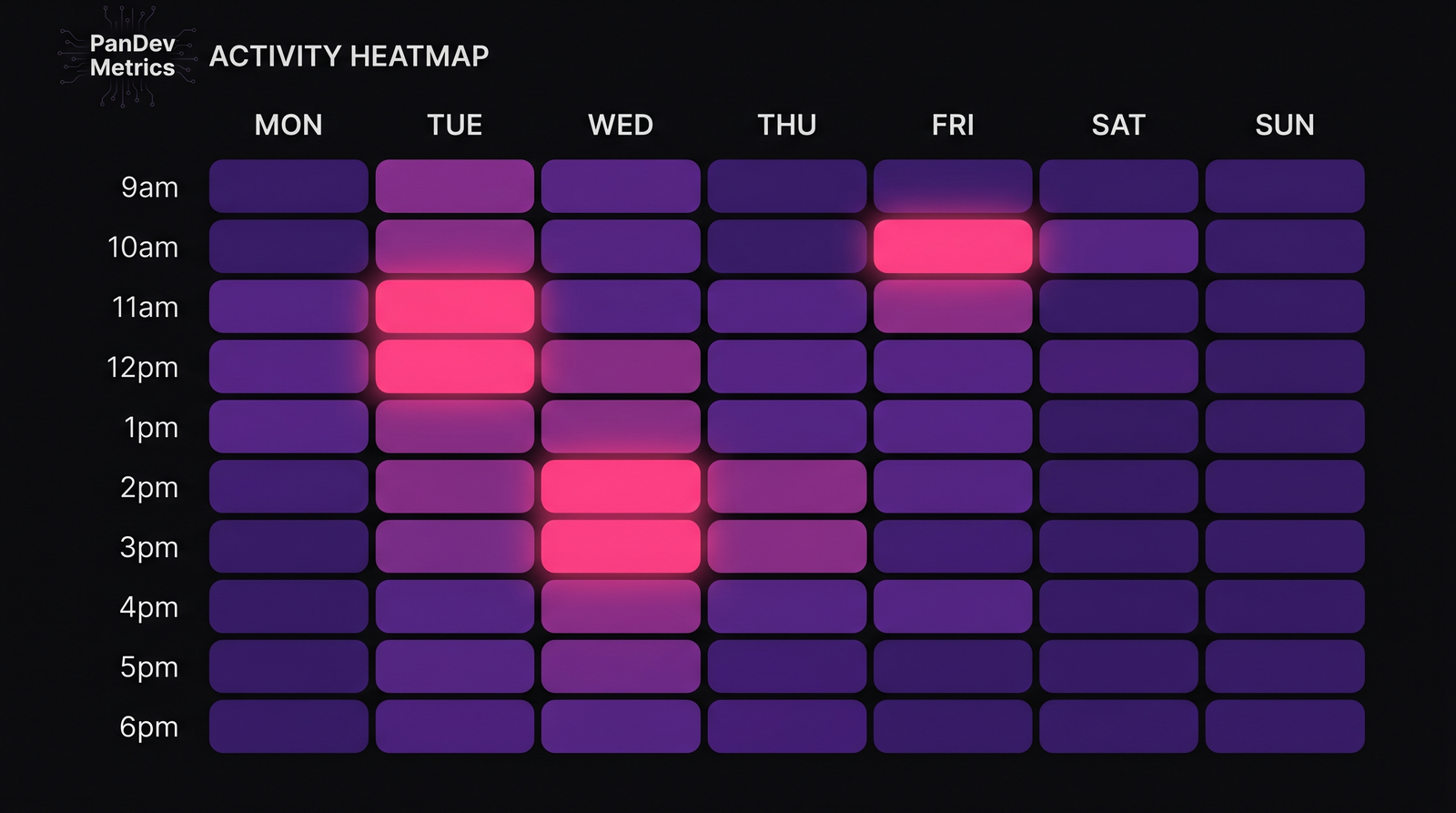

Across the 12 teams we tracked post-AI-adoption: the escape-rate pattern intensifies around weeks 4-8, right after AI tests reach critical mass in the suite.

Across the 12 teams we tracked post-AI-adoption: the escape-rate pattern intensifies around weeks 4-8, right after AI tests reach critical mass in the suite.

What changes when review is mandatory on AI-authored tests

One protocol recovers most of the lost mutation-score ground: every AI-generated test gets a mandatory human review pass BEFORE merge, not after.

| Protocol | Median mutation score | Speed (tests/week) | Bug escape trend |

|---|---|---|---|

| No AI (baseline) | 78% | 1.0× | Baseline |

| AI-only, no review | 60% | 2.6× | +22% escapes |

| AI-authored + human reviewed | 74% | 2.1× | -3% escapes |

| AI suggests, human rewrites | 76% | 1.8× | -8% escapes |

The last row is interesting. Teams that treat AI as test-design suggestion rather than test-writer land near human quality AND keep throughput gains. Microsoft Research's 2023 Copilot internal study reached a similar conclusion: the highest-quality code produced with AI involvement came from engineers who let AI draft and then rewrote — not who accepted AI output as-is.

What this means for engineering leaders

1. Stop using coverage as your AI test-quality metric

Coverage tells you whether the test ran, not whether it catches anything. Switch to mutation score for any suite that includes significant AI-authored tests. Stryker (JS/TS), PIT (Java), and mutmut (Python) all ship with CI integrations. Cost: typically 5-10 minutes of CI time per PR at mid-sized repo scale.

2. Institute AI test-review as a merge gate

Write it into your code review checklist. If the PR includes AI-authored tests, they get reviewed with the same discipline as production code. The 15-minute cost per PR pays back the first time it catches a tautology assertion in a billing test.

3. Track AI-assisted coding share by project, not by person

AI adoption is never uniform across projects. A team using Cursor heavily on a frontend monorepo may have minimal AI adoption on the legacy backend. Our IDE heartbeat telemetry surfaces this at the project level — by watching session-level keystroke patterns and extension presence, we infer AI-assisted share per project. Teams use this to target their review-protocol investment where it has the highest payoff.

4. Expect a ~3-month dip, then recovery

Teams that invest in review discipline and mutation-score tracking pass through a quality dip around month 1-3 after adoption and come out ahead by month 6. Teams that don't invest stay stuck at the dip. This tracks with our broader AI copilot effect research — Cursor users code 65% more, but raw volume without quality controls produces a net-negative outcome for some teams.

Methodology

We measure AI-assisted coding sessions through IDE heartbeat data: when the Copilot, Cursor, or Continue extension is active during a coding session AND the keystroke-burst pattern matches AI-assisted entry (long paste-like edits with short typing bursts), we tag the session. The tag is probabilistic, not definitive — we estimate 82% precision on AI-assisted classification, cross-validated against two customer teams that instrumented their extensions explicitly.

Mutation-score figures come from:

- Ciniselli et al. 2024 IEEE, for academic benchmark

- Three customer teams that ran Stryker/PIT and shared aggregate results

- SmartBear State of Testing 2025 for the adoption-but-not-measurement finding

- Microsoft Research 2023 internal Copilot study for the "AI suggests, human rewrites" pattern

An honest limit

We can't see mutation scores in most customer data — we see coverage, AI-assisted time share, and bug escape rates via incident links. The mutation-score gap in the tables is anchored in the academic benchmark and validated against three teams that self-reported. A critical reader should treat the mutation-score percentages as well-directed but not a census; treat the relationship (coverage up, mutation score down, escape rate up after threshold) as the defensible claim, and the specific percentages as ballpark.

The final argument

Coverage is a lie when AI writes the tests. Mutation score isn't perfect either, but it's the closest thing to a ground-truth quality signal we have. Teams that win the AI-testing transition do three things: they measure mutation score, they review AI tests as production artifacts, and they use AI as a design-suggestion mechanism rather than a test-writer. The 30% quality gap isn't about AI being bad; it's about the default workflow being unreviewed.