Best CodeClimate Alternative in 2026: Velocity vs Quality

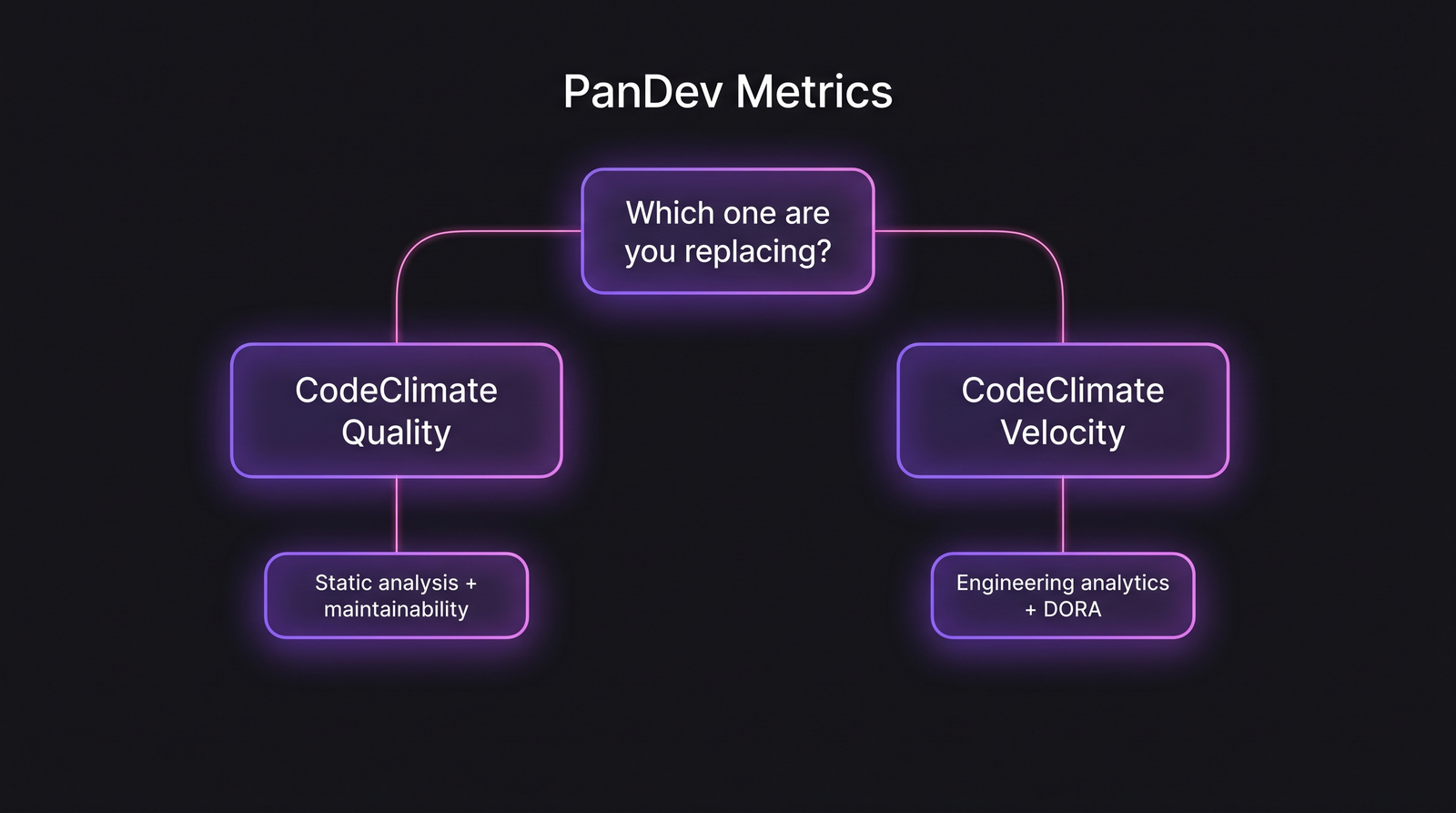

The first thing to clear up: CodeClimate is two products under one brand, and most "CodeClimate alternative" searches conflate them. CodeClimate Quality is the original. It's a SaaS static analyzer that scores maintainability, surfaces duplication, and runs as a PR gate. CodeClimate Velocity is the engineering-analytics product, sitting in the same lane as Jellyfish, LinearB, and Swarmia.

You replace these for completely different reasons. If you're shopping for a CodeClimate Quality alternative, you want SonarQube, Codacy, or DeepSource. If you're shopping for a CodeClimate Velocity alternative, you want PanDev Metrics, LinearB, Swarmia, or Faros AI. Buying the wrong category costs roughly six months and a renewal cycle.

This article walks both lanes honestly. We have a separate PanDev vs CodeClimate head-to-head; this piece is the broader market view.

{/* truncate */}

The two-product confusion (and why it matters)

CodeClimate built Quality first (launched 2011 as a Ruby static analysis service) and grew into engineering analytics with Velocity in 2018. Both are still sold today, on separate pricing pages, with separate trials, and (confusingly) overlapping marketing language.

The CodeClimate brand splits across two product categories. Most teams use one but reference both interchangeably in vendor evaluations, which leads to mismatched shortlists.

According to GitHub Octoverse 2024, code-quality tooling and engineering-analytics tooling rank in different procurement categories at large enterprises. Smaller orgs often run both under "developer tooling" and surface the confusion only mid-evaluation.

Quick test: which of these does your CodeClimate dashboard show?

- Maintainability grade per file, code smells, churn vs complexity scatter plot → Quality

- Cycle time chart, deployment frequency, sprint velocity, ROI per initiative → Velocity

- Both, on different tabs → both products, two contracts

Once you know which side you're replacing, the alternatives are almost completely disjoint.

When teams outgrow CodeClimate Velocity

Velocity launched as a fast-follow into the engineering-management-platform (EMP) category. It does the standard EMP job: ingest Git, ingest Jira, render cycle time, render DORA metrics, render investment allocation. The 2024 DORA State of DevOps Report notes that EMP adoption grew sharply from 2022 to 2024, with 41% of surveyed organizations using some form of engineering-analytics platform.

Three patterns drive teams off Velocity:

- Pricing renegotiations. Velocity moved to seat-based pricing with minimum commits that surprised renewing customers.

- Lack of IDE-level signal. Velocity reads Git events and Jira tickets but has no editor telemetry; teams that hit the limits of PR-event-only data eventually want deeper signal.

- The Quality-Velocity confusion at procurement time. Finance asks, "We already pay CodeClimate; why are there two line items?"

When teams outgrow CodeClimate Quality

Quality competes in a more crowded space. The 2024 Stack Overflow Developer Survey listed SonarQube as the most-used static analysis platform among professional developers, with 27% adoption. Quality didn't crack the top 5. Switching pressure usually comes from:

- Want self-hosted on-prem (Quality has limited self-hosted, SonarQube ships it natively)

- Want broader language coverage (Quality has Ruby/JS strength, weaker in Go, Rust, Kotlin)

- Want LLM-augmented review (Codacy, DeepSource shipped this faster)

From here on, this article focuses on the Velocity replacement, because that's the harder decision and the one most "CodeClimate alternative 2026" searches are actually after. Quality alternatives are a separate evaluation we'll cover elsewhere.

The 5 alternatives to CodeClimate Velocity

| Alternative | Best fit | Annual cost (50 devs) | On-prem | IDE telemetry | Quality features |
|---|---|---|---|---|---|
| PanDev Metrics | Mid-market wanting full-stack signal | $15K-$25K | Yes | Yes | No (use SonarQube alongside) |
| LinearB | Workflow-automation-focused teams | $30K-$60K | No | No | No |
| Swarmia | Eng-led teams wanting opinionated guidance | $25K-$45K | No | No | No |
| Jellyfish | 200+ dev orgs needing portfolio view | $80K-$250K | No | No | No |
| Faros AI | Enterprises with data-warehouse mindset | $80K-$150K | Yes | Partial | No |

None of these include code-quality scoring. If you used both Velocity and Quality, you'll need a Velocity replacement plus a Quality replacement (SonarQube/Codacy/DeepSource). Most teams discover this in the second week of evaluation.

Five evaluation criteria for the Velocity replacement

1. Does "engineering productivity" mean PRs or coding?

This is the deepest fork in the alternative landscape. CodeClimate Velocity, LinearB, Swarmia, Jellyfish, and Faros AI all derive activity from Git and Jira. PR cycle time is the headline metric. The hidden assumption: every meaningful unit of work becomes a PR.

That assumption breaks under inspection. A Microsoft Research / GitHub joint paper (Storey, Houck et al., 2022) examining developer activity across 2,000+ engineers found that "non-PR work" (research, prototyping, debugging without commit, design discussion captured in comments) consumes a substantial share of senior-engineer time. PR-only platforms render those engineers as low-output and are wrong about it.
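PR cycle time itself is simple to compute, which is part of its appeal. A minimal sketch, assuming each PR record carries hypothetical `opened_at` and `merged_at` fields (platforms differ on whether the clock starts at first commit or at PR open). Note what the metric structurally cannot see: work that never becomes a merged PR contributes nothing.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class PullRequest:
    # Hypothetical record shape; real platforms use their own schemas.
    number: int
    opened_at: datetime
    merged_at: Optional[datetime]  # None if still open or closed without merging


def cycle_times(prs: list[PullRequest]) -> list[timedelta]:
    """Open-to-merge durations, for merged PRs only."""
    return [pr.merged_at - pr.opened_at for pr in prs if pr.merged_at is not None]


def median_cycle_time_hours(prs: list[PullRequest]) -> Optional[float]:
    durations = cycle_times(prs)
    if not durations:
        return None  # no merged PRs: the metric sees no work at all
    return median(d.total_seconds() / 3600 for d in durations)


if __name__ == "__main__":
    t0 = datetime(2026, 1, 5, 9, 0)
    prs = [
        PullRequest(1, t0, t0 + timedelta(hours=4)),
        PullRequest(2, t0, t0 + timedelta(hours=30)),
        PullRequest(3, t0, None),  # unmerged: invisible to the metric
    ]
    print(median_cycle_time_hours(prs))  # 17.0
```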

PanDev Metrics ingests IDE heartbeat data alongside Git events. That changes the productivity picture in three ways: senior engineers stop looking idle; deep-work blocks become visible; the 40% productivity tax of context switching shows up as lost coding minutes, not as a derived guess from ticket churn.
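How heartbeats become "coding minutes" and "deep-work blocks" is vendor-specific. A minimal sketch, assuming heartbeats are plain timestamps emitted while the editor is active, a 15-minute idle gap that closes a session, and a 60-minute threshold for a deep-work block. All three are illustrative assumptions, not PanDev Metrics' actual parameters.

```python
from datetime import datetime, timedelta

# Illustrative thresholds (assumptions, not any vendor's real defaults).
IDLE_GAP = timedelta(minutes=15)       # a longer silence ends the current session
DEEP_WORK_MIN = timedelta(minutes=60)  # sessions at least this long count as deep work


def sessions(heartbeats: list[datetime]) -> list[tuple[datetime, datetime]]:
    """Group heartbeat timestamps into (start, end) sessions split at idle gaps."""
    result: list[tuple[datetime, datetime]] = []
    for ts in sorted(heartbeats):
        if result and ts - result[-1][1] <= IDLE_GAP:
            start, _ = result[-1]
            result[-1] = (start, ts)  # still active: extend the open session
        else:
            result.append((ts, ts))  # gap too large (or first beat): new session
    return result


def coding_minutes(heartbeats: list[datetime]) -> float:
    return sum((end - start).total_seconds() for start, end in sessions(heartbeats)) / 60


def deep_work_blocks(heartbeats: list[datetime]) -> list[tuple[datetime, datetime]]:
    return [(s, e) for s, e in sessions(heartbeats) if e - s >= DEEP_WORK_MIN]


if __name__ == "__main__":
    day = datetime(2026, 1, 5)
    beats = [day.replace(hour=9) + timedelta(minutes=5 * i) for i in range(19)]  # 9:00-10:30
    beats.append(day.replace(hour=11))  # isolated beat after a 30-minute gap
    beats += [day.replace(hour=14) + timedelta(minutes=5 * i) for i in range(5)]  # 14:00-14:20
    print(coding_minutes(beats))         # 110.0
    print(len(deep_work_blocks(beats)))  # 1
```

In this sketch an isolated heartbeat counts as zero minutes; some tools instead credit a small fixed amount per beat, which is another design choice worth asking a vendor about.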

2. Setup time and Jira mapping debt

CodeClimate Velocity onboarding involves Jira workflow mapping: defining what counts as "in progress", "in review", "done" across project types. That mapping is a recurring tax. Every new team's Jira config has to be re-mapped.
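The mapping itself is simple; the recurring cost is that every team's statuses differ. A minimal sketch of what "mapping" means, using hypothetical project keys and status names. Unmapped statuses raise an error here instead of being silently dropped, which is exactly where a new team's config shows up as work.

```python
from enum import Enum


class Phase(Enum):
    IN_PROGRESS = "in_progress"
    IN_REVIEW = "in_review"
    DONE = "done"


# Hypothetical per-project mappings: each team's Jira workflow names statuses differently.
STATUS_MAP: dict[str, dict[str, Phase]] = {
    "PAY": {"Doing": Phase.IN_PROGRESS, "Code Review": Phase.IN_REVIEW, "Released": Phase.DONE},
    "WEB": {"In Dev": Phase.IN_PROGRESS, "PR Open": Phase.IN_REVIEW, "Closed": Phase.DONE},
}


def to_phase(project: str, status: str) -> Phase:
    """Translate a project-specific Jira status into a normalized phase."""
    try:
        return STATUS_MAP[project][status]
    except KeyError:
        # A new team or a renamed status lands here until someone updates the map.
        raise KeyError(f"unmapped status {status!r} in project {project!r}") from None


if __name__ == "__main__":
    print(to_phase("PAY", "Code Review"))  # Phase.IN_REVIEW
```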

PanDev Metrics setup requires one branch-naming convention (feature/TASK-324) and reads everything else automatically. LinearB and Swarmia sit in between: they have their own workflow definitions to configure, but with less depth than Velocity's mapping.
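A branch convention works because the task key can be extracted mechanically. A minimal sketch, assuming a Jira-style key (uppercase project prefix, hyphen, number) right after `feature/`, optionally followed by a slug. The exact pattern PanDev Metrics matches isn't stated here, so treat the regex as an assumption.

```python
import re
from typing import Optional

# Assumed pattern: "feature/" + Jira-style key such as TASK-324,
# optionally followed by a slug, e.g. "feature/TASK-324-fix-login".
BRANCH_RE = re.compile(r"^feature/([A-Z][A-Z0-9]+-\d+)(?:[-_/].*)?$")


def task_key(branch: str) -> Optional[str]:
    """Return the task key encoded in a branch name, or None if it breaks the convention."""
    match = BRANCH_RE.match(branch)
    return match.group(1) if match else None


if __name__ == "__main__":
    print(task_key("feature/TASK-324-fix-login"))  # TASK-324
    print(task_key("hotfix-login"))                # None
```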

3. On-prem and air-gapped support

Velocity is primarily cloud-hosted, with only limited enterprise self-hosted options. Faros AI offers self-hosting, with implementation effort. PanDev Metrics ships Docker Compose and Helm: same product, on-prem or cloud, no degraded edition.

For fintech, telecom, and govtech, this isn't a preference. CNCF's 2024 annual survey noted that 44% of organizations operating regulated workloads had on-prem requirements for at least some developer tooling. Velocity loses the deal at that table.

4. Cost-per-feature and financial readout

Velocity surfaces investment allocation but it's tied to Jira initiative tagging. You only see what your team tagged. PanDev Metrics derives cost per feature from tracked time × hourly rate, which works even if Jira hygiene is imperfect. The contrarian point: most teams don't realize their "investment allocation" report from Velocity is reflecting tag discipline, not actual effort distribution.
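The gap between the two readouts is easy to see with numbers. A minimal sketch, assuming per-task tracked minutes, one blended hourly rate, and a tag flag per task. All figures are hypothetical, and `tagged_only=True` is a crude stand-in for a report that only sees tagged work, not a model of Velocity's actual calculation.

```python
from collections import defaultdict

HOURLY_RATE = 60.0  # hypothetical blended rate, USD/hour

# Hypothetical tasks: (feature, tracked_minutes, tagged_with_initiative_in_jira)
TASKS: list[tuple[str, int, bool]] = [
    ("checkout", 1200, True),
    ("checkout", 600, False),  # real effort, but nobody tagged it
    ("search", 300, True),
    ("search", 900, False),
]


def cost_by_feature(tasks: list[tuple[str, int, bool]], rate: float = HOURLY_RATE) -> dict[str, float]:
    """Cost per feature = tracked hours x hourly rate; tagging is irrelevant."""
    totals: dict[str, float] = defaultdict(float)
    for feature, minutes, _tagged in tasks:
        totals[feature] += minutes / 60 * rate
    return dict(totals)


def effort_share(tasks: list[tuple[str, int, bool]], tagged_only: bool = False) -> dict[str, float]:
    """Fraction of tracked minutes per feature, optionally counting tagged tasks only."""
    totals: dict[str, float] = defaultdict(float)
    for feature, minutes, tagged in tasks:
        if tagged or not tagged_only:
            totals[feature] += minutes
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {feature: m / grand_total for feature, m in totals.items()}


if __name__ == "__main__":
    print(cost_by_feature(TASKS))                 # {'checkout': 1800.0, 'search': 1200.0}
    print(effort_share(TASKS))                    # checkout 0.6, search 0.4
    print(effort_share(TASKS, tagged_only=True))  # checkout 0.8, search 0.2
```

With identical underlying effort, the tag-only view reports an 80/20 split while tracked time reports 60/40: the difference is purely tag discipline.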

5. The renewal-time question

Six months before your CodeClimate Velocity renewal, ask one question: which dashboard did anyone outside engineering open in the last 30 days? If the answer is "nobody opened any of them", you're paying for a tool that solved a perceived problem, not an actual one. The real replacement might be a smaller scope (DORA only via Sleuth, IDE-only via WakaTime), not a like-for-like swap.

Pricing reality

| Plan tier | CodeClimate Velocity | PanDev Metrics | LinearB | Swarmia |
|---|---|---|---|---|
| Entry (10-25 devs) | $25-30/dev/mo | $15-25/dev/mo | $25-30/dev/mo | $20-35/dev/mo |
| Mid (50-150 devs) | $30-45/dev/mo | $20-30/dev/mo | $30-40/dev/mo | $25-40/dev/mo |
| Enterprise (200+) | Custom | Custom | Custom | Custom |
| Min seats | 25 | 5 | 25 | 25 |
| On-prem premium | N/A | None | N/A | N/A |

For a 50-engineer team at mid-tier list rates, Velocity lands around $18K-$27K/year and PanDev Metrics around $12K-$18K, roughly a third less. The savings don't make it the right choice (fit does), but they change the conversation from "approve a procurement" to "approve a small line item".
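A rough check of the annual figures against the per-seat rates in the pricing table. A minimal sketch, assuming list rates, billing on the larger of team size and the minimum seat count, and no discounts or annual-commit adjustments; real quotes will differ.

```python
def annual_cost(devs: int, rate_per_dev_month: float, min_seats: int) -> float:
    """List-price annual cost: billed seats x monthly per-seat rate x 12."""
    billed_seats = max(devs, min_seats)  # assumption: the minimum-seat floor applies
    return billed_seats * rate_per_dev_month * 12


# Mid-tier rate ranges (USD per dev per month) and minimum seats from the pricing table.
PLANS = {
    "CodeClimate Velocity": ((30, 45), 25),
    "PanDev Metrics": ((20, 30), 5),
}

if __name__ == "__main__":
    for name, ((low, high), min_seats) in PLANS.items():
        lo_cost = annual_cost(50, low, min_seats)
        hi_cost = annual_cost(50, high, min_seats)
        print(f"{name}: ${lo_cost:,.0f}-${hi_cost:,.0f}/year")
    # CodeClimate Velocity: $18,000-$27,000/year
    # PanDev Metrics: $12,000-$18,000/year
```

At 50 engineers the minimum-seat floor doesn't bind; it matters for small teams, where a 10-person team on a 25-seat minimum pays for 25 seats.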

Honest limits

We are PanDev Metrics. This article is biased toward arguing that IDE-level signal beats PR-event-only. That bias is real, but it's load-bearing for our positioning, and we'd rather be honest about it than pretend the comparison is neutral. We do not offer code-quality scoring. If you use CodeClimate Quality, none of the Velocity alternatives we listed replaces that side. Pair with SonarQube or Codacy for the static-analysis function.

Our IDE dataset covers B2B engineering teams, primarily in KZ/UZ/RU and select EU/US/SG deployments. Solo developers and pure open-source contributors are not in it.

The decision in two sentences

If you're replacing CodeClimate Velocity, the field is PanDev Metrics, LinearB, Swarmia, Jellyfish, and Faros AI, and the deepest fork is whether you want IDE-level signal or PR-event-only. If you're replacing CodeClimate Quality, you're shopping in a different aisle entirely (SonarQube, Codacy, DeepSource), and conflating the two is the most expensive evaluation mistake we see.