Best DX Platform Alternative in 2026: 5 Tools Compared

DX (getdx.com) is built by Microsoft Research alumni who shipped the original DevEx framework paper with Nicole Forsgren. Their survey methodology is genuinely good, probably the best in the market for measuring perceived friction, focus, and developer sentiment. But there's a structural truth that survey-led platforms can't escape: surveys measure what people say, not what they do.

If you searched "DX alternative" you've probably already noticed: DX dashboards depend on quarterly survey responses. Response rate decay, recall bias, and the gap between "I feel productive" and what the IDE telemetry shows are real problems when those numbers feed an annual budget cycle. Here are five options, including the case where DX is still the right pick.

{/* truncate */}

What DX is genuinely best at

Setting the bar honestly. DX still wins on:

- DevEx survey methodology. The DevEx framework (Noda, Storey, Forsgren, Greiler, 2023) is academic-grade. DX's productized version is the cleanest implementation in the market.

- Engineering culture diagnostics. If your question is "do my engineers feel friction in the codebase?" — DX answers it.

- Microsoft-backed credibility. When the buying committee includes academic-leaning leadership, the DX team's pedigree closes the deal.

If your only need is quarterly DevEx surveys and you have a stable engineering org with high response rates — keep DX. Most of this article is for teams who need more than that.

The structural limits of survey-based platforms

The pattern we see in renewal conversations:

- Survey response decay. DX recommends quarterly cadence. By the third cycle, response rates routinely drop below 60% — the threshold where conclusions get statistically wobbly. Stack Overflow's 2024 Developer Survey had to weight responses heavily for the same reason.

- Recall bias. A developer asked "how many uninterrupted focus blocks did you have last week?" is typically off by 30-50%. We've compared self-reports against IDE-heartbeat data for roughly 200 developers (see "What our data can't tell you" below): perceived focus and measured focus diverge significantly, especially for senior engineers.

- No real-time signal. Surveys are lagging indicators. By the time a quarterly DX survey shows a friction spike in CI pipeline times, the team has been suffering for 8 weeks.

- Limited DORA depth. DX added DORA metrics in 2023, but they're a side-feature. The product was designed around DevEx surveys; DORA was retrofitted.
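To make the response-decay point concrete, here is a minimal sketch of how the margin of error on a survey proportion widens as fewer people answer. It uses the standard formula for a proportion with a finite population correction. The function name `margin_of_error`, the 100-person team, and the worst-case `p = 0.5` are assumptions for illustration, and none of this describes how DX computes its own statistics.

```python
import math


def margin_of_error(population: int, responses: int,
                    p: float = 0.5, z: float = 1.96) -> float:
    """Half-width of an approximate 95% confidence interval for a proportion.

    Applies the finite population correction, which matters when the
    respondents are a large share of a small engineering org.
    """
    if not 0 < responses <= population:
        raise ValueError("responses must be between 1 and population")
    standard_error = math.sqrt(p * (1 - p) / responses)
    if population > 1:
        fpc = math.sqrt((population - responses) / (population - 1))
    else:
        fpc = 0.0  # a one-person "population" that answered has no sampling error
    return z * standard_error * fpc


team_size = 100  # hypothetical team, not real data
for rate in (0.9, 0.7, 0.6, 0.4):
    respondents = round(team_size * rate)
    moe = margin_of_error(team_size, respondents)
    print(f"{rate:.0%} response rate -> +/-{moe:.1%}")
```

This only covers sampling error and assumes the people who skip the survey are a random subset. In practice non-responders often differ from responders, so the real uncertainty at low response rates is usually worse than the formula suggests.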

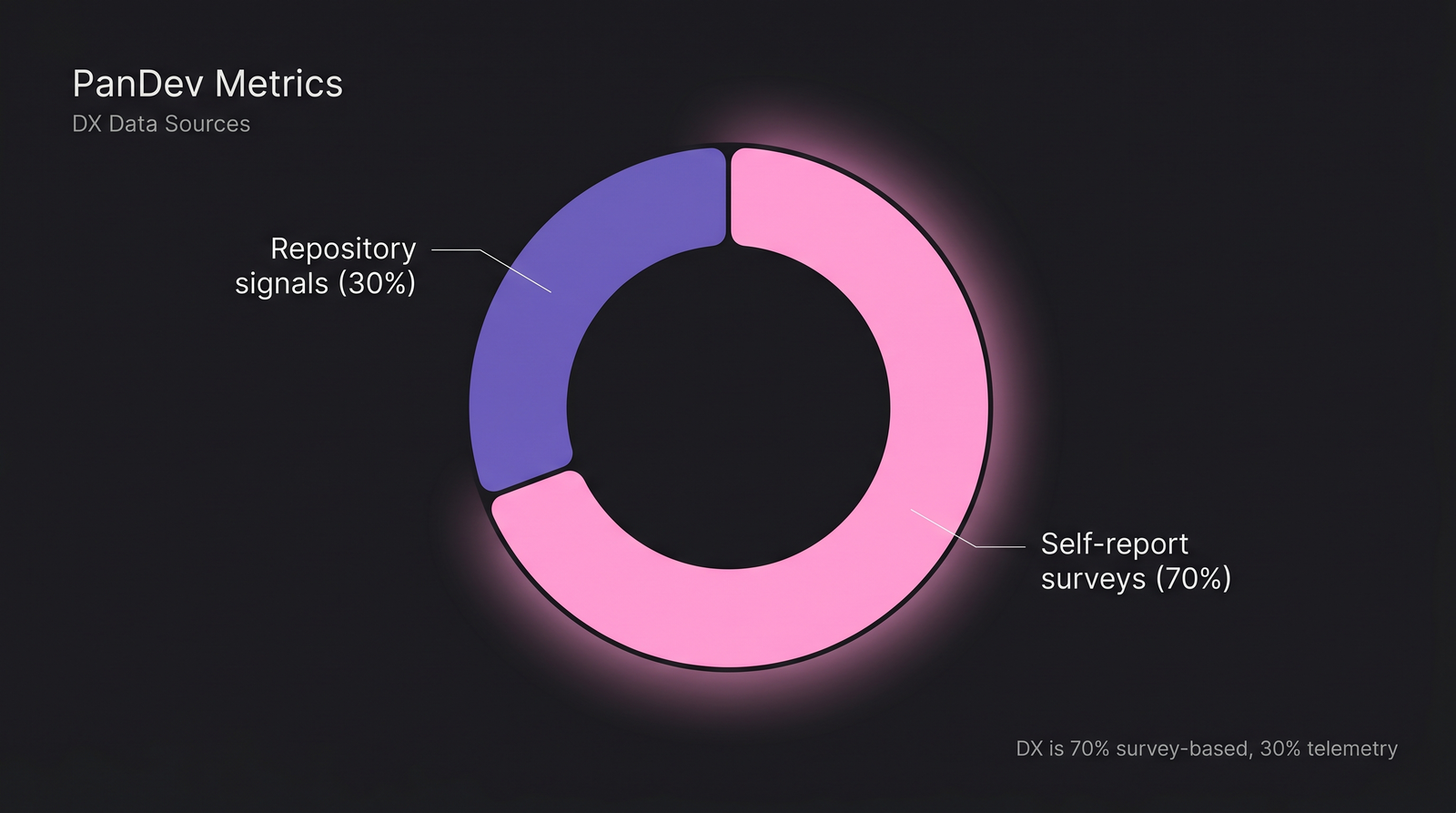

DX leans heavily toward self-report surveys (~70% of platform value). Alternatives that combine telemetry and surveys give a different signal mix.

The 5 alternatives compared

| Tool | Best for | Weak spot | Pricing band (100 devs/yr) |
|---|---|---|---|
| PanDev Metrics | DORA + IDE telemetry + cost in one platform | No native survey module (roadmap) | $25-35k |
| LinearB | Mid-market platform teams, DORA + workflow | No DevEx surveys, no IDE telemetry | $60-90k |
| Swarmia | Engineering-led teams with culture defaults | Limited DevEx, no IDE data | $40-60k |
| Jellyfish | Engineering investment + capacity reporting | Survey support is basic | $80-150k |
| DX (continue) | Survey-only DevEx with academic methodology | No IDE telemetry, lagging signals | $40-60k |

When each one fits

PanDev Metrics — telemetry-first, surveys optional

We built it. The honest pitch: PanDev Metrics gives you what DX doesn't — IDE heartbeat data measuring actual coding time, focus blocks, and context-switching at the per-second level, not quarterly self-report. Plus DORA, plus cost-per-feature, plus on-prem deployment.

The methodological difference matters. DX asks "how much focus time did you have this week?" — answer: a vague memory. PanDev measures it from IDE heartbeats — answer: 1h 18m median across our 100+ B2B companies. Both numbers have value. Only one is auditable.
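For readers who have not worked with heartbeat data, the sketch below shows one plausible way to turn raw IDE heartbeat timestamps into focus blocks. It is an illustration under stated assumptions, not PanDev Metrics' actual algorithm: the function name `focus_blocks`, the 5-minute idle gap, and the 25-minute minimum block length are all hypothetical choices.

```python
from datetime import datetime, timedelta


def focus_blocks(heartbeats,
                 max_gap=timedelta(minutes=5),
                 min_block=timedelta(minutes=25)):
    """Group heartbeat timestamps into sessions and keep the long ones.

    A session ends when two consecutive heartbeats are more than
    `max_gap` apart. Only sessions lasting at least `min_block`
    count as focus blocks. Both thresholds are assumed values.
    """
    blocks = []
    start = prev = None
    for ts in sorted(heartbeats):
        if start is None:
            start = prev = ts
        elif ts - prev <= max_gap:
            prev = ts  # still inside the current session
        else:
            if prev - start >= min_block:
                blocks.append((start, prev))
            start = prev = ts  # the gap was too long, start a new session
    if start is not None and prev - start >= min_block:
        blocks.append((start, prev))
    return blocks


# Hypothetical day: 40 minutes of steady activity, a long break,
# then a 10-minute burst that is too short to count as a focus block.
day_start = datetime(2026, 1, 5, 9, 0)
beats = [day_start + timedelta(minutes=2 * i) for i in range(21)]
beats += [day_start + timedelta(minutes=90 + 2 * i) for i in range(6)]

for block_start, block_end in focus_blocks(beats):
    print(block_start.time(), "->", block_end.time(), block_end - block_start)
```

The thresholds drive the result: a shorter `max_gap` splits the same day into more, shorter sessions. That is why the audit trail matters. With telemetry you can inspect and argue about the thresholds, which you cannot do with a remembered number.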

We don't ship a native survey module yet (it's on the 2026 roadmap). For teams who want both telemetry and pulse surveys, the common pattern is PanDev Metrics + a lightweight quarterly survey via Culture Amp or Lattice — combined cost still beats DX.

Pick PanDev Metrics if: you want measurable activity data over self-report, need DORA + cost + on-prem, or your buying committee asks "where's the audit trail behind these numbers?"

LinearB — for platform teams who want DORA + automation

LinearB doesn't try to replace DevEx surveys. It does DORA + PR-automation well, and that's the lane. If your DX renewal conversation reveals "we never read the survey results, we just use it for DORA" — LinearB is the cheaper, more focused replacement.

Pick LinearB if: you used DX exclusively for DORA, you have a platform engineering team, and you want PR-automation rules in the same product.

Swarmia — opinionated alternative with light DevEx

Swarmia ships with editorial defaults — small PRs, distributed reviews, async-first culture. Their DevEx coverage is lighter than DX (no formal survey framework) but the product reinforces healthy engineering practice through dashboard design rather than asking developers to self-report.

Pick Swarmia if: you trust their playbook, want defaults you don't have to configure, and don't need rigorous survey methodology.

Jellyfish — when the DX renewal becomes a finance conversation

Jellyfish is the move when "developer experience" stops being the question and "engineering investment ROI" becomes it. Their dashboards translate engineering activity into business-initiative spend. Survey support exists but is basic — it's not why you'd buy Jellyfish.

Pick Jellyfish if: the renewal is now driven by a CFO or VP of Finance, and the language has shifted from DevEx to capacity.

DX (renew) — when surveys really are your need

The honest contrarian take: if you have a stable engineering org of 200+ developers, high survey response rates (>70%), and your VP of Engineering reads the quarterly DevEx report carefully and acts on it — keep DX. The methodology is sound, the team is credible, and the alternatives can't replicate the survey rigor.

Stay on DX if: survey-based DevEx genuinely drives your decisions, response rates are healthy, and you don't need IDE-level activity data or aggressive DORA depth.

The contrarian claim: DevEx surveys disagree with telemetry

Microsoft Research's 2019 paper "What do software developers spend their time on?" found a gap of 25-40 percentage points on key categories between what developers reported and what they actually did. Our IDE-heartbeat data on 100+ B2B companies tracks the same gap: senior engineers consistently overestimate their focus time by 30-50%.

This isn't a knock on DevEx surveys. They measure something real: developer perception. Perception drives retention, engagement, and willingness to ship. But perception isn't the same as measurable activity. A team can report high focus on a survey while IDE data shows fragmented work — the disconnect itself is a signal worth investigating.
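If you want to check the perception gap on your own team, the comparison is simple arithmetic. The sketch below computes a relative overestimate per developer and takes the median. The function name `overestimate_pct` and the sample pairs are made up for illustration and are not drawn from our dataset. Note that it measures a relative percentage, which is one reading of "overestimate by 30-50%" and is not the same as a percentage-point gap.

```python
from statistics import median


def overestimate_pct(self_reported_hours: float, measured_hours: float) -> float:
    """Relative gap between reported and measured focus time, in percent.

    Positive values mean the developer reported more focus time than
    the telemetry recorded. Negative values mean they underestimated.
    """
    if measured_hours <= 0:
        raise ValueError("measured_hours must be positive")
    return (self_reported_hours - measured_hours) / measured_hours * 100


# Hypothetical (self-reported, measured) weekly focus hours. Not real data.
samples = [(12.0, 8.0), (10.0, 7.5), (6.0, 6.5), (15.0, 10.0)]

gaps = [overestimate_pct(reported, measured) for reported, measured in samples]
median_gap = median(gaps)
print(f"median overestimate: {median_gap:.1f}%")
```

The median is used instead of the mean so that one developer with a wildly wrong estimate does not dominate a small sample.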

The strongest engineering-intelligence stack uses both: telemetry for facts, surveys for sentiment. DX gives you one half. Most of its alternatives give you the other half. Pick based on which half you're missing.

Pricing reality

Approximate annual pricing for a 100-developer team, based on quotes from 2025-2026. Treat the numbers as directional.

| Plan | DX (renewal) | LinearB | Swarmia | PanDev Metrics | Jellyfish |
|---|---|---|---|---|---|
| Per-dev/month | $35-50 | $50-75 | $35-50 | $20-30 | $65-120 |
| 100-dev annual | $40-60k | $60-90k | $40-60k | $25-35k | $80-150k |
| Min seats | 50 | 25 | 25 | 10 | 50 |
| On-prem | No | No | No | Yes | No |
| IDE telemetry | No | No | No | Yes | No |
| DevEx surveys | Yes (best) | No | Light | No (roadmap) | Basic |
| DORA | Yes (added) | Yes (core) | Yes | Yes (4-stage) | Yes |
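To adapt the table to your own headcount, the annual figure is per-dev/month price × seats × 12. The sketch below does that arithmetic with the per-dev bands and minimum seats from the table. The helper name `annual_cost_range` is hypothetical, and billing at least the minimum seat count is an assumption about how vendors apply minimums, not a documented rule.

```python
def annual_cost_range(per_dev_month_low: int, per_dev_month_high: int,
                      seats: int = 100, min_seats: int = 0) -> tuple:
    """Return the (low, high) annual cost in dollars for a given seat count.

    Assumes you are billed for at least `min_seats`, even when the
    team is smaller than the vendor's minimum.
    """
    billable = max(seats, min_seats)
    return (per_dev_month_low * billable * 12,
            per_dev_month_high * billable * 12)


# (low, high, min seats) copied from the pricing table in this article.
bands = {
    "DX": (35, 50, 50),
    "LinearB": (50, 75, 25),
    "Swarmia": (35, 50, 25),
    "PanDev Metrics": (20, 30, 10),
    "Jellyfish": (65, 120, 50),
}

for tool, (low, high, min_seats) in bands.items():
    cost_low, cost_high = annual_cost_range(low, high, seats=100, min_seats=min_seats)
    print(f"{tool}: ${cost_low:,} - ${cost_high:,}")
```

Straight multiplication gives slightly different figures than the rounded "100-dev annual" row: $42-60k for DX and Swarmia, $24-36k for PanDev Metrics, and $78-144k for Jellyfish. The table rounds those bands, which is one more reason to read it as directional.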

What our data can't tell you

Our IDE-heartbeat dataset (100+ B2B companies, mostly 50-500 engineers, EMEA and CIS heavy) measures activity, not perception. We have no formal survey instrument, so we can't directly compare DevEx scores with DX's. The "30-50% overestimate" gap above comes from comparing developer self-reports in onboarding interviews against their IDE-measured behavior in the first 30 days. That is a sample of around 200 developers, not a statistically representative population.

Survey methodologies are also organization-dependent. A 70% response rate at a 50-person startup means something different from 70% at a 2,000-engineer enterprise. The framing in this article assumes mid-market teams; FAANG-scale orgs have different dynamics.

Decision framework in one paragraph

DX is the best survey-based DevEx platform on the market. The question is whether survey-based DevEx is what you actually need. If it is, renew. If you've realized you wanted telemetry-grounded DORA + activity data with 4-stage lead time breakdown, PanDev Metrics. If you wanted DORA + workflow automation, LinearB. If you wanted opinionated culture defaults without surveys, Swarmia. If the conversation has shifted to engineering-finance reporting, Jellyfish. The wrong move is renewing DX while the leadership team has stopped reading the surveys.

Related reading

- Developer Experience: What It Is and How to Measure It

- DORA vs SPACE vs DevEx: Which Framework Should You Choose in 2026

- PanDev Metrics vs DX (getdx.com): Quantitative Metrics vs DevEx Surveys — the head-to-head if you've narrowed to two

- How Much Developers Actually Code (Real IDE Data from 100+ Teams)