Best Haystack Alternative in 2026: 5 Tools Compared

Haystack does one thing well: lightweight engineering analytics for early-stage teams. It's clean, fast to set up, and reasonably priced. The issue most customers hit isn't a feature gap — it's a scale ceiling. Past 50-80 engineers, the dashboards feel thin, the integration list feels short, and the question "where does our developer time actually go?" goes unanswered.

If you're searching "Haystack alternative", you've probably already hit one of those walls. Here are 5 platforms that take you past them, plus an honest look at when you should stay on Haystack.

{/* truncate */}

What Haystack is genuinely good at

Setting the bar honestly first. Haystack still wins when:

- Your team is under 30 engineers. The product is sized for this segment, and the pricing reflects it.

- You only need DORA + a few collaboration metrics. PR cycle time, review distribution, deployment frequency — these are clean.

- You don't want to think about deployment. Haystack is cloud-only, no on-prem, no setup beyond connecting GitHub.

For a 15-person engineering team that just wants the four DORA numbers and a basic team dashboard, Haystack is fine. This article isn't for that team.

The scale ceiling: why teams outgrow Haystack

The pattern we see in renewal conversations:

- Integrations stop scaling at 50+ devs. Haystack supports GitHub, GitLab, and Jira. That's it. No ClickUp, no Azure DevOps, no Bitbucket on-prem, no Linear. As your toolchain grows, Haystack stops covering it.

- No IDE-level telemetry. Haystack reads Git events. It can't tell you what developers do between commits: debugging, code reading, switching projects. Microsoft Research's 2019 study put active coding at 22% of the workday. Git-only tools see, at best, the output of that 22%.

- The product cadence has slowed. Haystack's last major UI overhaul was in 2023. New features in 2025 were incremental. For a 200-person engineering org, "thin and stable" reads as "stagnant."

- No on-prem. Same dealbreaker as with most US-based DORA tools. Fintech and govtech teams with EU residency requirements hit a wall.

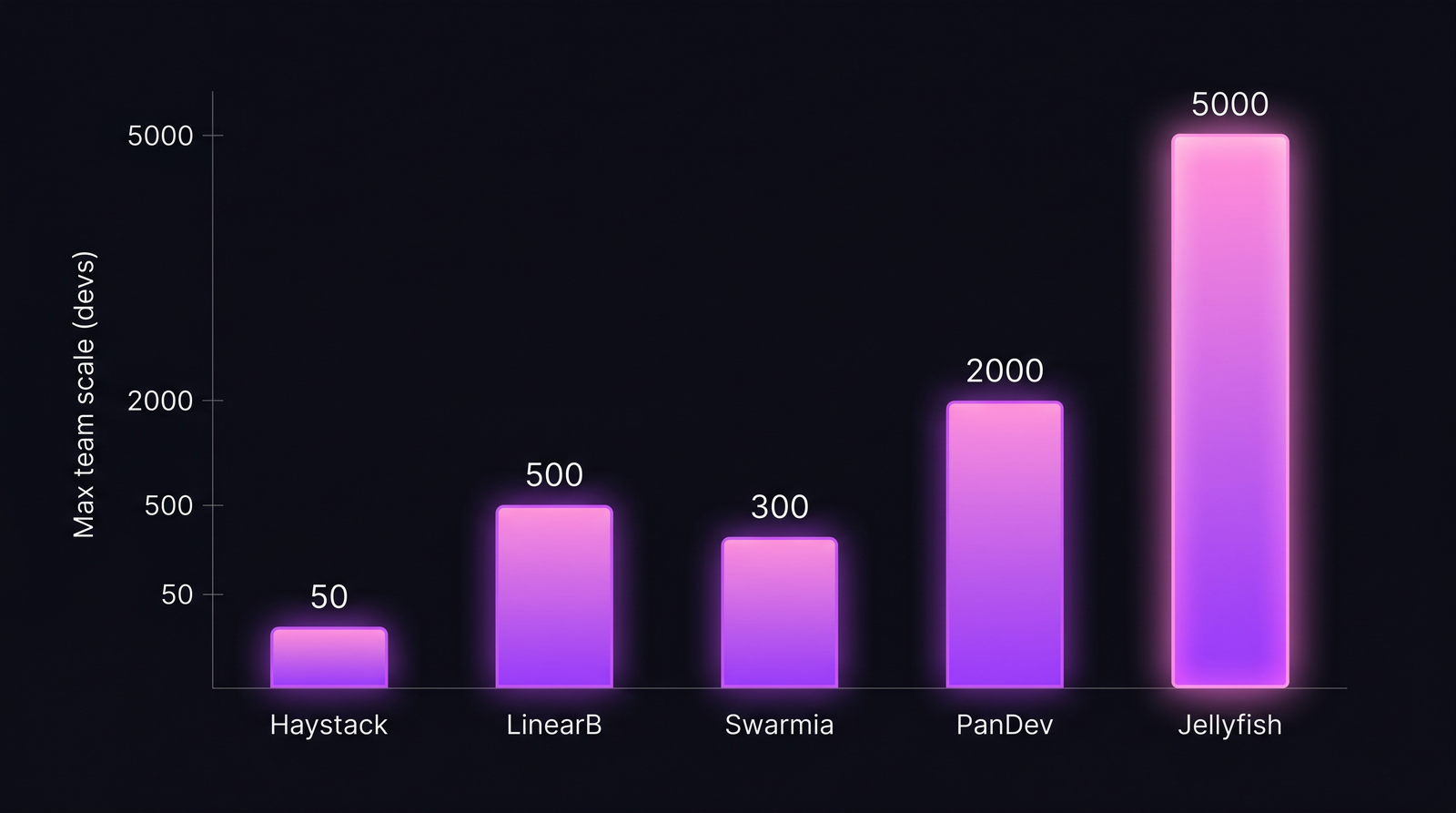

Practical ceiling based on customer-base data and dashboard performance benchmarks. "Max team scale" reflects where each platform's UX and data model start to feel constrained, not a hard product limit.


The 5 alternatives compared

| Tool | Best for | Weak spot | Pricing band (100 devs/yr) |
|---|---|---|---|
| LinearB | Mid-market teams that want DORA + PR automation | Opaque pricing, mandatory annual | $60-90k |
| Swarmia | Engineering-led teams with opinions on collaboration | Smaller dataset, fewer integrations | $40-60k |
| PanDev Metrics | Full-stack: DORA + IDE + cost + on-prem | Smaller US footprint than incumbents | $25-35k |
| Jellyfish | Boardroom-level engineering investment reporting | DORA is a side feature, not core | $80-150k |
| Code Climate Velocity | Code-quality + git-based metrics in one | Acquired in 2023, slow updates | $40-70k |

When each one fits

LinearB — for teams that want DORA + workflow automation

LinearB is the natural step up from Haystack if your problem is "DORA is fine, but we need more." Their gitStream PR-automation rules let you encode reviewer assignment, label propagation, and SLA tracking inside the same product. Mid-market teams (50-300 devs) are the sweet spot.

Pick LinearB if: you have 50-300 devs, an SRE-leaning platform team, and the renewal conversation includes "we want to automate our PR process."

Swarmia — for teams aligned with their playbook

Swarmia takes a stronger editorial position than Haystack. They publish their view on healthy engineering — small PRs, distributed reviews, async-first — and the product reflects that view. If your team already operates that way, Swarmia reinforces it. If you don't, you'll fight the defaults.

The dataset is smaller, and the integration list (GitHub, GitLab, Jira, Linear, Slack) is only slightly longer than Haystack's. The win over Haystack is more polish and faster product velocity, not a wider feature footprint.

Pick Swarmia if: you want opinionated defaults you don't have to configure, you're 50-200 devs, and you don't need on-prem or non-standard integrations.

PanDev Metrics — for teams that need the full picture

We built it. The honest pitch: PanDev Metrics gives you Haystack-equivalent DORA plus IDE heartbeat telemetry across JetBrains, VS Code, Eclipse, Xcode, and Visual Studio, cost-per-feature analytics, and on-prem Docker / Kubernetes deployment.

The IDE layer is the differentiator. Haystack tells you "PR cycle time is 2.4 days." PanDev tells you what happened during those 2.4 days: was the developer actively coding, debugging, in meetings, or context-switching across three projects? Our IDE-heartbeat data across 100+ B2B teams shows that the 2.4-day cycle time often hides only 1h 18m of actual code-writing per day. Knowing how the rest of the time was spent changes the conversation with leadership.
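To make "heartbeat telemetry" concrete, here is a minimal sketch of one common way to turn editor heartbeats into active time: sum the gaps between consecutive heartbeats and drop any gap longer than an idle timeout. This is an illustration only, not a description of how PanDev Metrics computes its numbers. The function name `active_time`, the 5-minute timeout, and the sample timestamps are all assumptions.

```python
from datetime import datetime, timedelta


def active_time(heartbeats, idle_timeout=timedelta(minutes=5)):
    """Sum the gaps between consecutive heartbeats, skipping idle gaps.

    `heartbeats` is an iterable of datetime objects (hypothetical input).
    Any gap longer than `idle_timeout` is treated as time away from the editor.
    """
    ordered = sorted(heartbeats)
    total = timedelta()
    for prev, curr in zip(ordered, ordered[1:]):
        gap = curr - prev
        if gap <= idle_timeout:
            total += gap
    return total


# Made-up sample: three heartbeats, a 26-minute break, then two more.
sample = [
    datetime(2026, 1, 12, 9, 0),
    datetime(2026, 1, 12, 9, 2),
    datetime(2026, 1, 12, 9, 4),
    datetime(2026, 1, 12, 9, 30),
    datetime(2026, 1, 12, 9, 33),
]

print(active_time(sample))  # 0:07:00
```

The timeout is the main design choice. A short timeout undercounts reading and thinking time, while a long one counts coffee breaks as coding, so any number produced this way should be read as an estimate.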

Pick PanDev Metrics if: you've outgrown DORA-only, want IDE-level activity data, need cost-per-feature for finance conversations, or have a regulated-industry on-prem requirement.

Jellyfish — for engineering-finance reporting

Jellyfish is a step in a different direction. Less about day-to-day engineering ops, more about how engineering investment maps to business initiatives. Their dashboards talk in CFO language: "47% of engineering capacity went to enterprise-tier work last quarter."

If your renewal conversation includes a CFO or VP of Finance, Jellyfish gets the room. If it's just engineers in the room, it's overkill.

Pick Jellyfish if: the buying committee includes finance leadership and the use case is "we need to defend the engineering budget to the board."

Code Climate Velocity — for code-quality-first teams

Code Climate (acquired by Aikido Security in 2023) bundles their classic code-quality scanner with git-based velocity metrics. The DORA implementation is solid. The real edge is technical-debt and complexity tracking on top of velocity — you see "PRs ship 18% slower in the legacy module" because the data is right there.

The catch: post-acquisition, the velocity product has moved slowly. Some customers report 9-month gaps between meaningful releases.

Pick Code Climate if: technical debt and code quality are first-class concerns, you already use their quality scanner, and you can tolerate slower product cadence.

Pricing reality

Estimated list pricing, per developer per month and annualized for a 100-developer team. These are real-world numbers we've seen in 2025-2026 quotes: directional, not exact.

| Plan | Haystack (renewal) | LinearB | Swarmia | PanDev Metrics | Jellyfish | Code Climate |
|---|---|---|---|---|---|---|
| Per-dev/month | $15-25 | $50-75 | $35-50 | $20-30 | $65-120 | $35-60 |
| 100-dev annual | $18-30k | $60-90k | $40-60k | $25-35k | $80-150k | $40-70k |
| Min seats | 10 | 25 | 25 | 10 | 50 | 25 |
| On-prem | No | No | No | Yes | No | No |
| IDE telemetry | No | No | No | Yes | No | No |
| Trial | Yes (14d) | Yes (14d) | Yes (14d) | Yes (30d) | Demo only | Yes (14d) |
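The annual row follows from the per-developer row: monthly price × seats × 12. The sketch below reproduces that arithmetic and, as an optional step, applies the 20-40% annual-contract discount range this article cites. The function name `annual_band` and its parameters are illustrative assumptions; the price bands are copied from the table.

```python
def annual_band(low_per_dev_month, high_per_dev_month, seats=100, discount_pct=0):
    """Return the (low, high) annual cost in dollars for a per-seat monthly band."""
    def annualize(price):
        return price * seats * 12 * (100 - discount_pct) / 100
    return (annualize(low_per_dev_month), annualize(high_per_dev_month))


# Per-developer monthly bands from the table above (dollars).
PER_DEV_MONTH = {
    "Haystack": (15, 25),
    "LinearB": (50, 75),
    "Swarmia": (35, 50),
    "PanDev Metrics": (20, 30),
    "Jellyfish": (65, 120),
    "Code Climate": (35, 60),
}

for tool, (low, high) in PER_DEV_MONTH.items():
    list_low, list_high = annual_band(low, high)
    # Cheapest case: low price with the deepest discount.
    # Most expensive discounted case: high price with the shallowest discount.
    deal_low, _ = annual_band(low, high, discount_pct=40)
    _, deal_high = annual_band(low, high, discount_pct=20)
    print(f"{tool}: list ${list_low:,.0f}-${list_high:,.0f}, "
          f"discounted ${deal_low:,.0f}-${deal_high:,.0f}")
```

Run against the table, this shows that the annual bands are rounded: Swarmia's $35-50 per developer per month works out to $42,000-60,000, which the table lists as $40-60k.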

The contrarian take: many teams switching from Haystack are switching too early

Unpopular advice from a competitor, but about 30% of the "Haystack alternative" searchers we've talked to don't actually have a Haystack problem. They have a measurement problem: they're tracking the wrong metrics, ignoring the dashboards they have, or expecting numbers to fix a process gap that no tool will fix.

Switching tools won't fix:

- A team that doesn't run retros on the metrics they have

- A leadership team that asks for "productivity numbers" but doesn't act on the ones already shown

- A workflow where branch names don't include task IDs (every DORA tool degrades without this)

Switch tools when you've outgrown a feature surface, not when you're frustrated by your own process.

What the data can't tell you

Our IDE-heartbeat dataset covers 100+ B2B companies (mostly 50-500 engineers, EMEA and CIS heavy). We have less signal on US-only teams under 20 engineers, which is Haystack's core market — so the "scale ceiling" framing comes from buyer conversations, not statistical analysis. The pricing bands are list-price estimates from quotes; every vendor on this list discounts 20-40% on annual contracts.

DORA scores in any of these tools also depend on the task-ID-in-branch-name convention. If your team doesn't use it, lead time and change failure rate degrade in every platform — including ours.
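A quick way to check how well your team follows the convention is to measure what share of recent branch names carry a task ID. The sketch below assumes a Jira-style key such as `PROJ-123`; the regular expression, the function names, and the sample branch names are hypothetical, and your tracker's ID format may differ.

```python
import re

# Assumed ID format: uppercase project key, hyphen, number (for example "PROJ-123").
TASK_ID = re.compile(r"\b[A-Z][A-Z0-9]+-\d+\b")


def extract_task_id(branch):
    """Return the first task ID found in a branch name, or None."""
    match = TASK_ID.search(branch)
    return match.group(0) if match else None


def task_id_coverage(branches):
    """Return the fraction of branch names that contain a task ID."""
    branches = list(branches)
    if not branches:
        return 0.0
    linked = sum(1 for name in branches if extract_task_id(name) is not None)
    return linked / len(branches)


# Made-up branch names for illustration.
sample_branches = [
    "feature/PROJ-123-add-login",
    "bugfix/ENG-42",
    "fix-typo",
    "hotfix/urgent",
]

print(extract_task_id(sample_branches[0]))
print(f"{task_id_coverage(sample_branches):.0%} of branches carry a task ID")
```

If coverage is low, fixing the naming convention is likely to improve lead-time and change-failure-rate data more than switching platforms would.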

Decision framework in one paragraph

Haystack was built for the 10-50-engineer segment. If you're still there and renewing isn't painful, stay. If you've grown past 50 and want PR-automation, LinearB. If you want opinionated culture defaults, Swarmia. If you need DORA plus IDE-level activity, cost data, and on-prem — especially for regulated environments — PanDev Metrics. If you're answering to a CFO, Jellyfish. If code quality is first-class, Code Climate. The wrong move is renewing Haystack while frustrated and never naming what you actually need next.