Best LinearB Alternative in 2026: When the Workflow Engine Costs More Than It Saves

LinearB built one of the most opinionated tools in engineering analytics. The dashboards are good. The DORA reports are accurate. But the real product is the workflow engine: gitStream rules, automatic PR routing, Slack bot reminders, custom team initiative tracking. That layer is what justifies the $30-50/seat price tag. The question every renewal cycle raises: is the workflow engine actually changing behavior, or are we paying a premium for a dashboard?

If you're typing "linearb alternative" in 2026, you've probably already asked yourself that.

{/* truncate */}

Where LinearB is genuinely strong

Three capabilities are hard to find elsewhere at the same depth:

- gitStream as a programmable workflow layer. Custom rules in YAML that block, route, label, or automate PRs. No one else ships this with the same maturity.

- DORA + Project DORA. Standard four metrics, plus their breakout for projects/initiatives, useful for planning conversations.

- A real customer-success motion. LinearB invests in onboarding the way a top-tier B2B SaaS does. If you need an opinionated coach pushing your team to adopt metrics, that comes free with the seat price.

If the gitStream automation layer is the reason you bought LinearB and your team adopted it, the rest of this article doesn't apply. Stay where you are.

When LinearB stops paying back

We pulled migration patterns from teams that left LinearB in the last 18 months. The cost-vs-value math is the dominant story.

| Migration trigger | What happens at renewal | Frequency in our pipeline |
|---|---|---|
| "We use 20% of the features for 100% of the price" | Per-seat cost grew faster than usage | ~45% |
| "Workflow automations weren't adopted" | gitStream rules sit unused after onboarding | ~25% |
| "We need IDE-level data" | LinearB is Git+CI/CD only | ~15% |
| "We need on-prem" | LinearB is cloud-only | ~10% |
| "We need cost-per-feature" | LinearB doesn't compute hourly rate × time | ~5% |

The 45% row is the one we keep seeing. The honest framing: gitStream is a programming model, but most teams onboard it the way they would a SaaS feature and then never write rules. The 2024 SPACE framework follow-up paper from Microsoft Research is explicit about this: automation tools only show ROI when teams actually configure them, and most don't.

Our own customer pipeline shows that of teams who churned from LinearB, about 60% had fewer than 5 active gitStream rules at the time of migration. They were paying for a workflow engine they weren't using.

The five real LinearB alternatives in 2026

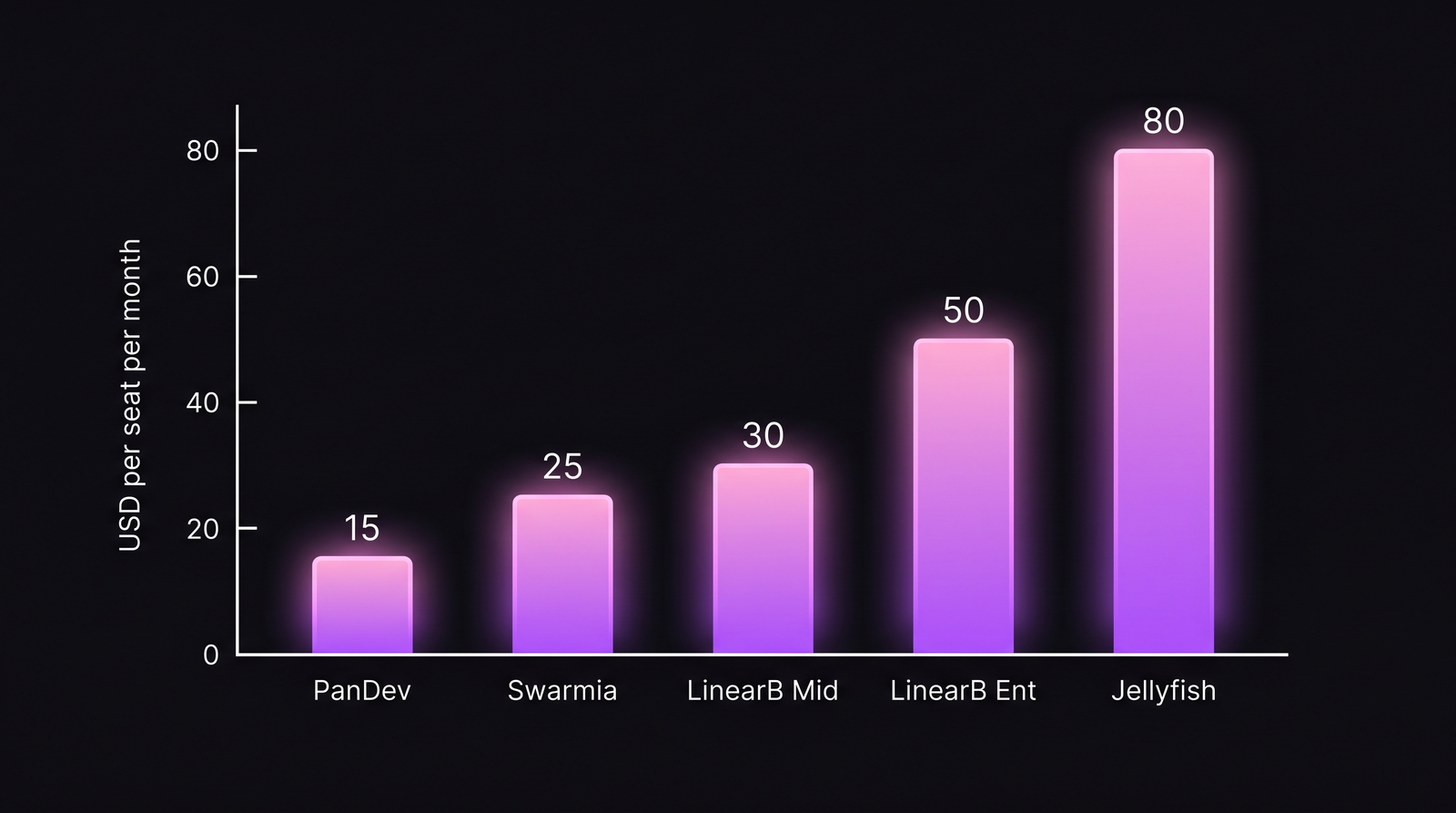

LinearB sits in the middle of the pricing spectrum. Alternatives split into "cheaper, simpler" and "more expensive, broader."

| Tool | Best for | Approx. price (2026) | Trade-off vs LinearB |
|---|---|---|---|
| Swarmia | Pure DORA + PR analytics | ~$25/seat/month | No workflow engine, no automations |
| DX (getdx.com) | DevEx surveys + telemetry | $10-20/seat/month | Different question; measures developer experience, not delivery throughput |
| Jellyfish | Engineering ↔ exec / capitalization | $50-100K/year | More expensive, broader, board-ready |
| Pluralsight Flow (Appfire) | Legacy Git analytics | ~$25/seat/month | Older UX, slower iteration |
| PanDev Metrics | IDE + Git + tasks + cost + on-prem | $15/seat/month | No gitStream-style workflow rules; richer data sources, on-prem option |

Prices are 2026 list quotes from public pricing pages and reseller conversations. LinearB and Jellyfish list prices have shifted twice this year alone, so verify before procurement.
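
To make the spread concrete, here is a rough sketch that annualizes the list prices from the table above for a hypothetical 100-seat team. It assumes the per-seat prices are monthly, that the LinearB range is the $30-50/seat figure quoted earlier (read here as a monthly price, which is our assumption), and that the Jellyfish quote is a flat annual contract that does not scale with seats. The seat count and the function name `annual_cost_range` are illustrative, not taken from any vendor.

```python
from __future__ import annotations

# Approximate per-seat monthly list prices in USD, as (low, high), from the table above.
# The LinearB range is the $30-50/seat figure from the intro, assumed to be monthly.
PER_SEAT_MONTHLY = {
    "LinearB": (30, 50),
    "Swarmia": (25, 25),
    "DX": (10, 20),
    "Pluralsight Flow": (25, 25),
    "PanDev Metrics": (15, 15),
}

# Jellyfish is quoted per year; treating it as flat regardless of seats is an assumption.
FLAT_ANNUAL = {"Jellyfish": (50_000, 100_000)}


def annual_cost_range(tool: str, seats: int) -> tuple[int, int]:
    """Return the (low, high) annual cost in USD for a tool at a given seat count."""
    if tool in FLAT_ANNUAL:
        return FLAT_ANNUAL[tool]
    low, high = PER_SEAT_MONTHLY[tool]
    return (low * seats * 12, high * seats * 12)


if __name__ == "__main__":
    seats = 100  # hypothetical team size
    for tool in [*PER_SEAT_MONTHLY, *FLAT_ANNUAL]:
        low, high = annual_cost_range(tool, seats)
        print(f"{tool:18s} ${low:>7,} - ${high:>7,} per year")
```

Swap in your own seat count and the quotes you actually receive; list prices are only a starting point for this comparison.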

The price-vs-feature gap is real, but the trade-off changes by tool. Cheaper alternatives drop the workflow engine; more expensive alternatives add executive-grade reporting.


Swarmia: the simpler Git-native alternative

Swarmia is the closest functional substitute for LinearB's measurement features. It computes DORA from the same Git events, surfaces PR cycle bottlenecks, and ships team dashboards. What it doesn't do is the workflow engine. If you're using LinearB primarily for the dashboards and you've never written a gitStream rule, Swarmia gets you the same answers at a lower price. We covered this in PanDev vs Swarmia for context.

DX (getdx.com): when the question is DevEx, not delivery

DX answers a different question than LinearB. LinearB asks "is your delivery pipeline efficient?" DX asks "is your developer experience healthy?" Different problem, different tool. If you've been using LinearB to track flow metrics and wishing you had survey data alongside, DX is the right move. If you've been using LinearB for delivery acceleration, DX is not the right substitute.

Jellyfish: the upgrade, not the alternative

Jellyfish is more expensive than LinearB at every level. The reason teams move up the price ladder: Jellyfish maps engineering investment to business outcomes in a language CFOs and boards understand. Capitalization, ROI per initiative, multi-quarter trends. If you're at LinearB and finance is asking questions LinearB can't answer, Jellyfish is the answer. We dug into this in PanDev vs Jellyfish.

Pluralsight Flow: the legacy choice

Pluralsight Flow (acquired by Appfire) is the oldest tool in the category. It still has customers because the data model is mature and the price is reasonable. The trade-off is UX (it feels like a 2018 tool), and the iteration pace has slowed since the Appfire acquisition. Worth evaluating only if your procurement team trusts incumbents and your engineering team doesn't care about polish.

PanDev Metrics: for the data-source breadth

We sit at $15/seat/month, which is at the bottom of the LinearB-class market. The trade-off is honest: we don't have a gitStream-equivalent workflow engine, and we won't pretend otherwise. What we add is data-source breadth: IDE heartbeat from VS Code / JetBrains / Eclipse / Xcode / Visual Studio plugins, task-tracker time from Jira / ClickUp / Yandex Tracker, plus a financial layer that turns hours into cost-per-feature. Joining IDE time + Git artifact + Jira task + hourly rate is the kind of query LinearB explicitly doesn't run.
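
A minimal sketch of what that join computes, under simplified assumptions: time entries are already attributed to a task key, each developer has a single hourly rate, and each task maps to at most one feature. The `TimeEntry` shape, the names, and the rates below are hypothetical and only illustrate the arithmetic (hours × hourly rate, summed per feature); they are not a product schema.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class TimeEntry:
    developer: str
    task_key: str  # issue key the tracked time was attributed to, e.g. "PAY-101"
    hours: float


def cost_per_feature(entries, hourly_rates, task_to_feature):
    """Sum hours x hourly rate per feature; unmapped tasks land in 'unassigned'."""
    totals = defaultdict(float)
    for entry in entries:
        feature = task_to_feature.get(entry.task_key, "unassigned")
        totals[feature] += entry.hours * hourly_rates[entry.developer]
    return dict(totals)


if __name__ == "__main__":
    # Hypothetical data for illustration only.
    entries = [
        TimeEntry("alice", "PAY-101", 6.0),
        TimeEntry("bob", "PAY-101", 4.0),
        TimeEntry("alice", "PAY-102", 2.5),
        TimeEntry("bob", "OPS-7", 3.0),
    ]
    hourly_rates = {"alice": 60.0, "bob": 45.0}
    task_to_feature = {"PAY-101": "checkout", "PAY-102": "checkout"}
    print(cost_per_feature(entries, hourly_rates, task_to_feature))
```

Keeping an explicit "unassigned" bucket matters in practice: time that cannot be tied to a feature is usually the first thing finance asks about.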

We are also the only tool in this comparison with an on-prem Docker and Kubernetes package. In fintech, telecom and govtech, that's not a feature; it's a procurement gate. Cloud-only competitors fail it. We pass.

What to actually evaluate next

Don't write a feature checklist. Write a question list. Then test each tool against the questions in a 30-day pilot with real data.

A practical evaluation framework that has held up across our customer pipeline:

| Step | Question | How to test |
|---|---|---|
| 1 | What 3 questions can't I answer today? | Write them down before vendor demos |
| 2 | Which vendor's data model joins the sources I need? | Ask for the schema diagram |
| 3 | What's the renewal price structure (linear, step-function)? | Ask for year-3 pricing in writing |
| 4 | Will the workflow features actually get used? | Audit your existing tools: what % of features are configured? |
| 5 | Does on-prem matter? | Check with security/compliance, not the CTO |
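
For step 3, a rough sketch of why the price structure matters once headcount grows. It compares a linear per-seat price with a step-function price billed in whole seat bands. Every number here (seat growth, the $30 per-seat price, the 50-seat band at $18,000 per year) is hypothetical and chosen only to show the shape of the difference; real contracts will differ.

```python
import math


def linear_annual(seats: int, per_seat_monthly: float) -> float:
    """Linear pricing: every seat costs the same monthly amount."""
    return seats * per_seat_monthly * 12


def stepped_annual(seats: int, band_size: int, band_annual_price: float) -> float:
    """Step pricing: billed in whole bands, so 51 seats with band_size=50 pays for 2 bands."""
    return math.ceil(seats / band_size) * band_annual_price


if __name__ == "__main__":
    seats_by_year = [90, 110, 140]  # hypothetical headcount for years 1-3
    for year, seats in enumerate(seats_by_year, start=1):
        linear = linear_annual(seats, per_seat_monthly=30)
        stepped = stepped_annual(seats, band_size=50, band_annual_price=18_000)
        print(f"year {year}: {seats} seats, linear ${linear:,.0f}, stepped ${stepped:,.0f}")
```

With these made-up inputs the stepped plan jumps when the team crosses 100 seats and then stays flat, which is exactly the kind of cliff a written year-3 quote exposes.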

The most predictive question we've seen: ask the vendor for a customer reference at your scale who specifically uses the feature you're paying for. If they can't produce one in a week, the feature exists but isn't adopted.

The honest limit

This article is written from a perspective: we're a competitor in this market. We've tried to be honest about where LinearB outperforms us (workflow automation, customer-success motion, brand) and where the alternatives sit. But our customer dataset skews B2B mid-market in Eastern Europe and Central Asia. For a 2,000-engineer FAANG org, the trade-offs change. For a 5-person bootstrap, this entire category is overkill. None of these tools is the right answer at that size, and we'd point you at GitHub's free Insights features or DORA's open-source Four Keys project instead.

A team-size rule of thumb that has held up:

| Engineers | Likely best fit |
|---|---|
| <10 | Free GitHub Insights, no platform yet |
| 10-50 | Swarmia, PanDev Metrics, or DX |
| 50-200 | LinearB, PanDev Metrics, Swarmia |
| 200-1000 | Jellyfish, LinearB, PanDev Metrics (on-prem cases) |
| 1000+ | Jellyfish, Faros AI, custom data pipeline |

The contrarian read

The default frame in this category is "pay more, get more." It's wrong half the time. The cleaner version: the tool that gets adopted wins, and adoption tracks closer to UX simplicity than feature breadth. We've seen 200-engineer orgs adopt Swarmia in 2 weeks and not adopt LinearB after 6 months. The same data sources. The same DORA metrics. Different adoption curves. Pay for the tool your team will open every Monday morning, not the one with the longest feature matrix.

Pick the alternative that the team actually uses. That's the only metric that matters at renewal.