Best Sleuth Alternative in 2026: 5 Tools Compared

Sleuth shipped one of the cleanest DORA implementations on the market. Then in late 2024 it was acquired and folded into a larger DevOps suite, and by 2026 the standalone product feels less actively developed than the platforms around it. That's reason enough for many teams to shop around: not because Sleuth is bad, but because betting your delivery telemetry on a product that's no longer the parent company's headline is a real risk.

This is not a hit-piece. Sleuth's deploy-correlation model still beats most competitors. But if you searched "Sleuth alternative" you already know the deal: you want options. Here are 5 — what each does well, what each gets wrong, and the honest pick for each shape of team.

{/* truncate */}

Why teams are leaving Sleuth in 2026

Three patterns repeat in the renewal conversations we hear:

- Roadmap drift after acquisition. Sleuth shipped fewer standalone features in 2025 than in 2023. Customers ask whether the product survives long-term as a focused tool, or gets bundled into a bigger DORA-adjacent platform.

- DORA-only is no longer enough. Google's 2024 DORA report named the four classic metrics as a floor, not a ceiling. Teams now want DevEx, focus time, and cost-per-feature in the same view — Sleuth doesn't model any of those.

- Cloud-only is a dealbreaker for regulated buyers. Fintech, govtech, and EU-residency customers can't ship telemetry to a US SaaS. Sleuth has no on-prem option and no plans to add one.

Two of those three are structural. The third is fixable but hasn't been fixed in three years.

What Sleuth is still genuinely good at

Before listing alternatives, set the bar honestly. Sleuth still wins on:

- Deploy correlation. Sleuth ties git commits → deploys → incidents → DORA scores in one chain better than anyone except maybe LinearB.

- Feature flag tracking. First-class LaunchDarkly integration. If you ship behind flags, Sleuth understands the difference between "code merged" and "feature live".

- Slack ChatOps. The Slack bot for deploys and incidents is mature and used in production by hundreds of platform teams.

If those three are 80% of your need, just renew Sleuth. The rest of this article is for teams whose need is bigger.

The 5 alternatives compared

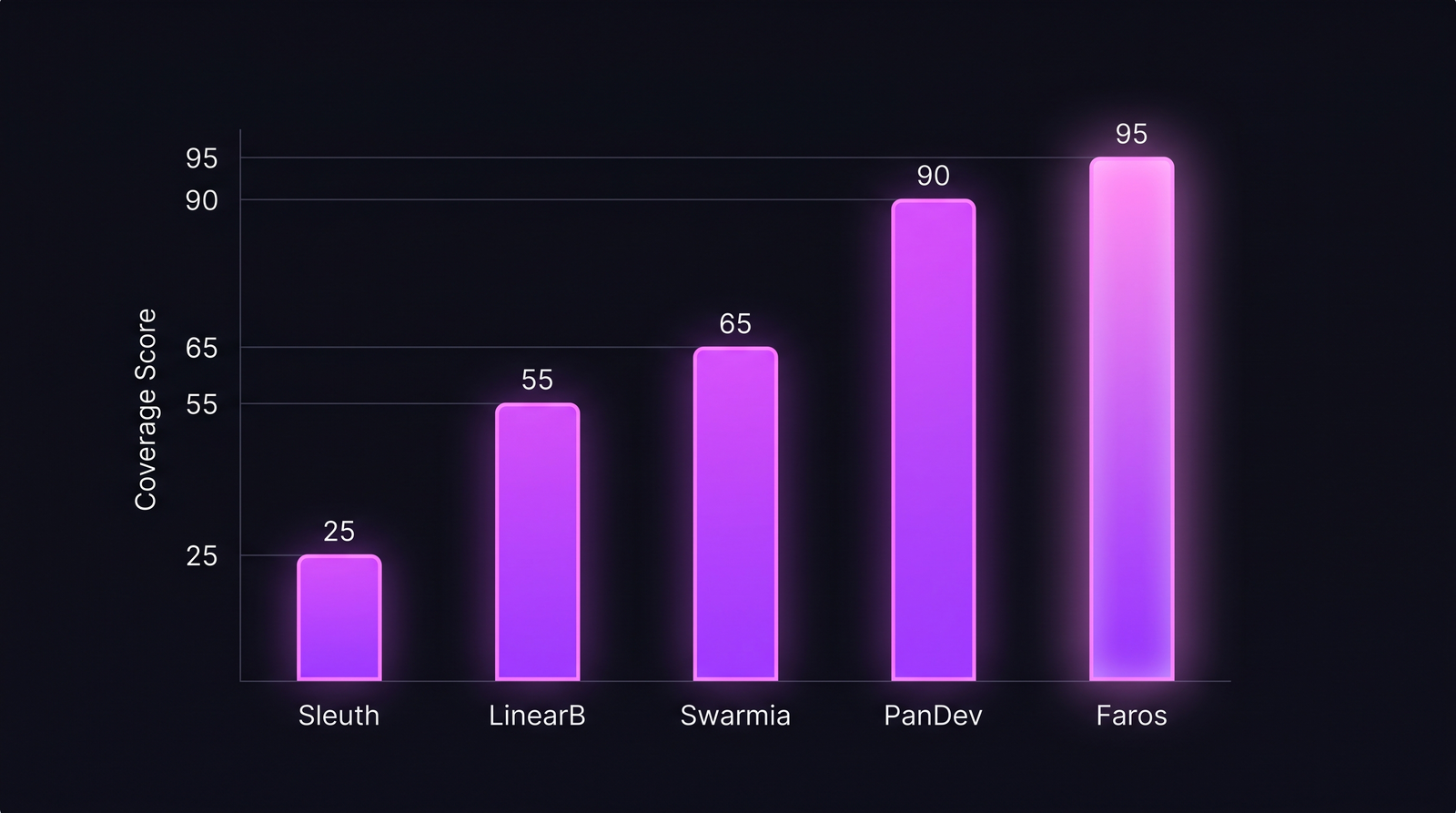

Each tool's "coverage score" reflects how many engineering-intelligence dimensions it measures: DORA, IDE activity, cost, DevEx, on-prem support, and AI queries.


| Tool | Best for | Weak spot | Pricing band (100 devs/yr) |
|---|---|---|---|
| LinearB | Mid-market platform teams that want DORA + workflow automation | Pricing opacity, mandatory annual | $60-90k |
| Swarmia | Engineering-led teams, opinionated on collaboration metrics | Smaller dataset, fewer integrations | $40-60k |
| PanDev Metrics | DORA + IDE telemetry + cost in one platform, on-prem option | Smaller community than US incumbents | $25-35k |
| Faros AI | Enterprise data lakes, custom modeling, very deep pockets | Expensive, slow to deploy | $150-300k |
| Jellyfish | Finance-heavy reporting to CFO and board | DORA is a side feature, not core | $80-150k |

When each one fits

LinearB — the closest like-for-like swap

If you liked Sleuth's deploy-centric model and just want a more actively-developed version, LinearB is the obvious move. They ship gitStream (PR automation) and WorkerB (Slack bot) features that overlap with where Sleuth was heading before the acquisition. The catch: pricing is opaque and they're aggressive on annual contracts.

Pick LinearB if: you have a platform engineering team, deploy ≥3x/day, and want PR-automation rules baked into the same product as DORA.

Swarmia — the opinionated alternative

Swarmia takes a stronger editorial line than Sleuth ever did. They publish their position on what good engineering looks like (small PRs, pair work, healthy review distribution) and the dashboards reinforce that view. Some teams love it. Others find it preachy.

The dataset is smaller — Swarmia is a Helsinki-based company with hundreds of customers, not thousands. The product reflects that: focused, well-designed, narrower than LinearB.

Pick Swarmia if: you want defaults you don't have to configure, your team is already culture-aligned with their playbook, and you don't need on-prem.

PanDev Metrics — DORA + the layers Sleuth ignores

We're biased — we built it. The honest pitch: PanDev Metrics gives you Sleuth-equivalent DORA (with the 4-stage lead time breakdown Sleuth doesn't expose), plus IDE heartbeat telemetry, cost-per-feature analytics, and on-prem Docker / Kubernetes deployment.

The IDE layer is the real difference. Sleuth tells you "lead time is 3.2 days." PanDev tells you it's 3.2 days and that the median developer codes 1h 18m per day during that window (the number we measured across 100+ B2B teams). The second answer unlocks the question "where's the time going?"; the first doesn't.

Pick PanDev Metrics if: you need DORA and IDE-level activity, want cost-per-feature for the CFO conversation, or have a regulated-industry on-prem requirement.

Faros AI — enterprise data-lake mode

Faros is the heaviest option. AI-native, deeply customizable, designed for engineering organizations of 500+ developers with internal data teams. You don't buy Faros to "track DORA." You buy it to build a custom engineering data warehouse on top of their schema.

For most ex-Sleuth customers it's overkill. For Fortune 500 platform organizations with a dedicated MELT team, it's the right shape.

Pick Faros if: you have 500+ engineers, an internal data engineering team, and a budget that starts with "$200k".

Jellyfish — for board-level reporting

Jellyfish leans toward CFO and board reporting more than day-to-day engineering ops. Their strength is mapping engineering investment to business initiatives — "we spent 47% of engineering capacity on enterprise-tier features last quarter." DORA is in the product but it's not the headline.

Pick Jellyfish if: your buying committee includes a CFO or VP of Finance, and the reason you're switching off Sleuth is "engineering reports don't make sense to the rest of the leadership team."

Pricing reality (real budget numbers)

Annual list pricing for a 100-developer team, based on 2025-2026 quotes we've reviewed. Most vendors don't publish these — call this directional, not exact.

| Plan | Sleuth (renewal) | LinearB | Swarmia | PanDev Metrics | Faros AI | Jellyfish |
|---|---|---|---|---|---|---|
| Per-dev/month | $20 | $50-75 | $35-50 | $20-30 | $125-250 | $65-120 |
| 100-dev annual | $24k | $60-90k | $40-60k | $25-35k | $150-300k | $80-150k |
| Min seats | 10 | 25 | 25 | 10 | 100+ | 50 |
| On-prem | No | No | No | Yes | Hybrid | No |
| Trial available | Yes (14d) | Yes (14d) | Yes (14d) | Yes (30d) | No | Demo only |

The Sleuth column is a renewal estimate. Net-new Sleuth pricing has been moving since the acquisition; we've seen quotes 30-40% higher than 2023 levels.
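The annual row follows from the per-seat row by simple arithmetic, which makes it easy to sanity-check a quote. Here is a minimal Python sketch, assuming billing is per seat per month over 12 months and that a team below the minimum seat count is billed for the minimum. The function name `annual_cost` and the minimum-seat billing rule are illustrative assumptions, not any vendor's published terms.

```python
def annual_cost(per_dev_month, devs, min_seats=0):
    """Annual list cost in USD, assuming 12 monthly per-seat charges.

    Assumption: a team smaller than `min_seats` is billed for `min_seats`.
    """
    billed_seats = max(devs, min_seats)
    return per_dev_month * billed_seats * 12


# Sleuth renewal column from the table: $20 per dev per month, 100 devs.
print(annual_cost(20, 100, min_seats=10))  # 24000

# LinearB band: $50-75 per dev per month, 100 devs.
print(annual_cost(50, 100, min_seats=25), annual_cost(75, 100, min_seats=25))  # 60000 90000
```

Some bands in the table are rounded rather than exact products: $35 per dev per month for 100 developers works out to $42k, against the $40k low end shown for Swarmia.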

The contrarian take: don't switch yet if you only need DORA

This is unpopular advice from a competitor. But: if you exclusively use Sleuth for the four classic DORA metrics, deploy frequency tracking, and the LaunchDarkly integration — and your renewal price hasn't jumped — keep it for one more cycle.

The reason: every alternative on this list has a feature edge somewhere, but switching delivery-pipeline telemetry mid-year is genuinely painful. You re-baseline your DORA scores, you retrain your team on the new dashboards, and you lose 2-3 months of comparable historical data. Don't pay that cost unless the upgrade actually unlocks a workflow you can't build today.

The honest signal that it's time to move: when someone asks "what does our developer experience look like?" and Sleuth has no answer. That's when the DORA-only platform stops being enough — not before.

What our data can't tell you

Our IDE-heartbeat dataset covers 100+ B2B companies, mostly mid-market (50-500 engineers), heavy in EMEA and CIS. We don't have signal on FAANG-scale orgs or pure open-source contributors. The cost benchmarks above are list-price estimates from buyer conversations, not negotiated contract numbers — every vendor on this list will discount 20-40% on annual deals.
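To see what a 20-40% discount does to a list-price band, here is a small Python sketch. The function name `negotiated_range` is hypothetical. It applies the deepest discount to the low end of the band and the shallowest to the high end, which gives the widest plausible range rather than a prediction of any particular deal.

```python
def negotiated_range(list_low, list_high, min_discount=0.20, max_discount=0.40):
    """Return (lowest, highest) plausible annual price after discounting.

    Assumption: discounts apply uniformly to the whole list price.
    """
    lowest = list_low * (1 - max_discount)
    highest = list_high * (1 - min_discount)
    return lowest, highest


# LinearB list band from the pricing table: $60-90k for 100 developers.
low, high = negotiated_range(60_000, 90_000)
print(f"${low:,.0f} to ${high:,.0f}")  # $36,000 to $72,000
```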

DORA scores in our dataset also assume your team uses task IDs in branch names. If your team doesn't, the lead-time numbers in any of these tools (including ours) become unreliable. That's a workflow constraint, not a product limitation.
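A quick way to check whether your branches meet that assumption is to pattern-match the names yourself. Here is a minimal Python sketch, assuming Jira-style keys such as `PROJ-123`. The regex and the `extract_task_id` helper are illustrative, not how any vendor on this list actually parses branches.

```python
import re
from typing import Optional

# Assumed convention: uppercase project key, hyphen, digits (e.g. PROJ-123).
TASK_ID = re.compile(r"\b([A-Z][A-Z0-9]+-\d+)\b")


def extract_task_id(branch: str) -> Optional[str]:
    """Return the first task ID found in a branch name, or None."""
    match = TASK_ID.search(branch)
    return match.group(1) if match else None


# Audit a sample of branch names for task-ID coverage.
branches = ["feature/PROJ-123-add-login", "fix-typo", "PAY-9-refund-flow"]
linked = [b for b in branches if extract_task_id(b)]
print(f"{len(linked)}/{len(branches)} branches carry a task ID")  # 2/3 branches carry a task ID
```

If the share of linked branches is low, fix the naming convention before comparing lead-time numbers across tools.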

Decision framework in one paragraph

Sleuth was built when DORA was the frontier. The frontier moved. If you're an SRE-heavy platform team, LinearB is the cleanest swap. If you're culture-aligned with Helsinki-style opinionated tooling, Swarmia. If you need DORA and developer experience and cost data in one platform — and especially if you need on-prem — PanDev Metrics. If you're a Fortune 500 with a data team, Faros. If your problem is boardroom reporting, Jellyfish. The wrong move is renewing Sleuth on auto-pilot without asking which of those shapes you actually are.