Board of Directors: Engineering Review Questions

A Series-B board presentation went sideways in 2023 when a director, a former GitHub VPE, asked the CTO three questions in a row that she hadn't prepared for. She knew deployment frequency and team size. She didn't know median lead time, hiring velocity against plan, or engineering payroll as a share of operating burn. The board didn't defund engineering, but it added a quarterly engineering review with a different CTO on the call. The meeting became a test the team passed but the CTO didn't.

Boards are harder to prepare for than investors because they have more context and less patience. This is a question list — what a working board actually asks, what the CTO should bring without being asked, and the red flags an experienced director spots in 15 minutes. We collected it from conversations with CTOs who have presented successfully, CTOs who haven't, and two board directors who sit on engineering-heavy portfolios.

{/* truncate */}

What the board really needs to know

A board isn't the engineering team's manager. It acts as the engineering org's auditor and investor at once. At any time it is trying to answer three questions:

- Is engineering capacity being turned into product and revenue at a reasonable rate?

- Is the team well-run enough that a founder-departure wouldn't melt it?

- Are there named risks (key-person, security, regulatory) being managed or hidden?

Everything downstream follows from those three. Deployment frequency isn't a board metric — it's a diagnostic a board uses if they suspect the first question is failing. Lead time isn't a board metric either. Board metrics are one level higher and one level more financial.

Stanford's 2023 board-effectiveness study (David Larcker, Brian Tayan) found boards spent on average 18% of meeting time on technology oversight — but only 9% of that time actually explored engineering decisions. The rest was security updates and AI strategy talk. That 9% is the window to explain what's actually happening under the hood.

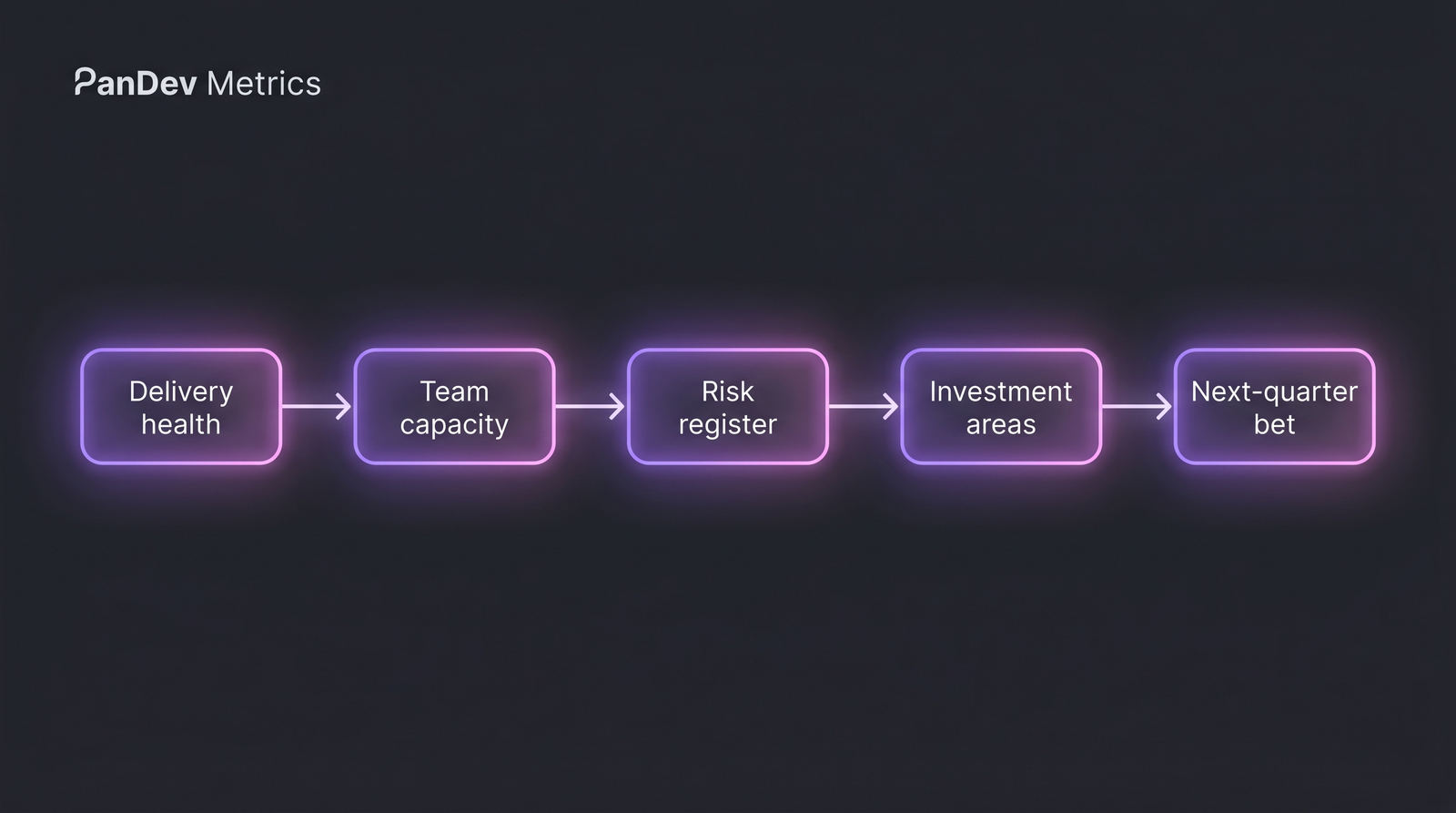

The board review is five linked conversations, not a data dump. Each answer earns you time for the next one.


The 12 questions every board should ask — and what the answer tells them

Category 1: Delivery health (are we shipping?)

1. What was your team's deployment frequency last quarter, trending which direction?

- Good answer: "We ship X per week on average, up 30% QoQ, because we consolidated three release trains."

- Red flag: "We ship... I'd have to check." CTO doesn't know = CTO isn't operating the factory.

2. What's your lead time for changes — commit to production — and where does it break down?

- Good: "Median 2.1 days. The tail is code review — P90 is 4.8 days, driven by two reviewers bottlenecked on a merger-integration rebuild."

- Red flag: no median, no P90, no named bottleneck. See our DORA implementation guide for the expected baseline.

3. What's your change failure rate and MTTR?

- Good: "CFR is 8% (below 15% industry norm per DORA 2024). MTTR 42 minutes. Rolling quarter."

- Red flag: "We don't track incidents by severity yet."
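The three delivery questions above reduce to a few aggregates over deploy and incident records. Here is a minimal sketch, assuming a hypothetical `Deploy` record (commit timestamp, production timestamp, failure flag) and a plain list of incident restore times in minutes. The record shape and function names are illustrative and not taken from any particular tool.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median, quantiles


@dataclass
class Deploy:
    committed_at: datetime  # hypothetical field: first commit of the change
    deployed_at: datetime   # hypothetical field: change reached production
    failed: bool            # change caused an incident, rollback, or hotfix


def lead_time_days(deploys):
    """Return (median, P90) commit-to-production lead time in days."""
    days = [(d.deployed_at - d.committed_at) / timedelta(days=1) for d in deploys]
    # quantiles(n=10) returns the nine decile cut points; the last one is P90.
    # It needs at least two data points on older Python versions.
    p90 = quantiles(days, n=10, method="inclusive")[-1]
    return median(days), p90


def change_failure_rate(deploys):
    """Share of deploys that caused a failure in production."""
    return sum(d.failed for d in deploys) / len(deploys)


def mttr_minutes(restore_minutes):
    """Mean time to restore, in minutes, over a list of incident durations."""
    return sum(restore_minutes) / len(restore_minutes)
```

Reporting the median and P90 together is what lets a CTO name the bottleneck: a wide gap between the two points at a slow tail, such as review queues, rather than a uniformly slow pipeline.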

Category 2: Team capacity (are we spending humans well?)

4. What is engineering as a percentage of total operating burn, trending?

- Good: "Engineering is 62% of burn, held flat QoQ while output metrics are up 18%."

- Red flag: "I'll have to check with finance." Engineering + finance alignment is a core maturity signal.

5. Hiring plan vs actuals — what's your close rate on offers?

- Good: "We planned 14 hires, closed 11. Offer acceptance dropped from 68% to 52% — tied to the comp band lag, proposal coming."

- Red flag: hiring numbers without acceptance rate or time-to-fill.

6. What's your engineering utilization: billable or revenue-generating work vs internal platform vs support vs KTLO (keep the lights on)?

- Good: "58% feature work, 22% platform, 12% support, 8% KTLO. KTLO was 14% last quarter, we reduced by killing two legacy services."

- Red flag: single number ("we're 90% productive"). That number is always wrong.
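The capacity answers are ratios, and it helps to compute them the same way every quarter so the trend is comparable. Below is a rough sketch under stated assumptions: the function names are hypothetical, costs come from finance in one currency, and work hours are already tagged by category (feature, platform, support, KTLO).

```python
def engineering_share_of_burn(engineering_cost, total_operating_burn):
    """Engineering spend as a fraction of total operating burn."""
    return engineering_cost / total_operating_burn


def plan_attainment(hires_closed, hires_planned):
    """Fraction of the hiring plan that was actually closed."""
    return hires_closed / hires_planned


def offer_acceptance_rate(offers_accepted, offers_extended):
    """Fraction of extended offers that candidates accepted."""
    return offers_accepted / offers_extended


def utilization_breakdown(hours_by_category):
    """Convert hours per work category into percentage shares."""
    total = sum(hours_by_category.values())
    return {
        category: round(100 * hours / total, 1)
        for category, hours in hours_by_category.items()
    }


# Illustrative hours only, chosen to reproduce the split quoted in question 6.
shares = utilization_breakdown(
    {"feature": 580, "platform": 220, "support": 120, "ktlo": 80}
)
```

Returning the full breakdown instead of a single productivity figure is deliberate: the per-category shares are what show whether KTLO is shrinking or growing.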

Category 3: Risk register

7. What's your key-person concentration risk?

- Good: "Three systems have bus factor 1. Migration plan in place, done by Q3. Documented in our onboarding process."

- Red flag: "Everyone can fill in for everyone" — always a lie.

8. Top 3 security risks you're tracking, not the ones that made the news?

- Good: specific CVEs, specific third-party dependencies, specific pending pentests with known findings.

- Red flag: a tour of SOC 2 control names. That's a product security conversation, not an engineering risk conversation.

9. What's your incident pattern over the last 12 months — severity, root cause clusters?

- Good: "7 Sev-1s, 4 tied to a database hot-spot we've remediated, 2 to third-party outages we've since added fallback for, 1 human error in deploy that led to the freeze-window policy."

- Red flag: an incident count with no pattern recognition.
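Two of the risk answers above can be derived from data most teams already have. The sketch below assumes a hypothetical mapping of systems to the people who can maintain them, and a list of `(severity, root_cause)` pairs exported from an incident tracker. Treating "one maintainer" as bus factor 1 is a simplification; real ownership is usually fuzzier.

```python
from collections import Counter


def bus_factor_one(maintainers_by_system):
    """Return the systems that only one person can maintain, sorted by name."""
    return sorted(
        system
        for system, people in maintainers_by_system.items()
        if len(set(people)) == 1
    )


def root_cause_clusters(incidents, severity=1):
    """Count incidents of the given severity per root cause, largest first."""
    causes = Counter(cause for sev, cause in incidents if sev == severity)
    return causes.most_common()
```

The clustered output is the "pattern" the board is asking for: a count per root cause makes it obvious which remediation retired the most incidents.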

Category 4: Investment and strategy

10. If I gave you 3 more senior engineers today, where would they go and what would we measure in 90 days?

- Good: specific team, specific problem, specific metric (e.g. "reliability engineering, reducing database hot-spots, target MTTR down from 42min to <30min").

- Red flag: "I'd distribute them across teams." That's not a plan; it's a placement.

11. What's our engineering "stop doing" list?

- Good: two legacy services being deprecated, one internal tool being killed, one framework migration we've paused.

- Red flag: no such list. Engineering orgs with no "stop doing" list are accumulating work without ever subtracting any.

12. What's the one thing your team would do differently if the company were 3× the size?

- Good: honest answer that shows CTO is thinking two steps ahead (e.g. "centralize SRE; today each product team has a part-time SRE and it won't scale").

- Red flag: "We're ready for scale." You're not. Nobody is.

The 5-slide deck that earns more time

Slide 1: The single number that summarizes engineering output (your choice — deployment frequency, lead time, roadmap % hit) trending over 4 quarters.

Slide 2: The delivery scorecard — DORA metrics with one-line commentary each.

Slide 3: The capacity table — headcount by team, % of headcount on feature vs platform vs support, hiring plan vs actuals.

Slide 4: The risk register — top 5 risks, owner, mitigation, due date. Include one risk you can't yet mitigate so you look honest.

Slide 5: The ask — one specific thing you need from the board this quarter (a hire, an exception, a policy sign-off). If you don't have an ask, you're not using your board.

Don't exceed 5 slides. Every extra slide dilutes the attention window. Board time is scarcer than any budget line item.

Red flags experienced directors spot in 15 minutes

| Signal | What it suggests |
|---|---|
| CTO says "we're about to start tracking X" | The metric was asked about last quarter too |
| Engineering headcount up 40% YoY, output metrics flat | Classic engineering-scale trap (Brooks's Law) |
| Every incident is attributed to external causes | Team isn't owning its own reliability |
| Productivity measured in commits, PRs, or LoC | CTO is measuring activity, not output |
| No explicit "stop doing" list | Org is adding work without subtracting |
| Engineering roadmap has no "platform" allocation | Feature debt accumulating, predicts future slowdown |
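The headcount row in the table is simple arithmetic, and it is worth running before a director does. This is a small sketch with a hypothetical helper name; growth rates are passed as fractions (0.40 for 40%).

```python
def output_per_engineer_change(headcount_growth, output_growth):
    """Relative change in output per engineer, given growth rates as fractions."""
    return (1 + output_growth) / (1 + headcount_growth) - 1


# Headcount up 40% with flat output: each engineer now accounts for
# roughly 29% less output than a year earlier.
change = output_per_engineer_change(0.40, 0.0)
```

A negative result is not proof of a problem on its own, since new hires take time to ramp up, but it is the number the CTO should be ready to explain.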

How a CTO should prepare

Two to three days before the meeting, run through these:

- Have every DORA number ready in two forms: median and P90. Directors with engineering backgrounds ask for the tail. Directors without will accept the median.

- Have one honest weakness prepared. "The area I'd invest more in" or "the risk I'm least sure about." This disarms the director who's looking for blind spots.

- Practice the 30-second version of each answer. Boards are patient for 90 seconds, impatient after. If the answer takes 3 minutes, you haven't internalized it.

- Bring one specific ask. Boards exist to authorize things the management team can't authorize alone — comp bands, org changes, major hires, strategy pivots. Don't waste the meeting.

How PanDev Metrics fits a board-review prep cycle

Two direct uses:

Weekly CTO dashboard. Our CTO dashboard rolls up delivery, team health, and cost metrics into a one-page view that doesn't require an analyst. That becomes slide 2 of the board deck without a week of data wrangling. Several of our enterprise customers run the dashboard during the board call itself.

Cost-per-feature for the capacity slide. Boards want dollar signs. Our cost-per-feature calculation (from tracked time × hourly rate, across 100+ B2B customers) turns "we shipped Feature X" into "we shipped Feature X for $48k, compared to last quarter's average of $61k." That's a number boards can use.
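The "tracked time × hourly rate" idea can be sketched in a few lines. This is an illustration of the arithmetic only, not PanDev Metrics' actual implementation: the entry format, the names, and the use of a single loaded hourly rate per engineer are all assumptions.

```python
from collections import defaultdict


def cost_per_feature(time_entries, hourly_rates):
    """Sum tracked hours x hourly rate per feature.

    time_entries: iterable of (feature, engineer, hours) tuples (assumed shape)
    hourly_rates: mapping of engineer -> loaded hourly rate (assumed input)
    """
    totals = defaultdict(float)
    for feature, engineer, hours in time_entries:
        totals[feature] += hours * hourly_rates[engineer]
    return dict(totals)


# Illustrative data only.
entries = [
    ("Feature X", "ana", 300),
    ("Feature X", "bo", 200),
    ("Feature Y", "ana", 100),
]
rates = {"ana": 100.0, "bo": 90.0}
costs = cost_per_feature(entries, rates)
```

With these made-up inputs, Feature X comes to 48,000 and Feature Y to 10,000 in whatever currency the rates use. The quarter-over-quarter comparison is then an average over the same dictionary for each period.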

We're not the only way to do this, but we're one of the few tools that pulls this together from IDE telemetry rather than self-report. The numbers resist inflation because developers aren't filling timesheets; the data comes from the editor.

The honest limit

Our dataset is skewed toward 50-500 engineer organizations across KZ, UZ, RU, US, SG, and EU. Boards of public companies operate differently — they have formal audit committees, SEC disclosure schedules, and governance patterns that this article doesn't cover. The 12 questions above come from private-company boards at Seed to late-Growth stage. If you're preparing for a FAANG-adjacent public-company board seat, the mechanics are similar but the formality is not.

The sharp claim

Engineering board reviews fail in the prep, not the meeting. A CTO who can answer any of these 12 questions in 60 seconds has already done the organizational work to deserve the answer. A CTO who is scrambling for numbers the night before is confessing that the operating rhythm isn't running. Boards don't care about your metrics; they care whether you know your metrics.

Related reading

- CTO Dashboard: What to Show at Your Weekly — the weekly feed that makes board prep easy

- Implement DORA Metrics in 2 Weeks — the baseline numbers boards expect

- Team Size and Productivity: Brooks's Law — for the "why is output flat at 40% more headcount" question

- New Developer Onboarding Ramp — the key-person-risk mitigation story

- External: Stanford Rock Center on Board Effectiveness — Larcker/Tayan research on tech oversight