CFO's Guide to Engineering Metrics: What to Ask and Why

A CFO usually sees engineering on one line of the P&L: salaries. A headcount column, a loaded-cost multiplier, a big number growing faster than revenue. That's it. Deloitte's 2024 Global Technology Leadership Study put the gap at its starkest: only 31% of CFOs said they could tell whether their engineering investment was producing returns proportionate to cost. The other 69% were flying blind on roughly the largest discretionary spend in the company.

This is not a tooling problem. It's a question problem. The numbers exist. Most CFOs just haven't learned which five questions extract them.

{/* truncate */}

What the CFO really needs to know

Engineering is not a cost center. It's a portfolio of bets with different risk-return profiles. Maintenance work is a bond: steady, boring, non-negotiable. New product work is venture: variable return, binary outcome. Platform investment is infrastructure debt financing: you pay now to lower future unit costs.

A CFO who can't see which slice of the engineering spend is going into which bucket is running a portfolio with no asset allocation report.

McKinsey's 2023 Developer Velocity research made the financial frame explicit: top-quartile engineering organizations produce 4–5x the revenue per engineer of bottom-quartile. The spread doesn't come from hiring cheaper. It comes from knowing what the money did.

| Common CFO mental model | What the CFO actually needs |
|---|---|
| "Engineering is salaries + SaaS" | Engineering is a portfolio split across new-build, maintenance, platform, security |
| "Cost per engineer" | Cost per feature, cost per maintenance-hour, cost per SRE-hour |
| "Are we spending more than peers?" | Are we spending proportionally to our growth targets and commit-to-production velocity? |
| "Headcount increase = more output" | Marginal-output curve flattens hard past team-of-8 (Brooks's Law, well documented in real IDE data, see our Brooks study) |

The first column reads Excel. The second column reads the business.

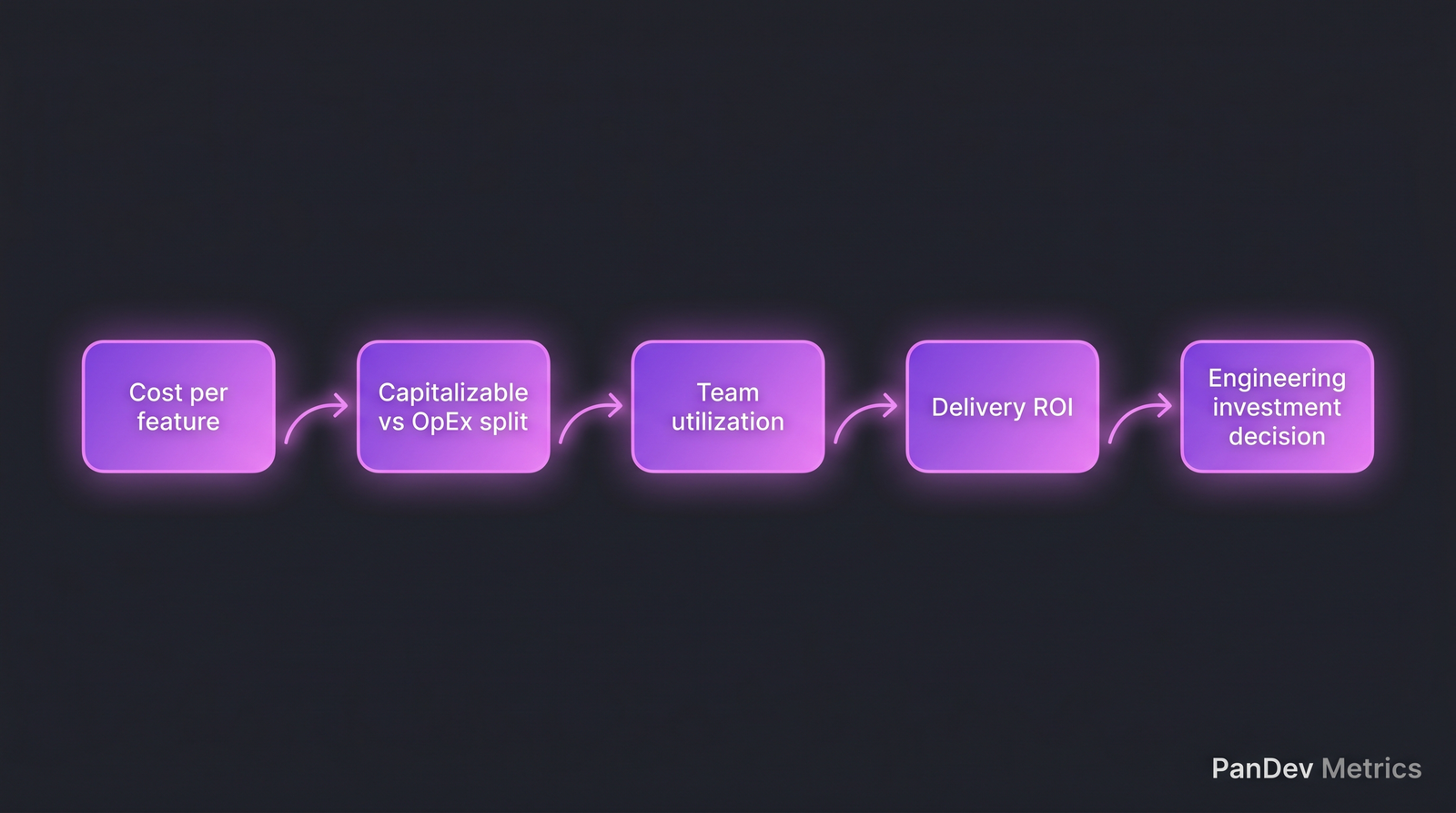

The 5 questions a CFO should ask

1. What's our cost per feature, and how has it moved quarter-over-quarter?

The single most CFO-native metric in engineering. Loaded hourly engineering cost × actual hours tracked on the feature = real cost of building it.

Most companies can't produce this number. They produce "number of engineers assigned × loaded cost × calendar duration," which is off by 2–5x because it charges the feature for context-switched time that actually went to other work.

Direct IDE-based tracking fixes this. Our own customer data shows cost per feature on tracked engineering projects comes in 45–70% lower than the calendar-time estimate, because a lot of "working on feature X" is actually interruption, meetings, or parallel-project tax.
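
The difference between the two calculations can be sketched in a few lines of Python. This is a minimal illustration, not a description of any vendor's implementation: the `TimeEntry` schema, the engineer and feature names, the rates, and the 40-hour week are all assumptions made up for the example.

```python
from dataclasses import dataclass


@dataclass
class TimeEntry:
    """One tracked block of work (hypothetical schema)."""
    engineer: str
    feature: str
    hours: float


def cost_per_feature(entries, loaded_hourly_rate):
    """Tracked cost: hours actually logged on a feature x loaded hourly rate."""
    totals = {}
    for e in entries:
        cost = e.hours * loaded_hourly_rate[e.engineer]
        totals[e.feature] = totals.get(e.feature, 0.0) + cost
    return totals


def calendar_estimate(engineers, weeks, loaded_hourly_rate, hours_per_week=40.0):
    """Naive cost: everyone assigned, full time, for the whole calendar span."""
    return sum(loaded_hourly_rate[name] * hours_per_week * weeks for name in engineers)


# Illustrative numbers only, not taken from any dataset.
rates = {"ana": 100.0, "ben": 120.0}
entries = [
    TimeEntry("ana", "sso-login", 60.0),
    TimeEntry("ben", "sso-login", 45.0),
    TimeEntry("ana", "csv-export", 20.0),
]

tracked = cost_per_feature(entries, rates)
naive = calendar_estimate(["ana", "ben"], weeks=3, loaded_hourly_rate=rates)

print(f"tracked cost of sso-login: ${tracked['sso-login']:,.0f}")
print(f"calendar-time estimate:    ${naive:,.0f}")
```

With these made-up inputs the tracked cost of `sso-login` is $11,400 against a calendar-time estimate of $26,400, a gap of roughly 2.3x.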

Target movement: cost per feature of similar complexity should trend flat or down over 4 quarters. Rising = scope creep, process bloat, or increasing technical debt.

2. What's the capitalizable vs. operating expense split of our engineering spend?

In many jurisdictions, engineering work on new functionality can be capitalized, while maintenance and research cannot. Under US GAAP the relevant guidance is ASC 350-40 for internal-use software and ASC 985-20 for software to be sold or licensed externally; under IFRS it is IAS 38. Large engineering orgs that get this right are quietly improving net income by classifying accurately.

The split you want to see:

| Category | Typical range | What drives it |
|---|---|---|
| Capitalizable new-build | 35–55% | Feature work, new product surfaces |
| Maintenance / OpEx | 25–40% | Bug fixes, support, small improvements |
| Platform / infra | 10–20% | Capitalizable if it supports new internal-use software |
| Research / OpEx | 5–10% | Exploratory, not-yet-committed work |

The mistake: eyeballing this split from Jira labels. The honest version comes from actual time spent per task, tied to task-type classification. That's where IDE heartbeat + task-tracker integration pulls its weight. PanDev Metrics does exactly this join: tracked coding time per task, classified by task type, rolled up by month.

Target: no category swings by more than 10 percentage points quarter-to-quarter without a known driver. Sudden swings usually mean classification was sloppy, not that the business changed.
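
A rough sketch of the roll-up and the swing check described above, in Python. The task-type names, the bucket mapping, and the sample hours are hypothetical; the real mapping depends on your accounting policy and your auditor's guidance, and this is not a description of how any specific tool performs the join.

```python
from collections import defaultdict

# Hypothetical mapping from task type to accounting bucket.
BUCKET_BY_TASK_TYPE = {
    "feature": "capitalizable_new_build",
    "bug": "maintenance_opex",
    "support": "maintenance_opex",
    "platform": "platform_infra",
    "spike": "research_opex",
}


def spend_split(tasks):
    """Return each bucket's share of tracked cost, in percent.

    `tasks` is an iterable of (task_type, tracked_hours, loaded_hourly_rate).
    """
    cost = defaultdict(float)
    for task_type, hours, rate in tasks:
        cost[BUCKET_BY_TASK_TYPE[task_type]] += hours * rate
    total = sum(cost.values())
    if total == 0:
        return {}
    return {bucket: 100.0 * amount / total for bucket, amount in cost.items()}


def flag_swings(previous, current, threshold_pp=10.0):
    """List buckets whose share moved by more than `threshold_pp` points."""
    buckets = set(previous) | set(current)
    return sorted(
        b for b in buckets
        if abs(current.get(b, 0.0) - previous.get(b, 0.0)) > threshold_pp
    )


# Illustrative quarters: same total hours, different mix.
q1 = spend_split([("feature", 450, 100.0), ("bug", 300, 100.0),
                  ("platform", 150, 100.0), ("spike", 100, 100.0)])
q2 = spend_split([("feature", 300, 100.0), ("bug", 450, 100.0),
                  ("platform", 150, 100.0), ("spike", 100, 100.0)])

print(q1)
print(flag_swings(q1, q2))
```

In this example new-build drops from 45% to 30% and maintenance rises from 30% to 45%, so both buckets are flagged for an explanation.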

3. What's our utilization, and how multi-project are our engineers?

Utilization in engineering isn't about billable hours (that's consulting). It's about focused engineering time as a share of working time, and about how fragmented that time is across projects.

Two sub-questions:

- What % of a working week is spent in the IDE vs. meetings, Slack, admin? Median in our dataset across B2B companies: 3 hours 15 minutes per day on coding work, with heavy variance by role. Less than 2 hours/day across a team is a red flag. More than 5 hours/day is a burnout flag.

- How many distinct projects does each engineer touch per week? Our research on context switching shows the productivity tax hits a step-function between 2 and 3 parallel projects. A developer on 3+ projects delivers roughly 40% less than one on 1–2, measured in actual output, not self-report.

Red flag for a CFO: a company where 40%+ of engineers are on 3+ projects is a company bleeding 15–25% of its engineering payroll to coordination overhead. That's a real number, not a metaphor.
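
The arithmetic behind these flags is simple enough to sketch. The Python below uses the thresholds quoted above (2 and 5 hours/day, 3+ projects, a roughly 40% output penalty); the function names, the sample team, and the assumption that the penalty applies uniformly to evenly distributed payroll are illustrative simplifications.

```python
def focus_flag(avg_coding_hours_per_day):
    """Classify a team's average daily coding time against the two thresholds."""
    if avg_coding_hours_per_day < 2.0:
        return "low-focus"
    if avg_coding_hours_per_day > 5.0:
        return "burnout-risk"
    return "ok"


def multi_project_share(projects_by_engineer, threshold=3):
    """Fraction of engineers touching `threshold` or more projects in a week."""
    if not projects_by_engineer:
        return 0.0
    heavy = sum(
        1 for projects in projects_by_engineer.values()
        if len(projects) >= threshold
    )
    return heavy / len(projects_by_engineer)


def payroll_lost_to_switching(share_on_3_plus, output_penalty=0.40):
    """Rough share of payroll absorbed by switching overhead.

    Assumes the penalty hits everyone on 3+ projects equally and that payroll
    is spread evenly across engineers. Both are simplifications.
    """
    return share_on_3_plus * output_penalty


# Hypothetical week for a five-person team.
week = {
    "ana": {"billing", "search", "mobile"},
    "ben": {"billing"},
    "cy": {"search", "mobile"},
    "dee": {"billing", "search", "mobile", "infra"},
    "eli": {"infra"},
}

share = multi_project_share(week)
lost = payroll_lost_to_switching(share)
print(f"{share:.0%} of engineers on 3+ projects, about {lost:.0%} of payroll lost")
print(focus_flag(3.25))
```

With 40% of engineers on 3+ projects and a 40% penalty, the estimate lands at about 16% of payroll, which is the low end of the 15–25% range.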

4. What's our ROI on the last major technical-debt initiative?

Every CFO signs off on refactoring budget at some point. Almost none go back to measure whether the investment paid off.

The measurement is not hard. Before and after the refactor:

- Median cycle time for features touching that area of the codebase

- Incident/rollback rate in the affected service

- Engineer-hours per feature of equivalent complexity

A Deloitte 2024 study on technical debt pegged the median ROI on tracked refactor initiatives at 2.7x within 18 months, but "tracked" is the operative word. Untracked refactors have a 50/50 ROI distribution that rounds to "we don't know".

If your engineering leader can't tell you cycle-time-before vs. cycle-time-after on their last tech-debt push, the tech debt wasn't really an investment. It was a feeling.
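
A before/after comparison of this kind might look like the following Python sketch. The sample cycle times are invented, and `refactor_roi` is only one possible definition (engineer-hours saved on later features, priced at the loaded rate, divided by what the refactor cost); it is not the method used in the study cited above.

```python
from statistics import median


def relative_change(before, after):
    """Fractional change from the median of `before` to the median of `after`."""
    baseline = median(before)
    return (median(after) - baseline) / baseline


def refactor_roi(hours_per_feature_before, hours_per_feature_after,
                 features_shipped_since, loaded_hourly_rate, refactor_cost):
    """Value of engineer-hours saved since the refactor, divided by its cost."""
    hours_saved = (hours_per_feature_before - hours_per_feature_after) * features_shipped_since
    return hours_saved * loaded_hourly_rate / refactor_cost


# Illustrative cycle times (days) for features touching the refactored area.
cycle_days_before = [6.0, 9.0, 4.0, 8.0, 7.0]
cycle_days_after = [3.0, 5.0, 4.0, 6.0, 3.5]

change = relative_change(cycle_days_before, cycle_days_after)
roi = refactor_roi(
    hours_per_feature_before=120.0,
    hours_per_feature_after=90.0,
    features_shipped_since=20,
    loaded_hourly_rate=100.0,
    refactor_cost=40_000.0,
)

print(f"median cycle time change: {change:+.0%}")
print(f"ROI so far: {roi:.1f}x")
```

The same `relative_change` helper can be applied to incident or rollback counts per month, so all three before/after measures come from one function.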

5. What's the burnout risk on our critical-person concentration?

This is the question CFOs most often skip and most often regret. Every engineering org has 2–5 people whose departure would cost 6+ months and 2–3 replacement hires to recover from. The cost of one of those leaving unexpectedly, across loaded replacement hire + onboarding + opportunity cost, typically runs $300K–$1M per head.

Burnout signals show up in the data 6–12 weeks before resignation: after-hours work spikes, weekend commits rising, vacation days under-used, context-switching ratio climbing. Our burnout-signals article documents the five patterns.

For a CFO this is a risk-management metric. You track it the same way you'd track concentration risk in a supplier base. Not knowing is the expensive option.
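
Two of the signals listed above, weekend commits and after-hours work, can be approximated from commit timestamps alone. The Python sketch below assumes timestamps are already in each engineer's local time and treats 09:00–18:00 on weekdays as working hours; both are assumptions, and the sample dates are invented.

```python
from datetime import datetime


def weekend_share(timestamps):
    """Fraction of commits made on Saturday or Sunday."""
    if not timestamps:
        return 0.0
    return sum(1 for t in timestamps if t.weekday() >= 5) / len(timestamps)


def after_hours_share(timestamps, start_hour=9, end_hour=18):
    """Fraction of weekday commits outside [start_hour, end_hour) local time."""
    weekday = [t for t in timestamps if t.weekday() < 5]
    if not weekday:
        return 0.0
    late = sum(1 for t in weekday if not (start_hour <= t.hour < end_hour))
    return late / len(weekday)


# Hypothetical commit times for one engineer in two different weeks.
earlier = [
    datetime(2024, 3, 4, 10, 0),   # Monday
    datetime(2024, 3, 5, 14, 0),   # Tuesday
    datetime(2024, 3, 6, 11, 0),   # Wednesday
    datetime(2024, 3, 7, 16, 0),   # Thursday
]
recent = [
    datetime(2024, 3, 11, 22, 0),  # Monday, late evening
    datetime(2024, 3, 12, 10, 0),  # Tuesday
    datetime(2024, 3, 16, 13, 0),  # Saturday
    datetime(2024, 3, 17, 20, 0),  # Sunday
]

for label, commits in (("earlier", earlier), ("recent", recent)):
    print(label, weekend_share(commits), after_hours_share(commits))
```

What matters is the trend per person over several weeks rather than any single week's value, and a rising trend is a prompt for a conversation, not a verdict.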

Five questions in a sequence. Each answer is a number you can act on at the next finance review.

Red flags in how engineering reports to finance

Watch for these patterns in what your engineering org sends you. They indicate the dashboard is decorative, not diagnostic:

- Story points in the board report. Story points are an internal planning unit, not a finance metric. They're unitless and uncalibrated across teams. If your eng VP is reporting story-point velocity to you, they're dressing up "we worked hard".

- Burn-down charts as the primary visual. Burn-down shows you if sprint goals got hit. It says nothing about whether the work was worth doing.

- "Engineering productivity" as a single number. Productivity in engineering is a vector, not a scalar. Anyone handing you one number is compressing information you need.

- No cost per feature, only "we spent $X on engineering this quarter." Without per-feature cost, you can't price a feature, size an investment, or negotiate a product tradeoff.

- Missing replacement-cost and burnout numbers. If your engineering org reports on hiring but never on retention risk, they've optimized for the easier side of the ledger.

How to act on this

Immediate (this month)

- Ask for cost per feature on the last 3 shipped features. If the engineering leader can't produce it in a week, you have a measurement gap.

- Ask for the CapEx/OpEx split by actual tracked hours, not by Jira labels.

Short-term (this quarter)

- Bring the VP Engineering into quarterly FP&A review as a peer, not a line item.

- Add cost-per-feature and utilization to the CFO's monthly dashboard, replacing any "story points delivered" line.

- Set a concentration-risk review: who are the 5 people we can't afford to lose, and what do their burnout signals look like?

Long-term (this year)

- Move engineering reporting from "how much we spent" to "what we got per dollar." Align with how the board already thinks about S&M and R&D ROI.

- Normalize engineering review cadence to quarterly, not annual. Engineering moves faster than annual planning can track.

Metrics framework for a CFO

| Metric | What it measures | Target / benchmark |
|---|---|---|
| Cost per feature | Hourly loaded cost × actual tracked hours per feature | Flat or decreasing QoQ for similar complexity |
| CapEx / OpEx split | % of engineering cost capitalizable vs. expensed | CapEx 35–55%, stable |
| Engineering utilization | Coding time as % of working time | 35–50% of working hours (remainder is meetings + admin, which is normal) |
| Multi-project ratio | % engineers on 3+ parallel projects | Under 30% |
| Feature ROI | Revenue or cost-saving attributable to feature ÷ cost to build | Tracked on top-5 features per year |
| Concentration risk | # engineers whose exit costs >$500K loaded | Known list; burnout signals watched |
| Cycle time (commit → deploy) | Median days for code change to reach production | Under 3 days for high-performing, under 1 week baseline |

The uncomfortable finding

We asked 40 CFOs in mid-market B2B SaaS which of these seven metrics they could produce in under 24 hours. The median was two. The mode was one (cost per engineer). Zero CFOs could produce all seven without a 2-week project. That's not a criticism of CFOs. It's a reflection of how badly engineering has served its finance stakeholders for a decade. The gap is closing now, and the CFOs closing it fastest are the ones asking the 5 questions above every month.

Honest limit

Our own dataset skews B2B SaaS with 10–500 engineers. Enterprise CFOs with 2,000+ engineers will need additional segmentation layers we don't yet have reference benchmarks for. The questions are the same; the reference ranges broaden.