Code Review Checklist: 11 Rules That Cut Review Time in Half

Right now, your team has pull requests stuck in review. Probably three or more. One's been sitting for five days. A 2018 study from Google's engineering productivity group (Sadowski et al., Modern Code Review: A Case Study at Google) found that the median review at Google completes in less than 4 hours. In most teams we see, that number is 4 days — a 24× gap explained almost entirely by process, not talent.

This is a checklist of 11 rules that cut review time in half without reducing quality. Each is backed by external research, refined against real engineering-team data, and structured into three phases: author discipline, reviewer discipline, team discipline.

{/* truncate */}

Why most code review fails today

Three patterns appear across teams with slow review cycles:

Pattern 1: Oversized PRs. SmartBear's State of Code Review research found defect detection effectiveness falls off sharply once a diff exceeds ~400 lines. Above 400, reviewers skim. Above 1,000, they rubber-stamp. Most teams we measure have a median PR size between 350 and 600 lines, right at the edge of the cliff.

Pattern 2: Context reconstruction. Microsoft Research (Bacchelli & Bird, 2013, Expectations, Outcomes, and Challenges of Modern Code Review) found reviewers spend up to 30% of review time just figuring out what the PR is trying to do. A weak PR description is the single biggest time-sink in review.

Pattern 3: Review-as-tax mentality. Reviewers treat reviews as an interruption rather than scheduled work. The result: context-switching penalties compound. A reviewer opens the PR, reads 20 lines, gets pinged in Slack, closes the tab, and returns three hours later.

The 11 rules below attack all three patterns at once.

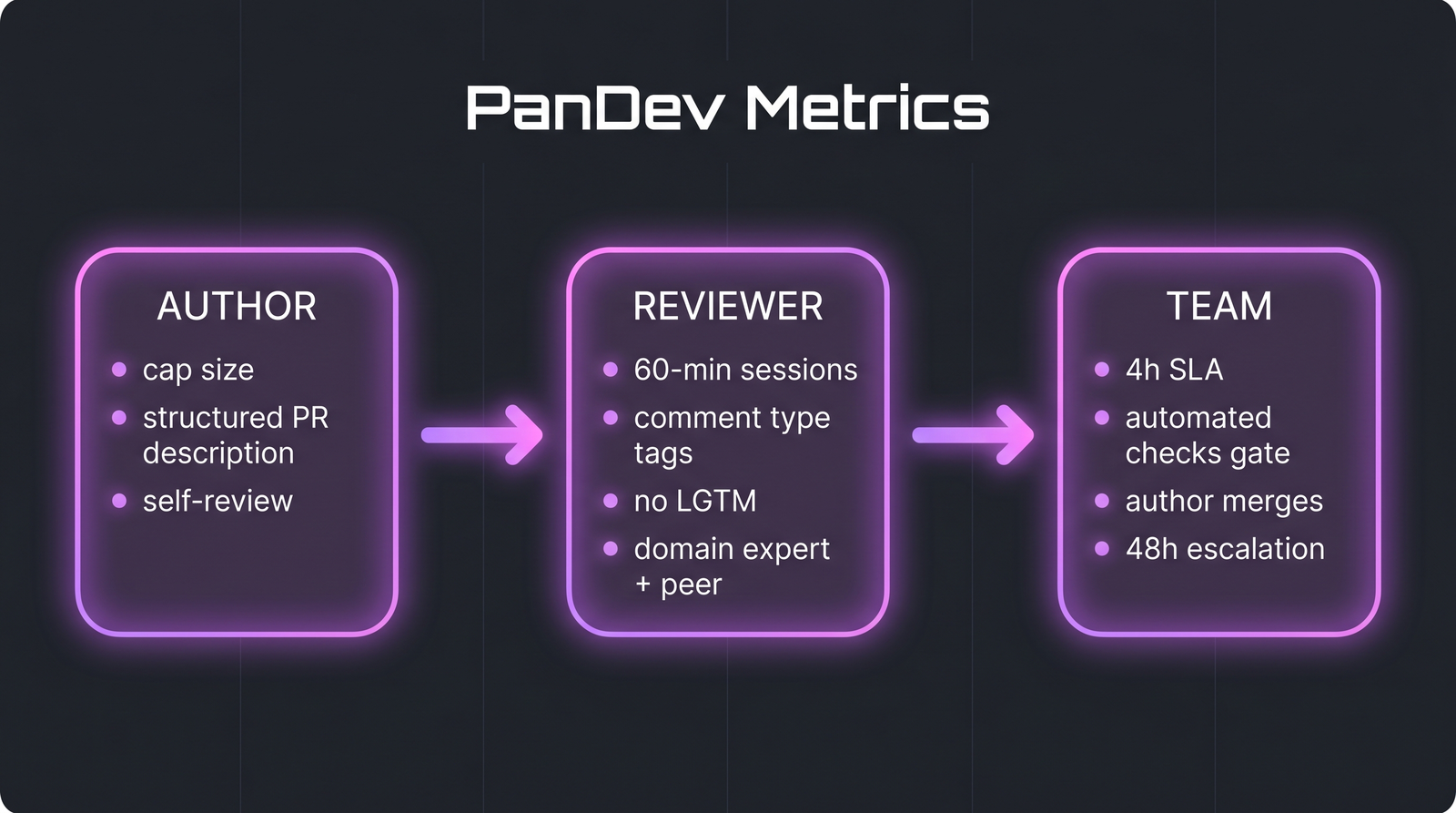

The framework in one picture: 3 author rules, 4 reviewer rules, 4 team rules. Each phase targets a different failure mode.


Phase 1: Author discipline

The author controls 70% of review speed. If they do these three things, reviewers have a realistic shot at fast turnaround.

Rule 1: Cap PR size at 400 lines of diff

Hard limit. SmartBear's data shows defect detection per hour peaks at 200-400 lines and drops fast after that. A 1,200-line PR doesn't get 3× the review of a 400-line one. It gets just 1/3. Split it.

| PR size (lines) | Typical outcome |
|---|---|
| Under 100 | Reviewed in minutes; 90%+ comments are substantive |
| 100-400 | Optimal zone; reviewer stays engaged end-to-end |
| 400-1000 | Reviewer skims second half; misses 40%+ issues |
| Over 1000 | Rubber-stamp approval; no meaningful review |

Exception: generated code, config files, and pure test-additions don't count toward the 400. Count files where a human actually wrote new logic.
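A minimal sketch of how the 400-line cap with its exceptions could be checked automatically. The exclusion patterns, the `(path, added, deleted)` input shape, and the choice to count added plus deleted lines are all assumptions for illustration; adapt them to your repository.

```python
from fnmatch import fnmatch

# Hypothetical exclusion patterns for generated code, config, and tests.
# These are illustrative only; adjust them to your repository layout.
EXCLUDED_PATTERNS = [
    "*.lock",
    "*.generated.*",
    "*.yaml",
    "*.yml",
    "*.json",
    "tests/*",
    "*_test.py",
]

MAX_COUNTED_LINES = 400  # the Rule 1 cap


def counted_lines(changes):
    """Sum added + deleted lines for files that match no exclusion pattern.

    `changes` is an iterable of (path, added, deleted) tuples, for example
    parsed from `git diff --numstat` output.
    """
    total = 0
    for path, added, deleted in changes:
        if any(fnmatch(path, pattern) for pattern in EXCLUDED_PATTERNS):
            continue  # generated, config, or test file: not counted
        total += added + deleted
    return total


def exceeds_cap(changes, cap=MAX_COUNTED_LINES):
    """True when the human-written part of the diff is over the cap."""
    return counted_lines(changes) > cap
```

Run as a CI step, a check like this can warn or fail before a reviewer is assigned, which keeps the size conversation out of the review itself.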

Rule 2: Write a structured PR description

Every PR description answers three questions, in this order:

- What changed? (one paragraph)

- Why? (link to the ticket; we use the `feature/TASK-324` branch naming convention so Jira auto-links)

- How to verify? (exact steps, screenshots, or test output)

If the reviewer can't answer "what is this supposed to do?" in 30 seconds, the description is broken.
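The three-question structure can be checked mechanically before a reviewer is ever pinged. The sketch below assumes the description uses the labels "What changed", "Why", and "How to verify" at the start of a line (as headings, bullets, or bold text); that labeling is an assumption, not something the rule prescribes.

```python
import re

# Assumed section labels, taken from the three questions above.
REQUIRED_SECTIONS = ("What changed", "Why", "How to verify")


def missing_sections(description):
    """Return the required labels that never appear at the start of a line."""
    missing = []
    for label in REQUIRED_SECTIONS:
        # Allow markdown heading, bullet, or bold markers before the label.
        pattern = rf"^[#*\-\s]*{re.escape(label)}\b"
        if not re.search(pattern, description, flags=re.IGNORECASE | re.MULTILINE):
            missing.append(label)
    return missing
```

This only verifies that the sections exist. Whether the "How to verify" steps are actually usable is still a judgment call for the author during self-review.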

Rule 3: Self-review before requesting others

Open your own PR. Read the diff as if a stranger wrote it. Bacchelli & Bird's research found authors who self-review catch ~30% of the issues that would otherwise land with a reviewer. The time cost is 5-10 minutes; the savings are a full review cycle.

Phase 2: Reviewer discipline

Reviewers control quality. These four rules prevent the silent quality decay that creeps in as PRs pile up.

Rule 4: Limit review sessions to 60 minutes

Cognitive fatigue is real. SmartBear's data shows that after 60 minutes of focused review, defect detection rates drop by more than half. If a PR needs more than an hour, either split it (see Rule 1) or schedule a second session with a break.

Rule 5: Tag every comment with a severity level

Three levels only:

- `must-fix`: blocks merge

- `should-fix`: worth doing, not a blocker

- `nit`: preference, safe to ignore

Without tags, authors treat every comment as a blocker. Teams that adopt this convention report review turnaround improvements of 30-40% in the first month.
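One way to make the tags machine-readable is to require them as a prefix on each comment. The `tag: text` prefix format below is an assumed convention for illustration; the rule itself only fixes the three levels.

```python
from collections import Counter

SEVERITIES = ("must-fix", "should-fix", "nit")


def parse_severity(comment):
    """Return the severity tag if the comment starts with `<tag>:`, else None."""
    head, sep, _ = comment.strip().partition(":")
    tag = head.strip().lower()
    return tag if sep and tag in SEVERITIES else None


def summarize(comments):
    """Count comments per severity; untagged ones go under 'untagged'."""
    return Counter(parse_severity(c) or "untagged" for c in comments)


def blocks_merge(comments):
    """Only an open must-fix comment blocks the merge."""
    return summarize(comments)["must-fix"] > 0
```

The `untagged` count is useful on its own: it shows how consistently the team is applying the convention.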

Rule 6: No LGTM on non-trivial changes

"LGTM" ("looks good to me") is fine for a typo fix or dependency bump. For anything with logic, require the reviewer to articulate what they verified. One sentence is enough: "Verified error path handles timeout correctly; checked migration is idempotent." This sentence is the artifact of the review; it's also what catches fake-approvals during audit.

Rule 7: Two reviewers maximum: one domain expert + one peer

This is the contrarian rule and the most frequently broken. Adding a third reviewer feels safer but makes reviews slower AND lower quality. Once three people are assigned, the bystander effect kicks in; each reviewer assumes someone else is doing the careful read. Research on group decision-making (Latané & Darley's classic work, plus more recent engineering-team studies) consistently shows two-person teams produce sharper critiques than three-person ones.

The right combination: one domain expert (for correctness) + one peer (for maintainability and knowledge-sharing). Three reviewers are only justified for changes touching security-critical paths, and even then, require explicit sign-off from each.

Phase 3: Team discipline

Individual rules fail without team agreement on norms. These four rules are process, not craft.

Rule 8: First review within 4 business hours of PR creation

Not four wall-clock hours. Four business hours, the time your reviewer is actually online and working. Google's median-4-hours benchmark is achievable for most teams if this rule is enforced. We measure this as one of lead time's 4 stages: "first-review-response" is stage 2, and it's where most teams hemorrhage time.
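The difference between wall-clock and business hours is easy to get wrong in tooling, so here is a small sketch of the calculation. It assumes a Monday to Friday, 09:00 to 17:00 working window and naive local datetimes; holidays and per-reviewer time zones are deliberately ignored.

```python
from datetime import datetime, time, timedelta

# Assumed working window; not prescribed by the rule itself.
WORK_START = time(9, 0)
WORK_END = time(17, 0)


def business_hours_between(start, end):
    """Return the working hours elapsed between two datetimes as a float."""
    if end <= start:
        return 0.0
    total = timedelta()
    day = start.date()
    while day <= end.date():
        if day.weekday() < 5:  # 0-4 are Monday to Friday
            # Clip the interval to this day's working window.
            window_start = max(start, datetime.combine(day, WORK_START))
            window_end = min(end, datetime.combine(day, WORK_END))
            if window_end > window_start:
                total += window_end - window_start
        day += timedelta(days=1)
    return total.total_seconds() / 3600


def first_review_on_time(created, first_review, limit_hours=4):
    """True when the first review landed within the business-hour limit."""
    return business_hours_between(created, first_review) <= limit_hours
```

A PR opened on Friday at 16:00 and first reviewed on Monday at 10:00 counts as two business hours under these assumptions, so it meets the four-hour target even though 66 wall-clock hours passed.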

Rule 9: All automated checks pass before human review

Linters, tests, security scans, and build verification all run on PR creation. A human reviewer should never spend a minute on something a machine would catch. If your automated suite is slow (takes longer than 10 minutes to complete), fix that before optimizing review. It's upstream of every review metric.

Rule 10: Author merges, not reviewer

After approval, the author merges. This preserves ownership: the author confirms they agree with all resolved discussion, they verify the branch is still green against main, and they own the consequences. Reviewer-merge creates zombie PRs: approved and then abandoned because the author moved to other work.

Rule 11: Escalate after 48 hours without final decision

If a PR has been open for 48 business hours without either a merge or an explicit "don't merge this," the engineering manager (EM) escalates. Someone is blocked: either the reviewer is overloaded, the author is avoiding feedback, or the team priority is unclear. PRs that reach the 48-hour mark are a process-health signal, not individual failures.

Common mistakes that break the system

| Mistake | Why it hurts | Fix |
|---|---|---|
| "LGTM" on a 900-line PR | Reviewer couldn't have actually verified it; creates false audit trail | Rule 1 + Rule 6 |
| Author doesn't self-review | Reviewer burns 10 min on issues author could have seen | Rule 3 |
| 3+ reviewers on routine changes | Bystander effect; none of them reviews carefully | Rule 7 |
| Reviewer pages author mid-review instead of commenting | Context switches both parties | Add comment + @mention in PR |
| PR sits for 3 days; author starts new work | Author loses context of the PR; rework cost compounds | Rule 11 |
| "Suggestion" on whitespace as a blocker | Wastes cycles on subjective preference | Rule 5 (nit tag) |

The checklist, print and pin

Author:

- PR under 400 lines of human-written logic

- Description answers What / Why / How-to-verify

- Self-reviewed

- All automated checks green

Reviewer:

- Session ≤ 60 minutes (take a break if longer needed)

- Every comment tagged `must-fix`/`should-fix`/`nit`

- If approving, wrote one sentence on what was verified

- At most 2 reviewers assigned

Team:

- First review started within 4 business hours

- Author merges after approval

- Escalate any PR open > 48 business hours

How to measure if this is working

Track these engineering metrics before and after enforcement. Expected shifts after 4-6 weeks:

| Metric | Typical before | Target after |
|---|---|---|
| Median PR size (LoC) | 350-600 | ≤ 400 |
| Median time to first review | 1-3 days | < 4 business hours |
| Median PR open-to-merge time | 3-5 days | ≤ 1 business day |
| Rejected-after-approval rate | 8-15% | < 5% |
| Reviewer comment specificity rate (must-fix tagged) | 0% | > 60% |
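If you want a baseline before adopting any tooling, the first three medians can be computed from exported PR timestamps. This is a rough sketch: the `PullRequest` record and its field names are hypothetical, and it reports wall-clock hours rather than business hours, so treat the results as an upper bound against the targets in the table.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Optional


@dataclass
class PullRequest:
    # Hypothetical record shape; field names are illustrative only.
    lines_changed: int
    created: datetime
    first_review: Optional[datetime]
    merged: Optional[datetime]


def hours(delta):
    """Convert a timedelta to fractional hours."""
    return delta.total_seconds() / 3600


def review_metrics(prs):
    """Compute medians; PRs missing a timestamp are skipped for that metric."""
    first_review = [hours(p.first_review - p.created) for p in prs if p.first_review]
    open_to_merge = [hours(p.merged - p.created) for p in prs if p.merged]
    return {
        "median_pr_size": median(p.lines_changed for p in prs) if prs else None,
        "median_hours_to_first_review": median(first_review) if first_review else None,
        "median_hours_open_to_merge": median(open_to_merge) if open_to_merge else None,
    }
```

Medians are used instead of means because a single PR that sat for weeks would otherwise dominate the result.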

PanDev Metrics tracks PR cycle time directly from Git events tied to task-tracker IDs, so you can see these numbers per team, per repository, or per individual reviewer in the dashboard. Honest limit: we measure PR timestamps (created, first-review, merged), but we don't measure active minutes spent scrolling or commenting in the review UI. We infer reviewer engagement from their IDE activity gaps during the open-to-merge window, which is a good proxy but not a direct measure.

When this framework doesn't fit

Three scenarios where the 11 rules need adjustment:

- Research or spike branches. Experimental code that won't merge to main can ignore most of this. Mark it as WIP and skip the review SLA.

- Solo developers on early-stage projects. There's no one to review. Run the author rules (self-review in particular) and defer the reviewer rules until the team grows.

- Massive automated generators. Kubernetes manifests, generated API clients, and translations can legitimately produce 10,000-line diffs. Review the generator, not the output.

For everything else (product teams, service teams, maintenance work, hotfixes), the 11 rules apply without modification.