Cybersecurity Engineering Metrics: SOC Operations Beyond MTTR

A Security Operations Center running on MTTR alone is measuring the fire, not the fire department. IBM's Cost of a Data Breach Report 2024 found the average breach takes 258 days to identify and contain, and the teams that broke below 200 days didn't do it by responding faster. They detected earlier and spent less time on toil. MTTR was a side effect, not the target.

Cybersecurity engineering needs its own metric stack. Generic engineering KPIs under-weight the asymmetric cost of a miss, and pure InfoSec dashboards ignore whether the team is burning out or burning budget.

{/* truncate */}

Why cybersecurity engineering is different

A security engineering team isn't shipping features. It's shipping coverage, detections, and response capability. The feedback loops are brutal: a missed threat may not surface for months, so you can't wait for the lagging signal to tell you the team's working.

Three asymmetries make standard engineering metrics misleading here:

| Standard eng team | Security engineering team |
|---|---|
| Failure = buggy feature, fixed next sprint | Failure = undetected breach, compounded for weeks |
| Customer feedback in hours | Adversary feedback in months (or never) |
| Deploy frequency is a goal | Deploy frequency is a risk surface |

The implication: you have to measure leading indicators (coverage, detection engineering throughput, patch lag), not just lagging ones (incidents, MTTR).

The frameworks worth citing:

| Framework | What it contributes | Where it falls short |
|---|---|---|
| MITRE ATT&CK | Coverage taxonomy for detections | No productivity model |
| NIST CSF 2.0 | Governance + Identify/Protect/Detect/Respond/Recover | Too abstract for team-level tracking |
| SANS SOC Survey (annual) | Benchmarks for SOC staffing and toil | Self-reported, survey-based |

A SOC that measures only MITRE coverage and MTTR is like a dev team measuring only test coverage and uptime. Both are useful, but neither tells you whether the team will burn out next quarter.

The 7 metrics that matter in cybersecurity engineering

1. Mean Time to Detect (MTTD): the leading half of MTTR

What it measures: time from threat presence on the network to first alert that a human or automated playbook acts on.

Target benchmark: under 60 minutes for high-severity events. Gartner's 2024 SOC benchmarks show median MTTD in financial-services SOCs at 43 minutes for tier-1 threats.

Why the "to restore" half misleads: a team can have a fast MTTR and a two-week MTTD. The breach was already catastrophic by the time you started the stopwatch.
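To make the gap concrete, here is a minimal sketch that computes MTTD and MTTR side by side. The incident records and the field names (`present`, `detected`, `restored`) are hypothetical, and MTTR is assumed to start at detection, as in the paragraph above.

```python
from datetime import datetime
from statistics import mean

# Hypothetical incident records: when the threat was first present,
# when the first acted-on alert fired, and when service was restored.
incidents = [
    {"present": datetime(2024, 3, 1, 8, 0),
     "detected": datetime(2024, 3, 15, 8, 0),
     "restored": datetime(2024, 3, 15, 11, 0)},
    {"present": datetime(2024, 4, 2, 9, 0),
     "detected": datetime(2024, 4, 2, 9, 40),
     "restored": datetime(2024, 4, 2, 12, 40)},
]


def mean_hours(records, start_key, end_key):
    """Mean elapsed hours between two timestamps across all records."""
    return mean(
        (r[end_key] - r[start_key]).total_seconds() / 3600 for r in records
    )


mttd_hours = mean_hours(incidents, "present", "detected")   # presence -> detection
mttr_hours = mean_hours(incidents, "detected", "restored")  # detection -> restore

print(f"MTTD: {mttd_hours:.1f} h, MTTR: {mttr_hours:.1f} h")
```

In this made-up data the MTTR looks healthy at three hours, while one two-week dwell time drags MTTD to about a week. Reporting only the second number would hide the first.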

2. Mean Time to Contain (MTTC)

What it measures: time from detection to the threat being isolated. Not fixed, not eradicated, just unable to do further damage.

Target benchmark: under 2 hours for tier-1 incidents.

IBM's 2024 report attributes $1.76M of average breach-cost reduction specifically to containment speed. Containment compounds: every hour saved removes an hour of lateral movement.
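A short sketch of tracking MTTC against the 2-hour target, assuming you can export `(detected_at, contained_at)` pairs for tier-1 incidents. The sample timestamps are hypothetical. It reports the share of incidents inside the target alongside the mean, because a mean can sit under target while individual incidents breach it.

```python
from datetime import datetime, timedelta

TARGET = timedelta(hours=2)  # tier-1 containment target from the text

# Hypothetical tier-1 incidents: (detected_at, contained_at)
tier1 = [
    (datetime(2024, 5, 1, 10, 0), datetime(2024, 5, 1, 10, 45)),
    (datetime(2024, 5, 3, 14, 0), datetime(2024, 5, 3, 17, 30)),
    (datetime(2024, 5, 9, 2, 0), datetime(2024, 5, 9, 3, 15)),
]

durations = [contained - detected for detected, contained in tier1]

# Mean time to contain, kept as a timedelta
mttc = sum(durations, timedelta()) / len(durations)

# Fraction of incidents contained within the target
within_target = sum(d <= TARGET for d in durations) / len(durations)

print(f"MTTC: {mttc}, within target: {within_target:.0%}")
```

Here the mean lands under two hours even though one of the three incidents took three and a half, which is why the share-within-target figure is worth reporting next to it.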

3. Detection engineering throughput

What it measures: high-quality detections authored and deployed per engineer per quarter. Raw detection count isn't enough; the quality gate is that a detection fires at least once in 90 days without being tuned to death.

Why it's the hardest metric: teams optimize for detection count, ship 400 rules, and see half of them disabled within a month because of false-positive noise. The quality gate is what keeps Goodhart's law from taking over.
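One way to express the quality gate is as a filter applied before counting. This is a sketch under assumptions: the `Detection` record and its fields are hypothetical, and "tuned to death" is approximated as "no longer enabled after 90 days".

```python
from dataclasses import dataclass


@dataclass
class Detection:
    rule_id: str
    author: str
    fired_in_90d: int     # times the rule fired in its first 90 days
    enabled_at_90d: bool  # assumption: disabled rules fail the gate


def gated_throughput(detections):
    """Count, per author, only the detections that pass the quality gate."""
    counts = {}
    for d in detections:
        if d.fired_in_90d >= 1 and d.enabled_at_90d:
            counts[d.author] = counts.get(d.author, 0) + 1
    return counts


# Hypothetical quarter of shipped rules
shipped = [
    Detection("r1", "ana", 12, True),
    Detection("r2", "ana", 0, True),     # silent: never fired
    Detection("r3", "ana", 900, False),  # noisy: disabled
    Detection("r4", "ben", 3, True),
]

result = gated_throughput(shipped)
print(result)
```

By raw count, `ana` shipped three rules to `ben`'s one. After the gate, both have one detection that is still doing work.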

4. Patch lag

What it measures: median days between a CVE publication relevant to your stack and its deployment to production.

Target benchmark: under 14 days for CVSS 9+. Under 30 days for CVSS 7–8.

A Verizon DBIR 2024 data point: 60% of successful exploits target vulnerabilities that had a patch available for 60+ days. Patch lag isn't a security team problem; it's a security-engineering-meets-DevOps problem, which is exactly why most orgs can't fix it. You need cross-team telemetry.
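A minimal sketch of the patch-lag calculation, assuming you can join CVE publication dates with deployment dates from the platform team's pipeline. The records and IDs are placeholders, and the thresholds are the targets stated above.

```python
from datetime import date
from statistics import median


def target_days(cvss):
    """Patch-lag target in days for a CVSS score, or None if no target applies."""
    if cvss >= 9.0:
        return 14
    if cvss >= 7.0:
        return 30
    return None


# Hypothetical records joining vulnerability feed and deploy log
patches = [
    {"id": "vuln-a", "cvss": 9.8, "published": date(2024, 6, 1), "deployed": date(2024, 6, 11)},
    {"id": "vuln-b", "cvss": 9.1, "published": date(2024, 6, 5), "deployed": date(2024, 6, 25)},
    {"id": "vuln-c", "cvss": 7.5, "published": date(2024, 6, 10), "deployed": date(2024, 7, 5)},
]


def lag_days(p):
    """Days between CVE publication and production deployment."""
    return (p["deployed"] - p["published"]).days


median_critical_lag = median(lag_days(p) for p in patches if p["cvss"] >= 9.0)

# Patches that missed their band's "under N days" target
overdue = [
    p["id"] for p in patches
    if target_days(p["cvss"]) is not None and lag_days(p) >= target_days(p["cvss"])
]

print(median_critical_lag, overdue)
```

The `deployed` date is the field the security team usually does not own, which is the practical reason this metric needs shared telemetry.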

5. Alert-to-action ratio

What it measures: % of alerts that lead to a human action (investigation, escalation, tuning). The inverse, alert noise, is the single biggest predictor of analyst burnout.

Target benchmark: above 15%. Under 5% means the SOC is flooded with noise; analysts stop investigating entirely.

The 2024 SANS SOC Survey reported analyst burnout as the top reason for SOC turnover, ahead of pay. Noise, not volume, drives that.
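The ratio itself is simple to compute once alert dispositions are exported. In this sketch the disposition labels are hypothetical, and the "watch" label for the 5–15% band is an assumption added for illustration; the text only defines the two outer thresholds.

```python
from collections import Counter

# Dispositions that count as a human action (assumed label names)
ACTIONS = {"investigated", "escalated", "tuned"}


def alert_to_action_ratio(dispositions):
    """Fraction of alerts whose disposition is a human action."""
    counts = Counter(dispositions)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    acted = sum(n for label, n in counts.items() if label in ACTIONS)
    return acted / total


def classify(ratio):
    """Map a ratio onto the benchmark bands from the text."""
    if ratio < 0.05:
        return "flooded"
    if ratio > 0.15:
        return "healthy"
    return "watch"  # assumed label for the band in between


# Hypothetical week: 200 alerts, 18 of which led to an action
week = (["investigated"] * 12 + ["escalated"] * 4
        + ["tuned"] * 2 + ["auto_closed"] * 182)

ratio = alert_to_action_ratio(week)
band = classify(ratio)
print(f"{ratio:.0%} -> {band}")
```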

6. Toil ratio

What it measures: % of analyst time on repetitive, automatable tasks (false positive triage, compliance-log gathering, manual enrichment).

Target benchmark: under 30%. Google's SRE book puts the toil ceiling at 50% for SREs; security analysts need a tighter ceiling, closer to 30%, because the work carries a higher cognitive load and the burnout tax is steeper.
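As a rough sketch, toil ratio is toil hours over total hours. The category names and the weekly hours below are hypothetical, and deciding which categories count as toil is a judgment call each team has to make for itself.

```python
# Categories treated as toil (assumed names, based on the examples in the text)
TOIL_CATEGORIES = {"fp_triage", "compliance_logs", "manual_enrichment"}

TOIL_CEILING = 0.30  # target from the text


def toil_ratio(hours_by_category):
    """Share of logged hours spent on categories classified as toil."""
    total = sum(hours_by_category.values())
    if total == 0:
        return 0.0
    toil = sum(h for c, h in hours_by_category.items() if c in TOIL_CATEGORIES)
    return toil / total


# Hypothetical analyst week, in hours
week = {
    "fp_triage": 9,
    "compliance_logs": 3,
    "manual_enrichment": 4,
    "investigation": 16,
    "detection_tuning": 8,
}

ratio = toil_ratio(week)
over_ceiling = ratio > TOIL_CEILING
print(f"toil: {ratio:.0%}, over ceiling: {over_ceiling}")
```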

7. Security-engineering coding time

What it measures: actual time your detection engineers and security tooling engineers spend in the IDE writing rules, pipelines, or SOAR playbooks, as opposed to sitting in triage.

If your detection engineers code under 1 hour a day, they aren't detection engineers. They're analysts with a fancy title. PanDev Metrics tracks IDE heartbeat data specifically to separate engineering-time from toil-time, which is exactly the split most SOC leads can't see from their SIEM dashboards.
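For intuition on how heartbeat data can turn into a coding-time figure, here is a generic sketch: sum the gaps between consecutive heartbeats and discard any gap longer than an idle timeout. This is a common approach for heartbeat-style trackers, not a description of how PanDev Metrics computes it; the 5-minute timeout and the sample timestamps are assumptions.

```python
from datetime import datetime, timedelta

IDLE_TIMEOUT = timedelta(minutes=5)  # assumed cutoff for "still coding"


def active_time(heartbeats, idle_timeout=IDLE_TIMEOUT):
    """Sum gaps between consecutive heartbeats, skipping gaps over the timeout."""
    ordered = sorted(heartbeats)
    total = timedelta()
    for prev, cur in zip(ordered, ordered[1:]):
        gap = cur - prev
        if gap <= idle_timeout:
            total += gap
    return total


# Hypothetical day: a short morning session and a short afternoon session
day = datetime(2024, 7, 1)
beats = (
    [day.replace(hour=9, minute=m) for m in (0, 2, 4, 6)]
    + [day.replace(hour=14, minute=m) for m in (0, 3, 5)]
)

coded = active_time(beats)
under_one_hour = coded < timedelta(hours=1)
print(coded, under_one_hour)
```

The five-hour gap between sessions is dropped rather than counted, which is the whole point: time with the IDE open is not the same as time spent in it.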

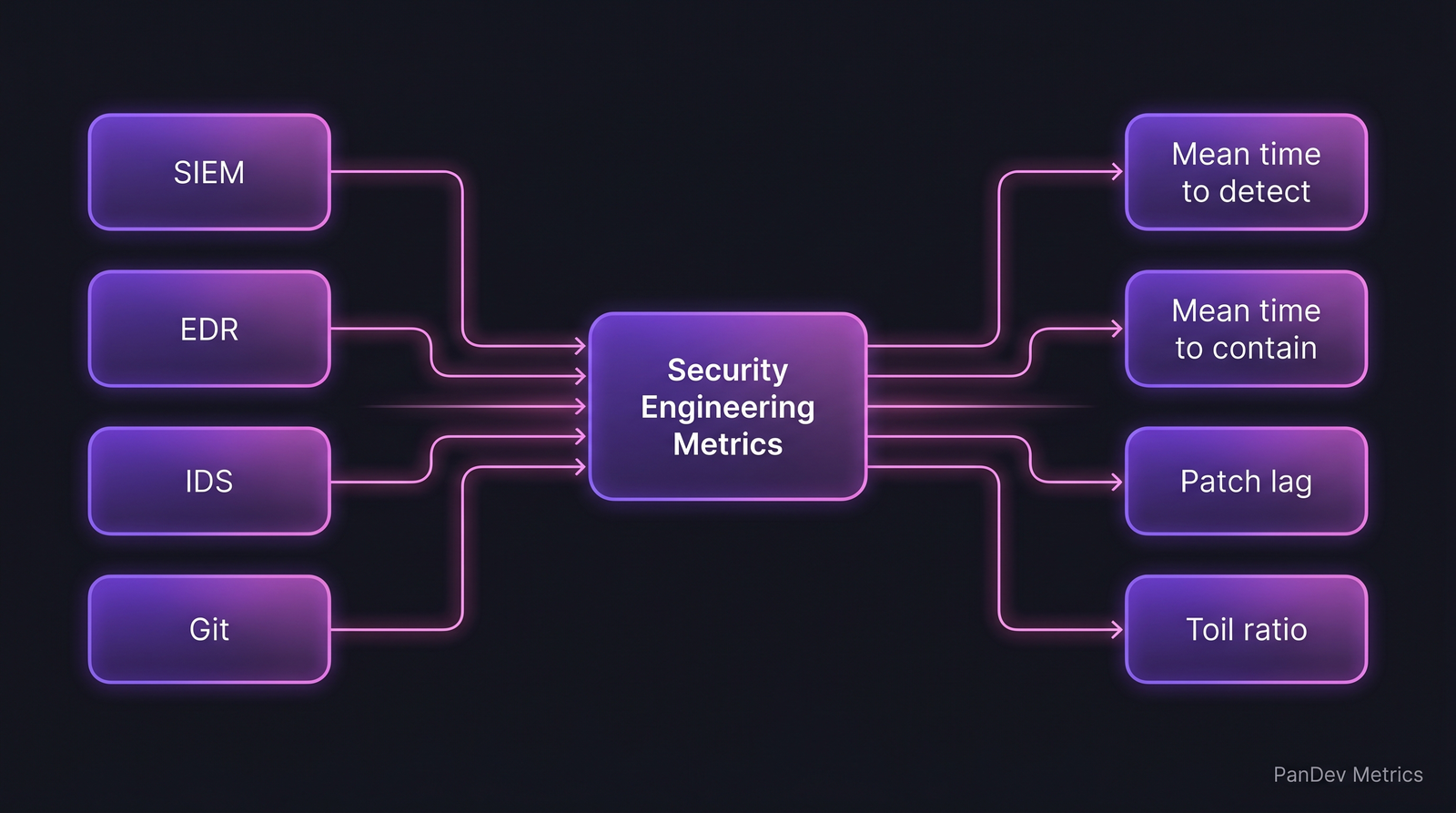

SOC telemetry, not just SIEM telemetry. Security-engineering metrics need IDE, Git, and task-tracker signals alongside the security stack.

How compliance and scale change measurement

Financial and healthcare SOCs live under evidence regimes. PCI-DSS 4.0 (effective March 2025) explicitly requires documented detection coverage and time-bound remediation records, meaning your MTTD and patch lag metrics aren't internal KPIs anymore. They're audit artifacts.

Three regulatory nuances:

| Regulation | What it adds to metrics | Practical change |
|---|---|---|
| PCI-DSS 4.0 | Proof of detection for each critical control | MTTD per ATT&CK tactic, not just global |
| NIS2 (EU) | 24-hour initial reporting of significant incidents | MTTD + MTTC for regulator-reportable events broken out |
| SOX IT (US public co.) | Change control on production detections | Audit trail on every detection tuning: Git, not Excel |

The practical implication: a SOC in a regulated vertical can't track metrics only in Splunk or Sentinel. You need Git-backed records of detection changes, IDE-backed records of who authored what, and tracker-backed records of the incident lifecycle. This is where the plain "SIEM dashboard" model falls over.
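The "MTTD per ATT&CK tactic, not just global" row in the table above amounts to a group-by. A minimal sketch, assuming each detection record is already tagged with a tactic; the sample minutes are hypothetical:

```python
from collections import defaultdict
from statistics import mean

# Hypothetical records: (ATT&CK tactic, minutes from presence to detection)
detections = [
    ("Initial Access", 20),
    ("Initial Access", 40),
    ("Lateral Movement", 180),
    ("Lateral Movement", 300),
    ("Exfiltration", 45),
]


def mttd_by_tactic(records):
    """Mean time to detect, in minutes, grouped by ATT&CK tactic."""
    buckets = defaultdict(list)
    for tactic, minutes in records:
        buckets[tactic].append(minutes)
    return {tactic: mean(values) for tactic, values in buckets.items()}


per_tactic = mttd_by_tactic(detections)
global_mttd = mean(minutes for _, minutes in detections)

print(global_mttd, per_tactic)
```

The global figure blends a fast Initial Access number with a slow Lateral Movement one, so it describes neither. The per-tactic breakdown is the view an auditor is more likely to ask for.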

Typical cybersecurity engineering team profile

| Parameter | Typical range |
|---|---|
| Team size | 6–30 |
| Structure | Tier-1 analysts, Tier-2/3 responders, Detection engineers, Tooling engineers, SOC manager |
| Core stack | SIEM (Splunk / Sentinel / Chronicle), EDR (CrowdStrike / SentinelOne), SOAR, ticketing |
| Coding share | Detection engineers 40–60%, analysts 5–15% |
| Primary pressure | Regulatory audit + analyst turnover |

The split between coding and non-coding roles inside a security team is stark. Detection engineers and SOAR/tooling engineers should have coding-time profiles closer to backend engineers than to helpdesk. If they don't, you have analyst work being labeled as engineering work. The SOC's output per headcount tanks without the dashboard showing why.

What to track differently from a standard SaaS team

- Coverage, not velocity. Detection coverage over MITRE ATT&CK tactics matters more than tickets closed. A team that closed 40% more tickets but lost 3 tactics of coverage is regressing.

- Quality-gated throughput. Every engineering metric needs a "still-useful-in-90-days" gate. Detections that get disabled don't count.

- Burnout signals, earlier. Security analysts exhibit burnout patterns 6–8 weeks before they quit: weekend alert acks, vacation interruptions, after-hours logins. Our burnout-signals post documents five patterns that apply here with a wider margin.

- Cross-team patch lag. The security team doesn't own deployment, so patch lag is a joint metric with platform engineering. Measure it jointly or the teams will end up pointing fingers.
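The coverage-over-velocity point in the list above can be checked with a plain set difference between two reporting periods. A sketch, assuming you keep a per-quarter set of ATT&CK tactics that have at least one working detection; the quarter contents here are hypothetical:

```python
def coverage_delta(previous, current):
    """Tactics lost and gained between two coverage snapshots."""
    return {
        "lost": sorted(previous - current),
        "gained": sorted(current - previous),
    }


# Hypothetical snapshots of tactics with at least one working detection
q1 = {"Initial Access", "Execution", "Persistence",
      "Lateral Movement", "Exfiltration"}
q2 = {"Initial Access", "Execution", "Command and Control"}

delta = coverage_delta(q1, q2)
regressing = len(delta["lost"]) > len(delta["gained"])
print(delta, regressing)
```

A quarter that loses three tactics and gains one is a regression, whatever the ticket-closure chart says.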

Common pitfalls

- MTTR as the only KPI. Lagging, reactive, can be gamed by narrowing what counts as an incident.

- Alert count as a KPI. Analysts optimize for closing alerts, which means closing them without investigating.

- Coverage without quality. 1,200 detections, 800 silent, 400 noisy, zero useful.

- Conflating analyst time with engineering time. Your "detection engineering team" is probably doing 70% triage. The IDE data will tell you. The timesheet won't.

- Measuring with spreadsheets. Pulling metrics manually every month costs 20 hours of SOC-manager toil, leaves the numbers 3 weeks stale, and still forces a re-pull for half the audit.

Where PanDev Metrics fits

For security engineering teams, the relevant slice is measuring the engineering half of the SOC: detection engineers, SOAR engineers, tooling engineers. IDE heartbeat data shows the split between engineering-time and investigation-time, burnout pattern detection catches the early weekend-ack signal, and the on-prem Docker/K8s deployment makes it work inside air-gapped security environments where a cloud SaaS platform would be a non-starter.

An honest limit

Our IDE dataset has reasonable depth on detection engineers and DevSecOps roles. We don't have strong signal on pure analyst workflows; those happen in the SIEM console, not the IDE. For the analyst half of the SOC, you still need SIEM-native productivity tooling. Anyone telling you a single platform covers both is selling, not measuring.