How to Run Data-Driven 1:1s With Your Developers

Gallup has reported that managers account for at least 70% of the variance in team engagement, yet many engineering managers run 1:1s the same way: "How are things going?" followed by an awkward silence, then a pivot to project status updates. That's not a 1:1; that's a standup with extra steps. A real 1:1 should be the most valuable 30 minutes in your developer's week, and data makes it dramatically better.

{/* truncate */}

Why Most 1:1s Fail

Let's be honest about the three failure modes:

- The Status Update — You spend 25 minutes going through Jira tickets. The developer tells you things you could have read in a dashboard. Nobody grows.

- The Therapy Session — Pure vibes, no structure. You ask "how are you feeling?" and get "fine." Neither of you knows what to do with the meeting.

- The Surprise Attack — The developer hears feedback for the first time in months, and it's negative. No context. No data. Just opinions.

Data-driven 1:1s fix all three. When you walk in with objective metrics, you can skip the status theater and go straight to the conversations that matter: growth, blockers, career development, and team dynamics.

The Data You Actually Need Before a 1:1

You don't need a 50-metric dashboard. Here's what to pull before each 1:1:

Core Metrics (5-minute prep)

| Metric | What to Look For | Where It Helps |
|---|---|---|
| Activity Time trend (2 weeks) | Sudden drops or spikes | Detecting burnout or blockers |
| Focus Time | Are they getting uninterrupted blocks? | Meeting load, context switching |
| PR cycle time | How long from first commit to merge? | Process bottlenecks |
| Review participation | Are they reviewing others' code? | Team collaboration |
| Current project allocation | What are they actually working on? | Alignment with priorities |
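
If your tooling doesn't report PR cycle time directly, you can approximate it from timestamps. Here is a minimal sketch, assuming you can export a first-commit time and a merge time for each merged PR; the `prs` records and function names are hypothetical, not part of any specific platform's API.

```python
from datetime import datetime
from statistics import median

# Hypothetical export: (first_commit_at, merged_at) per merged PR, ISO 8601 strings.
prs = [
    ("2024-03-04T09:00:00", "2024-03-04T12:30:00"),
    ("2024-03-05T10:00:00", "2024-03-06T10:00:00"),
    ("2024-03-07T14:00:00", "2024-03-07T16:00:00"),
]

def cycle_time_hours(first_commit_at: str, merged_at: str) -> float:
    """Hours elapsed between the first commit and the merge."""
    start = datetime.fromisoformat(first_commit_at)
    end = datetime.fromisoformat(merged_at)
    return (end - start).total_seconds() / 3600

def median_cycle_time_hours(records) -> float:
    """Median cycle time; less sensitive than the mean to one long-running PR."""
    return median(cycle_time_hours(start, end) for start, end in records)

print(median_cycle_time_hours(prs))  # 3.5
```

The median is used here so that a single PR that sat open over a weekend doesn't dominate the number you bring into the conversation.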

Context Metrics (when relevant)

| Metric | When to Check |
|---|---|
| Delivery Index | Before quarterly reviews |
| Cost per project | When discussing project impact |
| Comparison to team average | Only for context, never for ranking |

The key principle: use data to ask better questions, not to deliver verdicts. As Will Larson writes in An Elegant Puzzle, the best engineering managers use metrics as conversation starters, not as scorecards.

The Data-Driven 1:1 Framework

Here's a practical framework that works for weekly 30-minute 1:1s.

Phase 1: Open (5 minutes)

Start with the human. This part is not data-driven, and that's intentional.

- "What's on your mind this week?"

- "Anything you want to make sure we cover today?"

- "How's your energy level — 1 to 5?"

This gives the developer control. If something urgent is burning, they'll tell you here and you can skip the rest of the framework.

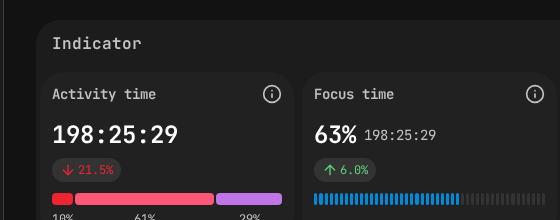

PanDev Metrics employee dashboard — Activity Time (198h) and Focus Time (63%) cards give you the data foundation for a productive 1:1 conversation.

Phase 2: Data Review (10 minutes)

Share your screen (or a printed summary) showing the developer's metrics. Go through them together; this is collaborative, not evaluative.

Template conversation:

"I noticed your Focus Time dropped from an average of 3.2 hours/day to 1.1 hours this past week. I see you were pulled into the payments project mid-sprint. What happened there?"

"Your PR cycle time has been consistently under 4 hours for the past month — that's great. Is there anything about the review process that's still frustrating you?"

"Activity Time shows Wednesday and Thursday were almost zero last week. Were you in meetings, doing design work, or something else?"

Rules for the data review:

- Always ask before assuming. Low coding time might mean architecture work, research, or mentoring — all valuable.

- Show trends, not snapshots. One bad week means nothing. Three weeks of declining focus time means something.

- Compare to their own baseline, not to other developers. Ever.

- Let them explain first. Present the data, then ask an open question.
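
The "trends, not snapshots" and "own baseline" rules can be sketched in a few lines. This is an illustration under stated assumptions: one Focus Time value per week, a three-week window, and an eight-week baseline. The function names, thresholds, and sample data are hypothetical, so tune them to your team.

```python
from statistics import mean

def sustained_decline(weekly_values, weeks=3):
    """True if each of the last `weeks` values fell below the week before it."""
    if len(weekly_values) < weeks + 1:
        return False
    recent = weekly_values[-(weeks + 1):]
    return all(later < earlier for earlier, later in zip(recent, recent[1:]))

def change_vs_own_baseline(weekly_values, baseline_weeks=8):
    """Relative change of the latest week against the developer's own prior average."""
    history = weekly_values[:-1][-baseline_weeks:]
    if not history:
        return None
    baseline = mean(history)
    if baseline == 0:
        return None
    return (weekly_values[-1] - baseline) / baseline

# Hypothetical average Focus Time in hours/day, one value per week, oldest first.
focus_time = [3.1, 3.3, 3.2, 3.0, 2.4, 1.1]

print(sustained_decline(focus_time))                 # True: three drops in a row
print(sustained_decline([3.1, 3.3, 3.2, 1.1, 3.2]))  # False: one bad week, then recovery
print(round(change_vs_own_baseline(focus_time), 2))  # -0.63, i.e. about 63% below baseline
```

Neither function looks at any other developer's numbers, which keeps the comparison on the individual's own history. A flag here is a prompt for an open question, not a conclusion.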

Phase 3: Growth & Blockers (10 minutes)

Now that you have a shared picture of reality, dig into what matters:

Blocker questions:

- "What slowed you down the most this week?"

- "Is there a decision you're waiting on from someone?"

- "Are there any tools or access issues I can fix for you?"

Growth questions:

- "What did you learn this week that was interesting?"

- "Is there a skill you want to develop that you're not getting to practice?"

- "Looking at your project allocation — is this the kind of work you want to be doing?"

Career questions (monthly):

- "Where do you want to be in a year? Are we making progress toward that?"

- "What's the most impactful thing you've done this quarter? Let's make sure it's visible."

Phase 4: Action Items (5 minutes)

Every 1:1 should end with concrete commitments. Write them down in a shared doc.

Template:

| Owner | Action | Due |
|---|---|---|
| Manager | Move Wednesday architecture sync to async | Next week |
| Developer | Write ADR for the caching approach | Friday |
| Manager | Talk to PM about reducing mid-sprint scope changes | Before next 1:1 |

Review last week's action items at the start of this phase. If the same items keep rolling over, that's a signal.
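
If your shared doc keeps a list of open items per meeting, spotting rollover is easy to automate. A rough sketch, assuming you record the open action items at each weekly 1:1; the data, the function name, and the three-week threshold are all illustrative assumptions.

```python
# Hypothetical log: the open action items recorded at each weekly 1:1, oldest first.
weekly_open_items = [
    {"Move architecture sync to async", "Write ADR for caching"},
    {"Move architecture sync to async", "Talk to PM about scope changes"},
    {"Move architecture sync to async", "Talk to PM about scope changes"},
]

def rolled_over(weeks, min_weeks=3):
    """Items that were still open in each of the last `min_weeks` meetings."""
    if len(weeks) < min_weeks:
        return set()
    recent = weeks[-min_weeks:]
    return set.intersection(*map(set, recent))

print(rolled_over(weekly_open_items))  # {'Move architecture sync to async'}
```

An item that shows up here is worth a direct question: is it still the right commitment, or is something blocking it?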

1:1 Templates for Common Scenarios

Template 1: The New Hire (First 90 Days)

Focus: onboarding progress, comfort level, early wins.

Pre-meeting data pull:

- Activity Time trend (is it ramping up?)

- First PR cycle times (are reviews fast enough?)

- Project allocation (are they on the right starter tasks?)

Questions:

1. What surprised you most about the codebase this week?

2. Is the onboarding documentation accurate, or did you find gaps?

3. Who on the team has been most helpful? (Reveals team dynamics)

4. [Data] Your first PRs are getting reviewed in ~6 hours. Is that fast enough, or are you blocked waiting?

5. What's one thing I could change to make your ramp-up faster?

Template 2: The Senior Developer

Focus: impact, autonomy, technical direction.

Pre-meeting data pull:

- Review participation (are they mentoring via code review?)

- Focus Time (are they protected enough to do deep work?)

- Cross-project involvement (are they spread too thin?)

Questions:

1. What's the most important technical decision you made this week?

2. [Data] You reviewed 12 PRs this week. Is that sustainable, or should we redistribute review load?

3. Is there a tech debt item that's silently costing us?

4. Are you getting enough time for deep technical work?

5. What should I be worried about that I'm not?

Template 3: The Struggling Developer

Focus: support, clarity, specific improvement areas.

Pre-meeting data pull:

- Activity Time (is it declining?)

- Focus Time (are external factors blocking them?)

- PR cycle time (stuck in review loops?)

- Delivery trend (are commitments being met?)

Questions:

1. How are you feeling about your work right now? (Open, honest)

2. [Data] I notice your delivery pace has slowed over the past three weeks. Walk me through what's happening.

3. Is the work clear enough? Do you know what "done" looks like?

4. What kind of support would help most: pairing, mentoring, fewer meetings, or clearer specs?

5. Let's pick one specific thing to improve this week. What feels most important to you?

IMPORTANT: Never ambush. If this is the first time you're raising performance concerns, the problem is your management, not their performance.

Template 4: The Pre-Promotion Check-in

Focus: evidence gathering, gap identification.

Pre-meeting data pull:

- 3-month trend across all metrics

- Cross-team impact (reviews, mentoring)

- Project complexity and delivery record

- Cost efficiency of their projects

Questions:

1. Let's look at your last quarter together. What are you most proud of?

2. [Data] Your Delivery Index has been consistently above team average for 3 months. Let's document specific examples.

3. For the next level, we need evidence of [specific competency]. Where are you demonstrating that already?

4. What's one gap we should close before the review cycle?

5. Who else should I talk to about your impact?

Anti-Patterns to Avoid

1. The Leaderboard Manager

What it looks like: Ranking developers by Activity Time and sharing the ranking. "Alex coded 6 hours this week, why did you only code 2?"

Why it's toxic: Activity Time doesn't measure value. A developer who spends 2 hours coding and 4 hours designing a system that saves the team weeks is more valuable than one who writes code all day that needs to be rewritten.

What to do instead: Compare individuals to their own trends. Use team averages only as broad context.

2. The Gotcha Manager

What it looks like: Saving up data surprises for the 1:1. "Three weeks ago, on Tuesday, you only coded for 15 minutes..."

Why it's toxic: It breaks trust instantly. The developer feels surveilled, not supported.

What to do instead: Raise observations in real time (over Slack, for example) while they're fresh. Use 1:1s for trends and deeper conversations.

3. The Dashboard Zombie

What it looks like: Spending the entire 1:1 staring at charts. "Let's go through all 15 of your metrics one by one."

Why it's toxic: It turns a human conversation into a reporting ceremony. The developer checks out mentally.

What to do instead: Pick 2-3 relevant data points max. The data is the appetizer, not the main course.

4. The Metric Denier

What it looks like: Refusing to use any data because "I trust my team." Running 1:1s purely on vibes.

Why it's broken: Without data, feedback is shaped by recency bias, availability bias, and whoever is loudest. Quiet high performers become invisible.

What to do instead: You can trust your team AND use data. Data isn't surveillance — it's shared context.

Setting Up Your 1:1 Data Workflow

Here's a practical workflow that takes less than 5 minutes of prep per developer:

Weekly routine (Monday morning, before 1:1 week starts):

- Open your engineering intelligence platform (PanDev Metrics or similar)

- For each developer with a 1:1 this week:

  - Check Activity Time and Focus Time trend (30 seconds)

  - Check PR metrics and review activity (30 seconds)

  - Note any anomalies or patterns (30 seconds)

  - Write 2-3 data-informed questions in your 1:1 doc

- Total prep time: ~2 minutes per developer

In the meeting:

- Share the dashboard briefly (or don't — just reference the data verbally)

- Ask your prepared questions

- Take notes on action items

After the meeting:

- Log action items in your shared doc

- Set a reminder to check on blocker-removal commitments you made

Measuring Whether Your 1:1s Are Working

How do you know your data-driven 1:1s are actually better? Track these proxy signals:

- Developer satisfaction scores — if you run engagement surveys, are 1:1-related questions improving?

- Action item completion rate — are commitments being kept? On both sides?

- Surprise count — how often do performance reviews contain surprises? (Target: zero)

- Retention: are fewer developers leaving the team? People are less likely to leave managers who invest in them with genuine, data-informed attention.

- Developer self-awareness — do your developers start referencing their own metrics proactively?

The last one is the gold standard. When a developer walks into a 1:1 and says, "I noticed my Focus Time tanked this week because of the incident response rotation. Can we talk about the on-call schedule?", you've won. The State of DevOps reports have repeatedly linked fast feedback loops and a healthy team culture to stronger delivery performance; data-informed 1:1s are one practical way to build those loops.
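
Of the signals listed above, action item completion rate is the easiest to compute yourself. A minimal sketch, assuming you can export each action item's owner and whether it was completed on time; the record format and function name are hypothetical.

```python
from collections import defaultdict

# Hypothetical export from the shared 1:1 doc: (owner, completed_on_time).
action_items = [
    ("Manager", True),
    ("Manager", False),
    ("Developer", True),
    ("Developer", True),
    ("Manager", True),
]

def completion_rate_by_owner(items):
    """Share of action items completed on time, split by who owned them."""
    totals = defaultdict(int)
    done = defaultdict(int)
    for owner, completed in items:
        totals[owner] += 1
        if completed:
            done[owner] += 1
    return {owner: done[owner] / totals[owner] for owner in totals}

rates = completion_rate_by_owner(action_items)
for owner, rate in rates.items():
    print(f"{owner}: {rate:.0%}")
```

Splitting the rate by owner matters: if the manager's number is the lower one, that's the first thing to fix.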

Quick-Start Checklist

If you want to start running data-driven 1:1s this week:

- Set up access to your team's engineering metrics (Activity Time, Focus Time, PR cycle time at minimum)

- Create a shared 1:1 doc per developer (Google Doc, Notion, whatever works)

- Before your next 1:1, spend 2 minutes reviewing the developer's data

- Prepare 2 data-informed questions (not accusations — questions)

- In the meeting: share the data, ask the question, listen

- End with written action items

- Follow up on your commitments before the next 1:1

The bar is low. Most managers don't prepare at all. Two minutes of data review before a 1:1 puts you ahead of the vast majority of engineering managers.

Ready to make your 1:1s actually useful? PanDev Metrics gives you per-developer dashboards with Activity Time, Focus Time, and delivery trends — everything you need for a 2-minute pre-meeting prep. Your developers get their own dashboards too, so the conversation starts from shared context.