Developer Experience: What It Is and How to Measure It

Developer Experience — DevEx or DX — has gone from a niche concept to a boardroom topic. Companies like Google, Spotify, and Shopify have dedicated DevEx teams. Job postings for "Developer Experience Engineer" have tripled since 2023. The JetBrains Developer Ecosystem Survey now includes DevEx-specific questions, signaling that the industry treats this as a measurable dimension, not a buzzword.

But what is Developer Experience? How do you measure something that feels inherently subjective? And why should a VP of Engineering care?

{/* truncate */}

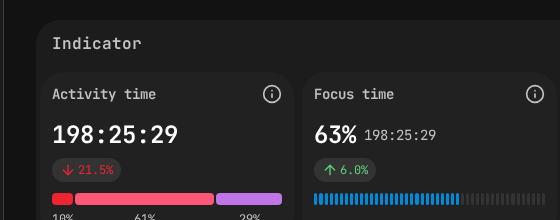

Focus Time and Activity Time — quantitative DevEx dimensions tracked automatically.

Defining Developer Experience

Developer Experience is the sum of all interactions a developer has with the tools, processes, systems, and culture of their organization, and how those interactions affect their ability to do their best work.

It's not one thing. It's everything:

- Tools: IDE quality, CI/CD speed, internal platform reliability

- Processes: Code review turnaround, deployment frequency, bureaucratic overhead

- Codebase: Architecture clarity, documentation quality, test coverage

- Culture: Psychological safety, recognition, autonomy, trust

- Environment: Meeting load, focus time availability, on-call burden

Good DevEx means developers can focus on solving problems rather than fighting their environment. Bad DevEx means half their energy goes to working around broken tools, confusing processes, and organizational friction.

Why DevEx Matters (The Business Case)

If DevEx sounds like a "nice to have" — something for companies that have already solved their real problems — consider the business implications:

Retention

Developers leave jobs because of bad developer experience more often than because of salary. In the Stack Overflow Developer Survey, the top reasons for leaving included "poor tools and infrastructure," "too much bureaucratic process," and "inability to do deep work due to meetings." This echoes Cal Newport's argument in Deep Work that knowledge workers need protected focus time to produce their best output.

Replacing a developer costs ~$50K-$200K (recruiting, onboarding, lost productivity). If improving DevEx prevents even 2-3 departures per year, the ROI is immediate.
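To make the arithmetic concrete, here is a back-of-the-envelope sketch in Python. The replacement-cost range comes from the paragraph above. The annual program cost (`PROGRAM_COST`) is a hypothetical placeholder, not a benchmark, so substitute your own figures.

```python
# Replacement-cost range from the text; PROGRAM_COST is a hypothetical placeholder.
REPLACEMENT_COST_LOW = 50_000    # USD per departure, low end
REPLACEMENT_COST_HIGH = 200_000  # USD per departure, high end
PROGRAM_COST = 80_000            # USD per year spent on DevEx work (assumption)


def avoided_turnover_savings(departures_avoided: int, cost_per_departure: float) -> float:
    """Money not spent replacing developers who stayed."""
    return departures_avoided * cost_per_departure


def simple_roi(savings: float, program_cost: float) -> float:
    """ROI as a fraction: (savings - cost) / cost."""
    if program_cost <= 0:
        raise ValueError("program_cost must be positive")
    return (savings - program_cost) / program_cost


for departures in (2, 3):
    low = avoided_turnover_savings(departures, REPLACEMENT_COST_LOW)
    high = avoided_turnover_savings(departures, REPLACEMENT_COST_HIGH)
    print(
        f"{departures} departures avoided: ${low:,.0f} to ${high:,.0f} saved, "
        f"ROI {simple_roi(low, PROGRAM_COST):.0%} to {simple_roi(high, PROGRAM_COST):.0%}"
    )
```

With these placeholder numbers, even two retained developers at the low end of the range cover the program cost.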

Productivity

Good DevEx directly improves productivity. When CI/CD pipelines are fast, developers iterate quickly. When documentation is current, new features get built without tribal knowledge hunts. When code review is responsive, pull requests don't sit in limbo for days.

Research from DX, Jellyfish, Uplevel, and other engineering-analytics platforms consistently reports that teams with better DevEx metrics ship faster with fewer defects.

Recruiting

Top developers choose employers partly based on DevEx signals. They ask in interviews: "What's your deployment process? How long does CI take? What tools do you use?" Companies with strong DevEx attract better candidates.

Innovation

When developers aren't fighting their environment, they have cognitive bandwidth for creative problem-solving. The companies that produce the most innovative software tend to be the ones that obsess over their developers' daily experience.

The Three Dimensions of DevEx

A useful framework for understanding DevEx comes from the 2023 ACM Queue paper "DevEx: What Actually Drives Productivity" by Abi Noda, Margaret-Anne Storey, Nicole Forsgren, and Michaela Greiler. They identified three core dimensions:

1. Flow State

Can developers achieve and maintain deep focus? Flow state — the psychological state of complete immersion in a task — is where the highest-quality work happens.

What breaks flow: Frequent meetings, Slack interruptions, slow builds, unclear requirements, context-switching between unrelated tasks.

What enables flow: Protected focus time, fast feedback loops (build, test, deploy), clear task definitions, minimal process overhead.

2. Feedback Loops

How quickly do developers get feedback on their work? Fast feedback loops mean developers can iterate rapidly. Slow loops mean they wait, lose context, and switch to other tasks.

Key feedback loops:

- Build time: How long from code change to seeing results? (Seconds vs. minutes vs. hours)

- Test execution: How quickly do tests run? Can developers run them locally?

- Code review: How long from PR submission to first review? (Hours vs. days)

- Deployment: How quickly can a change reach production? (Minutes vs. weeks)
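Code review latency is one of the easier loops to quantify. The sketch below assumes you can export the time each pull request was opened and the time of its first review. The tuple layout and sample data are hypothetical, so map them from whatever your Git host provides. It reports the median rather than the mean because a few stalled PRs can distort an average.

```python
from datetime import datetime, timedelta
from statistics import median


def hours_to_first_review(prs):
    """Review latency in hours for each PR that has received a first review.

    prs: iterable of (pr_id, opened_at, first_review_at) tuples, where
    first_review_at is None while the PR is still waiting.
    """
    latencies = []
    for _pr_id, opened_at, first_review_at in prs:
        if first_review_at is None:
            continue  # still waiting; count these separately if needed
        latencies.append((first_review_at - opened_at) / timedelta(hours=1))
    return latencies


def summarize(latencies):
    """Median, maximum, and count of review latencies (None if there are none)."""
    if not latencies:
        return None
    return {"median_h": median(latencies), "max_h": max(latencies), "count": len(latencies)}


# Hypothetical sample data
prs = [
    ("101", datetime(2024, 5, 6, 9), datetime(2024, 5, 6, 13)),   # 4 h
    ("102", datetime(2024, 5, 6, 10), datetime(2024, 5, 8, 10)),  # 48 h
    ("103", datetime(2024, 5, 7, 15), None),                      # not reviewed yet
]
print(summarize(hours_to_first_review(prs)))
```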

3. Cognitive Load

How much mental overhead does the development process impose? High cognitive load means developers spend mental energy on things unrelated to the actual problem they're solving.

Sources of cognitive load: Complex configurations, undocumented tribal knowledge, unclear ownership boundaries, too many tools and platforms, inconsistent processes across teams.

How to Measure Developer Experience

This is where it gets practical. DevEx has both subjective and objective components, and measuring it well requires both.

Subjective Measures: Surveys

Surveys capture how developers feel about their experience. This matters because perception drives behavior — a developer who perceives their environment as frustrating will be less engaged, regardless of objective metrics.

Effective DevEx survey questions:

- "On a scale of 1-10, how easy is it to get your work done in our current environment?"

- "What is the single biggest time-waster in your daily workflow?"

- "How often do you achieve a state of deep focus during the workday?" (Never / Rarely / Sometimes / Often / Daily)

- "How satisfied are you with our development tools and infrastructure?"

- "How likely are you to recommend our engineering environment to a friend?" (0-10 scale, scored as Developer NPS)
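If the recommendation question is asked on the usual 0-10 Net Promoter scale, standard NPS scoring applies. Respondents who answer 9-10 are promoters, those who answer 0-6 are detractors, and the score is the percentage of promoters minus the percentage of detractors. Here is a minimal sketch with made-up responses:

```python
def developer_nps(scores):
    """Net Promoter Score from 0-10 answers: % promoters (9-10) minus % detractors (0-6)."""
    if not scores:
        raise ValueError("no responses")
    if any(not 0 <= s <= 10 for s in scores):
        raise ValueError("scores must be on a 0-10 scale")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


# Hypothetical responses: 4 promoters, 3 passives (7-8), 3 detractors
responses = [10, 9, 8, 7, 6, 3, 9, 10, 5, 8]
print(developer_nps(responses))
```

The score ranges from -100 to +100. Passives (7-8) count toward the total but not toward either group.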

Survey best practices:

- Run quarterly, not annually (things change fast)

- Keep it under 10 questions

- Make it anonymous

- Share results transparently with the team

- Act on at least one finding per quarter
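A common way to keep anonymous results anonymous in practice is to suppress breakdowns for groups too small to hide an individual. The sketch below assumes responses are stored as bare (team, score) pairs with no respondent IDs. The minimum group size of 5 is a hypothetical threshold, not a standard.

```python
from collections import defaultdict
from statistics import mean

MIN_GROUP_SIZE = 5  # hypothetical threshold; agree on one with your team


def team_averages(responses, min_group_size=MIN_GROUP_SIZE):
    """Average score per team, hiding teams with too few responses to stay anonymous.

    responses: iterable of (team, score) pairs with no respondent identifiers.
    """
    by_team = defaultdict(list)
    for team, score in responses:
        by_team[team].append(score)
    report = {}
    for team, scores in by_team.items():
        if len(scores) < min_group_size:
            report[team] = None  # suppressed: too few responses to report safely
        else:
            report[team] = round(mean(scores), 1)
    return report


sample = [("api", 7)] * 3 + [("web", s) for s in (6, 7, 8, 9, 10)]
print(team_averages(sample))
```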

Objective Measures: Activity Data

Surveys tell you how people feel. Activity data tells you what's actually happening. Both are needed.

PanDev Metrics provides several objective DevEx indicators:

1. Total Coding Hours

How much time do developers actually spend coding? Our data from 100+ B2B companies with thousands of tracked coding hours shows wide variation in coding time between teams. Teams with better DevEx tend to have higher coding-to-meeting ratios.
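Once you have both numbers for the same period, the coding-to-meeting ratio is simple division. This sketch assumes meeting hours come from a calendar export and coding hours from your activity tracker. The team names and figures are hypothetical.

```python
def coding_to_meeting_ratio(coding_hours: float, meeting_hours: float) -> float:
    """Hours of coding per hour of meetings over the same period."""
    if meeting_hours <= 0:
        return float("inf")  # no meetings recorded in the period
    return coding_hours / meeting_hours


# Hypothetical weekly averages per developer: (coding hours, meeting hours)
weekly = {"platform": (22.0, 8.0), "mobile": (12.0, 15.0)}
for team, (coding, meetings) in weekly.items():
    print(f"{team}: {coding_to_meeting_ratio(coding, meetings):.2f}")
```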

2. Session Length

Average uninterrupted coding session length is a proxy for flow state. Longer sessions suggest fewer interruptions. If your team's average session length is declining, something is breaking their focus.
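Session length depends on how you define a session. One common approach, sketched here, sorts activity timestamps and starts a new session whenever the gap since the previous event exceeds an idle cutoff. The 15-minute `IDLE_GAP` is a hypothetical choice, and this is not necessarily how PanDev Metrics computes sessions.

```python
from datetime import datetime, timedelta

IDLE_GAP = timedelta(minutes=15)  # hypothetical: a longer pause ends the session


def sessions(timestamps, idle_gap=IDLE_GAP):
    """Group activity timestamps into (start, end) sessions, splitting at idle gaps."""
    result = []
    for ts in sorted(timestamps):
        if result and ts - result[-1][1] <= idle_gap:
            result[-1] = (result[-1][0], ts)  # extend the current session
        else:
            result.append((ts, ts))  # start a new session
    return result


def average_session_minutes(timestamps, idle_gap=IDLE_GAP):
    """Mean session duration in minutes (0.0 if there is no activity)."""
    spans = sessions(timestamps, idle_gap)
    if not spans:
        return 0.0
    total = sum((end - start for start, end in spans), timedelta())
    return total / len(spans) / timedelta(minutes=1)


# Hypothetical activity: 09:00-09:30, then a break, then 10:30-10:40
start = datetime(2024, 5, 7, 9, 0)
events = [start + timedelta(minutes=m) for m in (0, 10, 20, 30, 90, 100)]
print(average_session_minutes(events))
```

Tracking this average weekly, rather than per day, smooths out one-off interruptions so a sustained decline stands out.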

3. Weekly Patterns

In our data, healthy teams consistently peak on Tuesday, keep a reasonable Friday, and log minimal weekend activity. An unhealthy pattern is flat or increasing weekend activity, which suggests the weekday environment isn't conducive to getting work done.
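To check your own team against this pattern, total the coding hours by weekday and track the weekend share over time. The sketch assumes a hypothetical input of daily coding hours keyed by date. The sample week is invented.

```python
from collections import Counter
from datetime import date

WEEKDAYS = ["Mon", "Tue", "Wed", "Thu", "Fri", "Sat", "Sun"]


def weekly_profile(daily_hours):
    """Sum coding hours by weekday. daily_hours maps datetime.date -> hours."""
    totals = Counter()
    for day, hours in daily_hours.items():
        totals[WEEKDAYS[day.weekday()]] += hours
    return totals


def weekend_share(totals):
    """Fraction of all coding hours logged on Saturday and Sunday."""
    all_hours = sum(totals.values())
    return (totals["Sat"] + totals["Sun"]) / all_hours if all_hours else 0.0


# Hypothetical week starting Monday 2024-05-06
sample = {date(2024, 5, 6 + i): h for i, h in enumerate([5, 7, 6, 6, 4, 1, 1])}
profile = weekly_profile(sample)
print("peak day:", max(profile, key=profile.get))
print(f"weekend share: {weekend_share(profile):.1%}")
```

A single week is noisy. Comparing monthly weekend shares makes a rising trend easier to spot.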

4. Language and Tool Distribution

Tracking which languages and IDEs developers use reveals whether the organization is investing in the right tooling for the stack it actually uses. We track 236 languages and tools; among IDEs, VS Code accounts for 3,057 hours, IntelliJ for 2,229 hours, and Cursor for 1,213 hours.
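One quick way to read those editor figures is as shares of their combined total. Note that this sketch covers only the three editors named above, so the percentages are shares among them, not of all tracked time.

```python
def hour_shares(hours_by_tool):
    """Each tool's share of the listed hours, as a percentage."""
    total = sum(hours_by_tool.values())
    if total == 0:
        raise ValueError("no hours recorded")
    return {tool: 100 * hours / total for tool, hours in hours_by_tool.items()}


ide_hours = {"VS Code": 3057, "IntelliJ": 2229, "Cursor": 1213}
for tool, pct in sorted(hour_shares(ide_hours).items(), key=lambda kv: -kv[1]):
    print(f"{tool:10s} {pct:5.1f}%")
```

Among these three, VS Code holds roughly 47%, IntelliJ about 34%, and Cursor about 19%. That split is a reasonable starting point when deciding where licenses, plugins, and editor configuration support will pay off.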

5. Onboarding Ramp-Up

How quickly new developers reach full productivity is a direct DevEx metric. Faster ramp-up usually points to better documentation, cleaner code, and more effective onboarding processes.
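Ramp-up needs an operational definition before you can track it. One possible definition, sketched below, is the first week in which a new hire's weekly coding hours reach a target fraction of the team median and stay there for a few consecutive weeks. The 80% target, the two-week `sustain` window, and the sample data are all hypothetical, and coding hours are only one proxy for productivity.

```python
from statistics import median


def ramp_up_weeks(new_hire_weekly_hours, team_weekly_hours, target=0.8, sustain=2):
    """Week number at which a new hire first reaches `target` x the team median
    and holds it for `sustain` consecutive weeks. Returns None if not reached.

    Both defaults are hypothetical, not an established standard.
    """
    threshold = target * median(team_weekly_hours)
    streak = 0
    for week, hours in enumerate(new_hire_weekly_hours, start=1):
        streak = streak + 1 if hours >= threshold else 0
        if streak == sustain:
            return week - sustain + 1  # first week of the sustained run
    return None


team = [20, 22, 18, 25, 21]          # hypothetical weekly hours; median 21
new_hire = [4, 8, 12, 17, 15, 18, 19]
print(ramp_up_weeks(new_hire, team))
```

Requiring a sustained run keeps a single strong week from being counted as full ramp-up.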

Combining Subjective and Objective

The most powerful insights come from combining both:

- Survey says developers feel they can't focus → Activity data confirms declining session lengths

- Survey says tools are frustrating → Activity data shows time wasted on slow builds or context switches

- Survey says everything is fine → Activity data shows weekend work increasing (developers might not recognize their own burnout signals)

When subjective and objective data align, you have a strong signal. When they diverge, you have an interesting investigation to pursue.
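You can make the align-or-diverge check explicit by comparing the direction of change in a survey score with the direction of change in a related activity metric. In this sketch, both metrics must be oriented so that higher means better. For weekend activity, for example, you would use one minus the weekend share. The 5% tolerance for calling a change "flat" is a hypothetical choice.

```python
def direction(before: float, after: float, tolerance: float = 0.05) -> int:
    """+1 if `after` rose more than `tolerance` (relative), -1 if it fell, else 0."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    change = (after - before) / abs(before)
    if change > tolerance:
        return 1
    if change < -tolerance:
        return -1
    return 0


def compare(survey, activity, tolerance=0.05):
    """survey, activity: (before, after) pairs, each oriented so higher = better."""
    s = direction(*survey, tolerance)
    a = direction(*activity, tolerance)
    return "aligned" if s == a else "diverging"


# Focus score 6.8 -> 5.9 and average session 42 -> 31 minutes: both worse
print(compare((6.8, 5.9), (42, 31)))
# Survey flat, but 1 - weekend share fell from 0.96 to 0.88
print(compare((7.5, 7.6), (0.96, 0.88)))
```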

Building a DevEx Measurement Program

Step 1: Establish Baselines

Before you can improve, you need to know where you are. Deploy both:

- A quarterly DevEx survey (start with 5-7 questions)

- Activity tracking via PanDev Metrics (captures objective data automatically)

Collect 2-3 months of baseline data before setting improvement targets.
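A baseline is more useful when you know how much week-to-week noise it contains, so ordinary variation isn't mistaken for a trend. Here is a minimal sketch, assuming a list of weekly values for one metric and a hypothetical two-standard-deviation rule for flagging unusual weeks:

```python
from statistics import mean, stdev


def baseline(values):
    """Mean and sample standard deviation over the baseline period (needs >= 2 points)."""
    if len(values) < 2:
        raise ValueError("need at least two baseline points")
    return mean(values), stdev(values)


def outside_baseline(value, base_mean, base_sd, k=2.0):
    """True if `value` is more than k standard deviations from the baseline mean."""
    return abs(value - base_mean) > k * base_sd


# Hypothetical: ten weeks of average session length in minutes
weeks = [40, 42, 38, 41, 39, 43, 40, 37, 41, 39]
m, sd = baseline(weeks)
print(f"baseline {m:.1f} +/- {sd:.2f} min")
print(outside_baseline(31, m, sd), outside_baseline(42, m, sd))
```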

Step 2: Identify the Biggest Pain Points

Combine survey responses with activity data to find the highest-impact areas. Common findings:

| Survey Signal | Activity Signal | Likely Issue |
|---|---|---|
| "Too many meetings" | Short, fragmented sessions | Meeting overload |
| "Slow CI/CD" | Long gaps between code changes | Build/deploy bottleneck |
| "Hard to find information" | Long ramp-up for new hires | Documentation gap |
| "Weekend stress" | Increasing weekend activity | Scope or staffing issue |

Step 3: Prioritize and Act

Pick 1-2 issues per quarter. Don't try to fix everything at once. Each improvement should be:

- Specific ("Reduce average PR review time from 48h to 24h" not "improve code review")

- Measurable (using the metrics you've established)

- Time-bound (one quarter to show improvement)

Step 4: Measure the Impact

After implementing changes, compare post-intervention metrics to baselines:

- Did survey scores improve on the targeted dimension?

- Did activity data shift in the expected direction?

- Do developers feel the improvement?
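For a target like the PR review example in Step 3, the check reduces to a percent change against the baseline plus a pass/fail comparison against the target. The post-intervention figure below is hypothetical.

```python
def percent_change(baseline: float, current: float) -> float:
    """Relative change from the baseline, in percent (negative means a decrease)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return 100 * (current - baseline) / baseline


def target_met(current: float, target: float, lower_is_better: bool = True) -> bool:
    """Whether the current value has reached the target."""
    return current <= target if lower_is_better else current >= target


baseline_h, target_h = 48.0, 24.0  # from the Step 3 example
current_h = 30.0                   # hypothetical value after one quarter
print(f"change: {percent_change(baseline_h, current_h):+.1f}%")
print("target met:", target_met(current_h, target_h))
```

In this example, review time fell 37.5% but missed the 24h target. That is still a result worth sharing with the team.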

Share results with the team. Seeing that their feedback led to concrete improvements builds trust in the measurement program.

Step 5: Iterate

DevEx is not a project with an end date. It's an ongoing practice. Each quarter: measure, prioritize, improve, validate. Over time, your DevEx measurement program becomes a core competency that differentiates your engineering organization.

Common Mistakes in DevEx Measurement

Mistake 1: Measuring Only Speed

DevEx isn't just about shipping faster. A team that ships quickly but burns out, produces bugs, and has high turnover has bad DevEx despite good velocity metrics.

Mistake 2: Surveying Without Acting

If you survey developers and don't act on the results, you've made things worse. You've demonstrated that their feedback doesn't matter. Either commit to acting on survey results or don't survey.

Mistake 3: Over-Indexing on Tools

Buying new tools is the easiest DevEx intervention and often the least impactful. Process changes, cultural shifts, and organizational redesigns are harder but more valuable. Don't throw tools at a culture problem.

Mistake 4: Ignoring Individual Variation

DevEx is personal. What one developer considers a great experience (quiet, autonomous, async) might be another developer's nightmare (isolated, unsupported, disconnected). Measure at the individual level and look for patterns, but don't assume one-size-fits-all.

Conclusion

Developer Experience is measurable, improvable, and directly linked to business outcomes. It's not a luxury — it's a competitive advantage.

The organizations that measure DevEx systematically — combining survey data with objective activity metrics — can identify and fix problems before they become retention crises, productivity drains, or recruiting disadvantages.

Start measuring. Start improving. Your developers (and your business results) will thank you.

Measure Developer Experience with real data. PanDev Metrics provides objective activity metrics — coding hours, session patterns, tool usage — that complement your DevEx surveys with hard numbers.