Documentation ROI: When to Write, When to Skip

A senior engineer at a fintech client spent 3.5 hours writing a runbook for a deploy process she hoped no one would ever run manually. Eight months later, it saved a junior on-call engineer roughly 4 hours at 2 a.m. on a bank holiday. On that single use, the doc paid back its writing time plus about 14% (4 hours saved against 3.5 spent). A peer doc written the same week, a 6-page architectural overview of a system being deprecated, has never been opened by anyone, according to the wiki logs. Same team, same hours, wildly different ROI.

Documentation is not free, and it is not infinitely valuable. The engineering conversation is usually framed as "we need more docs" or "docs are always stale" — both true at once, which is the clue. The actual question is: which docs pay back, how fast, and when writing them is worse than admitting the knowledge is tacit. This is a framework for making that call before committing the hours.

{/* truncate */}

The problem: docs have a cost, and it's not zero

A thoughtful doc takes 2-8 hours of senior engineering time. At a fully loaded US cost of $120k a year (about $58 an hour, assuming roughly 2,080 working hours a year), that's roughly $120-500 per doc. Multiply across a team of 30 engineers, each writing 5-10 docs a year, and you're at $18k-150k annually on documentation alone. That cost is invisible on most budgets because it comes out of engineering time rather than a line item.
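The arithmetic behind those ranges can be checked with a short sketch. The 2,080-hour working year and the function names are assumptions made here for illustration; the article only gives the $120k figure and the resulting ranges.

```python
# Assumption: 2,080 working hours per year (40 h x 52 weeks).
HOURS_PER_YEAR = 2080


def doc_cost(hours: float, annual_cost: float = 120_000) -> float:
    """Dollar cost of writing one doc at a fully loaded annual cost."""
    return hours * annual_cost / HOURS_PER_YEAR


def team_annual_cost(engineers: int, docs_per_engineer: int, cost_per_doc: float) -> float:
    """Yearly documentation spend for a whole team."""
    return engineers * docs_per_engineer * cost_per_doc


# Per-doc range for 2 to 8 hours of writing (the article rounds to $120-500).
print(round(doc_cost(2)), round(doc_cost(8)))

# Team range using the article's rounded per-doc figures.
print(team_annual_cost(30, 5, 120), team_annual_cost(30, 10, 500))
```

The unrounded per-doc figures come out slightly below the article's $120-500, which is why the range is best read as an order-of-magnitude estimate.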

Write Docs Day Foundation's 2024 practitioner survey (Valentine Reid, lead author) found the median enterprise doc has a read-to-write ratio of 4.2 — each doc is read just over 4 times before going stale. That's not 4× ROI; it's the raw opening count. Most reads are skim-and-close; the effective "information transferred" multiple is lower. Not all docs are the same: the same survey found runbooks average 11 reads and architectural docs 1.8 reads before staleness. Topic predicts value more than writing quality.

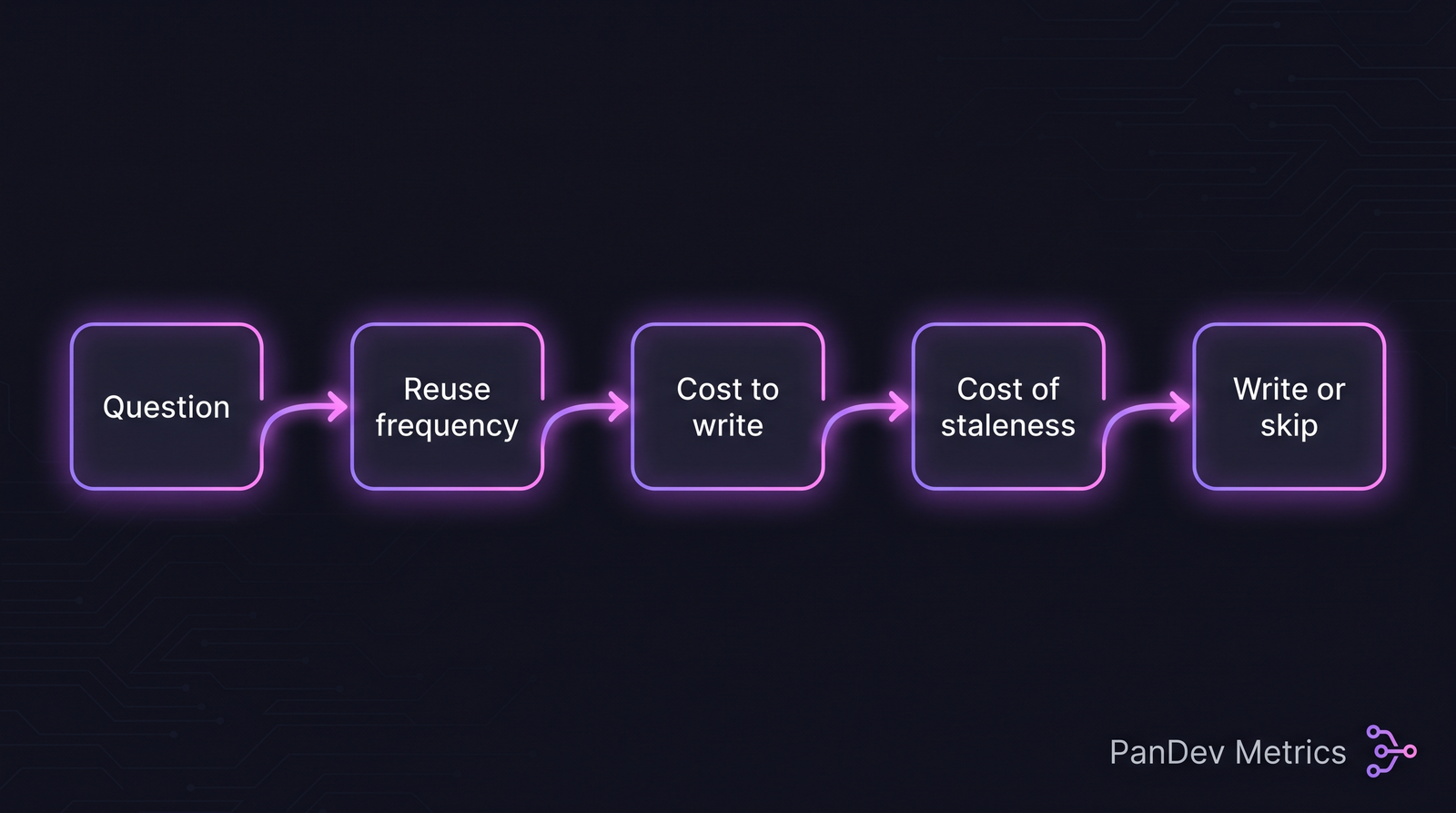

The five-step decision. Most "should we write this?" arguments skip step 3 (cost to write) and step 4 (cost of staleness).


The three classes of documentation (different economics)

Class A — Runbooks and operational docs. High reuse, specific value per read. Saves hours during incidents. Best ROI.

Class B — Architectural and design docs. Moderate reuse, high value per read when consulted. Often over-produced relative to actual consultation.

Class C — Process and onboarding docs. Bursty reuse (new hires hit them in month 1, then rarely). Good ROI if kept tight.

The failure mode: teams invest Class B effort (8-hour architectural deep-dives) when the actual need was Class A (a 30-minute runbook). Worse, they invest Class B effort on systems that get deprecated in 12 months, making the doc dead before it's read.

A concrete ROI formula

For any proposed doc, compute:

ROI = (expected_reads × hours_saved_per_read) / (write_cost + decay_cost)

Where:

- expected_reads = how many times this will be opened in 18 months (realistic, not hopeful)

- hours_saved_per_read = time-saving vs figuring it out from code or asking a colleague (typical: 0.25-2 hours)

- write_cost = senior engineer hours to write it well

- decay_cost = hours per quarter to keep it fresh × quarters expected useful
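As a minimal sketch, the formula and its four inputs translate directly into a small function. The function and parameter names are illustrative choices, not part of any tool; the numbers in the usage line are the deploy-runbook figures used in this article.

```python
def doc_roi(
    expected_reads: float,
    hours_saved_per_read: float,
    write_cost: float,
    decay_hours_per_quarter: float,
    quarters_useful: float,
) -> float:
    """ROI = (expected_reads * hours_saved_per_read) / (write_cost + decay_cost)."""
    decay_cost = decay_hours_per_quarter * quarters_useful
    total_cost = write_cost + decay_cost
    if total_cost <= 0:
        raise ValueError("write_cost + decay_cost must be positive")
    return (expected_reads * hours_saved_per_read) / total_cost


# Deploy runbook: 20 reads, 1.5 h saved each, 3 h to write, 0.5 h/quarter for 6 quarters.
print(doc_roi(20, 1.5, 3, 0.5, 6))
```

Keeping decay as two separate inputs (hours per quarter and quarters of useful life) makes the maintenance assumption visible instead of hiding it inside a single number.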

Example A — Deploy runbook:

- Expected reads: 20 over 18 months

- Hours saved per read: 1.5

- Write cost: 3 hours

- Decay: 0.5 hr/q × 6 = 3 hours

- ROI = (20 × 1.5) / (3 + 3) = 5.0 — write it

Example B — Architecture doc for system being deprecated:

- Expected reads: 3

- Hours saved per read: 2

- Write cost: 8 hours

- Decay: 1 hr/q × 2 = 2 hours

- ROI = (3 × 2) / (8 + 2) = 0.6 — skip or defer

Example C — Onboarding guide for a new framework:

- Expected reads: 15 (new hires + cross-team)

- Hours saved per read: 0.5

- Write cost: 4 hours

- Decay: 0.5 hr/q × 4 = 2 hours

- ROI = (15 × 0.5) / (4 + 2) = 1.25 — marginal; write only if no simpler alternative

The threshold: ROI > 2.0 means write. ROI 1.0-2.0 means consider the alternatives (README, inline comment, Loom video). ROI < 1.0 means skip.
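The thresholds can be sketched as a small classifier and applied to the three examples above. How to treat a score of exactly 1.0 or 2.0 is an assumption made here (both fall in the "consider alternatives" band); the function name and labels are illustrative.

```python
def recommend(roi: float) -> str:
    """Map an ROI score to the article's three bands."""
    if roi > 2.0:
        return "write"
    if roi >= 1.0:  # assumption: the 1.0 and 2.0 boundaries count as "consider"
        return "consider alternatives"
    return "skip"


# (reads, hours saved per read, write cost, decay cost) for Examples A, B, C.
examples = {
    "deploy runbook": (20, 1.5, 3, 3),
    "deprecated-system architecture doc": (3, 2, 8, 2),
    "onboarding guide": (15, 0.5, 4, 2),
}

for name, (reads, saved, write_cost, decay_cost) in examples.items():
    roi = (reads * saved) / (write_cost + decay_cost)
    print(f"{name}: ROI {roi:.2f} -> {recommend(roi)}")
```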

The decay cost is what everyone underestimates

Docs are not write-once. A doc that isn't maintained becomes actively harmful within 6-18 months — new hires trust stale docs, follow broken instructions, and burn more time than they would have without the doc. GitLab's 2023 Handbook postmortem (published internally, portions shared publicly) found 37% of their "how do I" internal searches returned a doc more than 18 months old, and roughly a quarter of those had at least one materially wrong instruction.

Maintenance rate estimate per doc class:

| Class | Maintenance cost/quarter | Staleness horizon |
|---|---|---|
| Runbook (operational) | 0.5-1 hr | 6 months if system changes |
| Architecture | 1-2 hr | 12 months |
| Onboarding | 0.5 hr | 6 months for tooling, 12 for process |
| Reference (API, config) | Automate or don't write | Decays fastest; auto-generate |

Insight: reference docs (API, config) should almost never be hand-written. Auto-generate from code or schema; the hand-written layer is only the "why" on top. A team writing and maintaining API reference by hand is accumulating decay cost with zero upside vs generation.
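To make "auto-generate from code or schema" concrete, here is a minimal sketch that renders a config reference table from a Python dataclass. `ServiceConfig`, its fields, and the `doc` metadata key are hypothetical, invented for this example; real projects would more likely use an existing generator for their stack.

```python
from dataclasses import dataclass, field, fields


@dataclass
class ServiceConfig:
    """Hypothetical config schema, used only for illustration."""

    port: int = field(default=8080, metadata={"doc": "TCP port the service listens on"})
    timeout_s: float = field(default=30.0, metadata={"doc": "Request timeout in seconds"})
    debug: bool = field(default=False, metadata={"doc": "Enable verbose logging"})


def config_reference(cls) -> str:
    """Render a Markdown table of settings straight from the dataclass definition."""
    rows = ["| Setting | Type | Default | Description |", "|---|---|---|---|"]
    for f in fields(cls):
        type_name = getattr(f.type, "__name__", str(f.type))
        description = f.metadata.get("doc", "")
        rows.append(f"| {f.name} | {type_name} | {f.default!r} | {description} |")
    return "\n".join(rows)


print(config_reference(ServiceConfig))
```

Because the table is derived from the same definition the code runs on, a renamed field or changed default shows up in the reference on the next build, with no maintenance hours attached.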

The 4-part pre-write check

Before committing an afternoon to a doc, ask:

1. Who will read this, and when?

- Specific roles (on-call engineer, new backend hire, interviewing PM)

- Specific triggers (during incident, during onboarding, during design review)

- If the answer is "anyone, sometime" — skip or radically shorten.

2. What's the alternative cost of not having it?

- A Slack question that gets answered in 5 minutes is fine.

- A Slack question that pings three senior people and derails a feature — not fine.

- The doc pays for itself against the alternative, not against zero.

3. Can this be a 5-line README or a Loom video instead?

- README.md at the repo root beats a 5-page wiki 80% of the time.

- A 10-minute Loom screencast beats a written onboarding guide for visual processes.

- The "best" format is the lowest-friction one the reader will actually use.

4. Who owns it?

- A doc without a named owner ages to uselessness within a year.

- If the honest answer is "I'll write it and then nobody will maintain it" — skip.
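The four questions above can be sketched as a checklist that returns the reasons not to write. The field names and the 5-minute cutoff are assumptions for illustration; the cutoff borrows the Slack example from question 2 and is not a rule.

```python
from dataclasses import dataclass

# Assumption: a question answered in 5 minutes or less is "fine" (from the Slack example).
CHEAP_QUESTION_MINUTES = 5


@dataclass
class DocProposal:
    reader: str                      # specific role, e.g. "on-call engineer"
    trigger: str                     # specific moment, e.g. "during incident"
    minutes_lost_without_doc: float  # total people-time per occurrence
    lighter_format_works: bool       # a short README or screencast would do
    owner: str                       # named maintainer, "" if none


def pre_write_blockers(p: DocProposal) -> list[str]:
    """Return the checks this proposal fails; an empty list means go ahead."""
    blockers = []
    if not p.reader.strip() or not p.trigger.strip():
        blockers.append("no specific reader or trigger")
    if p.minutes_lost_without_doc <= CHEAP_QUESTION_MINUTES:
        blockers.append("not having the doc is cheap")
    if p.lighter_format_works:
        blockers.append("a README or screencast would do")
    if not p.owner.strip():
        blockers.append("no named owner")
    return blockers


runbook = DocProposal("on-call engineer", "during incident", 90, False, "platform lead")
print(pre_write_blockers(runbook))
```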

Template prompts for when to write vs skip

A copy-paste policy any team can adopt:

Write it:

- Any procedure that loses knowledge when one person leaves

- Any incident runbook for a system with >3 on-call engineers

- Any onboarding doc where the same question is asked 5+ times

- Any architectural decision that will be questioned in 6 months ("why did we pick X?")

Don't write it:

- Anything that can be auto-generated from code or schema

- Any explanation that needs to be rewritten on every release

- Any "comprehensive guide" to a system being deprecated within 18 months

- Any doc for which the answer is "just read the code" and the code is <200 lines

Common mistakes

- Writing Class B effort on Class A problems. "Let me write a comprehensive architectural overview" when a 2-paragraph runbook would do.

- No named owner. Everyone's doc is nobody's doc. A named owner reviewing quarterly is the single most predictive variable for doc freshness.

- Writing instead of fixing. "This system is confusing, let me write a doc" — often the system is broken; the doc papers over the real fix.

- Duplicate docs. Three pages titled "Staging Auth" in three locations. Worse than no doc, because readers can't trust any of them.

- Docs as performance theater. Writing docs to signal effort, not to transfer knowledge. Easy to spot in the reads-per-doc metric.

How to measure whether your doc investment is paying off

Three numbers your wiki tool probably gives you but you haven't checked:

| Metric | Healthy | Warning |
|---|---|---|
| Docs read at least 3× in 90 days after creation | >60% | <40% |
| Median age of most-read docs | <12 months | >18 months |
| Time-to-first-answer for new hires (pre-agreed 10 questions) | Trending down | Flat or up |
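The first metric in the table can be computed from a plain export of creation and read dates. This is a sketch under assumptions: the input shape (doc id mapped to a creation date and a list of read dates) is hypothetical, wiki tools export analytics in different formats, and the label for the band between 40% and 60% is invented here because the table does not name it.

```python
from datetime import date, timedelta


def share_read_3x_in_90_days(docs: dict) -> float:
    """Fraction of docs read at least 3 times within 90 days of creation.

    docs maps doc id -> (created, [read dates]). Pass only docs that are
    already at least 90 days old, or young docs will drag the share down.
    """
    if not docs:
        return 0.0
    window = timedelta(days=90)
    qualifying = 0
    for created, reads in docs.values():
        early_reads = sum(1 for r in reads if created <= r <= created + window)
        if early_reads >= 3:
            qualifying += 1
    return qualifying / len(docs)


def health(share: float) -> str:
    """Apply the table's thresholds; the middle label is an assumption."""
    if share > 0.60:
        return "healthy"
    if share < 0.40:
        return "warning"
    return "in between"


docs = {
    "deploy-runbook": (date(2024, 1, 10), [date(2024, 1, 12), date(2024, 2, 1), date(2024, 3, 5)]),
    "arch-overview": (date(2024, 1, 10), [date(2024, 6, 1)]),
}
share = share_read_3x_in_90_days(docs)
print(share, health(share))
```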

We wrote about this in more depth in our knowledge management comparison — the tool choice matters less than the ownership discipline. Tracking time-to-first-answer is the highest-signal metric most teams never measure.

How PanDev Metrics fits the doc-economics story

Three applications:

Onboarding ramp correlation. We measure time-to-meaningful-PR during developer onboarding. Teams with better-maintained docs show 20-30% faster ramp on the same complexity of codebase. That's measurable.

Doc-write time attribution. Our IDE-heartbeat data distinguishes coding time from non-coding (editor, browser, tooling). Technical writing in Markdown files shows up as "coding-like" activity — we can estimate how many hours a team spends writing docs per month and compare to the reader numbers.

Staleness signal from code churn. If a code module is changing weekly but the associated doc hasn't been edited in 9 months, the doc is likely stale. We can surface "likely-stale" doc lists by correlating code churn with doc last-edited timestamps.
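The churn-versus-edit-date idea can be sketched in a few lines. This is an illustration of the heuristic, not PanDev Metrics' implementation: the function name, the 28-day window, the 4-commit minimum (standing in for "changing weekly"), and the 270-day cutoff (standing in for "9 months") are all assumptions.

```python
from datetime import date, timedelta


def likely_stale(
    doc_last_edited: date,
    commit_dates: list,
    today: date,
    churn_window_days: int = 28,  # assumption: look at the last 4 weeks
    min_commits: int = 4,         # assumption: roughly one change per week
    doc_age_days: int = 270,      # assumption: about 9 months
) -> bool:
    """Flag a doc whose code is churning while the doc itself sits untouched."""
    window = timedelta(days=churn_window_days)
    recent_commits = [c for c in commit_dates if c <= today and today - c <= window]
    doc_is_old = (today - doc_last_edited) > timedelta(days=doc_age_days)
    return len(recent_commits) >= min_commits and doc_is_old


today = date(2024, 10, 1)
commits = [date(2024, 9, 5), date(2024, 9, 12), date(2024, 9, 19), date(2024, 9, 26)]

print(likely_stale(date(2023, 11, 1), commits, today))  # doc untouched for 11 months
print(likely_stale(date(2024, 8, 1), commits, today))   # doc edited 2 months ago
```

In practice the commit dates would come from version control history for the module and the edit date from the wiki or the doc file's own history; both sources are left out here to keep the sketch self-contained.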

This is adjacent to the broader engineering-cost question covered in cost per feature — docs are part of the hidden cost envelope most teams don't account for.

The honest limit

Our data sees code and IDE activity; it doesn't see inside wikis or Confluence. The read-count numbers in this article come from Write Docs Day Foundation's published research, GitLab's postmortem, and three of our customers who voluntarily shared wiki analytics to help us validate the framework. We don't have a statistically robust sample on read-to-write ratios; the framework is directionally honest, not a claim of precision.

Second limit: ROI formulas give false precision. A doc's expected read count is a guess, not a measurement. The formula's value is that it forces the team to state its assumptions out loud, not that it produces a reliable score.

The sharpest claim

Documentation is an engineering cost that deserves the same ROI analysis as any other investment. Teams that write reflexively ("we should document this") accumulate staleness faster than they accumulate value. Teams that write selectively ("this doc will be opened 20 times and save 30 hours") build a compounding asset. The difference over 3 years is not small; it's whether your wiki is a tool or a graveyard.

Related reading

- Knowledge Management for Dev Teams — the tool comparison complement

- New Developer Onboarding Ramp — where good docs pay back most visibly

- Cost Per Feature: Calculating Engineering ROI — the broader cost-attribution framework

- External: GitLab Handbook — docs-as-code at scale, publicly available

- External: Write the Docs Community — practitioner research on doc economics