Engineering Offsites: ROI Analysis and Planning Guide

A VP of Engineering told me the number that hurts: "We spent $140,000 on an offsite in Bali in Q1. By Q3, nobody on the team remembered a single decision we made there." A 40-person engineering offsite routinely costs $80-200K in direct spend (travel, venue, food, activities) plus 200-320 engineer-days of displaced work. The Gallup 2023 Workplace Report documents that only 29% of companies can articulate a measurable outcome from their last offsite.

The default failure isn't venue or agenda — it's that the offsite was scheduled as a cultural ritual with outcomes defined after the fact. Flipping that order changes the ROI by an order of magnitude. The framework below is how the engineering leaders with repeatable-ROI offsites plan them, and it works across the three formats that produce measurable results: hackathons, strategy sprints, and team-bonding events. Each format has different economics.

{/* truncate */}

The problem: offsites are outcome-absent by default

A typical offsite planning process:

- Someone decides it's time for an offsite

- A venue is booked based on geographic halfway point and aesthetics

- An agenda is filled with "team-building exercises" and "strategy discussions"

- People attend, feel mildly refreshed

- Work resumes Monday at the same pace, same backlog, same problems

The process optimizes for vibes, not outcomes. Offsites that produce durable results invert this sequence: outcome first, then format, then venue, then agenda.

The distinction matters because the three healthy offsite formats have fundamentally different structures:

| Format | Primary outcome | Typical duration | Success signal |
|---|---|---|---|
| Hackathon | Shippable prototype + priorities validation | 2-3 days | Projects that merge to main within 30 days |
| Strategy sprint | Decisions made, written down, assigned | 2-4 days | Assigned decisions in Jira/ClickUp within 1 week |
| Team bonding | Trust reconstitution after growth / restructure | 3-5 days | Reduced escalation frequency over next quarter |

Mixing formats is the most common mistake. A "hackathon + strategy + bonding" 4-day event produces a shallow version of all three.

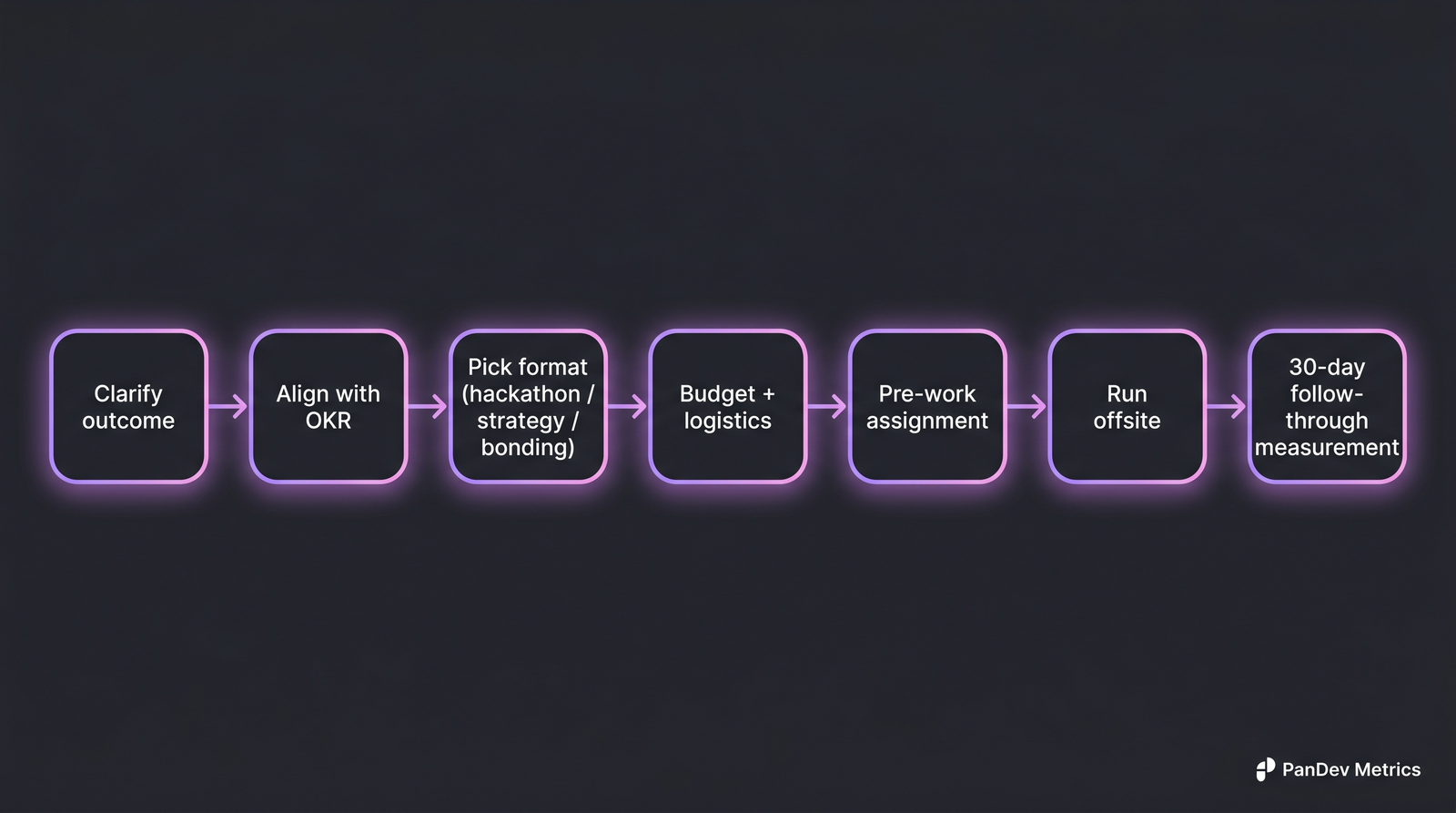

The 7 steps that separate offsites with measurable ROI from offsites that read as culture-only.


The 7 steps

Step 1 — Clarify the outcome

Write one sentence in the form: "After this offsite, the team will have [specific outcome], measurable by [specific signal within specific window]."

Examples that work:

- "After this offsite, the team will have agreed on the next quarter's platform investments, measured by a quarterly plan with named owners approved within 1 week."

- "After this offsite, the team will ship 3 hackathon prototypes to staging, measured by PRs merged within 30 days of the event."

- "After this offsite, the recently merged Platform and Infra teams will trust each other, measured by a reduction in cross-team escalation frequency from the current 8/week to under 3/week by end of quarter."

Examples that don't work:

- "Strengthen team culture." (not measurable)

- "Build relationships." (no signal, no window)

- "Strategic alignment." (empty)
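One way to keep the outcome sentence honest is to refuse to write it until all three parts exist. The sketch below is illustrative only: `OutcomeStatement` and its field names are hypothetical, not part of any tool mentioned in this post.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OutcomeStatement:
    """One-sentence offsite outcome in the Step 1 form (hypothetical helper)."""

    outcome: str  # what the team will have after the offsite
    signal: str   # the specific signal that shows it happened
    window: str   # how long after the offsite the signal is checked

    def __post_init__(self) -> None:
        # A missing signal or window is exactly the "doesn't work" case.
        for name in ("outcome", "signal", "window"):
            if not getattr(self, name).strip():
                raise ValueError(f"outcome statement is missing its {name}")

    def render(self) -> str:
        return (
            f"After this offsite, the team will have {self.outcome}, "
            f"measurable by {self.signal} within {self.window}."
        )


statement = OutcomeStatement(
    outcome="agreed on the next quarter's platform investments",
    signal="a quarterly plan with named owners approved",
    window="1 week",
)
print(statement.render())
```

Passing an empty `signal` or `window` (the "Build relationships." case) raises a `ValueError` instead of producing a sentence.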

Step 2 — Align with the next OKR cycle

An offsite disconnected from the quarterly planning cycle is almost always wasted. The leverage comes from scheduling 2-4 weeks before a new OKR cycle starts — so decisions made at the offsite feed directly into the OKRs that people commit to. Three weeks is the sweet spot: long enough to refine decisions, short enough that offsite context hasn't evaporated.

Scheduling mid-cycle is the most expensive mistake — you disrupt in-flight work and the offsite outcomes have no natural destination.
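The scheduling rule is simple date arithmetic. Here is a minimal sketch, assuming you know the first day of the next OKR cycle; the function name `offsite_window` and the example date are illustrative.

```python
from datetime import date, timedelta


def offsite_window(okr_cycle_start: date) -> dict[str, date]:
    """Offsite dates 2-4 weeks before the OKR cycle, with 3 weeks as the target."""
    return {
        "earliest": okr_cycle_start - timedelta(weeks=4),
        "target": okr_cycle_start - timedelta(weeks=3),
        "latest": okr_cycle_start - timedelta(weeks=2),
    }


# Hypothetical cycle starting 1 April 2025.
window = offsite_window(date(2025, 4, 1))
print(window["earliest"], window["target"], window["latest"])
# 2025-03-04 2025-03-11 2025-03-18
```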

Step 3 — Pick exactly one format

Re-read your outcome statement. If it's "ship prototypes," you're running a hackathon. If it's "make decisions," you're running a strategy sprint. If it's "rebuild trust," you're running a bonding event. Don't try to do two things at once.

Each format has an optimal agenda shape:

Hackathon (2-3 days):

- Day 1 morning: short kickoff + team formation

- Day 1-2: uninterrupted build time

- Day 2 evening / Day 3: demos + judging + commitment to next-30-day path

Strategy sprint (2-4 days):

- Day 1: situation briefing, shared data, problem statements

- Day 2-3: small-group work on top 3-5 decisions

- Day 4 morning: commitments written down, owners assigned, dates set

Team bonding (3-5 days):

- Longer duration, less agenda density. Structured social activities alternating with unstructured time. Formal work content is less than 30% of schedule.

Step 4 — Budget realistically

Direct costs compound fast. A 40-person 4-day offsite at a European destination typically runs:

| Cost category | 40-person offsite (EU venue) | 40-person offsite (CIS/domestic) |
|---|---|---|
| Travel (round-trip, mid-range) | $60-90K | $8-20K |
| Lodging (4 nights, 3-4 star) | $24-40K | $8-15K |
| Food & beverage | $16-28K | $6-12K |
| Venue / meeting space | $8-20K | $2-6K |
| Activities / entertainment | $6-15K | $3-8K |
| Facilitator / speaker | $5-15K | $3-8K |
| Swag / materials | $2-5K | $1-3K |
| Contingency (10-15%) | $12-22K | $3-7K |
| Direct total | $133-235K | $34-79K |

Indirect costs (displaced engineering time at a blended loaded rate) are often as large as the direct spend. A 4-day offsite for 40 engineers at $150/hr loaded cost, assuming 8-hour days, is 40 × 4 × 8 × $150 = ~$192K in displaced output, so a $140K direct-cost offsite is actually ~$330K in true cost.
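The true-cost arithmetic can be sketched as a small calculation. It assumes 8-hour working days, which the figures above imply but do not state; the function names are illustrative.

```python
def displaced_cost(engineers: int, days: int, loaded_rate_per_hour: float,
                   hours_per_day: int = 8) -> float:
    """Cost of engineering time not spent on regular work during the offsite."""
    return engineers * days * hours_per_day * loaded_rate_per_hour


def true_cost(direct_cost: float, engineers: int, days: int,
              loaded_rate_per_hour: float) -> float:
    """Direct spend plus displaced engineering time."""
    return direct_cost + displaced_cost(engineers, days, loaded_rate_per_hour)


displaced = displaced_cost(engineers=40, days=4, loaded_rate_per_hour=150)
total = true_cost(direct_cost=140_000, engineers=40, days=4,
                  loaded_rate_per_hour=150)
print(f"displaced: ${displaced:,.0f}, true cost: ${total:,.0f}")
# displaced: $192,000, true cost: $332,000
```

Swapping in your own headcount, duration, and loaded rate gives a figure to put next to the direct total before the budget is approved.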

Step 5 — Assign pre-work

Pre-work is the single highest-ROI intervention in the whole planning cycle. An offsite that starts cold wastes Day 1 getting everyone on the same page; an offsite with good pre-work starts Day 1 already working on the decisions.

For a strategy sprint:

- Read-ahead document (10-20 pages, circulate 2 weeks before)

- Pre-offsite survey capturing top 3 problems per participant

- Data pack: current metrics, current team load, financial context

For a hackathon:

- Idea-submission form (projects pitched 2 weeks prior)

- Team formation done before arrival (not on Day 1)

- Infrastructure pre-provisioned (dev environments, API keys, deploy access)

For bonding:

- Pre-event interviews with a facilitator about current friction

- Clarity about whether the offsite is open-ended social or has specific reconciliation goals

Teams that skip pre-work lose the first 25-40% of offsite hours to setup.
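To put a number on that loss, here is a rough sketch that reuses the Step 4 example (40 engineers, 4 days, $150/hr loaded cost) and assumes 8-hour days. The function name is hypothetical and the result is an estimate, not measured data.

```python
def hours_lost_to_setup(engineers: int, days: int, lost_percent: int,
                        hours_per_day: int = 8) -> int:
    """Offsite hours spent on setup when pre-work is skipped."""
    total_hours = engineers * days * hours_per_day
    return total_hours * lost_percent // 100


LOADED_RATE = 150  # $/hr, taken from the Step 4 example

low = hours_lost_to_setup(engineers=40, days=4, lost_percent=25)
high = hours_lost_to_setup(engineers=40, days=4, lost_percent=40)
print(f"{low}-{high} hours, ${low * LOADED_RATE:,}-${high * LOADED_RATE:,}")
# 320-512 hours, $48,000-$76,800
```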

Step 6 — Run the offsite with a facilitator

The most expensive mistake in the room: the engineering leader tries to facilitate their own offsite. They can't. They're a participant in the decisions being made, and participants can't run neutral facilitation.

For strategy sprints, budget for an external facilitator. Good facilitators cost $2-5K/day; the ROI gap between a strategy sprint done badly and one done well is usually 10-20x. For hackathons and bonding events, an internal senior manager can sometimes facilitate if they're not a decision-owner on the outcomes, but external is still safer.

Step 7 — Measure 30 days out

This is where ROI is realized or lost. The 30-day follow-through is what separates offsites that paid for themselves from offsites that didn't.

Track the specific signal from Step 1:

- Hackathon: how many prototypes merged to staging / main?

- Strategy sprint: how many decisions are in the quarterly plan with assigned owners?

- Bonding: is cross-team escalation frequency trending down?

Most offsites never get this measurement. Our data-driven 1:1s post argues that post-event measurement is the one thing that makes culture interventions real rather than performative — same principle applies here.
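For the bonding signal, "trending down" can be checked with a plain least-squares slope over weekly counts. This is a minimal sketch: the weekly numbers are made up for illustration, and the target of under 3 escalations per week comes from the Step 1 example.

```python
from statistics import mean


def weekly_trend(counts: list[float]) -> float:
    """Least-squares slope of weekly counts; negative means trending down."""
    if len(counts) < 2:
        raise ValueError("need at least two weeks of data")
    xs = range(len(counts))
    x_bar, y_bar = mean(xs), mean(counts)
    num = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, counts))
    den = sum((x - x_bar) ** 2 for x in xs)
    return num / den


def met_target(counts: list[float], target: float) -> bool:
    """True if the most recent week is below the target."""
    return counts[-1] < target


# Hypothetical escalations per week in the weeks after the offsite.
escalations = [8, 7, 6, 4, 3]
print(round(weekly_trend(escalations), 2))  # -1.3
print(met_target(escalations, target=3))    # False
```

In this made-up series the trend is clearly downward, but the last week is still at 3, not under it, so the Step 1 target has not been met yet.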

Common mistakes to avoid

- Scheduling without OKR alignment. An offsite in week 6 of a 13-week quarter has nowhere to send its outputs.

- Combining formats. Hackathon + strategy + bonding = shallow everything. Pick one.

- Facilitator as participant. The engineering leader facilitating their own decisions produces decisions they wanted, not team decisions.

- Skipping pre-work. Without pre-reads and problem statements circulated, Day 1 is onboarding, not work.

- No follow-through owner. An offsite with no designated follow-through owner is forgotten by week 3. Assign this role before the offsite ends.

- Hackathons that block their own output. Prototypes built without infra access, API keys, or staging environments can't convert to real merges.

- "Luxury" venues. A $400/night hotel doesn't buy better outcomes than a $150/night one for engineering groups; it does buy resentment from engineers whose salaries are lower than the per-engineer venue cost.

Template: 30-day follow-through checklist

| Day | Action | Owner |
|---|---|---|
| Day 0 (offsite end) | Designate follow-through owner, set weekly checkpoint | Engineering leader |
| Day 1-3 | Circulate decisions + commitments doc; everyone acks | Follow-through owner |
| Day 7 | Week-1 check: all commitments in Jira/ClickUp? | Follow-through owner |
| Day 14 | Week-2 check: progress on each commitment? | Follow-through owner |
| Day 21 | Week-3 check: blockers surfaced? | Follow-through owner |
| Day 30 | 30-day retrospective: what worked, what didn't convert | Engineering leader + team |

Teams that execute this 30-day loop capture the offsite value; teams that skip it have spent $140K on a nice vacation.
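The checklist turns into calendar dates with a few lines of code. This sketch treats "Day 1-3" as a deadline on day 3, which is an interpretation rather than something the template states, and the offsite end date is hypothetical.

```python
from datetime import date, timedelta

# (days after offsite end, action, owner), following the template above.
CHECKPOINTS = [
    (0, "Designate follow-through owner, set weekly checkpoint", "Engineering leader"),
    (3, "Circulate decisions + commitments doc; everyone acks", "Follow-through owner"),
    (7, "Week-1 check: all commitments in Jira/ClickUp?", "Follow-through owner"),
    (14, "Week-2 check: progress on each commitment?", "Follow-through owner"),
    (21, "Week-3 check: blockers surfaced?", "Follow-through owner"),
    (30, "30-day retrospective", "Engineering leader + team"),
]


def follow_through_schedule(offsite_end: date) -> list[tuple[date, str, str]]:
    """Map each checkpoint offset to a concrete due date."""
    return [
        (offsite_end + timedelta(days=offset), action, owner)
        for offset, action, owner in CHECKPOINTS
    ]


for due, action, owner in follow_through_schedule(date(2025, 3, 14)):
    print(f"{due}  {action}  ({owner})")
```

Generating the dates on Day 0 and pasting them into calendar invites is a cheap way to keep the loop from depending on memory.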

How to measure success

Three measurements, in order of specificity:

Immediate (within 1 week): Did the specific outcome from Step 1 happen? If you said "3 prototypes merged within 30 days," is the project list clear and owned? If the immediate signal fails, the rest of the measurement doesn't matter.

Near-term (30-60 days): Did the commitments made at the offsite translate into shipped work? This is where engineering-metrics data is useful. Comparing per-team deployment frequency before and after an offsite with a deployment-related outcome should show a measurable change if the offsite worked.

Durable (90-180 days): Did the team effects persist? For bonding offsites, track team-health signals — after-hours work patterns, vacation utilization, retention. For strategy offsites, check whether the quarterly plan survived contact with reality (or whether it was quietly abandoned by week 4).
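For the near-term check, a before/after comparison can be as simple as the relative change in a weekly average. The sketch below uses invented deploy counts and a hypothetical function name. It says nothing about causation, and real data will be noisier than this.

```python
from statistics import mean


def relative_change(before: list[float], after: list[float]) -> float:
    """Fractional change in the weekly mean, after vs before."""
    baseline = mean(before)
    if baseline == 0:
        raise ValueError("baseline mean is zero; relative change is undefined")
    return (mean(after) - baseline) / baseline


# Hypothetical deploys per week for one team, 4 weeks each side of the offsite.
deploys_before = [4, 5, 3, 4]
deploys_after = [6, 5, 6, 7]
print(f"{relative_change(deploys_before, deploys_after):+.0%}")  # +50%
```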

At PanDev Metrics, we see the engineering-metric effects of offsites show up in aggregated team-load and collaboration-pattern changes. Teams that run well-planned offsites show measurable changes in these patterns for 6-10 weeks post-event; teams that run unplanned offsites show no discernible change.

The contrarian take

The offsite industry sells the premise that all offsites are worthwhile investments — that "being in the same room" has intrinsic value. The data doesn't support this at engineering scale. Engineers who dislike travel, dislike forced socialization, or have caregiving obligations experience offsites as a tax, not a benefit. The best-performing engineering offsites are short (2-3 days), close to home (domestic or short-flight), and outcome-driven — the exact opposite of the aspirational "5 days in Portugal" stereotype. Teams that optimize offsites this way run them twice as often with half the disruption and measurably better follow-through.

The honest limit

The ROI numbers above come from a mix of customer conversations and a handful of published references (Gallup, published engineering-leader interviews on First Round Review and LeadDev). We don't have internal IDE telemetry that isolates offsite impact: IDE heartbeat data before and after an offsite shows disruption, but it cannot establish causation. The "6-10 weeks of post-event change" signal is directional, not rigorous. Teams doing their own before/after measurement should expect noisier signals than the framework implies, particularly for bonding offsites, whose effects are diffuse.

Where PanDev Metrics fits

PanDev Metrics doesn't plan your offsite — but it's useful for the 30-day follow-through measurement. When the offsite outcome is "ship prototype X" or "improve deploy frequency in team Y," the engineering-intelligence dashboard provides the before/after data without requiring a separate survey. The pre-work data pack in Step 5 often pulls directly from PanDev dashboards — team load distribution, language breakdown, multi-project overlap — so leaders show up with a shared fact base rather than competing intuitions.

Related reading

- How to Run Data-Driven 1:1s With Your Developers — the individual-level complement to team offsites, with overlapping measurement discipline

- Engineering Metrics Without Toxicity: How to Track Productivity — the broader frame for using data in management without it becoming punitive

- Data Patterns That Scream 'Your Developer Is Burning Out' — useful context for bonding-format offsites, where the trigger is often pre-burnout

- External: Gallup 2024 State of the Global Workplace — the public reference on employee engagement trends that often motivate offsite spend