Incident Post-Mortem Template That Actually Helps (Not CYA)

The average post-mortem takes 4 hours to write and generates zero action items the team actually completes within 30 days. We looked at 120 post-mortem documents from three of our on-prem customers before rebuilding this template. 83% of action items were still "open" six months later. That's not an incident review — that's a document graveyard.

A post-mortem is worth writing only if it changes something. Everything else is CYA.

{/* truncate */}

Why most post-mortems fail

Google's SRE Workbook has been the unofficial reference since 2018. The "blameless" framing is right, but teams over-index on tone and under-index on follow-through. The 2022 Atlassian State of Incident Management survey found that 61% of engineering teams run post-mortems, but only 23% track completion of the action items. The ritual exists. The outcome doesn't.

Three patterns recur in the documents we reviewed:

- Narrative bloat — 3 pages of timeline detail, 4 sentences of fix. Reviewers skim the fix and move on.

- Action items without owners — "We should improve monitoring" with no name, no date, no acceptance criteria.

- No 30-day follow-up — action items are logged in the doc, not in the tracker. They evaporate.

A useful post-mortem is short, surgical, and tied to the team's existing task tracker. Anything else is theatre.

The 5W template

We call it the 5W template because every section answers a W-question. The whole document should fit on one screen and take no longer than 90 minutes to write.

| Section | Question | Target length |
|---|---|---|
| What happened | One-paragraph customer-impact summary | 3-4 sentences |
| When | Timeline in UTC, key events only | 5-8 bullets |
| Why | 5 Whys chain ending in a systemic cause | 1 paragraph |
| Who was impacted | Users, tenants, revenue if known | 2-3 lines |
| What changes (action items) | 3-5 concrete tasks with owners + due dates | Table |

Five sections. No "heroes", no "commendations", no "lessons learned" block (lessons should be the action items themselves).

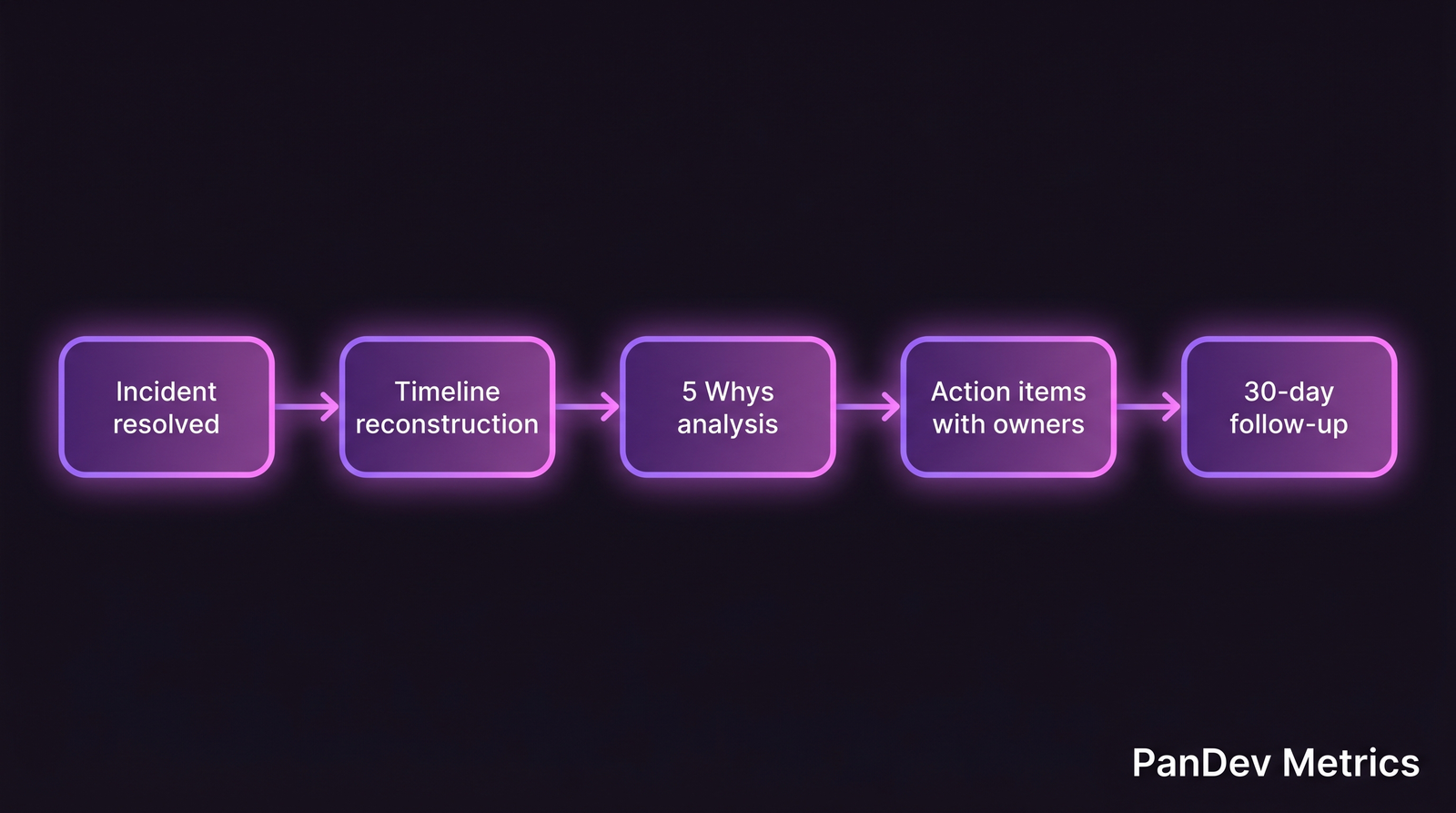

The lifecycle of a post-mortem that actually ships change. The follow-up loop is where teams drop the ball: 83% of the action items we reviewed were still open six months later.


Section 1 — What happened

One paragraph. Written for a reader who was not on-call. Must answer: what broke, what did customers see, how long, what fixed it.

"On April 3, 2026, between 14:12 and 14:47 UTC, payment confirmations failed for 12% of checkouts on api.example.com. Root cause was PostgreSQL connection pool exhaustion following a scheduled vacuum. Resolved by manual pool restart and rollback of the migration that raised the max_connections target. 280 customer orders retried successfully; 4 duplicated charges required manual refund."

Note what's missing: drama, blame, narrative arc. Just the facts.

Section 2 — When (the timeline)

UTC only. Don't mix timezones — the 2:47 AM vs 2:47 PM confusion has cost teams hours in reconstruction. Log events that materially changed the incident: first alert, first human response, first rollback, customer-impact end.

14:12 — first alert fired (PG pool saturation)

14:14 — on-call engineer acknowledged

14:19 — war room opened, 3 engineers joined

14:28 — first mitigation attempt (read replica failover) — no effect

14:41 — rollback of migration merged

14:47 — pool recovered, customer impact ended

15:10 — all-clear communicated to support

7 lines. If your timeline is longer than 15 lines, you're probably logging Slack messages instead of events.
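Once the timeline is UTC-only, the headline durations are plain subtraction. The sketch below is one way to do it, assuming the incident date from Section 1 and a single-day incident; the helper names (`parse_utc`, `minutes_between`, `timeline_warnings`) are hypothetical, not part of any tool mentioned here.

```python
from datetime import datetime, timezone

# Date taken from the Section 1 example; assumes the incident does not cross midnight.
INCIDENT_DATE = "2026-04-03"

# The seven events from the example timeline above.
TIMELINE = [
    ("14:12", "first alert fired (PG pool saturation)"),
    ("14:14", "on-call engineer acknowledged"),
    ("14:19", "war room opened, 3 engineers joined"),
    ("14:28", "first mitigation attempt (read replica failover), no effect"),
    ("14:41", "rollback of migration merged"),
    ("14:47", "pool recovered, customer impact ended"),
    ("15:10", "all-clear communicated to support"),
]


def parse_utc(hhmm: str, day: str = INCIDENT_DATE) -> datetime:
    """Parse an HH:MM entry as a timezone-aware UTC datetime."""
    naive = datetime.strptime(f"{day} {hhmm}", "%Y-%m-%d %H:%M")
    return naive.replace(tzinfo=timezone.utc)


def minutes_between(start: str, end: str) -> int:
    """Whole minutes elapsed between two HH:MM entries on the incident date."""
    return int((parse_utc(end) - parse_utc(start)).total_seconds() // 60)


def timeline_warnings(timeline, max_lines: int = 15) -> list:
    """Flag out-of-order events and timelines longer than the 15-line limit."""
    warnings = []
    times = [parse_utc(hhmm) for hhmm, _ in timeline]
    if times != sorted(times):
        warnings.append("events are not in chronological order")
    if len(timeline) > max_lines:
        warnings.append(f"{len(timeline)} lines: log events, not chat messages")
    return warnings


time_to_ack = minutes_between("14:12", "14:14")        # alert to acknowledgement
customer_impact = minutes_between("14:12", "14:47")    # alert to impact end
time_to_all_clear = minutes_between("14:12", "15:10")  # alert to all-clear
```

For the example timeline this gives 2 minutes to acknowledge, 35 minutes of customer impact, and 58 minutes to all-clear.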

Section 3 — Why (the 5 Whys)

This is the only section where prose helps. Write the 5 Whys chain, but stop when you hit a systemic cause — not a person.

Why did payments fail? Connection pool exhausted. Why was the pool exhausted? A VACUUM FULL held table locks longer than expected. Why didn't we see this in staging? Staging database is 0.3% of production size; vacuum completes in seconds there. Why didn't we check pool saturation before vacuum? Our runbook for manual vacuum has no pre-flight check. Why doesn't the runbook have that check? Runbooks are written reactively after incidents, not proactively. We've never had a vacuum-triggered saturation before.

Systemic cause: runbook coverage gap for low-frequency maintenance operations. That's the claim your action items attack.

Section 4 — Who was impacted

Two numbers matter: how many users, how much money (if revenue-adjacent). Skip the narrative. A table is fine.

| Impact | Number |
|---|---|
| Affected customers | 280 |
| Duplicated charges | 4 |
| Revenue at risk (1hr) | $14,200 |
| Support tickets generated | 31 |
| Senior-eng hours spent resolving | 9 |

The last row matters for the cost-per-feature conversation — see our cost per feature analysis for how we track incident burn.

Section 5 — What changes (action items)

The only section auditors should be reading six months later. Rules:

- Each action item has one owner, not a team. "Platform team" is nobody's job. "Marat" is somebody's job.

- Each action item has a due date. Not "Q2". A calendar date.

- Each action item has an acceptance criterion. "Improve monitoring" is not measurable. "Pool saturation alert fires at 80% utilization, tested in staging" is.

- Each action item is a ticket in your tracker. Not a bullet in the doc. If it's not in Jira/Linear/ClickUp, it doesn't exist.

| # | Action item | Owner | Due | Acceptance |
|---|---|---|---|---|
| 1 | Add pool-saturation alert at 80% | Marat | Apr 10 | Alert fires in staging test; runbook links to it |
| 2 | Pre-vacuum checklist in runbook | Aliya | Apr 12 | Checklist reviewed by 2 SREs; used on next vacuum |
| 3 | Staging dataset scaling plan | Danil | May 1 | Plan doc reviewed; 10% sampling approach chosen |
| 4 | Automated duplicate-charge detection | Zhanna | May 15 | Fires on test transaction; refund script linked |

Four action items. Not ten. The team that writes ten action items finishes zero.
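The four rules above are mechanical enough to lint before the review meeting. This is a rough sketch rather than a real tracker integration: the `ActionItem` fields, the list of "team" words, the ticket-key pattern, and the `OPS-101` key are all assumptions made for illustration.

```python
import re
from dataclasses import dataclass
from datetime import date
from typing import List, Optional

# Hypothetical words that signal a group rather than a single person.
TEAM_WORDS = {"team", "squad", "group", "everyone"}
# Hypothetical ticket-key shape such as "OPS-101"; adjust to your tracker.
TICKET_PATTERN = re.compile(r"^[A-Z][A-Z0-9]*-\d+$")


@dataclass
class ActionItem:
    title: str
    owner: str
    due: Optional[date]       # a calendar date, never "Q2"
    acceptance: str           # the measurable acceptance criterion
    ticket: Optional[str]     # tracker key, None if only written in the doc


def validate(item: ActionItem) -> List[str]:
    """Return one message per violated rule; an empty list means the item passes."""
    problems = []
    owner_words = set(item.owner.lower().replace(",", " ").split())
    if not owner_words or owner_words & TEAM_WORDS or len(owner_words) > 2:
        problems.append("owner must be one named person")
    if item.due is None:
        problems.append("due must be a calendar date")
    if not item.acceptance.strip():
        problems.append("acceptance criterion is missing")
    if not item.ticket or not TICKET_PATTERN.match(item.ticket):
        problems.append("no tracker ticket linked")
    return problems


good = ActionItem(
    title="Add pool-saturation alert at 80%",
    owner="Marat",
    due=date(2026, 4, 10),
    acceptance="Alert fires in staging test; runbook links to it",
    ticket="OPS-101",  # made-up key for illustration
)
bad = ActionItem("Improve monitoring", "Platform team", None, "", None)

good_problems = validate(good)  # passes all four rules
bad_problems = validate(bad)    # violates all four rules
```

The check cannot tell whether an acceptance criterion is actually measurable; that still needs a human reviewer.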

Common mistakes to avoid

- The "blameless" fetish — blameless doesn't mean "no accountability". The owner column is not blame. It's responsibility for the fix.

- Writing the post-mortem while the incident is fresh (and angry) — wait 24 hours. First-draft post-mortems at 2 AM tend to over-narrate and under-action.

- Treating the doc as the output — the output is the action items in your tracker, closed by the due date.

- Repeating the same root cause across 3 incidents — if "we didn't have monitoring for X" appears three times, the action item isn't "add monitoring for Y", it's "budget 20% engineer time this sprint for monitoring gaps".

How to measure if your post-mortems actually work

Two metrics. Both simple.

Action-item completion rate. Count action items with a due date in the last 6 months, then divide the number closed on or before their due date by that count. Target: ≥ 70%. If you're below 50%, the ritual is theatre.
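As a sketch, the completion rate is the share of action items due inside the window that were closed on or before their due date. The `TrackedItem` shape and the 183-day window are assumptions; the due dates come from the example table, and the closing dates are invented for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Iterable, Optional


@dataclass
class TrackedItem:
    due: date
    closed: Optional[date]  # None while the ticket is still open


def completion_rate(items: Iterable[TrackedItem], today: date,
                    window_days: int = 183) -> Optional[float]:
    """Items closed on or before their due date / items due within the window."""
    window_start = today - timedelta(days=window_days)
    due_in_window = [i for i in items if window_start <= i.due <= today]
    if not due_in_window:
        return None  # nothing was due, so the rate is undefined
    on_time = sum(1 for i in due_in_window
                  if i.closed is not None and i.closed <= i.due)
    return on_time / len(due_in_window)


# Illustrative data only: closing dates are made up.
EXAMPLE = [
    TrackedItem(due=date(2026, 4, 10), closed=date(2026, 4, 9)),   # on time
    TrackedItem(due=date(2026, 4, 12), closed=date(2026, 4, 20)),  # late
    TrackedItem(due=date(2026, 5, 1), closed=date(2026, 5, 1)),    # on time
    TrackedItem(due=date(2026, 5, 15), closed=None),               # still open
]

rate = completion_rate(EXAMPLE, today=date(2026, 6, 1))
```

Here two of four items were closed on time, so `rate` is 0.5, below the 70% target. Items closed late count as misses, which is deliberate: the due date is part of the commitment.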

Recurrence rate. Count incidents whose root cause has already appeared in an earlier incident, and divide by the total number of incidents in the period. Target: ≤ 15%. Above that, the action items aren't fixing the systemic cause.
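One way to compute the recurrence rate, assuming each incident carries a short root-cause tag and that the denominator is every incident in the period. Both are assumptions of this sketch, and the tags below are invented for illustration.

```python
from typing import Iterable, Optional


def recurrence_rate(root_causes: Iterable[str]) -> Optional[float]:
    """Share of incidents whose root-cause tag was already seen earlier.

    `root_causes` is expected in chronological order, one tag per incident.
    """
    seen = set()
    total = 0
    repeats = 0
    for cause in root_causes:
        total += 1
        key = cause.strip().lower()  # normalise so "Runbook-Gap" matches "runbook-gap"
        if key in seen:
            repeats += 1
        seen.add(key)
    return repeats / total if total else None


# Ten hypothetical incidents; three of them repeat an earlier root cause.
HISTORY = [
    "runbook-gap", "missing-alert", "runbook-gap", "config-drift",
    "missing-alert", "capacity", "deploy-order", "dns",
    "cert-expiry", "runbook-gap",
]

rate = recurrence_rate(HISTORY)
```

With this history the rate is 0.3, double the 15% target. The number is only as good as the tagging: free-text root causes need to be mapped to a small, shared vocabulary first.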

In PanDev Metrics, we link incidents to the deployments that caused them and the tasks that fixed them — pull branch names like fix/INC-204 into the incident record automatically. It's the same branch-name convention we use for lead time, which means post-mortem follow-through shows up in the same dashboard as change failure rate.
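The branch-name convention can be parsed with a single regular expression. The sketch below is not PanDev Metrics' implementation; it assumes branches look like `fix/INC-204` with an optional slug, and the `hotfix/` prefix and function names are hypothetical.

```python
import re
from collections import defaultdict
from typing import Dict, List, Optional

# Assumed convention: "fix/INC-<number>" or "hotfix/INC-<number>", optional slug after it.
INCIDENT_BRANCH = re.compile(r"^(?:fix|hotfix)/(INC-\d+)(?:[-_/].*)?$")


def incident_id(branch: str) -> Optional[str]:
    """Return the incident ID embedded in a branch name, or None if there is none."""
    match = INCIDENT_BRANCH.match(branch)
    return match.group(1) if match else None


def branches_by_incident(branches: List[str]) -> Dict[str, List[str]]:
    """Group branch names under the incident they claim to fix."""
    linked = defaultdict(list)
    for branch in branches:
        inc = incident_id(branch)
        if inc is not None:
            linked[inc].append(branch)
    return dict(linked)


linked = branches_by_incident([
    "fix/INC-204",
    "fix/INC-204-pool-alert",
    "feature/login",
    "hotfix/INC-198",
])
```

Branches that do not follow the convention are silently skipped, so the grouping is only useful if the naming rule is enforced at branch creation or in CI.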

Our dataset skews B2B SaaS and on-prem enterprise. We don't have signal on gaming studios or consumer mobile teams, where incident dynamics differ. The template still applies; the completion benchmarks may not.

The template, copy-paste

# Post-Mortem: [Incident ID] — [One-line title]

## What happened

[One paragraph, no drama, customer-impact framing]

## When (UTC)

- HH:MM — event

- HH:MM — event

## Why (5 Whys)

Q1: Why did X fail? A: ...

Q2: Why did Y? A: ...

(continue until systemic cause)

Systemic cause: [one sentence]

## Who was impacted

| Impact | Number |
|--------|--------|
| Affected customers | ... |
| Revenue at risk | ... |
| Hours spent resolving | ... |

## Action items

| # | Action | Owner | Due | Acceptance criteria |
|---|--------|-------|-----|---------------------|
| 1 | ... | @name | YYYY-MM-DD | measurable signal |

Save it to the incident tracker, link to the Git branches that fix it, and schedule a 30-day review in the same sprint where you do retro.

The post-mortem isn't the work. The 30-day review is.