Junior to Senior: Promotion Criteria Backed by Data

A 3.5-year engineer at a 120-person scaleup I worked with last year was "obviously senior" — by everyone's intuition. Her Git and IDE data told a different story: she was shipping more features than any senior on the team, but she wasn't reviewing PRs from people outside her squad, never owned a system-design proposal end-to-end, and her commits clustered in a narrow 2-component surface area. Her manager's gut said senior. The behavioral evidence said: ready in 6-9 months, not today. The 6-month data revisit confirmed it — she got there, and the promotion landed stronger than the intuition-based one would have.

Promotion decisions fail in two directions. Promote-too-early produces under-supported seniors who quietly under-perform and sometimes leave. Promote-too-late loses your best engineers to competitors who saw the readiness first. A 2023 First Round Review study on engineering careers found the single largest driver of senior-engineer regret was "promoted without being ready," cited by 41% of respondents. Data-backed criteria reduce both errors.


The problem with intuition-only promotion

Managers pattern-match on vivid moments — a great incident response, a polished demo, a single architecture deck — and weight those heavily in promotion decisions. These moments are real signal, but they suffer three biases:

- Recency — last quarter weighs more than the prior 18 months

- Visibility — the engineer who presents at all-hands gets seen more than the equally-senior one doing platform work

- Similarity — managers score "engineers like them" higher

Microsoft Research's 2019 paper on promotion equity documented up to a 2x disparity in time-to-promotion between reports who were demographically similar to their manager and reports who were not, comparing reports of the same manager and controlling for output. Data-backed criteria don't remove intuition; they anchor it.

What senior actually means (behaviorally, not by title)

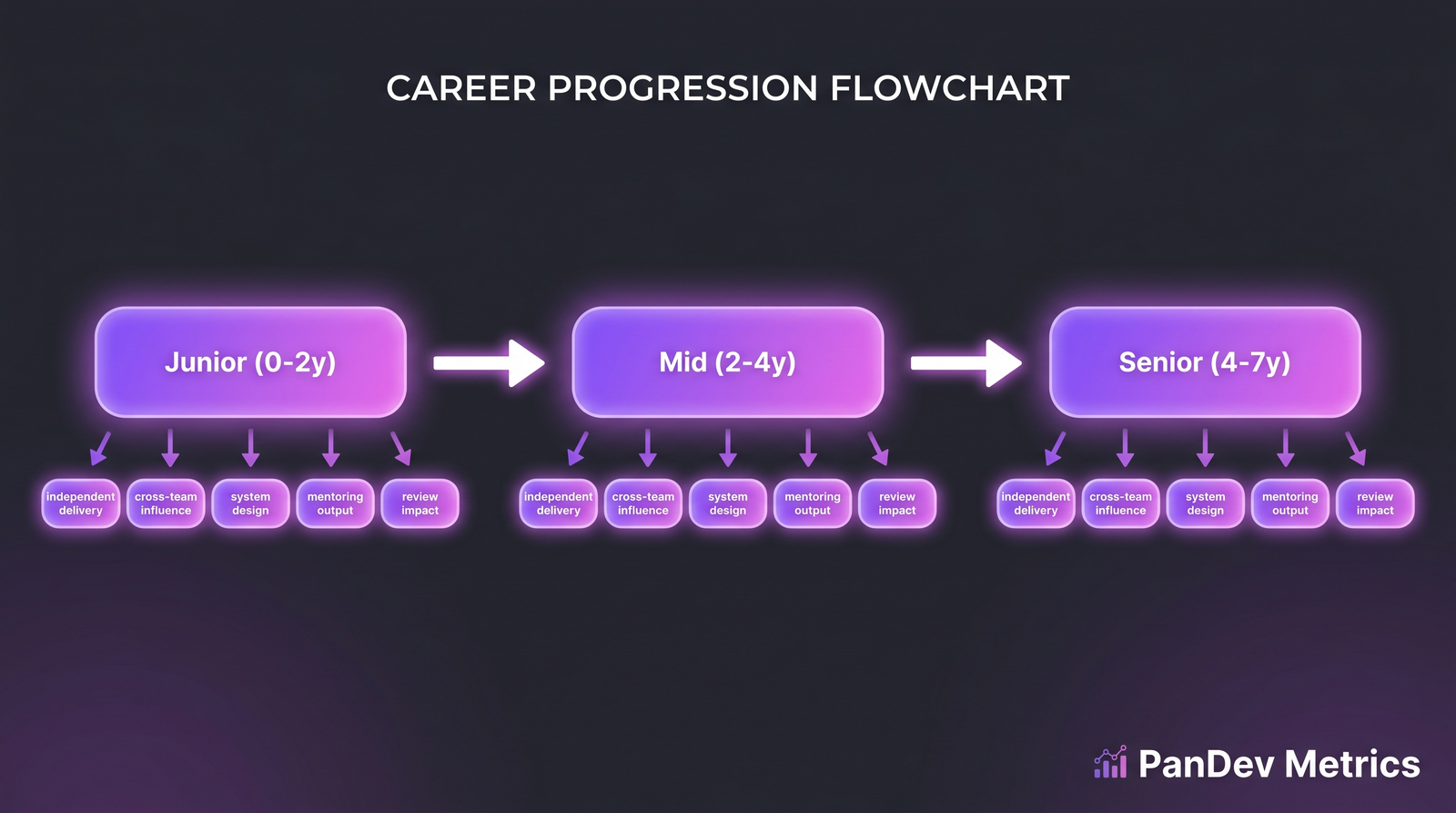

The four gates between mid and senior. Tenure alone clears none of them.


A mid → senior promotion means the engineer has passed four behavioral gates, each independently observable in Git, PR, and IDE data:

| Gate | What it looks like in data | Threshold |
|---|---|---|
| Independent delivery on ambiguous work | PRs where the originating task was created by the engineer, not assigned | ≥ 30% of PRs in last 6 months |
| Cross-team influence | Reviews completed on PRs outside the engineer's primary team | ≥ 20% of reviews in last quarter |
| System design ownership | Design doc authored by the engineer that resulted in a shipped system, with multi-contributor participation | ≥ 1 per 6 months |
| Mentorship output | PRs the engineer reviewed where the author was a junior engineer | ≥ 15% of reviews in last quarter |

No single gate is sufficient. A mid engineer who crushes independent delivery but never reviews outside their team isn't senior — they're a high-output IC. A mid engineer who reviews broadly but never owns a design doc isn't senior — they're a helpful reviewer.

All four, sustained for ≥ 6 months, is the promotion signal.

The four signals in detail

1. Independent delivery on ambiguous work

Seniors start projects. Juniors and mids complete assigned projects. The cleanest proxy: who opened the originating task/ticket?

Pull Jira/ClickUp/Linear data and check who created the task that resulted in each merged PR over the last 6 months:

| Role | Typical % of PRs from own-created tasks |
|---|---|
| Junior | 0-10% |
| Mid | 10-25% |
| Senior | 25-50% |
| Staff+ | 40-70% |

An engineer sitting at 15% for 18 months is mid, not early-senior. An engineer climbing from 12% to 32% over 9 months is showing the readiness signal.
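To track this signal yourself, the calculation is a filter and a count. Below is a minimal sketch in Python, assuming merged PRs have already been joined to their originating tasks from your tracker export; the `MergedPR` record, its field names, and the sample data are hypothetical, not a Jira, ClickUp, or Linear API.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class MergedPR:
    """Hypothetical record: one merged PR joined to its originating tracker task."""
    pr_author: str
    task_creator: str  # who opened the task behind the PR (assumed from a tracker export)


def own_task_ratio(prs: list[MergedPR], engineer: str) -> float:
    """Share of the engineer's merged PRs whose originating task they created themselves."""
    mine = [pr for pr in prs if pr.pr_author == engineer]
    if not mine:
        return 0.0  # no merged PRs in the window: report 0 rather than divide by zero
    own = sum(1 for pr in mine if pr.task_creator == engineer)
    return own / len(mine)


# Example: three of ana's PRs in the window, two from tasks she opened herself.
prs = [
    MergedPR(pr_author="ana", task_creator="ana"),
    MergedPR(pr_author="ana", task_creator="pm-lee"),
    MergedPR(pr_author="ana", task_creator="ana"),
    MergedPR(pr_author="ben", task_creator="ben"),
]
print(f"{own_task_ratio(prs, 'ana'):.0%}")  # prints "67%"
```

Computing it per window (for example, each quarter of the 6-month lookback) shows the trajectory, which is what separates the engineer stuck at 15% from the one climbing toward 32%.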

2. Cross-team influence via PR reviews

Senior engineers review code outside their immediate feature team. The reason is simple: they're trusted by more than one codebase's maintainers, and their review adds value across surfaces.

Measure: of PR reviews this engineer completed last quarter, what % were on PRs whose author's primary team ≠ this engineer's primary team?

- Mid: 5-15%

- Early senior: 15-25%

- Senior+: 25-40%

Watch for pathologies: an engineer with 60%+ cross-team reviews and low own-delivery might be a review hero avoiding shipping their own work. Senior is balance, not extremes.
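The cross-team measure reduces to one comparison per review. A minimal sketch, assuming you maintain a mapping from each engineer to a single primary team; the `Review` record, the `primary_team` dict, and the names are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Review:
    """Hypothetical record: one completed PR review."""
    reviewer: str
    pr_author: str


def cross_team_ratio(reviews: list[Review], engineer: str, primary_team: dict[str, str]) -> float:
    """Share of the engineer's reviews on PRs whose author's primary team differs from theirs.

    Assumes `primary_team` maps every reviewer and author to exactly one team.
    """
    mine = [r for r in reviews if r.reviewer == engineer]
    if not mine:
        return 0.0
    home = primary_team[engineer]
    cross = sum(1 for r in mine if primary_team[r.pr_author] != home)
    return cross / len(mine)


primary_team = {"ana": "payments", "ben": "payments", "cy": "search", "dee": "infra"}
reviews = [
    Review("ana", "ben"),  # same team
    Review("ana", "cy"),   # cross-team
    Review("ana", "dee"),  # cross-team
    Review("ana", "ben"),  # same team
]
print(f"{cross_team_ratio(reviews, 'ana', primary_team):.0%}")  # prints "50%"
```

Engineers who split time across teams need a policy decision (pick one primary team, or weight reviews); the sketch deliberately doesn't guess.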

3. System design ownership

Writing a design doc that gets implemented is the single hardest-to-fake senior signal. Implementation is the test — plenty of engineers write docs; seniors write docs that produce systems.

Track:

- Did the engineer author ≥ 1 design doc in the last 6 months?

- Was the design doc commented on by 3+ other engineers (non-authors)?

- Did the resulting system ship?

- Did the engineer remain a top-3 committer to the resulting codebase for ≥ 30 days post-launch?

Four yeses clear the design-ownership gate in full. Fewer earn partial credit; note specifically what's missing.
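Because the gate is a four-item checklist with partial credit, it helps to return both the count and the failed items, so the promotion conversation can name exactly what's missing. A minimal sketch; the `DesignDocRecord` fields are hypothetical stand-ins for data you would gather from your doc tool and Git history.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class DesignDocRecord:
    """Hypothetical summary of an engineer's design doc in the 6-month window."""
    authored_in_window: bool
    non_author_commenters: int
    system_shipped: bool
    days_as_top3_committer_post_launch: int


def design_ownership_gate(doc: DesignDocRecord) -> tuple[int, list[str]]:
    """Return (number of yeses out of 4, names of the checks that failed)."""
    checks = {
        "authored a design doc in the last 6 months": doc.authored_in_window,
        "3+ non-author engineers commented": doc.non_author_commenters >= 3,
        "resulting system shipped": doc.system_shipped,
        "top-3 committer for 30+ days post-launch": doc.days_as_top3_committer_post_launch >= 30,
    }
    missing = [name for name, passed in checks.items() if not passed]
    return len(checks) - len(missing), missing


score, missing = design_ownership_gate(DesignDocRecord(True, 2, True, 45))
print(score, missing)  # prints: 3 ['3+ non-author engineers commented']
```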

4. Mentorship output

Seniors multiply the team. The simplest measurable form: reviewing PRs from juniors, with substantive comments (not just approvals).

Signal: of all PR reviews this engineer completed, what % were on PRs from engineers with <18 months tenure? And of those, what % included at least one inline comment with a code suggestion or question?

- Mid-level: 5-10% junior reviews, 20-30% with substantive comments

- Senior: 15-25% junior reviews, 50%+ with substantive comments

- Staff: 10-20% junior reviews (Staff mentors more via design, less via review), 70%+ substantive

An engineer who reviews 25% junior PRs but rubber-stamps them (all "LGTM", no inline comments) isn't mentoring; they're just clearing the review queue fast. The substantive-comment ratio is the quality filter.
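Both mentorship numbers come from the same review set: first the junior share, then the substantive share within those junior reviews. A minimal sketch, assuming each review already carries the PR author's tenure and a count of inline comments containing a code suggestion or question; classifying comments that way is the hard part and is assumed to happen upstream. The `ReviewWithTenure` record is hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewWithTenure:
    """Hypothetical record: one completed review plus the PR author's tenure."""
    reviewer: str
    author_tenure_months: float
    substantive_inline_comments: int  # inline comments with a code suggestion or question


def mentorship_signal(
    reviews: list[ReviewWithTenure], engineer: str, junior_cutoff_months: float = 18
) -> tuple[float, float]:
    """Return (junior-review ratio, substantive ratio among those junior reviews)."""
    mine = [r for r in reviews if r.reviewer == engineer]
    if not mine:
        return 0.0, 0.0
    junior = [r for r in mine if r.author_tenure_months < junior_cutoff_months]
    junior_ratio = len(junior) / len(mine)
    if not junior:
        return junior_ratio, 0.0
    substantive = sum(1 for r in junior if r.substantive_inline_comments >= 1) / len(junior)
    return junior_ratio, substantive


reviews = [
    ReviewWithTenure("ana", 6, 2),   # junior author, substantive review
    ReviewWithTenure("ana", 10, 0),  # junior author, bare approval
    ReviewWithTenure("ana", 30, 1),
    ReviewWithTenure("ana", 40, 0),
]
print(mentorship_signal(reviews, "ana"))  # prints (0.5, 0.5)
```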

The quantitative dashboard

| Signal | Measurement | Mid range | Senior threshold |
|---|---|---|---|
| Own-task PR ratio | PRs whose originating task the engineer created / total PRs | 10-25% | ≥ 30% |
| Cross-team reviews | Cross-team reviews / total reviews | 5-15% | ≥ 20% |
| Design docs authored (6mo) | Count of merged design-doc PRs | 0-1 | ≥ 1 w/ implementation |
| Junior-mentorship ratio | Junior PR reviews / total reviews | 5-10% | ≥ 15% |
| Sustained period | Months all 4 above threshold | < 3 | ≥ 6 |

Promotion conversations start when an engineer clears all five rows, meaning all four signals at or above threshold for 6 consecutive months. Before that, the conversation is "what to grow toward," not "when to promote."
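The "sustained period" row is the only one that depends on the others: it counts consecutive months in which all four signals clear together. A minimal sketch, assuming you store a month-end snapshot of each rolling signal; the `MonthlySnapshot` record is hypothetical, and the thresholds are the senior column of the dashboard table.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class MonthlySnapshot:
    """Hypothetical month-end snapshot of the four rolling signals."""
    own_task_ratio: float
    cross_team_ratio: float
    implemented_design_docs_6mo: int
    junior_review_ratio: float


def clears_all_four(s: MonthlySnapshot) -> bool:
    # Senior thresholds from the dashboard table.
    return (
        s.own_task_ratio >= 0.30
        and s.cross_team_ratio >= 0.20
        and s.implemented_design_docs_6mo >= 1
        and s.junior_review_ratio >= 0.15
    )


def sustained_months(history: list[MonthlySnapshot]) -> int:
    """Count the most recent consecutive months (history is oldest first) clearing all four."""
    streak = 0
    for snapshot in reversed(history):
        if not clears_all_four(snapshot):
            break
        streak += 1
    return streak


def ready_for_promotion_conversation(history: list[MonthlySnapshot], required: int = 6) -> bool:
    return sustained_months(history) >= required


below = MonthlySnapshot(0.25, 0.22, 1, 0.18)  # own-task ratio under 30%
above = MonthlySnapshot(0.33, 0.24, 1, 0.17)  # all four clear
history = [below, below] + [above] * 6
print(sustained_months(history), ready_for_promotion_conversation(history))  # prints: 6 True
```

Counting the trailing streak, rather than total qualifying months, means a single dip resets the clock, which matches the "sustained" requirement.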

What doesn't correlate with senior-readiness

Some metrics sound like they should predict senior-readiness but don't — and ranking people on them damages teams.

- Lines of code per week. Mid engineers write more LOC than seniors in our data, not less. Seniors spend more time reviewing and designing. The IDE heartbeat data backs this: coding-time share drops from 68% at mid to 52% at senior.

- Commit count. Same problem — seniors commit less, in bigger logical units.

- PR count. Correlates with mid-level output, weakly with senior.

- Standup talk time. Zero correlation. Extroverts over-index here.

- GitHub stars on side projects. Zero correlation with on-job senior behaviors.

If your promotion process weighs any of these, you're selecting for output volume, not seniority. Expect promote-too-early errors.

Using IDE + Git data in promotion conversations

PanDev Metrics' engineering-intelligence surface (IDE heartbeat + Git events + task-tracker links) makes the four signals above queryable without manual audit. What the CTO dashboard adds isn't a "readiness score" — we deliberately don't compute one — it's the ability to answer "Show me this engineer's cross-team review ratio for the last 6 months, quarter by quarter" in under 10 seconds, instead of 2 hours of PR archaeology.

The pattern we've seen among customers: managers go into promotion conversations with the four gates pre-checked, then use the conversation to discuss what the data doesn't capture (domain expertise, customer impact, incident response). The data doesn't replace judgment; it frees judgment from counting.

Common mistakes to avoid

- Promoting for tenure. 4 years in seat ≠ senior. Tenure is a necessary-not-sufficient condition.

- Using a single quarter of data. All four signals are noisy quarter-to-quarter. Use 6 months minimum.

- Weighting "visibility" over evidence. The engineer who presents at all-hands isn't necessarily further along than the one refactoring the deploy pipeline.

- Ignoring negative signals. An engineer who hits all four gates but has 3 incidents traced to reviewless merges is showing risk that gate-counting misses.

- Promoting because "they'll leave otherwise." This is how you create mediocre seniors. Retention is a separate problem — solve it with scope and pay, not title inflation.

The contrarian claim

Most engineering orgs promote too late, not too early, and the cost of too-late is larger. The prevailing narrative is "we promote too early, so set a high bar." The data across the customers we've watched says otherwise: engineers who cleared all four gates 6 months before their actual promotion either left (40%) or spent those 6 months doing senior work for mid pay. The gate framework isn't just about raising the bar; it's about making "they're ready right now" as visible as "they're not ready yet." Both errors are costly, so detecting readiness quickly matters as much as setting the bar high.

Honest limits

Our dataset covers 100+ B2B companies and ~1,000 tracked individuals. That's enough to validate the direction of the four signals, but not enough to publish tight thresholds for a specific industry (gaming, fintech, and e-commerce have different design-doc norms). The thresholds above are medians across our data, not universal truths. Calibrate to your org: plot your current seniors against the four metrics, look at the distribution, then set your gates 15-20% below the median senior's values.
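The calibration step is simple arithmetic. A minimal sketch, assuming you have already pulled each current senior's value per metric into lists; the metric names and sample values are hypothetical, and "15-20% below" is read as a relative cut rather than percentage points (a median of 0.30 with a 20% discount gives a 0.24 gate).

```python
from __future__ import annotations

from statistics import median


def calibrate_gates(senior_values: dict[str, list[float]], discount: float = 0.15) -> dict[str, float]:
    """Set each gate `discount` below the median current senior's value.

    Assumes a relative reduction; the 15-20% band is enforced as a guard.
    """
    if not 0.15 <= discount <= 0.20:
        raise ValueError("discount should fall in the 15-20% band")
    return {metric: median(values) * (1 - discount) for metric, values in senior_values.items()}


current_seniors = {
    "cross_team_ratio": [0.18, 0.26, 0.30, 0.34, 0.41],
    "junior_review_ratio": [0.12, 0.16, 0.20, 0.24],
}
gates = calibrate_gates(current_seniors, discount=0.20)
print({metric: round(value, 3) for metric, value in gates.items()})
```

Using the median rather than the mean keeps one unusually prolific senior from dragging every gate upward.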

We also can't capture cultural fit, customer empathy, or stakeholder management from IDE and Git data. Those are real senior behaviors. The four gates are the necessary behavioral floor, not the sufficient ceiling.

Related reading

- Performance Reviews Based on Data: Templates and Anti-Patterns — the broader evaluation process the promotion sits inside

- New Developer Onboarding: How Metrics Show the Ramp-Up to Full Productivity — the curve that ends where this article begins

- The 10x Developer: What the Data Actually Shows — why individual-output myths distort promotion judgment

- External: First Round Review: Engineering Career Ladders — qualitative research on promotion regret