Knowledge Management for Dev Teams: Wikis, Notion, GitHub Compared

A team of 60 engineers I worked with last year had 1,400+ Confluence pages, a Notion workspace with 380 pages, a GitHub wiki in each of their 22 repositories, and a "team knowledge" Google Drive. A new hire's second-week task was to find the staging environment runbook. It took her four hours. The runbook existed in all four systems, under three different URLs, in two conflicting versions, and the wiki copy carried an instruction that had been correct three years earlier but was now out of date.

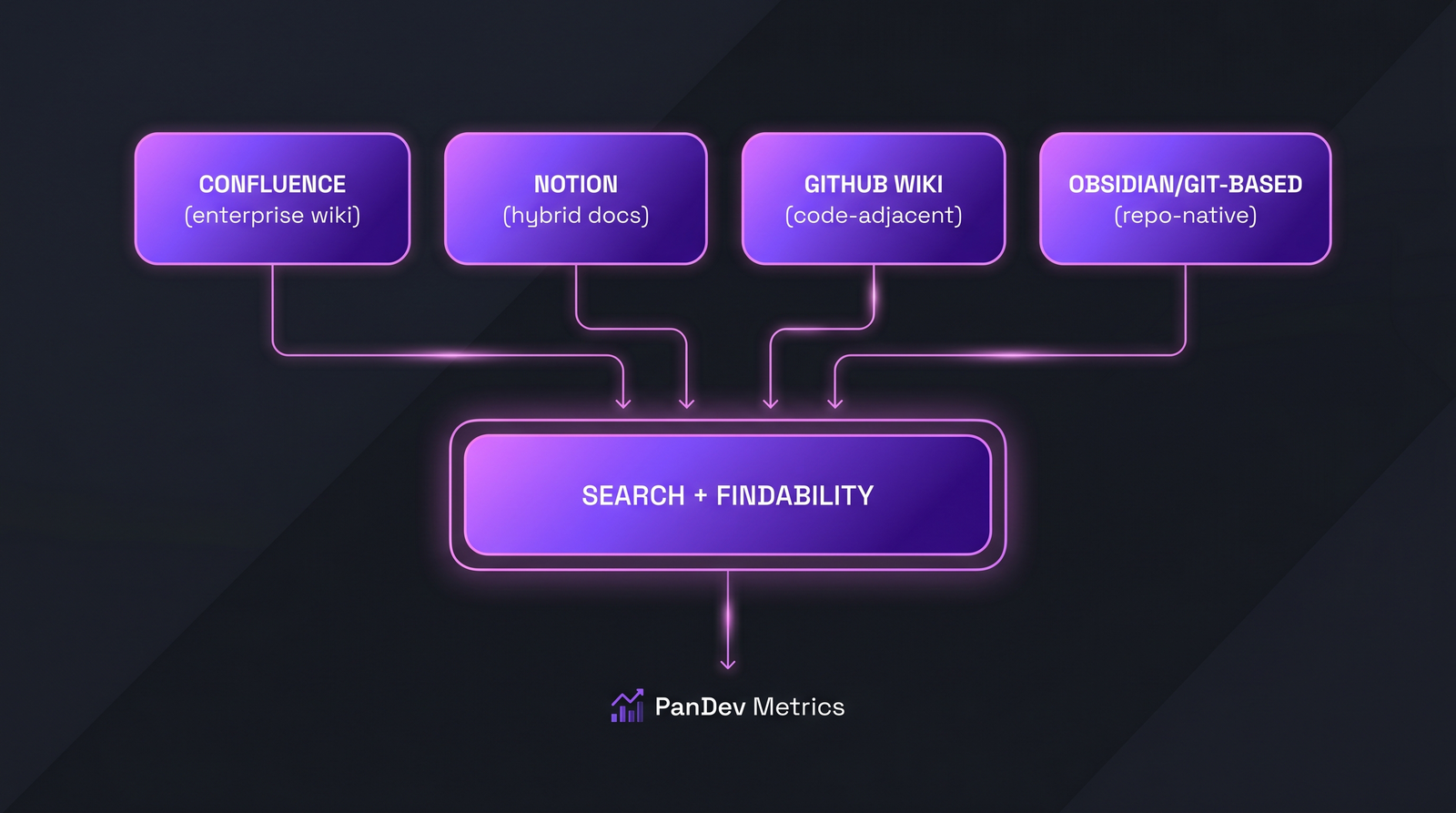

This is a comparison of four knowledge-management approaches — Confluence, Notion, GitHub Wiki, and Git-native docs (Obsidian/MkDocs/Docusaurus over a repo) — and a framework for picking one. Microsoft Research's 2024 engineering-productivity report listed "can't find documentation" as the #3 friction point behind slow builds and broken tests, ahead of code review delays. Tool choice is not neutral; it shapes whether documentation gets written, found, and trusted.

{/* truncate */}

Positioning

Confluence (Atlassian): the enterprise wiki. Good at page hierarchy, approvals, permission granularity. Heavy, slow, expensive.

Notion: the hybrid workspace — docs, databases, tasks, project views in one tool. Fast, pretty, developer-adjacent but not developer-native.

GitHub Wiki: the code-adjacent wiki. Lives next to the repo, uses Markdown, low friction to create. Weak on search, fragmented across repos.

Git-native docs (Obsidian / MkDocs / Docusaurus over a Git repo): documentation as code. Version-controlled, PR-reviewed, deploy-pipelined. Strongest for technical docs, weakest for everything else.

None of these are objectively best. Each is best for a specific team stage and content type.

The feature-by-feature comparison

The four tools, the shared problem: search + findability. Most teams pick a tool without planning the search layer, which is why knowledge evaporates.

Write-side friction (how painful is it to add a doc?)

| Capability | Confluence | Notion | GitHub Wiki | Git-native docs |
|---|---|---|---|---|
| Time to create first page | 3-5 min | 1-2 min | 30 seconds | 5-10 min (repo setup) |
| Markdown support | Partial | Yes (with quirks) | Yes | Yes (native) |
| Embed code with highlighting | Yes | Yes | Yes | Yes (best) |
| Tables | Yes (editor) | Yes (strong) | Yes (Markdown) | Yes |
| Diagrams (Mermaid etc.) | Plugin | Limited | Partial | Yes (native) |
| Draft / unpublished state | Yes | Yes | No | Yes (PR branches) |

GitHub Wiki wins on speed-to-create. Notion wins on richness. Git-native wins on technical precision but has setup cost.

Read-side friction (how easy to find and trust content?)

| Capability | Confluence | Notion | GitHub Wiki | Git-native docs |
|---|---|---|---|---|
| Full-text search (cross-workspace) | Strong | Strong | Weak (per-repo) | Depends on tooling (Algolia, lunr) |
| Discoverability for new hires | Weak (hierarchy rot) | Medium | Weak (fragmented) | Strong (with proper IA) |
| Staleness indicators | Manual | Manual | Manual | Git history (automatic) |
| Permission granularity | Strong | Strong | Tied to repo | Tied to repo |
| External (customer-facing) docs | Possible | Possible | No | Yes (designed for this) |

The GitHub Wiki's fragmentation across repos is the silent killer. A 20-repo organization has 20 search indexes. Finding "how does our staging auth work?" means guessing which repo to look in — and if the answer spans two repos, the wiki can't link it cleanly.

Collaboration and review

| Capability | Confluence | Notion | GitHub Wiki | Git-native docs |
|---|---|---|---|---|
| Inline comments | Yes | Yes | No (only issues) | Yes (via PR) |
| Approval workflow | Yes | Limited | No | Yes (PR review) |
| Version history | Yes | Yes | Yes (git) | Yes (git, strongest) |
| Diff-view of changes | Weak | Weak | Yes | Yes (strongest) |
| Bulk edit / refactor | Weak | Weak | Git-based | Strongest |

Git-native docs win the review/versioning contest decisively. If your engineering culture already does code review well, docs-as-code transfers that muscle directly.

AI search / retrieval (2026 relevance)

| Capability | Confluence | Notion | GitHub Wiki | Git-native docs |
|---|---|---|---|---|
| Built-in AI search | Yes (Atlassian Intelligence) | Yes (Notion AI) | No | Depends on deploy |
| RAG-friendly for internal LLM | Possible (API + export) | Possible (API) | Native (Git) | Native (Git) |
| Consistency of structure for AI | Medium | Medium | Low | High |

If you're feeding a self-hosted LLM with internal docs, Git-native is the cleanest source. Notion and Confluence both offer APIs but require ingestion pipelines. Wikis fragmented across repos are the hardest to RAG against without a lot of custom plumbing.
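To make the "cleanest source" point concrete, here is a minimal sketch of the first ingestion step for Markdown kept in a repo: splitting a file into heading-scoped chunks that a retrieval index could store. The names `Chunk` and `chunk_markdown` are hypothetical, and a real pipeline would add embedding, storage, and smarter chunk sizing on top of this.

```python
import re
from dataclasses import dataclass

HEADING = re.compile(r"^(#{1,6})\s+(.*)$")
FENCE = "`" * 3  # Markdown code-fence marker


@dataclass
class Chunk:
    source: str   # file path the chunk came from
    heading: str  # nearest heading above the text ("" if none)
    text: str     # body text under that heading


def chunk_markdown(source: str, markdown: str) -> list[Chunk]:
    """Split a Markdown document into one chunk per heading section."""
    chunks: list[Chunk] = []
    heading = ""
    body: list[str] = []
    in_fence = False

    def flush() -> None:
        # Emit the section collected so far, skipping empty ones.
        text = "\n".join(body).strip()
        if text:
            chunks.append(Chunk(source, heading, text))

    for line in markdown.splitlines():
        if line.lstrip().startswith(FENCE):
            in_fence = not in_fence
        # Lines starting with '#' inside a code fence are not headings.
        match = None if in_fence else HEADING.match(line)
        if match:
            flush()
            heading = match.group(2).strip()
            body = []
        else:
            body.append(line)
    flush()
    return chunks
```

Because each chunk keeps its file path and heading, an answer can link back to the exact section it came from. Getting the same provenance from a Confluence or Notion export usually takes extra mapping work.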

The pricing reality

| Tier | Confluence | Notion | GitHub Wiki | Git-native docs |
|---|---|---|---|---|
| 20 users | $6/user/mo (~$1,440/yr) | $10/user/mo (~$2,400/yr) | Free (included in GitHub) | Hosting: $0-30/mo |
| 100 users | $6/user/mo (~$7,200/yr) | $15/user/mo (~$18,000/yr) | Free | $30-200/mo + build pipeline |
| 500+ users | $11/user/mo Enterprise (~$66,000/yr) | $25/user/mo Enterprise (~$150,000/yr) | Free | $200-1000/mo + maintenance |

Notion is the most expensive option at every tier in this table, and the gap is widest at 500 users (about $150,000/yr against Confluence's $66,000/yr), a number most teams don't anticipate when piloting at 20. Confluence is the enterprise default for a reason: per-seat cost at scale beats Notion's. GitHub Wiki is free because it has the weakest feature set. Git-native is cheapest if your team already has deploy infrastructure, most expensive if you're standing it up from scratch.
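The annual figures in the table are simply seats × monthly price × 12. A small sketch reproduces them and lets you plug in your own headcount; the per-seat prices are the ones quoted in the table above, not live vendor pricing, and `annual_cost` is a hypothetical helper name.

```python
def annual_cost(users: int, per_user_month: float) -> float:
    """Yearly spend for a per-seat plan billed monthly."""
    return users * per_user_month * 12


# Per-seat monthly prices taken from the table above (illustrative only).
TIERS = {
    20: {"Confluence": 6, "Notion": 10},
    100: {"Confluence": 6, "Notion": 15},
    500: {"Confluence": 11, "Notion": 25},
}

for users, prices in TIERS.items():
    for tool, price in prices.items():
        print(f"{users:>4} users  {tool:<10} ${annual_cost(users, price):>9,.0f}/yr")
```

Running the numbers for the headcount you expect in two years, rather than the pilot headcount, is the cheap way to avoid the surprise described above.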

The decision framework

Choose Confluence if:

- You're already on Atlassian (Jira + Confluence integration saves real time)

- You have >200 engineers and need fine-grained permissions across many teams

- You have compliance or audit requirements that need page-level approval workflows

- Your knowledge contributors are more than 50% non-engineers (PMs, designers, ops)

Choose Notion if:

- You're a fast-growing startup (<200 engineers) that values write-side speed over read-side structure

- Your team already uses Notion for product or company-wide documentation

- You want one tool to cover docs + tasks + databases (accept the sprawl tradeoff)

- Budget sensitivity is low; features matter more

Choose GitHub Wiki if:

- You have <30 engineers and a small repo count (<5)

- You want minimum friction to write and maximum co-location with code

- You're okay with repo-scoped knowledge (no cross-repo authority)

- You're deferring the "real" knowledge-management decision to a later scale

Choose Git-native docs (MkDocs / Docusaurus over a repo) if:

- Your documentation is primarily technical (architecture, API, runbooks)

- You want docs reviewed like code (PR, approval, deploy)

- You need external-facing docs for customers or open-source

- Your team has the platform skills to maintain a build pipeline

- You plan to feed an internal LLM with your docs (RAG-ready out of the box)

The 80/20 analysis

Most engineering teams under 50 engineers can operate on GitHub Wiki for repo-scoped technical docs + Notion for cross-team processes. This is the pragmatic default. Upgrade to Confluence when compliance or team size forces it. Move to Git-native docs when you need external-facing docs or an LLM-ready knowledge base.

The hidden problem: nobody pays for findability

All four tools ship with search. All four teams I've seen in the last three years have complained their search is "broken." The issue is almost never the tool; it's that the team treats search as an afterthought.

Findability rules that apply regardless of tool:

- Every doc has a canonical URL, linked from one authority source. If you have three pages titled "Staging Auth," you've already lost.

- Old docs get marked stale or archived, not silently outdated. Confluence's "last edited 14 months ago" is a signal everyone ignores. An explicit `status: stale` tag isn't.

- The wiki homepage is maintained by a named person. "Everyone owns it" = nobody owns it. A named owner reviewing the homepage quarterly is the single highest-ROI knowledge-management practice.

- New hires write their first-month question into the wiki. Not "what they learned" — the questions they asked. That's your gap list.

Teams that skip these rules turn any tool into a graveyard. Teams that apply them get 70% of the value regardless of tool choice.
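For Markdown-based docs, the first two rules can be checked mechanically. The sketch below assumes each page starts with a flat `key: value` front-matter block containing a `title:` and an optional `status:` field. That layout is an assumption for illustration (only the `status: stale` tag comes from the rules above), and `front_matter` and `duplicate_titles` are hypothetical helper names.

```python
from collections import defaultdict


def front_matter(text: str) -> dict[str, str]:
    """Parse flat `key: value` pairs from a leading '---' front-matter block."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    fields: dict[str, str] = {}
    for line in lines[1:]:
        if line.strip() == "---":
            break
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip()] = value.strip()
    return fields


def duplicate_titles(docs: dict[str, str]) -> dict[str, list[str]]:
    """Map each title shared by several non-stale docs to the paths using it."""
    by_title: dict[str, list[str]] = defaultdict(list)
    for path, text in docs.items():
        meta = front_matter(text)
        if meta.get("status") == "stale":
            continue  # explicitly stale pages do not compete for authority
        title = meta.get("title", "").lower()
        if title:
            by_title[title].append(path)
    return {t: sorted(p) for t, p in by_title.items() if len(p) > 1}
```

Any title that appears in the result has more than one live page claiming it, which is the "three pages titled Staging Auth" situation. Running a check like this in CI is one way to surface it before a new hire does.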

Measuring knowledge management effectiveness

Three metrics that actually matter, measurable in any of the four tools:

- Time-to-first-answer for new hires. Track how long a new engineer takes to find an answer to a pre-agreed list of 10 questions. Should trend down week-over-week. Most teams never measure this; measuring it reveals the findability gaps.

- Doc reuse rate. Of pages created in the last 90 days, what fraction have been opened by at least 3 distinct people? Below 40% means most docs are written once and never read.

- Staleness ratio. Fraction of pages last updated >12 months ago. Over 50% is a sign that the team is accumulating rot. Confluence tends toward the worst staleness ratios because old pages persist silently.
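The last two metrics reduce to simple ratios once you have per-page metadata. The sketch below assumes you can export, for each page, a creation date, a last-updated date, and a count of distinct readers. The `Page` record and both function names are hypothetical, and how you obtain the data depends on the tool.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Page:
    path: str
    created: date
    last_updated: date
    distinct_readers: int  # unique people who opened the page


def staleness_ratio(pages: list[Page], today: date, max_age_days: int = 365) -> float:
    """Fraction of pages whose last update is older than max_age_days."""
    if not pages:
        return 0.0
    cutoff = today - timedelta(days=max_age_days)
    stale = sum(1 for p in pages if p.last_updated < cutoff)
    return stale / len(pages)


def reuse_rate(pages: list[Page], today: date,
               window_days: int = 90, min_readers: int = 3) -> float:
    """Among pages created in the window, fraction opened by min_readers or more people."""
    cutoff = today - timedelta(days=window_days)
    recent = [p for p in pages if p.created >= cutoff]
    if not recent:
        return 0.0
    reused = sum(1 for p in recent if p.distinct_readers >= min_readers)
    return reused / len(recent)
```

Compare the results against the thresholds above: a reuse rate below 0.4 or a staleness ratio above 0.5 is the warning sign. For Git-native docs, `last_updated` can plausibly be taken from the last commit touching the file (for example via `git log -1 --format=%cI -- <path>`). Reader counts need an analytics source, which this sketch does not cover.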

PanDev Metrics doesn't directly track documentation quality — our telemetry sees code and IDE activity. What we can do: correlate documentation access patterns (via Git + IDE events for Git-native docs) with onboarding ramp time. Teams with strong docs-as-code hygiene hit the meaningful-PR milestone 30-40% faster than teams with Confluence-only knowledge bases of comparable size. Correlation isn't causation, but the pattern is consistent.

Summary comparison

| Area | Winner | Note |
|---|---|---|
| Write-speed | GitHub Wiki (tie with Notion) | 30 seconds to a new page |
| Read-findability at scale | Confluence or Git-native | Depends on hierarchy discipline |
| Technical accuracy | Git-native | PR review catches errors before publication |
| Cost at scale (500+) | GitHub Wiki (free) or Confluence | Notion gets expensive fast |
| External-facing docs | Git-native (Docusaurus) | Only one designed for this |
| LLM-RAG readiness | Git-native | Markdown in a repo is the cleanest source |
| Non-engineer contributors | Notion or Confluence | WYSIWYG wins when writers aren't technical |

Common mistakes to avoid

- Adopting a new tool before solving the ownership problem. Tool migration is theater if no one owns the homepage.

- Running two tools in parallel "for a while." The "while" becomes permanent and you get the 1,400-page problem.

- Optimizing for writers, not readers. Notion is fast to write, slow to find. Most teams are read-heavy and write-light; choose accordingly.

- Ignoring the LLM future. Your docs will be consumed by AI in the next 3 years. Plan for structured, Git-based sources.

- Treating search as the tool's problem. It's your team's problem. No tool will save you from a team that doesn't curate.

The contrarian claim: most teams blame their wiki for being "a mess." It's not the wiki. It's the team's refusal to treat documentation as a first-class engineering artifact with an owner, a review process, and a deprecation policy. Any of the four tools works when those three things exist. None work when they don't.

The honest limit

Our data is strongest on IDE and Git activity. We see where documentation links are clicked and which source files are opened during onboarding — but we don't measure documentation quality directly. The search-time numbers above come from customer interviews, published engineering blogs (Shopify, GitLab, Stripe), and 4 years of watching customers pick tools. Your mileage will vary; measure your own time-to-first-answer before declaring victory.

Related reading

- New Developer Onboarding: How Metrics Show the Ramp-Up to Full Productivity — the strongest productivity signal tied to knowledge management

- Design Docs: When to Write, When to Skip — the complement to knowledge tooling; docs that need to exist

- Self-Hosted LLMs for Engineering Teams — why your doc strategy affects your AI strategy

- External: GitLab Handbook — the most-studied example of docs-as-code done at scale, publicly available as a reference