LegalTech Engineering: Compliance-Heavy Development Done Right

A LegalTech engineer doesn't just ship features. Every commit touches data that could be subpoenaed, privileged, or regulated under state-specific bar association rules. The global legal-software market crossed $29B in 2024 (Deloitte Legal Operations 2024 report), and with it came a compliance surface most SaaS engineering teams never see: attorney-client privilege, SOC 2 Type II as baseline, ISO 27001 for document handling, plus bar-association e-discovery rules in 50+ jurisdictions.

Productivity measurement in this environment is not a surveillance tool — it's an audit artifact. The same IDE telemetry that tells a SaaS EM "the team is healthy" is, in LegalTech, evidence of SDLC maturity in front of an enterprise law-firm client's IT security review.

{/* truncate */}

Why LegalTech engineering is different

Three pressures stack on the engineering org that don't exist in consumer SaaS:

Privilege-aware data handling. Anything touching client-matter data is potentially privileged. Engineers can't log it, can't cache it, can't ship it to a third-party analytics service without a DPA and a review. A careless console.log of a matter ID can be a disclosable event.
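One cheap defense against the accidental-log problem is to scrub matter identifiers before a record reaches any output. Below is a minimal sketch using Python's standard logging.Filter. It assumes matter IDs follow a hypothetical "MAT-" prefix plus 4-10 digits; your product's numbering scheme will differ, so treat the pattern as a placeholder.

```python
import logging
import re

# Hypothetical matter-ID format: "MAT-" followed by 4-10 digits.
# Replace with the numbering scheme your product actually uses.
MATTER_ID_PATTERN = re.compile(r"\bMAT-\d{4,10}\b")


class MatterIdRedactor(logging.Filter):
    """Rewrite log records so matter IDs never reach a handler's output."""

    def filter(self, record: logging.LogRecord) -> bool:
        # Render the final message first so IDs passed as %-style args are caught too.
        message = record.getMessage()
        record.msg = MATTER_ID_PATTERN.sub("[REDACTED-MATTER]", message)
        record.args = None  # args are already merged into msg
        return True  # keep the record; only its content is scrubbed


def build_logger(name: str = "legaltech") -> logging.Logger:
    """Create a logger whose handler redacts matter IDs (illustrative setup)."""
    logger = logging.getLogger(name)
    handler = logging.StreamHandler()
    handler.addFilter(MatterIdRedactor())
    logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    return logger
```

Attaching the filter to the handler means every record routed through that handler is scrubbed, whichever logger emitted it. Redaction is a backstop; keeping matter data out of log calls in the first place is still the primary control.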

Jurisdictional fragmentation. A product sold to law firms in New York, London, and Frankfurt must simultaneously satisfy American Bar Association Model Rule 1.6, Solicitors Regulation Authority (SRA) standards in the UK, and GDPR, including its Art. 9 special-category rules. Each jurisdiction has a different data-residency expectation. Engineers don't just deploy to regions; they deploy to legal regimes.

Audit-ready by default. Enterprise law-firm procurement includes a 100-to-200-question IT security questionnaire. Change management, separation of duties, access logs, and deployment approvals all need evidence trails. The Stack Overflow 2024 Developer Survey found that 31% of developers report spending significant time on compliance work; in LegalTech teams we consistently see 45-55%.

| Framework / regulation | What it requires | Where it hits engineering |
|---|---|---|
| ABA Model Rule 1.6 + state bar rules | Duty of confidentiality, competent use of technology | No third-party data leaks, vetted subprocessors, cert pinning |
| SOC 2 Type II | Control evidence over 6-12 months | Immutable audit logs, change-approval trails, access reviews |
| ISO 27001 / ISO 27018 | Information security + cloud PII handling | Classification, encryption at rest + in transit, key management |
| GDPR Art. 6, 9 + LIA | Lawful basis, special-category data | Data-subject erasure paths, consent records, LIA documentation |
| e-Discovery (FRCP 26+37) | Preservation of relevant ESI | Deterministic snapshots, WORM storage, deletion holds |
| NY DFS 500 / CCPA / HIPAA if crossover | Breach notification, encryption, access controls | Incident runbooks tuned to 72-hour / 30-day windows |

The metrics that matter in LegalTech

1. Change approval lead time — not raw deployment frequency

Classic DORA deployment frequency fits cloud-native SaaS teams. In LegalTech, a deploy that skips change-advisory-board review is a SOC 2 finding. The more useful metric is the time between a change-approval ticket opening and the deployment closing it. Healthy teams target under 48 hours for standard changes, under 30 minutes for pre-approved change types.

A target of "deploy 10× per day" in a law-firm-facing product is often a red flag, not a badge — it means change control is being bypassed.

Benchmark: median change-approval-to-deploy 24-72h for mid-market LegalTech; elite teams get to 2-6h through pre-approved standard-change catalogs.
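Computing this metric only requires joining each change ticket to the deploy that closed it. Here is a minimal sketch, assuming you can export ticket-open and deploy timestamps (for example from Jira or Azure DevOps plus your CI system); the Change fields and the "standard" / "pre_approved" labels are hypothetical, while the 48-hour and 30-minute targets come from the text above.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Optional

# Targets quoted in the text: under 48h for standard changes,
# under 30 minutes for pre-approved change types.
TARGET_HOURS = {"standard": 48.0, "pre_approved": 0.5}


@dataclass
class Change:
    ticket_id: str                # hypothetical change-ticket key
    change_type: str              # "standard" or "pre_approved"
    ticket_opened: datetime
    deployed: Optional[datetime]  # None while the change has not shipped


def lead_time_report(changes: list[Change]) -> dict[str, tuple[float, bool]]:
    """Median approval-to-deploy hours per change type, and whether it beats target."""
    by_type: dict[str, list[float]] = {}
    for c in changes:
        if c.deployed is None:
            continue  # still open; excluded from the median
        hours = (c.deployed - c.ticket_opened).total_seconds() / 3600
        by_type.setdefault(c.change_type, []).append(hours)

    report = {}
    for change_type, hours_list in by_type.items():
        med = median(hours_list)
        target = TARGET_HOURS.get(change_type)
        report[change_type] = (med, target is not None and med < target)
    return report
```

Using the median rather than the mean keeps one stalled CAB ticket from dominating the number, which matches how the benchmark above is phrased.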

2. Access log completeness

Every production system touching matter data must emit access logs, and those logs must survive a 7-year retention audit. The engineering-health metric: percentage of production services emitting structured access logs to an immutable store. A target of 100% sounds aspirational; in regulated environments, anything under 99.5% fails the next audit.
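The percentage itself is simple; the useful part is also listing which services are missing. A minimal sketch, assuming you have a service inventory and can look up when each service's last structured access log arrived in the immutable store. The 24-hour freshness window is an assumption; the 99.5% bar is the one quoted above.

```python
from datetime import datetime, timedelta
from typing import Optional

AUDIT_THRESHOLD_PCT = 99.5               # bar quoted in the text
FRESHNESS_WINDOW = timedelta(hours=24)   # assumption: how recent a log must be to count


def access_log_completeness(
    last_log_seen: dict[str, Optional[datetime]], now: datetime
) -> tuple[float, list[str]]:
    """Return (% of services with fresh access logs, sorted list of services missing)."""
    if not last_log_seen:
        raise ValueError("service inventory is empty")
    missing = sorted(
        name
        for name, seen in last_log_seen.items()
        if seen is None or now - seen > FRESHNESS_WINDOW
    )
    pct = 100.0 * (len(last_log_seen) - len(missing)) / len(last_log_seen)
    return pct, missing


def passes_audit_bar(pct: float) -> bool:
    """Anything under the threshold is treated as a failing audit result."""
    return pct >= AUDIT_THRESHOLD_PCT
```

With 200 services, a single silent one already puts you exactly at 99.5%; a second one fails the bar, which is why the missing list matters more than the headline number.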

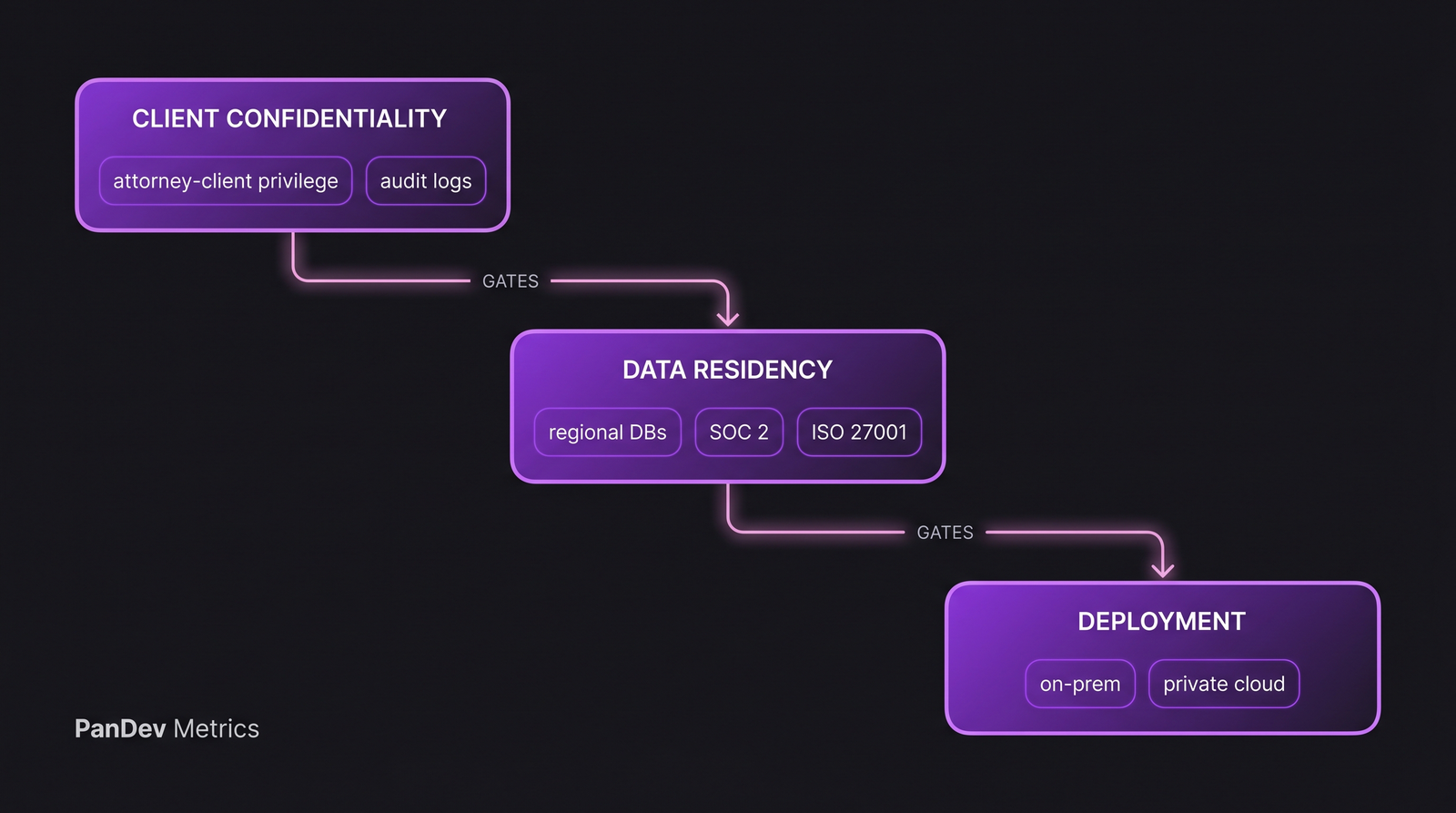

Three layers gate each other: privilege rules at the top, residency and certification in the middle, deployment model at the bottom. Breaking any layer leaks upward.


3. Separation-of-duties adherence rate

No single engineer should write, merge, and deploy a change to production systems that handle privileged data. SOC 2 criteria CC6.1 and CC8.1 require this separation. Track the percentage of production deploys with distinct author, approver, and deployer identities. We routinely see this drop to 60-70% in teams that don't measure it, typically because the same engineer approves their own PR through a shared service account.
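A minimal sketch of the adherence rate, assuming each deploy record carries the author, approver, and deployer identities from your Git host and pipeline. The Deploy fields and the shared-account names are hypothetical; treating approvals through a shared account as non-independent is an assumption that mirrors the failure mode described above.

```python
from dataclasses import dataclass

# Assumption: approvals through these identities cannot be tied to a distinct
# human, so they do not count as independent review. Names are hypothetical.
SHARED_ACCOUNTS = {"shared-svc"}


@dataclass(frozen=True)
class Deploy:
    change_id: str
    author: str
    approver: str
    deployer: str


def sod_adherence(deploys: list[Deploy]) -> tuple[float, list[str]]:
    """Percent of deploys with three distinct identities and a non-shared approver."""
    if not deploys:
        raise ValueError("no deploys in the reporting window")
    violations = [
        d.change_id
        for d in deploys
        if len({d.author, d.approver, d.deployer}) < 3 or d.approver in SHARED_ACCOUNTS
    ]
    rate = 100.0 * (len(deploys) - len(violations)) / len(deploys)
    return rate, violations
```

Filtering the input to the last 90 days before calling it gives the kind of answer a buyer's security review typically asks for, with the violating change IDs ready as evidence.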

4. Dependency and CVE response SLA

A law firm's Monday-morning IT security briefing might reference a CVE published Friday at 10pm. LegalTech engineering SLAs for CVE remediation need to be tighter than general SaaS — typically 24h for critical, 7 days for high. Tracking the time between CVE disclosure and patched-in-production is an engineering-health metric that also doubles as RFP-defensible evidence.
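A minimal sketch of SLA breach detection, assuming you can export each CVE's disclosure time and the time the fix reached production (for example from a dependency scanner plus deploy history). The CveExposure fields and the CVE-EXAMPLE identifiers are hypothetical placeholders; the 24-hour and 7-day SLAs are the ones quoted above.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# SLAs quoted in the text: 24h for critical, 7 days for high.
REMEDIATION_SLA = {"critical": timedelta(hours=24), "high": timedelta(days=7)}


@dataclass
class CveExposure:
    cve_id: str
    severity: str                        # "critical", "high", "medium", "low"
    disclosed: datetime
    patched_in_prod: Optional[datetime]  # None if still unpatched


def sla_breaches(exposures: list[CveExposure], now: datetime) -> list[str]:
    """IDs whose disclosure-to-production-patch time exceeds the SLA.

    Unpatched exposures are measured up to `now`, so they surface as breaches
    as soon as the window lapses rather than only after the fix ships.
    """
    breaches = []
    for e in exposures:
        sla = REMEDIATION_SLA.get(e.severity)
        if sla is None:
            continue  # no SLA defined here for medium/low
        end = e.patched_in_prod if e.patched_in_prod is not None else now
        if end - e.disclosed > sla:
            breaches.append(e.cve_id)
    return breaches
```

For a CVE published Friday at 10pm, a critical SLA expires Saturday at 10pm, well before the Monday briefing.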

5. Coding time on regulated paths vs non-regulated

In LegalTech, engineers split time between code that touches client-matter data (privileged path) and code that doesn't (marketing site, internal admin, internal tooling). Privileged-path changes carry 2-3× the review overhead. Measuring the actual distribution of coding effort between paths tells you where to invest in review automation, where to simplify the compliance tax, and where to push for self-service.
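A minimal sketch of the split, assuming heartbeat records reduced to (project, seconds) pairs and a team-maintained set of projects tagged as privileged. Classifying by project tag rather than file path keeps the calculation compatible with the data-minimization default discussed below; the project names are hypothetical.

```python
from collections import Counter

# Hypothetical project names tagged as touching client-matter data.
PRIVILEGED_PROJECTS = {"matter-service", "doc-review"}


def effort_split(heartbeats: list[tuple[str, int]]) -> dict[str, float]:
    """Share of coding seconds spent on privileged vs non-privileged projects."""
    totals = Counter()
    for project, seconds in heartbeats:
        bucket = "privileged" if project in PRIVILEGED_PROJECTS else "non_privileged"
        totals[bucket] += seconds

    grand_total = sum(totals.values())
    if grand_total == 0:
        return {"privileged": 0.0, "non_privileged": 0.0}
    return {b: totals[b] / grand_total for b in ("privileged", "non_privileged")}
```

Combined with the 2-3× review overhead on privileged paths, the resulting share gives a rough sense of how much total review load those paths generate.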

How compliance changes measurement

Four things change versus a standard SaaS team:

Self-report productivity is disqualified from audit evidence. Auditors want objective signals — IDE telemetry, commit metadata, approval logs. Self-report surveys of "how did the sprint go?" are fine for retros; they're not evidence for SOC 2. This aligns with the broader argument we make about IDE telemetry vs self-report — in regulated environments, the argument isn't philosophical, it's procurement.

On-prem is often non-negotiable. Enterprise law-firm clients frequently require air-gapped or private-cloud deployment of any analytics tools used on their engineering org. This filters the vendor landscape hard — most SaaS-only productivity tools are immediately disqualified.

Data minimization is the default. We collect IDE heartbeat data at project, file-path, and language granularity, never file contents or keystrokes. For LegalTech teams adopting engineering intelligence, we recommend collecting nothing finer than the repository name and tagging sensitive projects so their path data is excluded entirely. The insight trade-off is small, and the compliance posture is dramatically cleaner.
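This recommendation is easiest to enforce with an allowlist: build the stored record from named fields instead of deleting risky ones, so any new field added upstream is dropped by default. A minimal sketch; the field names (repo, file_path, language, timestamp, duration_s) and the sensitive-repo set are hypothetical, not any particular tool's schema.

```python
from typing import Any


def minimize_heartbeat(raw: dict[str, Any], sensitive_repos: set[str]) -> dict[str, Any]:
    """Build the stored heartbeat from an allowlist; file paths are never copied."""
    is_sensitive = raw["repo"] in sensitive_repos
    return {
        # Sensitive repos are recorded only as a tag, not by name.
        "repo": None if is_sensitive else raw["repo"],
        "sensitive": is_sensitive,
        "language": raw["language"],
        "timestamp": raw["timestamp"],
        "duration_s": raw["duration_s"],
    }
```

Because file_path is simply never read, there is no code path that could accidentally persist it.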

Audit trails become a product feature. The thing engineering leaders track for self-management (who changed what, when) becomes something the law firm asks to see during procurement.

Typical LegalTech engineering team profile

| Parameter | Typical range |
|---|---|
| Team size | 15-80 engineers |
| Tech stack | .NET/C# or Java dominant, Python for document processing, React/TypeScript frontend |
| Deploy cadence | Weekly to biweekly; rarely daily on privileged paths |
| Primary pressure | Audit readiness + data residency + jurisdictional breadth |
| Toolchain | Jira or Azure DevOps (not GitHub Issues), on-prem GitLab or Bitbucket DC, Jenkins or Azure Pipelines |
| Mandatory certifications | SOC 2 Type II, ISO 27001, often HIPAA BA for litigation tech |
| Client base | Law firms (top-200), corporate legal, regulators |

What to track differently from a standard SaaS team

- Approval velocity over change velocity — a faster approval process is the real lever; shipping more frequently without approval speed improvement is a risk, not a win.

- After-hours work by privileged-project tag — after-hours coding on a privileged path raises a different flag than after-hours work on the marketing site. Surface the distinction.

- Vacation and OOO detection with matter-conflict awareness — conflicts-of-interest rules mean the engineer who builds the tool for Client A may be excluded from Client B's instance; the team has to know about OOO at that granularity to reassign work.

- Cost per feature by jurisdiction — the same feature may cost 1.4× to ship in Germany versus the US purely due to review overhead; track it explicitly so product decisions are informed.
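The after-hours distinction from the list above can be sketched by bucketing after-hours heartbeat time by the privileged tag. This sketch assumes timestamps are already in the engineer's local time and uses a hypothetical 09:00-19:00, Monday-Friday working window; real teams will need per-person schedules and holiday calendars.

```python
from datetime import datetime, time

# Assumed working window; adjust per team, region, and individual schedule.
WORKDAY_START = time(9, 0)
WORKDAY_END = time(19, 0)


def is_after_hours(ts: datetime) -> bool:
    """True on weekends or outside the working window (ts in local time)."""
    if ts.weekday() >= 5:  # Saturday=5, Sunday=6
        return True
    return not (WORKDAY_START <= ts.time() < WORKDAY_END)


def after_hours_by_tag(heartbeats: list[tuple[datetime, bool, int]]) -> dict[str, int]:
    """Sum after-hours seconds, split by whether the project is tagged privileged."""
    totals = {"privileged": 0, "non_privileged": 0}
    for ts, privileged, seconds in heartbeats:
        if is_after_hours(ts):
            totals["privileged" if privileged else "non_privileged"] += seconds
    return totals
```

Keeping the two buckets separate lets a late-night fix on the marketing site stay a wellbeing signal, while late-night work on a privileged path can be routed to whoever reviews access patterns.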

Common pitfalls

- Optimizing for throughput only — pushing for more PRs per week in a LegalTech team is often pushing for shortcuts around review. The right target is stable throughput with steady approval velocity.

- Treating compliance as overhead to minimize — it IS overhead, but it's also the moat. Sophisticated legal buyers will pay a 30-50% price premium for a vendor that demonstrably handles compliance well.

- Using SaaS-only productivity tools — if the tool can't ship on-prem or private-cloud, it will fail procurement reviews and waste your evaluation budget.

- Ignoring the cost of context switching between privileged and non-privileged code — engineers switching constantly pay a measurable context-switching tax; in LegalTech that tax is amplified by the mental mode-switch between "normal SaaS engineering" and "audit-aware engineering."

The contrarian position

Most LegalTech engineering-org advice assumes compliance is a cost center. We think the inverse is true: LegalTech teams that instrument their SDLC rigorously shorten procurement conversations by weeks. A buyer who gets a 3-click answer to "show me your separation-of-duties adherence over the last 90 days" has fewer follow-up questions than one handed a PDF policy document. Engineering intelligence in LegalTech is not just an internal management tool; it's a pre-sales accelerator.

Where PanDev Metrics fits

Our on-prem Docker and Kubernetes deployment (a single docker-compose up or a Helm chart) is the baseline requirement for most LegalTech clients. We track the metrics above (change approval lead time, separation-of-duties adherence, after-hours coding on tagged-sensitive projects) via IDE heartbeats plus Git event integration. Our data collection is project and language level, not file-content level, which matches the data-minimization default LegalTech buyers expect.

An honest limit

Our dataset tilts to LegalTech companies at 20-150 engineers. Enterprise legal departments running 500+ person engineering orgs have regulatory surface areas (banking-legal crossover, multi-jurisdictional defense) that we've seen but haven't yet measured at scale. The frameworks above are defensible at the scales we've observed; treat the numbers as directional for the Fortune 500 legal IT case.