OKRs for Engineering Teams: Templates That Actually Work (2026 Examples)

McKinsey research on engineering effectiveness found that the highest-performing organizations share one trait: their engineering goals are explicitly connected to business outcomes. Yet most engineering teams write OKRs like "Improve code quality" with a key result of "Increase test coverage to 80%." That's not an OKR. That's a task with a number next to it. Good engineering OKRs connect technical work to business outcomes, and the right metrics make them actually measurable.

{/* truncate */}

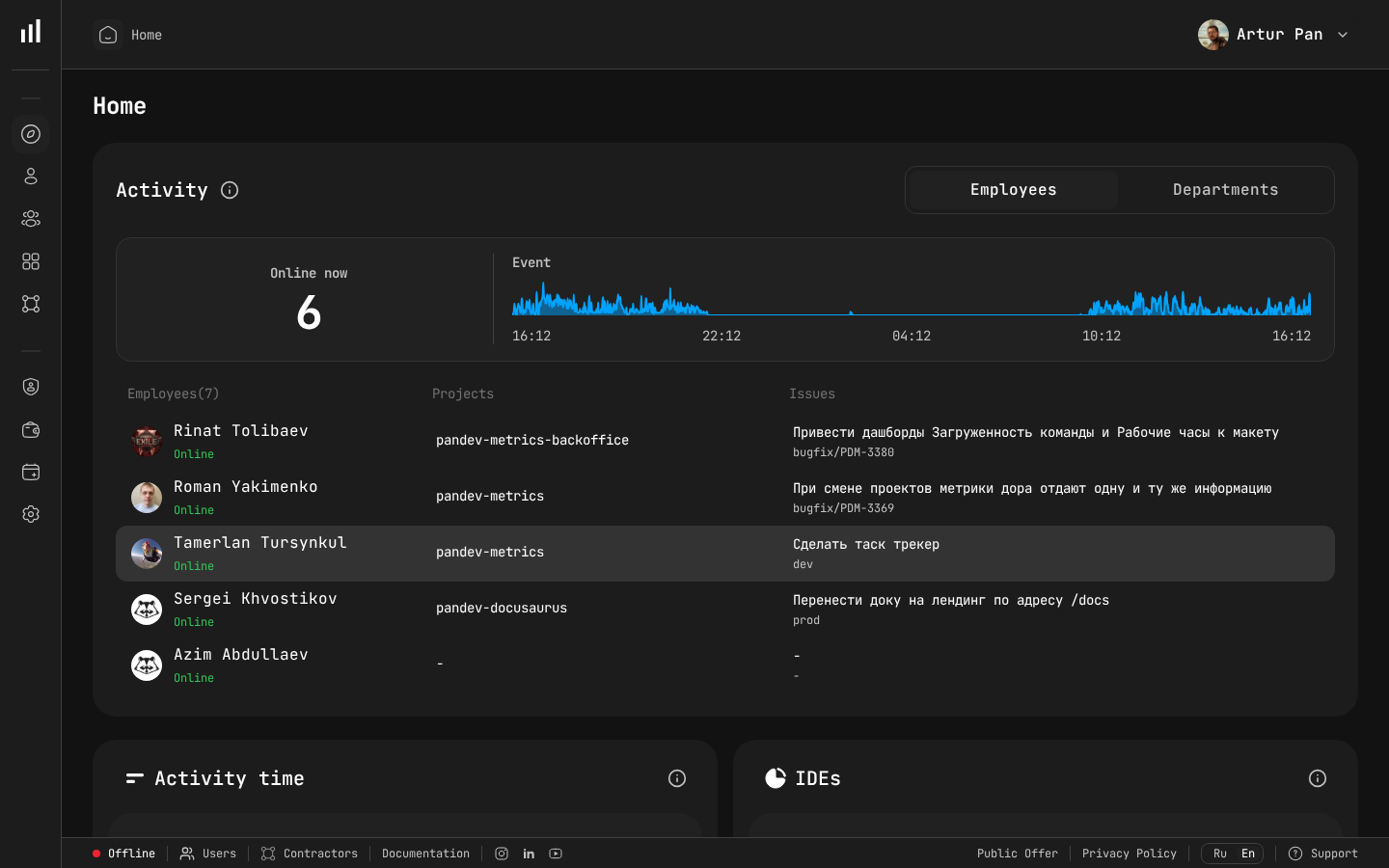

Team dashboard providing real-time visibility into engineering OKR progress.

Why Engineering OKRs Fail

Before writing good OKRs, let's understand why most engineering OKRs are bad.

Failure Mode 1: Output OKRs

Objective: Improve engineering productivity

KR1: Close 150 Jira tickets per sprint

KR2: Increase deployment frequency to 5x/week

KR3: Reduce average PR size to under 200 lines

Why it fails: These are outputs, not outcomes. You can close 150 tickets by splitting one task into 150 subtasks. You can deploy 5x/week by deploying trivial changes. You can reduce PR size by creating meaningless micro-PRs. None of this creates value.

Failure Mode 2: Activity OKRs

Objective: Invest in technical excellence

KR1: Conduct 4 tech talks per quarter

KR2: Each developer spends 20% time on tech debt

KR3: Complete 3 architecture documents

Why it fails: These are activities, not results. You can conduct 4 terrible tech talks. You can spend 20% on tech debt that doesn't matter. You can write architecture documents nobody reads. Where's the outcome?

Failure Mode 3: Unmeasurable OKRs

Objective: Build a world-class engineering culture

KR1: Improve developer happiness

KR2: Attract top talent

KR3: Be recognized as an engineering-led company

Why it fails: How do you know when you've succeeded? "Improve developer happiness" — by how much? Measured how? "Attract top talent" — what's top talent? How many? These are wishes, not key results.

Failure Mode 4: Irrelevant OKRs

Objective: Modernize the tech stack

KR1: Migrate 100% of services to Kubernetes

KR2: Adopt TypeScript across all frontend projects

KR3: Implement GraphQL for all APIs

Why it fails: Maybe all of these are good technical decisions. But the OKR doesn't explain why any of it matters to the business. If the CEO asks "why should I care about Kubernetes?" and you can't answer with a business outcome, the OKR is irrelevant.

The Framework: Outcome-Driven Engineering OKRs

Good engineering OKRs follow this structure:

Objective: [Business or user outcome that engineering enables]

Key Result 1: [Measurable change in a metric that proves progress]

Key Result 2: [Measurable change in a different dimension]

Key Result 3: [Measurable change that prevents unintended consequences]

The key principles:

- Objectives describe outcomes, not activities. "Reduce customer-facing downtime" not "Improve infrastructure."

- Key Results are measurable and time-bound. "Reduce P1 incidents from 6/quarter to 2/quarter" not "Reduce incidents."

- At least one KR prevents gaming. If your objective is speed, include a quality KR. If your objective is quality, include a delivery KR.

- Engineering OKRs connect to company OKRs. If the company objective is "Expand to enterprise market," the engineering OKR might be about reliability (enterprise customers demand 99.9% uptime) or security (SOC2 compliance).
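
The structure and principles above can be sketched as a small data model. This is an illustrative sketch, not a prescribed schema: the class names, field names, the `problems` helper, and the company objective string are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class KeyResult:
    """One measurable key result (field names are illustrative)."""
    description: str
    baseline: float
    target: float
    measured_by: str
    is_counter_metric: bool = False  # guards against gaming the other KRs


@dataclass
class Objective:
    outcome: str               # business or user outcome engineering enables
    supports_company_okr: str  # company objective this connects to
    key_results: list[KeyResult] = field(default_factory=list)

    def problems(self) -> list[str]:
        """List the ways this OKR violates the principles above."""
        issues = []
        if not self.supports_company_okr:
            issues.append("not linked to a company OKR")
        if not any(kr.is_counter_metric for kr in self.key_results):
            issues.append("no counter-metric KR to prevent gaming")
        for kr in self.key_results:
            # A counter-metric may hold steady; any other KR must move.
            if kr.baseline == kr.target and not kr.is_counter_metric:
                issues.append(f"'{kr.description}' has no measurable change")
        return issues


delivery = Objective(
    outcome="Accelerate feature delivery to support Q3 revenue targets",
    supports_company_okr="Hit Q3 revenue targets",  # hypothetical company OKR
    key_results=[
        KeyResult("Lead Time (days)", 5, 2, "DORA Lead Time"),
        KeyResult("Planning Accuracy (%)", 65, 80, "Sprint commitment vs. delivery"),
        KeyResult("Change failure rate (%)", 8, 8, "DORA Change Failure Rate",
                  is_counter_metric=True),
    ],
)
print(delivery.problems())  # []
```

An objective such as "Modernize the tech stack" with no company link and no counter-metric would return two problems, which matches Failure Mode 4.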

Engineering OKR Templates

Here are complete, usable OKR templates for common engineering objectives. Adapt the specific numbers to your context.

Template 1: Delivery Speed

When to use: The business needs engineering to ship faster — product roadmap is bottlenecked by engineering capacity or process.

Objective: Accelerate feature delivery to support Q3 revenue targets

KR1: Reduce average Lead Time from commit to production from 5 days to 2 days

Measured by: DORA Lead Time (4-stage breakdown)

KR2: Increase Planning Accuracy from 65% to 80%

Measured by: Sprint commitment vs. actual delivery

KR3: Maintain or improve change failure rate (currently 8%, target ≤ 8%)

Measured by: DORA Change Failure Rate

Why KR3 exists: Prevents gaming KR1 by shipping untested code. If speed increases but quality drops, the OKR isn't met.

How to track progress:

| Week | Lead Time | Planning Accuracy | Change Failure Rate | On Track? |
|---|---|---|---|---|
| 1 | 4.8 days | 67% | 7% | Starting |
| 4 | 4.1 days | 71% | 8% | Yes |
| 8 | 3.2 days | 75% | 7% | Yes |
| 12 | 2.1 days | 79% | 6% | Nearly achieved |
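
One way to read a tracking table like this is as the share of the baseline-to-target gap closed so far. The sketch below assumes simple linear progress; the function name `kr_progress` is illustrative. It is applied to the Week 12 row and the Template 1 baselines.

```python
def kr_progress(baseline: float, target: float, current: float) -> float:
    """Fraction of the baseline-to-target distance covered, clamped to [0, 1].

    Works for metrics that should fall (Lead Time) or rise (Planning Accuracy).
    """
    if target == baseline:
        raise ValueError("target equals baseline; use a threshold check instead")
    fraction = (current - baseline) / (target - baseline)
    return max(0.0, min(1.0, fraction))


# Week 12 values against the Template 1 baselines and targets
lead_time = kr_progress(baseline=5.0, target=2.0, current=2.1)
planning = kr_progress(baseline=65, target=80, current=79)
print(round(lead_time, 2), round(planning, 2))  # 0.97 0.93
```

KR3 is a hold-the-line metric (target ≤ 8%), so it is checked as a threshold rather than as progress: 6% at Week 12 satisfies it.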

Template 2: Reliability & Uptime

When to use: Customer trust is at stake, or you're moving upmarket to enterprise customers who demand SLAs.

Objective: Achieve enterprise-grade reliability to close the Fortune 500 pipeline

KR1: Reduce P1 production incidents from 6/quarter to 2/quarter or fewer

Measured by: Incident tracking system

KR2: Improve mean time to recovery (MTTR) from 4 hours to under 1 hour

Measured by: Incident resolution timestamps

KR3: Achieve 99.95% uptime for core customer-facing services

Measured by: Monitoring and SLA tracking

KR4: Ship at least 85% of planned roadmap features (Delivery Index ≥ 0.85)

Measured by: Delivery Index from sprint tracking

Why KR4 exists: Prevents "we can't ship anything because we're focused on reliability." Reliability AND delivery must coexist.
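
To make the uptime KR concrete, it helps to convert the percentage into a downtime budget. This is a minimal sketch that assumes a 90-day quarter; the function name is illustrative.

```python
def downtime_budget_minutes(uptime_target: float, days: int) -> float:
    """Minutes of downtime allowed by an uptime target over a period."""
    total_minutes = days * 24 * 60
    return total_minutes * (1 - uptime_target)


# 99.95% over an assumed 90-day quarter
print(round(downtime_budget_minutes(0.9995, days=90), 1))  # 64.8
# For comparison, 99.9% over the same period
print(round(downtime_budget_minutes(0.999, days=90), 1))   # 129.6
```

At 99.95%, the whole quarter allows roughly an hour of downtime, so a single incident at the starting MTTR of 4 hours would exceed the budget. That is why KR2 and KR3 belong in the same objective.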

Template 3: Developer Productivity

When to use: Engineering feels slow but you're not sure why. Teams are busy but output doesn't match effort.

Objective: Remove systemic blockers so engineers can do their best work

KR1: Increase average Focus Time from 1.8 hours/day to 3.0 hours/day across engineering

Measured by: Focus Time metric (engineering platform)

KR2: Reduce average PR review time from 12 hours to 4 hours

Measured by: PR cycle time (review stage)

KR3: Improve developer satisfaction score from 6.2/10 to 8.0/10 on "I have enough uninterrupted time to do deep work"

Measured by: Quarterly developer survey

Why this works: KR1 is the quantitative measure, KR3 is the qualitative validation. If Focus Time improves but developers don't feel the difference, something is wrong with the measurement or the intervention.

Specific initiatives that might achieve these KRs:

| Initiative | Expected Impact on KRs |
|---|---|
| No-meeting Wednesdays | KR1: +0.5 hrs Focus Time on Wednesdays |
| Auto-assign PR reviewers | KR2: -3 hrs review wait time |
| Reduce recurring meetings by 30% | KR1: +0.3 hrs Focus Time org-wide |
| Improve CI pipeline speed | KR2: indirect (faster feedback loops) |

Note: The initiatives are how you achieve the OKR, not the OKR itself. The OKR is the outcome. Track both.

Template 4: Technical Debt Reduction

When to use: Technical debt is measurably impacting delivery speed, reliability, or developer experience.

Objective: Eliminate the technical debt that's slowing down feature delivery

KR1: Reduce deployment time for the monolith from 45 minutes to under 10 minutes

Measured by: CI/CD stage in Lead Time breakdown

KR2: Reduce time spent on bug fixes from 25% of engineering capacity to under 15%

Measured by: Project/category allocation tracking

KR3: Improve Lead Time for the Platform team from 8 days to 3 days (the team most affected by debt)

Measured by: Team-level Lead Time

Why this works: Instead of "reduce tech debt" (unmeasurable), these KRs target the specific impacts of tech debt: slow deployments, high bug load, slow delivery. Fix the impacts, and you've fixed the debt that matters.

Template 5: Scaling & Growth

When to use: You're hiring rapidly and need to ensure the organization scales without losing efficiency.

Objective: Scale engineering from 40 to 65 developers without losing delivery efficiency

KR1: Maintain Delivery Index above 0.80 org-wide throughout the hiring period

Measured by: Delivery Index trend

KR2: New hires reach productive contribution (Activity Time within 20% of team average) within 8 weeks

Measured by: New hire ramp-up tracking

KR3: Keep engineering cost per delivered feature within 10% of pre-hiring baseline (adjusted for team size)

Measured by: Cost per project analytics

KR4: Maintain Productivity Score within 5% of pre-hiring baseline during scaling

Measured by: Org-wide Productivity Score

Why KR3 and KR4 exist: Hiring more people doesn't help if each person delivers proportionally less. These KRs ensure you're scaling output, not just headcount.
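
The "within X% of baseline" KRs in this template reduce to a relative-change check. The sketch below uses made-up figures and reads the tolerances as one-sided: a new hire may be at most 20% below the team average, and cost may be at most 10% above baseline. Both the numbers and that reading are assumptions for illustration.

```python
def relative_change(value: float, baseline: float) -> float:
    """Signed change versus baseline as a fraction (0.10 means +10%)."""
    return (value - baseline) / baseline


# Hypothetical figures for illustration only
team_avg_activity_hours = 5.0
new_hire_activity_hours = 4.2
baseline_cost_per_feature = 40_000
current_cost_per_feature = 43_000

# KR2: new hire is no more than 20% below the team average
kr2_met = relative_change(new_hire_activity_hours, team_avg_activity_hours) >= -0.20
# KR3: cost per feature is no more than 10% above the pre-hiring baseline
kr3_met = relative_change(current_cost_per_feature, baseline_cost_per_feature) <= 0.10
print(kr2_met, kr3_met)  # True True
```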

Template 6: Security & Compliance

When to use: SOC2 audit, enterprise customer requirements, or proactive security investment.

Objective: Achieve SOC2 Type II compliance to unblock enterprise sales pipeline

KR1: Zero critical or high-severity security findings in the SOC2 audit

Measured by: Audit report

KR2: Reduce average time-to-patch for critical vulnerabilities from 14 days to 48 hours

Measured by: Vulnerability tracking system

KR3: 100% of production deployments pass automated security scanning

Measured by: CI/CD pipeline enforcement

KR4: Deliver planned Q3 features on schedule (Planning Accuracy ≥ 75%)

Measured by: Planning Accuracy metric

Why KR4 exists: Security work often becomes an excuse for not delivering features. This KR ensures security improvements don't come at the cost of all other work.

How to Set the Right Targets

The hardest part of writing OKRs is choosing the right numbers. Too easy and they're not ambitious. Too hard and they're demoralizing.

The Baseline Method

- Measure the current state: you can't set a target without knowing where you are

- Research benchmarks: what do similar organizations achieve?

- Set a stretch target: pick a number ambitious enough that you expect to land at roughly 60-70% of it (Google's guidance for stretch OKRs)

Example:

Current Lead Time: 5 days

Industry benchmark (DORA "High"): 1 day to 1 week

Stretch target: 2 days (60% improvement)

Comfortable target: 3 days (40% improvement)

Chosen target: 2 days (ambitious but achievable in one quarter with focused investment)

The "So What" Test

For every KR, ask: "If we achieve this, so what? What changes for the business?"

| KR | "So What?" | Valid? |
|---|---|---|
| Reduce Lead Time to 2 days | Features reach customers faster, competitive advantage | Yes |
| Increase test coverage to 80% | ??? | Not unless tied to an outcome |
| Reduce P1 incidents to 2/quarter | Better uptime, fewer customer complaints, less firefighting | Yes |
| Migrate to Kubernetes | ??? | Not unless tied to deployment speed or cost reduction |

If you can't answer "so what?" with a business outcome, the KR isn't ready.

Aligning Engineering OKRs With Company OKRs

Engineering doesn't exist in a vacuum. Here's how to cascade company objectives into engineering OKRs:

Example: E-Commerce Company

Company Objective: Grow revenue by 40% YoY

| Company KR | Engineering OKR Connection |
|---|---|
| Launch mobile app by Q3 | Eng Objective: Deliver mobile app MVP. KRs: feature completeness, performance, launch date |
| Reduce churn by 20% | Eng Objective: Improve reliability. KRs: uptime, incident count, page load time |
| Expand to 3 new markets | Eng Objective: Enable internationalization. KRs: i18n framework, launch timeline, no regression in existing markets |

Example: B2B SaaS Company

Company Objective: Move upmarket to enterprise customers

| Company KR | Engineering OKR Connection |
|---|---|
| Close 10 enterprise deals | Eng Objective: Build enterprise features. KRs: SSO, audit logs, SLA compliance |
| Achieve SOC2 certification | Eng Objective: Security compliance. KRs: audit readiness, vulnerability response time |
| Reduce support tickets by 30% | Eng Objective: Improve product stability. KRs: bug rate, API reliability, documentation coverage |

The OKR Cadence for Engineering

Quarterly Cycle

| When | Activity |
|---|---|
| Week -2 (before quarter) | CTO drafts engineering OKRs aligned with company OKRs |
| Week -1 | VPs of Engineering and EMs cascade them into team-level OKRs |
| Week 1 | All OKRs finalized and shared org-wide |
| Week 4 | First check-in: are we on track? Early data available |
| Week 7 | Mid-quarter review: course-correct if needed |
| Week 10 | Pre-close: final push, identify at-risk KRs |
| Week 12-13 | Quarter close: score OKRs, write retrospective |

Weekly Check-in Template

Use this in your leadership meeting:

OKR Progress — Week [X] of Q[X] 2026

Objective: [Name]

| Key Result | Target | Current | Trend | Status |
|------------|--------|---------|-------|--------|
| KR1: ... | X | Y | ↑/↓/→ | On track / At risk / Off track |
| KR2: ... | X | Y | ↑/↓/→ | On track / At risk / Off track |
| KR3: ... | X | Y | ↑/↓/→ | On track / At risk / Off track |

Notes:

- [What happened this week]

- [Blockers or risks]

- [Decisions needed]
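
The Status column is easier to fill in consistently if the rule is written down. The sketch below is one possible rule, not a standard: it compares actual progress (the fraction of the baseline-to-target gap closed) with a straight-line expectation for the week. The function name and both gap thresholds are assumptions to tune for your team.

```python
def kr_status(progress: float, week: int, total_weeks: int = 12,
              at_risk_gap: float = 0.10, off_track_gap: float = 0.25) -> str:
    """Classify a KR by how far it lags a straight-line expectation.

    progress: fraction of the baseline-to-target gap closed so far (0 to 1).
    The gap thresholds are illustrative defaults, not an industry standard.
    """
    expected = min(week / total_weeks, 1.0)
    gap = expected - progress
    if gap <= at_risk_gap:
        return "On track"
    if gap <= off_track_gap:
        return "At risk"
    return "Off track"


print(kr_status(0.30, week=4))  # On track
print(kr_status(0.30, week=6))  # At risk
print(kr_status(0.30, week=8))  # Off track
```

A straight line is a simplification. Many KRs move late in the quarter once an initiative ships, so treat "At risk" as a prompt for discussion, not a verdict.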

Scoring OKRs

At the end of the quarter, score each KR on a 0.0-1.0 scale:

| Score | Meaning |
|---|---|
| 1.0 | Fully achieved or exceeded |
| 0.7-0.9 | Strong progress, nearly there |
| 0.4-0.6 | Partial progress, worth investigating why |
| 0.1-0.3 | Minimal progress; was the KR realistic? |
| 0.0 | No progress or not started |

Healthy scoring pattern: If your team consistently scores 1.0 on all OKRs, your targets are too easy. The ideal average is 0.6-0.7, a benchmark popularized by Google and now widely adopted, so some misses are a sign that you're being ambitious enough. This fits the culture findings in DORA's State of DevOps research, which associate a learning-oriented (generative) culture with stronger delivery performance: treat partial misses as information, not failure.
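
The end-of-quarter check can be sketched in a few lines. The 0.6-0.7 band and the "all 1.0 means too easy" rule come from the text above; the function name and the wording of the other verdicts are assumptions.

```python
from statistics import mean


def review_scores(scores: list[float]) -> tuple[float, str]:
    """Average the KR scores and compare them with the 0.6-0.7 band."""
    avg = round(mean(scores), 2)
    if all(s == 1.0 for s in scores):
        verdict = "targets too easy"
    elif 0.6 <= avg <= 0.7:
        verdict = "in the ideal range"
    elif avg > 0.7:
        verdict = "above the ideal range; consider more stretch"
    else:
        verdict = "below the ideal range; check whether KRs were realistic"
    return avg, verdict


print(review_scores([1.0, 1.0, 1.0]))  # (1.0, 'targets too easy')
print(review_scores([0.9, 0.7, 0.4]))  # (0.67, 'in the ideal range')
```

A single quarter proves little either way. The pattern across several quarters is what tells you whether targets are calibrated.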

Common Questions

"Should individual developers have OKRs?"

Generally no. OKRs work best at the team and department level. Individual developers should have personal goals (career growth, skill development) tracked in 1:1s, but tying individual OKRs to engineering metrics creates perverse incentives.

Exception: Staff+ engineers who own org-wide technical initiatives can have individual OKRs tied to those initiatives.

"How many OKRs should an engineering team have?"

1-2 objectives with 3-4 key results each. More than that, and you're not focused. If a team has 5 objectives, they effectively have none — everything is a priority, which means nothing is.

"What if our OKR becomes irrelevant mid-quarter?"

Update it. OKRs are not sacred commitments — they're strategic alignment tools. If the market shifts, a competitor launches something, or priorities change, update the OKR. Document why, and adjust the end-of-quarter scoring accordingly.

"How do OKRs relate to sprint planning?"

OKRs set the direction; sprints execute the work. Each sprint should contain tasks that move at least one KR forward. If a sprint is full of work that doesn't connect to any OKR, you have an alignment problem.

| Level | Time Horizon | Purpose |
|---|---|---|
| OKRs | Quarterly | Strategic direction |
| Roadmap | Monthly | Feature sequencing |
| Sprint | Bi-weekly | Execution planning |
| Daily standup | Daily | Tactical coordination |

"What metrics platform do we need for engineering OKRs?"

At minimum, you need automated tracking for:

- Lead Time and DORA metrics (for delivery speed OKRs)

- Focus Time and Activity Time (for productivity OKRs)

- Delivery Index and Planning Accuracy (for predictability OKRs)

- Cost per project/team (for efficiency OKRs)

Manual tracking tends to break down within the first few weeks of the quarter. Automated, always-current metrics are what keep OKR check-ins useful instead of stale.

The Anti-Gaming Principle

Every OKR system is vulnerable to gaming. The best defense is the "balanced KR" approach used in the templates above: pair each metric you optimize with a counter-metric.

| If You're Optimizing For... | Include a Counter-Metric For... |
|---|---|
| Speed (Lead Time) | Quality (Change Failure Rate) |
| Quality (Bug Rate) | Delivery (Planning Accuracy) |
| Efficiency (Cost) | Output (Delivery Index) |
| Output (Features Shipped) | Sustainability (Team Health) |

If speed improves but quality tanks, the OKR is not achieved. If quality improves but nothing ships, the OKR is not achieved. Balance prevents gaming and ensures real progress.
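
The rule in the previous paragraph can be expressed as a single gate: the primary metric must hit its target and the counter-metric must stay within its limit. This is a minimal sketch for lower-is-better metrics such as Lead Time and Change Failure Rate; the class and field names are illustrative, and the second example uses made-up values.

```python
from dataclasses import dataclass


@dataclass
class BalancedKR:
    """A primary metric paired with its counter-metric (both lower-is-better)."""
    primary_name: str
    primary_value: float
    primary_target: float
    counter_name: str
    counter_value: float
    counter_limit: float  # the counter-metric must stay at or below this

    def achieved(self) -> bool:
        primary_hit = self.primary_value <= self.primary_target
        counter_held = self.counter_value <= self.counter_limit
        # Both must hold; a fast but fragile quarter does not count.
        return primary_hit and counter_held


balanced = BalancedKR("Lead Time (days)", 2.0, 2.0,
                      "Change Failure Rate (%)", 6.0, 8.0)
gamed = BalancedKR("Lead Time (days)", 1.8, 2.0,
                   "Change Failure Rate (%)", 12.0, 8.0)
print(balanced.achieved(), gamed.achieved())  # True False
```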

Set engineering OKRs you can actually measure. PanDev Metrics provides automated tracking for every metric in this guide — Lead Time, Focus Time, Delivery Index, Planning Accuracy, Productivity Score, and cost analytics. Set your targets, track progress weekly, and score your OKRs with real data instead of gut feeling.