On-Call Rotation Best Practices: SRE-Style Schedules to Reduce Burnout (2026)

Your best SRE quit last quarter. She didn't say "burnout" in the exit interview, but her last three months included 14 after-hours pages, 2 weekend incidents, and a 3am call on her birthday. A 2021 Catchpoint / DevOps Institute survey of 500+ on-call engineers found 67% reported burnout symptoms tied directly to paging load. Google's SRE book sets an internal ceiling of 2 incidents per on-call shift before a rotation is declared unhealthy — most teams we measure blow past that in week one.

On-call is fixable. It's a scheduling and sociotechnical problem, not a personality flaw in people who "can't hack it." Here's a 9-rule playbook that keeps your SLA intact and keeps your best engineers on the team past their second rotation.

{/* truncate */}

The problem

Three failure modes show up in almost every team with a broken rotation:

Too few rotators. A 4-person rotation puts each person on call every fourth week. Over a year that's 13 weeks of degraded sleep, cancelled plans, and interrupted focus. Google's SRE book sets its minimum at eight engineers for a single-site rotation and six per site for a dual-site one for exactly this reason: below roughly six, recovery time vanishes.

No hand-off ritual. The incoming on-call engineer learns about the flapping alert from PagerDuty at 2am. The outgoing engineer had context but didn't write it down. Every rotation resets knowledge to zero, so the same incident gets rediscovered monthly.

Compensation pretends the pager doesn't exist. Engineers are salaried, the pager fires at 3am, and nobody adjusts expectations for the following day. A 2023 Blameless / DevOps Institute report found that teams without explicit on-call comp or recovery time had 2.4× higher turnover among senior engineers than teams that did.

The rules below attack all three at once.

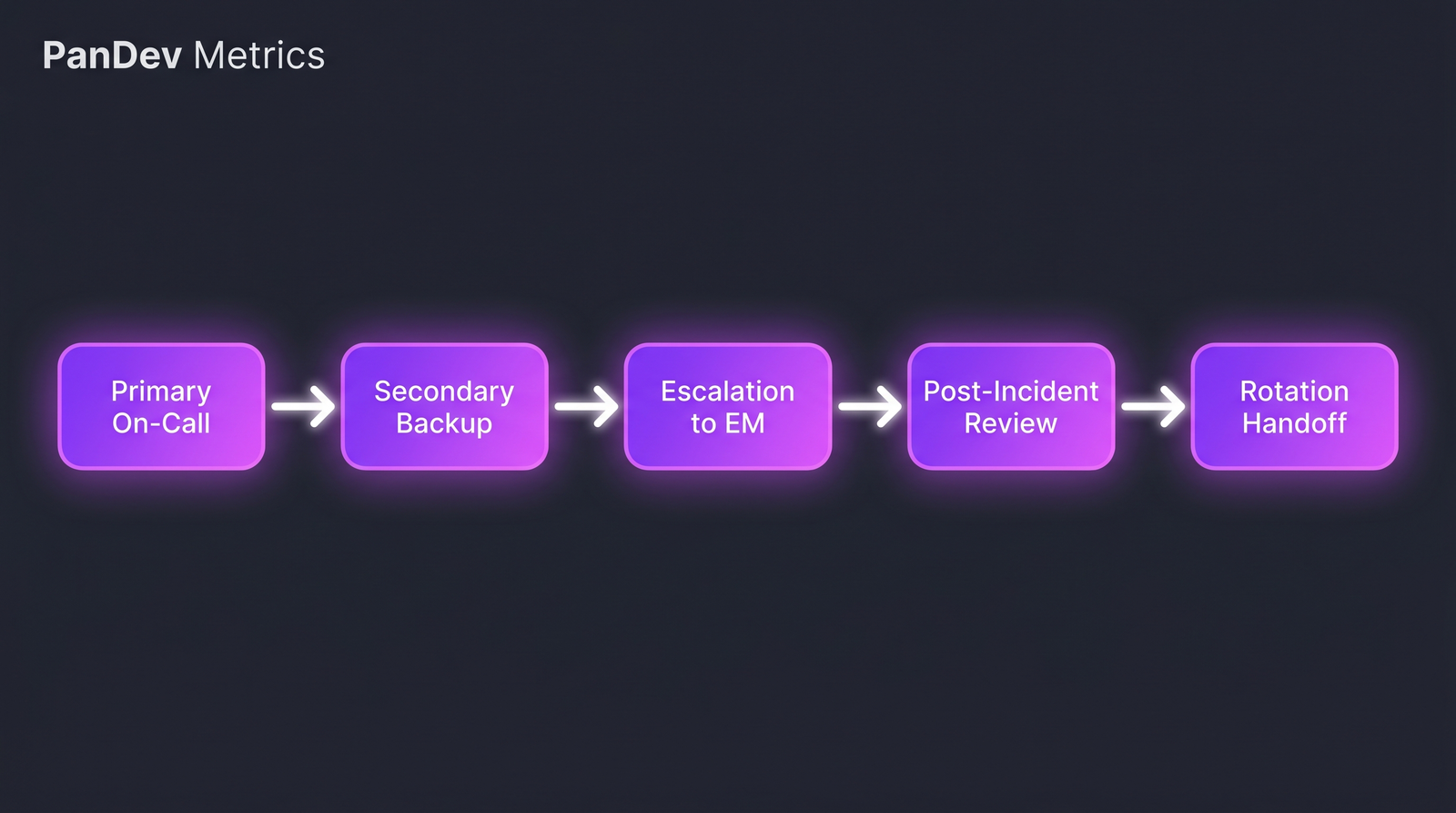

The healthy rotation loop: primary → secondary → escalation → review → handoff. Every arrow is a decision point teams skip.

The 9 rules

Rule 1 — Minimum 6 engineers per rotation

Below six, there's no slack. Even at six, one person on PTO or sick leave turns the rotation into a 5-way split; start with fewer and a shift that was already heavy becomes brutal. At six or more, the math works: each engineer is on primary roughly one week in six, giving a real recovery window between shifts. If you don't have six, don't run a 24/7 rotation; use follow-the-sun with a partner team, or triage-only hours with deferred response.
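The rotation math is easy to check for your own team size. Here is a minimal sketch, assuming primary load is shared evenly across a 52-week year; the function names are ours for illustration, not from any tool.

```python
WEEKS_PER_YEAR = 52  # assumption: the rotation covers every week of the year


def primary_weeks_per_year(rotators: int) -> float:
    """Weeks of primary on-call each engineer carries per year."""
    if rotators < 1:
        raise ValueError("a rotation needs at least one engineer")
    return WEEKS_PER_YEAR / rotators


def weeks_off_between_shifts(rotators: int) -> int:
    """Full weeks off primary between two of the same engineer's shifts."""
    if rotators < 1:
        raise ValueError("a rotation needs at least one engineer")
    return rotators - 1


if __name__ == "__main__":
    for size in (4, 5, 6, 8):
        print(
            f"{size} rotators: {primary_weeks_per_year(size):.1f} primary weeks/year, "
            f"{weeks_off_between_shifts(size)} weeks off between shifts"
        )
```

A 4-person rotation comes out at 13 primary weeks a year with 3 weeks between shifts; six people brings that down to under 9 weeks with 5 weeks between shifts.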

Rule 2 — Primary + secondary, always

Never route pages directly to one person. The secondary picks up if the primary doesn't acknowledge within 5 minutes. This single rule cuts unresolved pages roughly in half in teams we've seen adopt it — because the primary is asleep, in a meeting, or out of signal more often than the org expects.

| Role | Acknowledge window | Escalation trigger |
|---|---|---|
| Primary | 5 min | No ack → secondary paged |
| Secondary | 10 min | No ack → EM paged |
| EM | 15 min | No ack → VP Eng |

Secondary isn't "backup" — it's an active role with expected availability during the shift.
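The escalation table can be expressed as data and walked in order. This is a sketch rather than any paging vendor's API: `EscalationStep` and `who_is_paged` are hypothetical names, and we assume each acknowledge window starts when that role is paged, so the windows add up (5, then 15, then 30 minutes).

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class EscalationStep:
    role: str
    ack_window_min: int  # minutes this role has to acknowledge once paged


# Mirrors the table above; the final target has no further escalation.
CHAIN = [
    EscalationStep("Primary", 5),
    EscalationStep("Secondary", 10),
    EscalationStep("EM", 15),
]
FINAL_ESCALATION = "VP Eng"


def who_is_paged(minutes_unacked: float) -> List[str]:
    """Roles paged so far if nobody has acknowledged for `minutes_unacked`."""
    paged: List[str] = []
    deadline = 0
    for step in CHAIN:
        paged.append(step.role)
        deadline += step.ack_window_min  # assumption: windows are cumulative
        if minutes_unacked < deadline:
            return paged
    paged.append(FINAL_ESCALATION)
    return paged


if __name__ == "__main__":
    for minute in (0, 5, 15, 30):
        print(minute, who_is_paged(minute))
```

If your paging tool measures every window from the original alert instead, drop the running `deadline` sum and compare against each step's own window.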

Rule 3 — Hard cap: 2 paging incidents per 12-hour shift

This is Google's SRE ceiling from their public book, and it's the single most useful number in rotation design. If a shift exceeds 2 paging incidents, something upstream is broken: the alerting is noisy, the system is fragile, or both. Track this monthly. If it trends above 2 for two consecutive months, the rotation is declared unhealthy and engineering leadership owns a remediation plan, not the on-call engineer.
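The two-consecutive-months trigger is simple enough to automate. The sketch below assumes one reading of "trends above 2": the monthly mean of paging incidents per shift is above the ceiling. The input shape (one list of per-shift counts per month) and the function name are illustrative.

```python
from statistics import mean
from typing import List

INCIDENT_CEILING = 2    # paging incidents per 12-hour shift
CONSECUTIVE_MONTHS = 2  # breaches in a row before the rotation is unhealthy


def rotation_is_unhealthy(monthly_shift_counts: List[List[int]]) -> bool:
    """True if the mean incidents per shift beat the ceiling N months running.

    Each inner list holds the paging-incident count of every shift in one
    month, oldest month first. Months with no recorded shifts reset the streak.
    """
    streak = 0
    for shifts in monthly_shift_counts:
        if shifts and mean(shifts) > INCIDENT_CEILING:
            streak += 1
            if streak >= CONSECUTIVE_MONTHS:
                return True
        else:
            streak = 0
    return False


if __name__ == "__main__":
    history = [[1, 2, 3], [3, 3, 4], [4, 2, 3]]  # hypothetical counts
    print(rotation_is_unhealthy(history))
```

A mean hides single brutal shifts, so a stricter team might count the share of shifts above the ceiling instead.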

Rule 4 — Structured handoff at every rotation swap

15 minutes, synchronous if possible, a short written artifact otherwise. Cover:

- Active incidents (open, mitigated-not-resolved, under investigation)

- Ongoing concerns (deploy freezes, known-flapping alerts, dependency issues)

- This week's change log (what deployed that the next on-call should know about)

- Runbook updates (any new runbooks, any gaps found)

Skipping the handoff is the most common self-inflicted tax. We've seen teams rediscover the same flaky alert three rotations in a row because no one wrote it in the handoff doc.
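When a synchronous handoff isn't possible, a fixed template keeps the written artifact from drifting. A minimal sketch of the four sections above as a data structure; `HandoffNote` and its field names are hypothetical, and the sample alert name is made up.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class HandoffNote:
    outgoing: str
    incoming: str
    active_incidents: List[str] = field(default_factory=list)
    ongoing_concerns: List[str] = field(default_factory=list)
    change_log: List[str] = field(default_factory=list)
    runbook_updates: List[str] = field(default_factory=list)

    def render(self) -> str:
        """Plain-text note; empty sections print 'none' so gaps are explicit."""
        sections = [
            ("Active incidents", self.active_incidents),
            ("Ongoing concerns", self.ongoing_concerns),
            ("This week's change log", self.change_log),
            ("Runbook updates", self.runbook_updates),
        ]
        lines = [f"On-call handoff: {self.outgoing} -> {self.incoming}"]
        for title, items in sections:
            lines.append(f"{title}:")
            lines.extend(f"  - {item}" for item in (items or ["none"]))
        return "\n".join(lines)


if __name__ == "__main__":
    note = HandoffNote(
        outgoing="ana",
        incoming="ben",
        ongoing_concerns=["checkout-latency alert flapping"],
    )
    print(note.render())
```

Printing "none" for an empty section is deliberate: the incoming engineer can tell "nothing to report" apart from "nobody filled this in."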

Rule 5 — Compensation reflects the cost

Three models that work, pick one and commit:

- Time-in-lieu — every paged hour after 10pm or on weekends adds paid recovery time, taken within two weeks.

- Flat stipend — $300-$800/week while on rotation, independent of incidents, clearly communicated.

- Hybrid — small base stipend + per-paged-incident bonus.

The worst model is "you're salaried, figure it out." It externalizes the cost to the engineer's health and, eventually, to your turnover budget. Blameless' 2023 data tied compensation clarity to retention more strongly than any other on-call variable.
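To compare the three models for one engineer, the arithmetic can be sketched as below. Every rate here is a placeholder: the $500 weekly stipend sits inside the range quoted above, while the hybrid base, the per-incident bonus, and the 1:1 time-in-lieu ratio are assumptions to replace with your own policy.

```python
def flat_stipend(weeks_on_rotation: int, weekly_rate: float) -> float:
    """Stipend paid per week on rotation, independent of incidents."""
    return weeks_on_rotation * weekly_rate


def hybrid(
    weeks_on_rotation: int,
    base_weekly_rate: float,
    paged_incidents: int,
    per_incident_bonus: float,
) -> float:
    """Small base stipend plus a bonus for each paged incident."""
    return weeks_on_rotation * base_weekly_rate + paged_incidents * per_incident_bonus


def time_in_lieu_hours(paged_hours: float, hours_back_per_paged_hour: float = 1.0) -> float:
    """Paid recovery hours earned; the 1:1 default ratio is an assumption."""
    return paged_hours * hours_back_per_paged_hour


if __name__ == "__main__":
    # Hypothetical year: 9 weeks on rotation, 12 paged incidents,
    # 7.5 paged hours after 10pm or on weekends.
    print("flat stipend:", flat_stipend(9, 500.0))
    print("hybrid:", hybrid(9, 200.0, 12, 100.0))
    print("time in lieu (hours):", time_in_lieu_hours(7.5))
```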

Rule 6 — Mandatory post-incident recovery

If someone was paged between 10pm and 6am, they don't start at 9am. Policy, not negotiation. Teams that publish this policy and enforce it see 30-40% fewer self-reported burnout symptoms over 12 months (DevOps Institute 2023). Teams that rely on "feel free to come in late if you need to" see engineers show up anyway and quietly build resentment.
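A policy like this is easier to enforce when a script, not a manager, decides who starts late. A sketch under stated assumptions: timestamps are naive local times, the overnight window is 10pm to 6am as above, and the noon delayed start is our placeholder since the rule only says "not 9am".

```python
from datetime import date, datetime, time, timedelta
from typing import Iterable

NIGHT_START = time(22, 0)  # 10pm the evening before
NIGHT_END = time(6, 0)     # 6am on the workday


def earliest_start(
    page_times: Iterable[datetime],
    workday: date,
    normal_start: time = time(9, 0),
    delayed_start: time = time(12, 0),  # assumption: policy picks this value
) -> datetime:
    """Earliest expected start on `workday`, given the engineer's pages."""
    window_open = datetime.combine(workday - timedelta(days=1), NIGHT_START)
    window_close = datetime.combine(workday, NIGHT_END)
    paged_overnight = any(window_open <= p < window_close for p in page_times)
    start = delayed_start if paged_overnight else normal_start
    return datetime.combine(workday, start)


if __name__ == "__main__":
    pages = [datetime(2026, 3, 10, 3, 0)]  # hypothetical 3am page
    print(earliest_start(pages, date(2026, 3, 10)))
```

Feeding the result into the team calendar as a blocked morning makes the policy visible to everyone who might otherwise book a 9am meeting.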

Rule 7 — Rotate types, not just names

Three kinds of on-call work rotate separately:

- Paging rotation (the pager itself)

- Incident commander rotation (runs the response when things go sideways)

- Review rotation (owns the postmortem within 48 hours)

Merging these into one role overloads the same person. Splitting them means on any given week, three different engineers carry reduced load rather than one engineer carrying all of it.
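One way to keep the three roles on different people is to run a single roster with fixed offsets. This is an illustrative sketch, not a prescribed algorithm: the offset scheme and the names are ours, and it ignores PTO swaps.

```python
from typing import Dict, List


def weekly_assignments(engineers: List[str], week: int) -> Dict[str, str]:
    """Assign pager, incident commander, and reviewer for a given week number.

    Each role walks the same roster at a different offset, so no engineer
    holds two roles in the same week. Needs at least three engineers.
    """
    n = len(engineers)
    if n < 3:
        raise ValueError("need at least three engineers to split three roles")
    offset = n // 3  # spreads the roles roughly evenly around the roster
    return {
        "pager": engineers[week % n],
        "incident_commander": engineers[(week + offset) % n],
        "reviewer": engineers[(week + 2 * offset) % n],
    }


if __name__ == "__main__":
    team = ["ana", "ben", "chi", "dev", "eli", "fay"]  # hypothetical roster
    for week in range(3):
        print(week, weekly_assignments(team, week))
```

With six engineers the offsets are 0, 2, and 4, so each person holds one of the three roles in half of all weeks and carries the pager itself only one week in six.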

Rule 8 — No on-call within 30 days of termination or role change

Announced resignation, internal transfer, PIP — all reasons to pull someone off the rotation. Their attention is elsewhere, their motivation to stay heroic is gone, and putting them on the pager is asking for a missed page. The remaining rotation absorbs the gap, which is also a signal to hire before it's urgent.

Rule 9 — Review rotation health monthly with data, not vibes

A 30-minute monthly meeting. Three charts only:

- Paging incidents per shift (is it under 2?)

- After-hours page count per engineer (is it evenly distributed?)

- Post-incident recovery actually taken (is the policy being honored?)

If any of the three is red, that's the agenda for the next month. Our CTO Dashboard pattern works well here — leaders see the signal weekly, but the on-call conversation is monthly so it doesn't crowd out delivery work.
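The second chart, after-hours pages per engineer, needs little more than a counter. In this sketch "after hours" means 10pm to 6am or any weekend time, which combines the windows used in Rules 5 and 6; the 2x skew threshold and the function names are assumptions.

```python
from collections import Counter
from datetime import datetime
from typing import Dict, Iterable, List, Tuple


def is_after_hours(ts: datetime) -> bool:
    """Assumed definition: weekend, or between 10pm and 6am local time."""
    return ts.weekday() >= 5 or ts.hour >= 22 or ts.hour < 6


def after_hours_load(
    pages: Iterable[Tuple[str, datetime]], roster: List[str]
) -> Dict[str, int]:
    """After-hours page count per engineer, including engineers with zero."""
    counts = Counter({name: 0 for name in roster})
    for engineer, ts in pages:
        if is_after_hours(ts):
            counts[engineer] += 1
    return dict(counts)


def is_skewed(load: Dict[str, int], max_ratio: float = 2.0) -> bool:
    """True if the busiest engineer carries more than max_ratio times the mean."""
    if not load:
        return False
    mean_load = sum(load.values()) / len(load)
    return mean_load > 0 and max(load.values()) > max_ratio * mean_load


if __name__ == "__main__":
    pages = [  # hypothetical page log
        ("ana", datetime(2026, 3, 3, 23, 0)),
        ("ana", datetime(2026, 3, 7, 14, 0)),  # a Saturday
        ("ben", datetime(2026, 3, 3, 14, 0)),  # business hours, not counted
    ]
    load = after_hours_load(pages, ["ana", "ben", "chi"])
    print(load, is_skewed(load))
```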

Common mistakes to avoid

| Mistake | Why it hurts | Fix |
|---|---|---|
| One-week shifts | Too long: sleep debt compounds to a breaking point by day 4 | Split into two half-weeks with different primaries |
| "Volunteer" on-call | The same 2-3 people carry 80% of shifts until they quit | Mandatory rotation with codified exceptions |
| Paging on every error | Alert fatigue kills response quality | Ruthless alert tiering: page only on SLO-level impact |
| No runbooks | Each incident relitigated from scratch | Runbook-per-alert as a merge gate |
| Counting only incidents | Misses low-sev interruptions that still break focus | Track paged minutes, not just paged events |

The checklist

- Minimum 6 engineers per rotation

- Primary + secondary both staffed for every shift

- Paging incidents per shift tracked, averaging under 2

- Handoff ritual documented and followed

- Compensation model published

- Recovery-time policy published and enforced

- Incident commander and review roles rotated separately

- People leaving / transferring are pulled off rotation

- Monthly rotation-health review on the calendar

How to measure if this is working

Four signals tell you the rotation is healthy:

- Paged incidents per shift — trend stays below 2 for rolling 3 months

- After-hours coding time — we track this as a burnout signal in PanDev Metrics via IDE heartbeat data; sustained after-hours activity post-incident suggests recovery policy is being skipped

- On-call-to-exit gap — measure time between a person's rotation and voluntary exit; if it's consistently under 6 months, the rotation is contributing to attrition

- MTTR trend — a healthy rotation correlates with flat or improving MTTR; a deteriorating rotation shows up there first

Our dataset across 100+ B2B engineering teams shows a consistent pattern: teams that publish explicit on-call comp and recovery policies see a 30% smaller spike in after-hours IDE activity in the week following an incident than teams with implicit norms. Our signal is limited to work we can see in the editor. Slack triage and pager-only engagement are invisible to us, so treat this as a directional indicator, not the full picture.

When this framework doesn't fit

If your team is under six engineers, stop. You cannot run a sustainable 24/7 rotation with fewer than six bodies — the math defeats any rules you layer on top. Options: follow-the-sun with another team, triage-only business hours, or a paid external SRE partner for overnight coverage until you hire.

Also skip this if your paging volume is structurally low (say, fewer than 4 pages per quarter). A formal rotation becomes overhead; informal coverage with a clear escalation chain works better at that volume.

The contrarian rule

Most playbooks start with "improve your alerts." We disagree. Alert noise is a symptom; the root cause is that nobody owns the alerts as a product. Rule 3 (the 2-incidents-per-shift ceiling) creates the forcing function: when the ceiling is breached, alerting ownership becomes an engineering-leadership problem, not a line engineer's weekend project. Fix rotation health first, and alert hygiene follows.