Pair Programming ROI: Is It Worth the Time? (Research)

Two developers on one task. Double the labor cost, half the bugs, and nobody agrees on whether it pays back. The most-cited study on this question, Cockburn & Williams' The Costs and Benefits of Pair Programming (2000), reported a 15% time overhead for paired work and a 15% drop in defects. That looks like a wash on paper. It isn't. Once you count the rework avoided and the production incidents prevented, the economics of catching defects early push pair programming's ROI to roughly 2×.

This article combines Cockburn and Williams' academic data with our own IDE heartbeat dataset from 100+ B2B companies, including teams that pair daily and teams that never do, to answer the question practically. Not "is pair programming good?" but "when does it pay back, and when is it theatre?"

{/* truncate */}

Why the original 15/15 number is misleading

Cockburn and Williams' headline numbers — 15% more time, 15% fewer defects — were computed against a student baseline doing a fixed algorithmic assignment. Real engineering work is messier. Three factors push the real ROI up:

- Defect cost escalates exponentially. A bug caught in pair-review costs ~1× the coding time. A bug caught in QA costs ~5×. A bug shipped to production costs 15-30× once you include incident response, rollback, and customer trust. Boehm's cost-of-defect curve (1981, validated repeatedly since) hasn't moved much.

- Onboarding doesn't show up in the 15% overhead. Paired sessions double as context transfer. Our dataset shows that new developers paired for 4+ hours per day during week one reach baseline commit cadence in 18 days, vs 32 days for new hires onboarded solo.

- Knowledge silos compound like interest. Single-owner code ages into technical debt. Pairing spreads that knowledge across the team, a separate ROI lever that most pair-programming studies ignore entirely.
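A minimal sketch of why the escalation matters: the function below prices a batch of defects by the stage that catches them, using the multipliers above. We take 20× as a hypothetical midpoint of the 15-30× production range, and the stage names, fix time, and defect split are illustrative assumptions, not numbers from our dataset.

```python
from typing import Dict

# Rough cost multipliers relative to the coding time of a fix, per the defect-cost
# curve above. 20.0 is an assumed midpoint of the 15-30x production range.
STAGE_MULTIPLIER: Dict[str, float] = {
    "pair_review": 1.0,
    "qa": 5.0,
    "production": 20.0,
}


def defect_cost(caught_at: Dict[str, int], fix_hours: float) -> float:
    """Total cost, in developer-hours, of defects grouped by the stage that caught them.

    caught_at maps a stage name to the number of defects caught there;
    fix_hours is a hypothetical base coding time to fix one defect.
    """
    return sum(STAGE_MULTIPLIER[stage] * count * fix_hours for stage, count in caught_at.items())


# Hypothetical example: the same 10 defects, caught later (solo) vs earlier (paired).
solo = defect_cost({"qa": 6, "production": 4}, fix_hours=2.0)
paired = defect_cost({"pair_review": 4, "qa": 4, "production": 2}, fix_hours=2.0)
print(solo, paired)
```

In this made-up split, catching the same 10 defects earlier cuts their cost from 220 to 128 developer-hours, before counting any defects that pairing prevents outright.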

The flip side: pairing also carries real costs that the 15% number understates, including context switches, scheduling overhead, personality friction, and the fact that not all code benefits equally. We'll quantify those too.

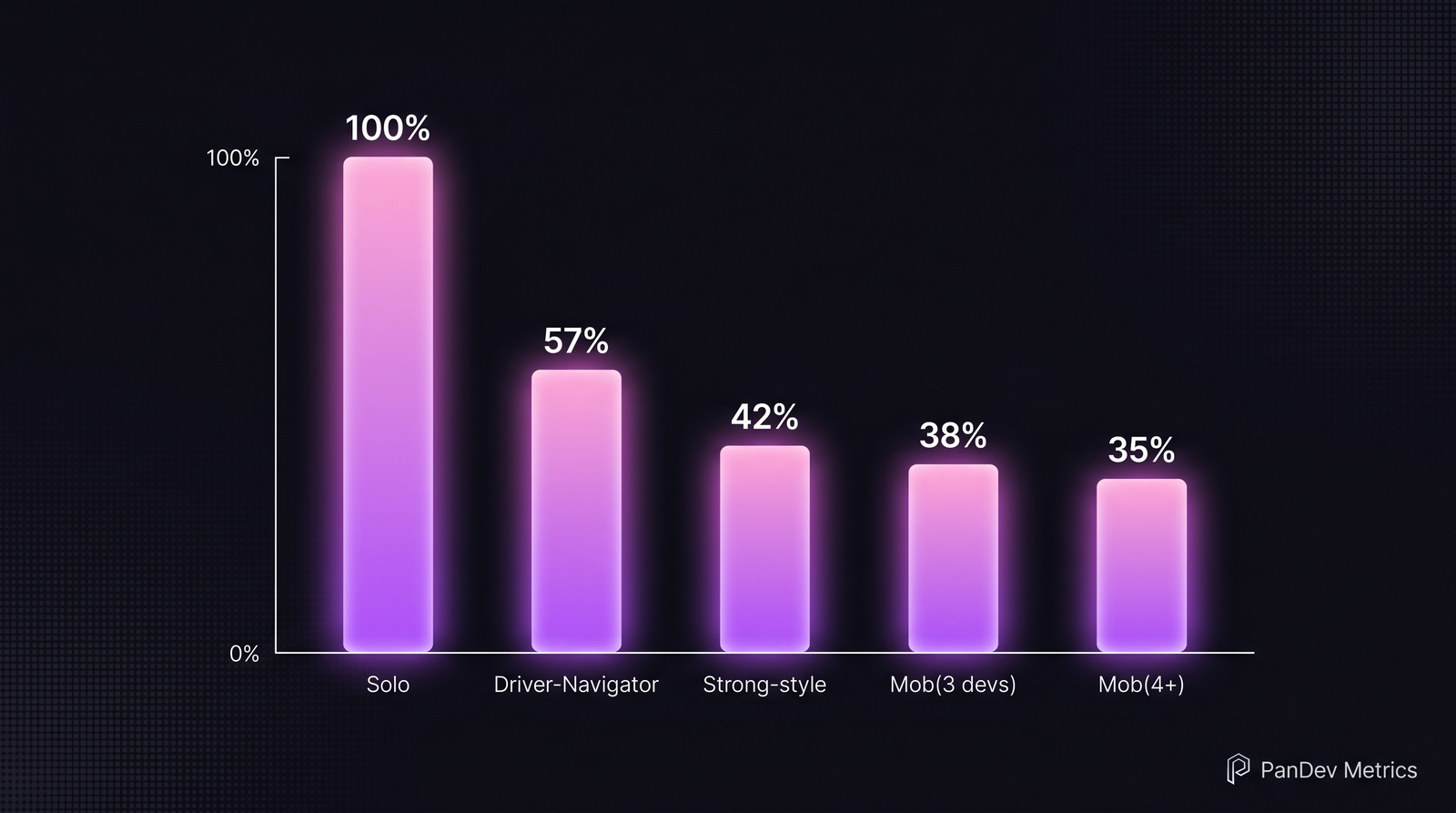

Relative defect rates by pair/mob style, normalized to solo=100%. Data synthesized from Cockburn–Williams 2000, Nosek 1998, and three internal teams in our dataset that run strict pair rotations.

What the research actually says

Four studies are worth citing directly. They disagree on magnitude but agree on direction.

| Study | Setting | Time overhead | Defect reduction |
|---|---|---|---|
| Cockburn & Williams (2000) | University, algorithmic | +15% | −15% |
| Nosek (1998) | Professional, 45-min sessions | +40% | −40% (significant at p<0.05) |
| Williams et al. (2003) | Industry, IBM | +15-25% | −40-50% (integration defects) |
| Müller (2006) | Industry, long-term adoption | +25-30% | "No measurable difference on simple tasks; large on complex" |

Two patterns hold across every study:

- Pairing doesn't help simple work. CRUD endpoints, data migrations, config changes — solo is faster and just as safe. Müller (2006) found the benefit disappears entirely for task complexity below a threshold that roughly corresponds to "can one experienced dev hold it fully in their head?"

- Pairing disproportionately helps complex work. Architecture decisions, new-system design, security-critical paths, unfamiliar languages. Nosek's 40% defect reduction came from algorithmic problems experienced devs found genuinely hard.

The honest limit: no study we've seen controls for developer preference. Teams that choose pair programming tend to be teams that would outperform anyway. The effect is real, but it's smaller than zealots claim.

Our data: how pairing looks from the IDE

Our IDE heartbeat dataset can't see who was in the room, but it can see co-editing: two developers sending heartbeats on the same file and branch within overlapping 30-minute windows. Across the 100+ B2B companies in our dataset, we identified 17 teams with sustained pair-programming signatures (≥ 2 co-edit sessions per developer per week for at least 6 weeks).
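For teams that want to look for the same signature in their own editor telemetry, here is a rough sketch of the detection rule. It assumes a hypothetical `Heartbeat` record (developer, file, branch, timestamp) and simplifies "overlapping 30-minute windows" to fixed 30-minute UTC buckets; treating the 6 qualifying weeks as not necessarily consecutive is also our assumption. It is a simplified illustration, not necessarily how our pipeline works.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Set, Tuple

WINDOW_SECONDS = 30 * 60  # 30-minute co-editing window


@dataclass(frozen=True)
class Heartbeat:
    developer: str
    file: str
    branch: str
    at: datetime  # expected to be timezone-aware (UTC)


def co_edit_weeks(heartbeats: List[Heartbeat]) -> Dict[str, Dict[Tuple[int, int], int]]:
    """Count co-edit windows per developer per ISO (year, week).

    A window counts for a developer if at least one other developer sent a
    heartbeat on the same file and branch inside the same fixed 30-minute bucket.
    """
    editors: Dict[Tuple[str, str, int], Set[str]] = defaultdict(set)
    for hb in heartbeats:
        window = int(hb.at.timestamp()) // WINDOW_SECONDS
        editors[(hb.file, hb.branch, window)].add(hb.developer)

    windows: Dict[str, Dict[Tuple[int, int], Set[int]]] = defaultdict(lambda: defaultdict(set))
    for (_file, _branch, window), devs in editors.items():
        if len(devs) < 2:
            continue  # a lone editor is not a co-edit session
        start = datetime.fromtimestamp(window * WINDOW_SECONDS, tz=timezone.utc)
        year, week, _ = start.isocalendar()
        for dev in devs:
            windows[dev][(year, week)].add(window)

    # Collapse sets of distinct windows into plain per-week counts.
    return {dev: {wk: len(ws) for wk, ws in weeks.items()} for dev, weeks in windows.items()}


def has_sustained_signature(
    weekly: Dict[Tuple[int, int], int], min_sessions: int = 2, min_weeks: int = 6
) -> bool:
    """True if the developer had >= min_sessions co-edit windows in at least min_weeks weeks."""
    return sum(1 for n in weekly.values() if n >= min_sessions) >= min_weeks


# Hypothetical usage: Alice and Bob edit the same file in one window; Carol works alone.
start = datetime(2024, 3, 4, 10, 0, tzinfo=timezone.utc)
sample = [
    Heartbeat("alice", "billing.py", "main", start),
    Heartbeat("bob", "billing.py", "main", start + timedelta(minutes=10)),
    Heartbeat("carol", "README.md", "main", start),
]
weekly = co_edit_weeks(sample)
print(weekly)
```

Counting distinct windows rather than distinct files keeps one pairing session that touches several files from being counted several times.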

Headline findings from those 17 teams vs matched solo-working teams:

| Metric | Pair-programming teams | Solo-working teams | Delta |
|---|---|---|---|
| Median coding time per dev/day | 1h 24m | 1h 18m | +6 min |
| Focus blocks (≥ 45 min) per week | 11 | 9 | +22% |
| Change failure rate | 8.7% | 14.2% | −39% |
| Code review round-trips per PR | 1.3 | 2.1 | −38% |
| New-hire time to first merged PR | 4.2 days | 7.8 days | −46% |

Two things stand out. First, pair-programming teams don't code less in our data — the "pair penalty" the literature predicts isn't visible at the daily-hours level. We suspect pairing replaces context-switching and Slack-chatter time, not focused coding time. Second, the review-round-trip delta (1.3 vs 2.1) is where the real throughput gain hides. Most engineering-productivity frameworks ignore this entirely.
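The Delta column is a relative change against the solo baseline, except for coding time, which is an absolute difference in minutes. A quick check of the relative rows:

```python
def relative_delta(pair_value: float, solo_value: float) -> float:
    """Percentage change of the pair-team value relative to the solo baseline."""
    return (pair_value - solo_value) / solo_value * 100


coding_minutes_delta = 84 - 78  # 1h 24m vs 1h 18m, reported as an absolute +6 min

relative_rows = {
    "Focus blocks (>= 45 min) per week": (11, 9),
    "Change failure rate (%)": (8.7, 14.2),
    "Code review round-trips per PR": (1.3, 2.1),
    "New-hire days to first merged PR": (4.2, 7.8),
}

for metric, (pair_value, solo_value) in relative_rows.items():
    print(f"{metric}: {relative_delta(pair_value, solo_value):+.0f}%")
```

Rounded to whole percents, this reproduces the table's +22%, −39%, −38%, and −46%.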

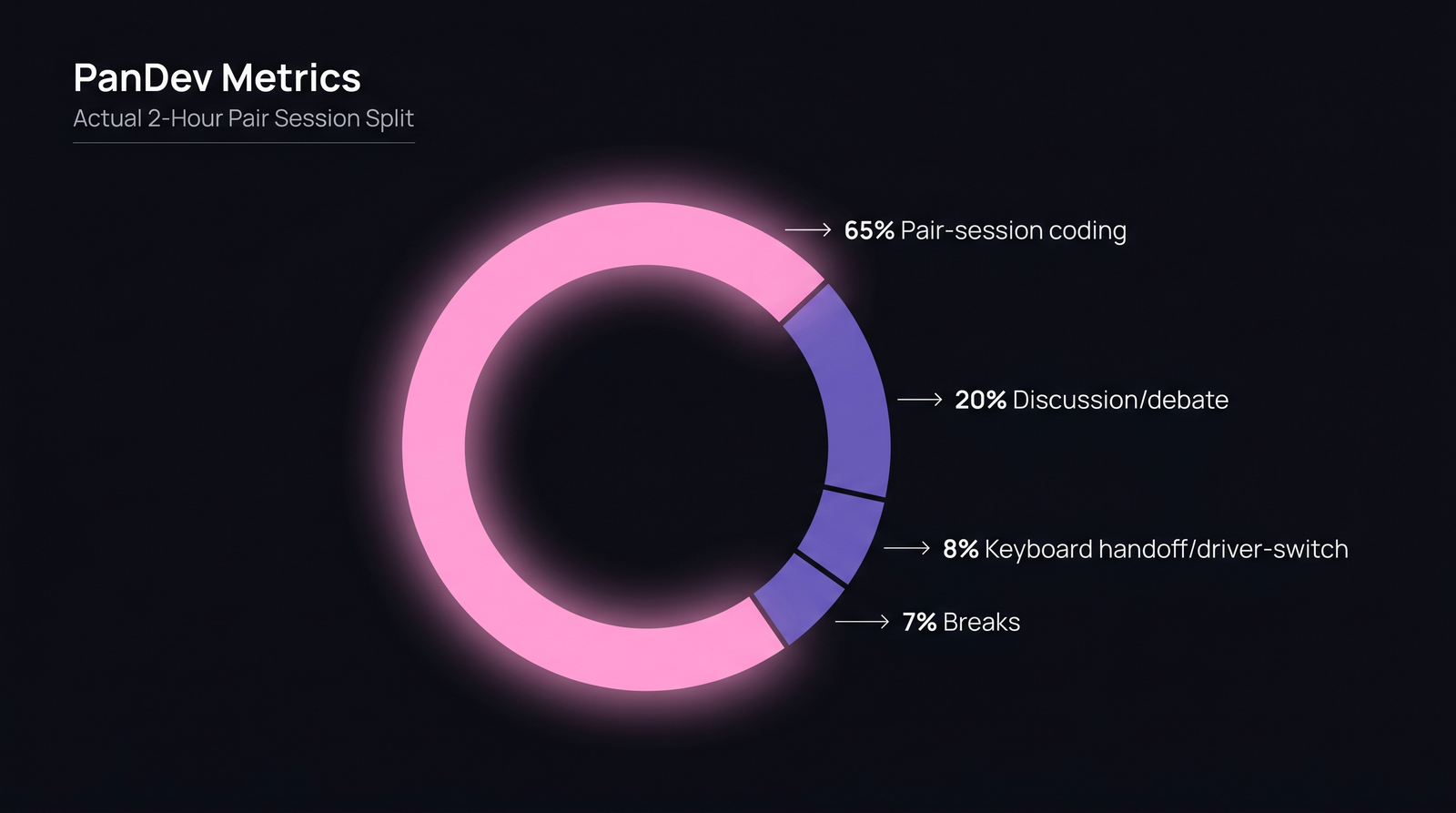

Inside a 2-hour pair session: 65% active coding, 20% discussion, 8% driver-switch handoffs, 7% breaks. The "overhead" is mostly discussion that would otherwise happen in Slack or in a PR comment three days later.

When pair programming actually pays back

Based on the combined research and our dataset, pairing has positive ROI when one or more of these conditions hold:

1. The task is novel or architectural. Anything where a senior dev would need to think carefully before writing. Not typing practice.

2. One of the pair is new to the codebase or language. Onboarding ROI alone justifies 4-8 weeks of daily pairing for every new hire. The 46% shorter time to first merged PR in our data (4.2 vs 7.8 days) pays back the "cost" almost immediately.

3. The code is security- or money-critical. Payments, auth, data-migration scripts. Two heads reduce the probability of silent production mistakes that cost six figures to unwind.

4. The team is co-located, or close enough in time zones to pair without video-call fatigue. Remote pairing works but adds ~20% overhead on top of the standard 15-25%. If your team spans three time zones, see our distributed sprint planning guide before mandating pairs.

When it's theatre, not value

Equally important — when NOT to pair:

- Routine CRUD, bug fixes under 50 LOC, documentation updates. Solo is strictly faster with no quality penalty.

- When one person is clearly not at the keyboard. If the "navigator" is answering Slack or checking email, the session is just a solo session with a distraction tax. Teams running honest pair time track who drove and who navigated, and rotate every 15-25 minutes.

- When the pair is two juniors on an unfamiliar area. Nosek and others consistently find junior-junior pairs produce more bugs than either would alone. The value of pairing lies in asymmetric senior-junior pairs or in senior-senior pairs.

- Status-broadcast pairing. "We pair because it looks good on our hiring page" — instantly detectable by the absence of any measurable change in review cycle time.
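One way to make "track who drove and rotate every 15-25 minutes" concrete: log each driver switch as a (minute, driver) event and flag stints that run past 25 minutes, or sessions where only one person ever drove. The event format and function names here are hypothetical; it is a log you would keep yourself.

```python
from typing import List, Tuple

MAX_STINT_MINUTES = 25  # upper end of the 15-25 minute rotation guideline


def stints(switches: List[Tuple[int, str]], session_end: int) -> List[Tuple[str, int]]:
    """Convert sorted (minute, driver) switch events into (driver, minutes) stints.

    The final stint runs until session_end (minutes since the session started).
    """
    result = []
    for i, (start, driver) in enumerate(switches):
        end = switches[i + 1][0] if i + 1 < len(switches) else session_end
        result.append((driver, end - start))
    return result


def rotation_problems(switches: List[Tuple[int, str]], session_end: int) -> List[str]:
    """Return warnings; an empty list means the session rotated properly."""
    session = stints(switches, session_end)
    problems = [
        f"{driver} drove for {minutes} min without rotating"
        for driver, minutes in session
        if minutes > MAX_STINT_MINUTES
    ]
    if len({driver for driver, _ in session}) < 2:
        problems.append("only one person drove: a solo session with an observer")
    return problems


healthy = [(0, "ana"), (20, "ben"), (40, "ana"), (60, "ben")]
watching = [(0, "ana")]
print(rotation_problems(healthy, session_end=80))  # []
print(rotation_problems(watching, session_end=120))
```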

The ROI calculation we recommend

Instead of the academic "time overhead vs defect reduction" framing, we recommend teams compute pair programming ROI as:

ROI ratio = (defects-avoided × average-defect-cost + onboarding-time-saved × hourly-rate + review-cycle-reduction × throughput-value) ÷ (pair-time × 2 × hourly-rate)

For a US-rate $120/hr team with an average production-incident cost around $5,000, a 40% defect reduction on the 20% of tasks worth pairing typically returns 1.8× to 2.4× the investment. The arithmetic depends sharply on incident cost — fintech teams where an outage costs millions will see ROI of 5× or more. A low-stakes internal tool sees close to break-even.
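A minimal sketch of the formula above in code. All inputs are hypothetical placeholders for a single quarter; we read "pair-time" as hours per pair (the × 2 covers both developers) and model "throughput-value" as an assumed dollar value per avoided review round-trip. The sweep at the end varies only the incident cost, to show how sharply the ratio depends on it.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class PairingInputs:
    defects_avoided: float         # production defects prevented in the period
    avg_defect_cost: float         # dollars per production incident
    onboarding_hours_saved: float  # new-hire ramp-up hours saved
    review_cycles_saved: float     # PR review round-trips avoided
    value_per_review_cycle: float  # assumed dollar value of one avoided round-trip
    pair_hours: float              # hours spent pairing, counted once per pair
    hourly_rate: float             # loaded cost of one developer-hour


def pairing_roi(x: PairingInputs) -> float:
    """Benefits divided by (pair-time x 2 x hourly-rate), as in the formula above."""
    benefit = (
        x.defects_avoided * x.avg_defect_cost
        + x.onboarding_hours_saved * x.hourly_rate
        + x.review_cycles_saved * x.value_per_review_cycle
    )
    cost = x.pair_hours * 2 * x.hourly_rate
    return benefit / cost


# Hypothetical quarter for a $120/hr team; every number here is a placeholder.
base = PairingInputs(
    defects_avoided=10,
    avg_defect_cost=5_000,
    onboarding_hours_saved=100,
    review_cycles_saved=100,
    value_per_review_cycle=300,
    pair_hours=200,
    hourly_rate=120,
)

for incident_cost in (1_000, 5_000, 50_000):
    roi = pairing_roi(replace(base, avg_defect_cost=incident_cost))
    print(f"incident cost ${incident_cost:,}: ROI {roi:.2f}x")
```

With these made-up inputs the ratio moves from about 1.08 at a $1,000 incident cost to about 1.92 at $5,000 and above 11 at $50,000; your own inputs will land elsewhere.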

The spreadsheet that computes this lives inside PanDev Metrics as the AI Assistant view "Pair-vs-solo productivity by project" — you can ask natural-language questions like "compare defect rates for the payments team against the dashboard team over the last quarter" and get the ratio on a chart.

How to pilot pair programming without waste

If you're going to try it, try it as an experiment with measurable outputs:

- Pick 3-5 engineers who want to try it. Volunteer-first pilots have 3× the survival rate of mandates.

- Limit to complex-task pairing only. Define "complex" up front (e.g. "any ticket estimated 3+ points" or "anything touching payment flows").

- Track four metrics from the table above: coding time, change failure rate, PR review rounds, and onboarding time. Run for 8 weeks minimum.

- Measure the driver-navigator split. Nobody should type for 2 hours straight; rotate every 15-25 minutes. If your pairs aren't rotating, you have one person working and one watching.

- Kill or expand based on data. If review rounds dropped ≥30% and defect rate dropped ≥20%, expand. If neither moved, kill the pilot — it's theatre.
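The expand-or-kill rule above is simple enough to encode so nobody re-argues it after the fact. This sketch also includes the example "complex task" definition from the list. The 5% noise band for "didn't move" and the "inconclusive" outcome for mixed results are our assumptions; the list itself only defines expand and kill.

```python
def is_pairing_candidate(story_points: int, touches_payments: bool) -> bool:
    """Example 'complex task' definition: 3+ points or anything touching payment flows."""
    return story_points >= 3 or touches_payments


def relative_drop(before: float, after: float) -> float:
    """Fractional decrease from before to after (0.3 means a 30% drop)."""
    return (before - after) / before


def pilot_decision(
    review_rounds_before: float,
    review_rounds_after: float,
    defect_rate_before: float,
    defect_rate_after: float,
    noise: float = 0.05,  # assumed threshold below which a metric "didn't move"
) -> str:
    review_drop = relative_drop(review_rounds_before, review_rounds_after)
    defect_drop = relative_drop(defect_rate_before, defect_rate_after)
    if review_drop >= 0.30 and defect_drop >= 0.20:
        return "expand"
    if review_drop < noise and defect_drop < noise:
        return "kill"  # neither metric moved beyond the assumed noise band
    return "inconclusive"  # assumption: mixed results mean extend the pilot


# Hypothetical pilot that landed on the pair-team numbers from the table above.
print(pilot_decision(2.1, 1.3, 14.2, 8.7))
```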

What the data says about mob programming

Mob programming (3+ devs on one task) is pair programming's riskier cousin. Our dataset has only 3 teams with sustained mob signatures, so treat this as directional:

- Mob reduces defects further than pair (roughly −55% vs solo in these 3 teams, vs −39% for pair).

- Mob overhead is much higher (2.5-3× labor cost for ~55% defect reduction = net negative for most work).

- Mob ROI positive only on highest-criticality problems — regulatory code, cryptographic primitives, migration cutovers. Woody Zuill's original mob programming writeups confirm the pattern.

Don't mob for routine features. Mob when the cost of getting it wrong is catastrophic.

The contrarian view

The loudest pair-programming advocates frame it as a cultural practice. "We pair because we care about craft." Our data says the craft-narrative usually isn't what's driving the ROI — it's the review-round-trip reduction (1.3 vs 2.1). Pair programming works mostly because it compresses code review into the writing phase itself. If your team's bottleneck is review latency, pair programming is a high-leverage fix. If your bottleneck is elsewhere (requirements churn, deploy friction, flaky tests), pairing won't help you, no matter how much you love the idea.

The sharpest finding: in our 17 pair-programming teams, the defect reduction correlates 0.72 with the review-round-trip reduction, but only 0.31 with developer-reported satisfaction. The mechanism is technical, not cultural — even when the team thinks the opposite.
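If you want to test the same mechanism on your own teams, a plain Pearson correlation across teams is enough. The per-team numbers below are invented for illustration (five teams rather than our 17), so the coefficients they produce say nothing about our dataset.

```python
from math import sqrt
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equal-length samples (non-constant)."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two samples of equal length >= 2")
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


# Hypothetical per-team data: fractional reductions vs each team's solo baseline,
# plus a 1-5 satisfaction score from a developer survey.
defect_reduction = [0.42, 0.28, 0.40, 0.15, 0.37]
review_reduction = [0.45, 0.30, 0.38, 0.20, 0.41]
satisfaction = [3.9, 4.5, 3.6, 4.1, 4.0]

print(f"defects vs review cycles: {pearson(defect_reduction, review_reduction):.2f}")
print(f"defects vs satisfaction:  {pearson(defect_reduction, satisfaction):.2f}")
```

With only a handful of teams, a single outlier can swing the coefficient a lot, so treat any result as directional.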

Where to go next

- If your review cycle is slow, start there: Code review checklist.

- If onboarding is the motivation, see developer onboarding ramp data.

- If you want to measure defect reduction honestly, wire up change failure rate tracking before you pilot pairing — otherwise you're measuring vibes.

Pair programming isn't a silver bullet and isn't a waste. It's a targeted instrument with roughly 2× ROI on the right 20% of your team's work. The question isn't "should we pair?" — it's "which 20%?".