Peer Recognition Systems for Engineering Teams That Work

Every engineering org has tried the kudos bot. Most are dead within 9 months. A 2024 Gallup meta-analysis of 1.2M workers flagged something specific about technical roles: peer recognition drives 2.7× higher engagement lift than manager praise for engineers, but only when the recognition meets three criteria — specific behavior, public visibility, and timely delivery. The average Slack /kudos command meets none of them.

This is a playbook for a peer-recognition system that actually keeps running past year one. It works for teams of 10-200, costs under $50/engineer/year, and — contrary to most vendor decks — has nothing to do with points or badges.

{/* truncate */}

The problem: why most kudos systems die

The failure pattern is consistent:

- Month 1-3: leadership pushes adoption; 60% of engineers use it

- Month 4-6: the same 10-15 people keep posting; the long tail goes quiet

- Month 7-9: people stop reading the channel; posts stop

- Month 10+: the kudos bot is still installed but sends 2 messages a week, all birthdays

Harvard Business Review's 2023 study of 40 engineering orgs using peer-recognition software found the median system was abandoned in 11.3 months. The three causes HBR identified:

- Vague "thanks" with no behavior attached: "thanks for being awesome" adds no information

- Point / badge / leaderboard gamification: engineers read it, correctly, as childish and disengage

- Management hijacking: the moment a manager posts "kudos for shipping Q3 goals," the channel becomes performative

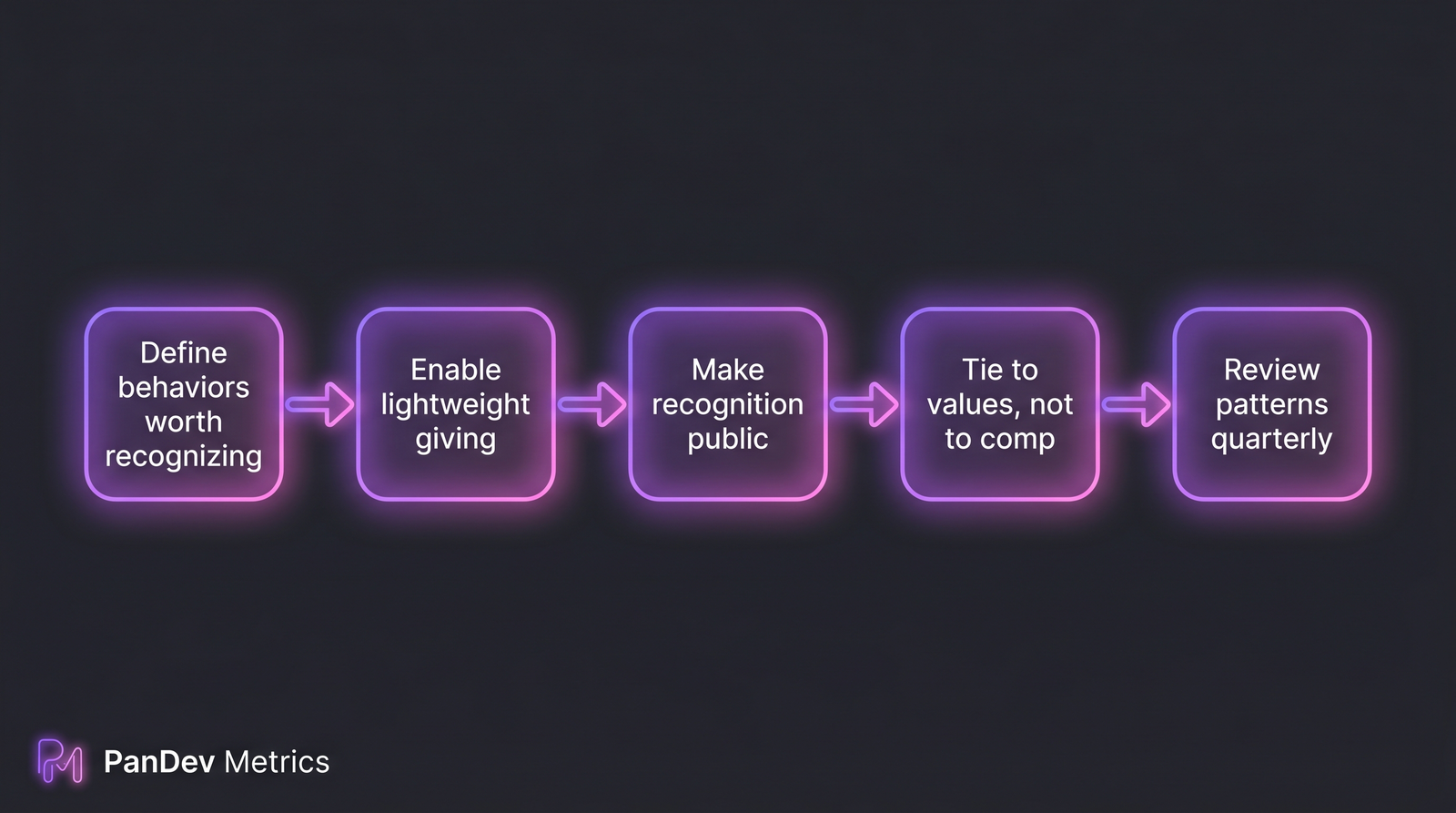

The 5-step recognition loop. Each step has a common failure mode that kills the system if skipped.

The framework: 5 steps

Step 1 — Define the behaviors worth recognizing

Don't launch a peer-recognition system without an explicit list of what "recognition-worthy" means. A common anti-pattern is leaving the definition abstract and expecting engineers to work it out.

A working list for most engineering orgs:

| Behavior | Example | Why recognize it |
|---|---|---|
| Unblocked someone | "Rewrote the migration script so the pipeline team could deploy" | Reduces org latency |
| Caught a production risk before launch | "Pushed back on the auth change during code review; it had a race condition" | High-value reviewing |
| Shared context that wasn't required | "Wrote up the fix plus a design note explaining why" | Compounds team knowledge |
| Taught someone a tool / pattern | "Pair-debugged k8s log issues with [junior]" | Mentorship without formal program |
| Cleaned up something nobody owned | "Deleted 120 dead npm deps across 4 repos" | Org hygiene most ignore |

Each behavior is observable (someone saw it happen) and specific (not "is a great teammate"). This is the foundation — skip it and the system degrades to generic thanks.

Step 2 — Enable giving in the tools engineers already use

Do not add a separate kudos portal. Engineers will not navigate to a new URL. Instead, embed recognition in existing flows:

- Slack: a `/shoutout @user behavior` command that posts to a team channel

- GitHub / GitLab: a bot that scans for "thanks @user for X" comments and cross-posts

- 1:1 note templates: a "peer shoutouts this week" field the engineering manager (EM) can ask about

Our own team uses the Slack + GitHub combination. The key is one-tap giving that is publicly visible and asks for nothing more than a single sentence.
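To make the two chat-side mechanisms concrete, here is a minimal Python sketch of the parsing step only. It assumes the bot receives the comment body or slash-command text as a plain string with a literal `@user` mention; the `Shoutout` type, both function names, and the regex are hypothetical illustrations, not part of any Slack or GitHub API. Receiving the webhook and posting to the channel are left out.

```python
import re
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical pattern for "thanks @user for X" (case-insensitive).
THANKS_RE = re.compile(r"\bthanks,?\s+@([\w-]+)\s+for\s+(.+)", re.IGNORECASE)
# Hypothetical pattern for the text after "/shoutout": "@user behavior".
COMMAND_RE = re.compile(r"\s*@([\w-]+)\s+(\S.*?)\s*", re.DOTALL)


@dataclass
class Shoutout:
    giver: str
    recipient: str
    behavior: str


def parse_thanks_comment(author: str, body: str) -> List[Shoutout]:
    """Find 'thanks @user for X' lines in a review or issue comment."""
    shoutouts = []
    for line in body.splitlines():
        match = THANKS_RE.search(line)
        if match is None:
            continue
        recipient = match.group(1)
        behavior = match.group(2).strip().rstrip(".!")
        if recipient.lower() == author.lower():
            continue  # skip self-thanks
        shoutouts.append(Shoutout(author, recipient, behavior))
    return shoutouts


def parse_shoutout_command(giver: str, text: str) -> Optional[Shoutout]:
    """Parse the text that follows a /shoutout command."""
    match = COMMAND_RE.fullmatch(text)
    if match is None:
        return None  # no recipient or no behavior: ask the giver to be specific
    return Shoutout(giver, match.group(1), match.group(2))


comment = "LGTM.\nThanks @dana for catching the race condition in the auth change."
for shoutout in parse_thanks_comment("lee", comment):
    print(f"{shoutout.giver} -> {shoutout.recipient}: {shoutout.behavior}")
```

Returning `None` when the behavior is missing is a deliberate choice in this sketch: a bare `/shoutout @sam` is the vague "thanks" the system is trying to avoid, so the bot can prompt for one sentence instead of posting it.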

Step 3 — Make recognition public by default

Private kudos do less work. A 2023 Deloitte study of 180 companies showed public peer recognition was 3.1× more predictive of retention than private thanks. The mechanism: public recognition tells the recognizer's team what "good" looks like. It's a culture-shaping artifact, not just a pat on the back.

A public #team-shoutouts channel, read by everyone, is worth ten private notifications.

Step 4 — Tie recognition to values, never to compensation

The moment peer recognition converts to points, dollars, or promotion credit, two things happen:

- Engineers start gaming it (posting to favored peers, trading kudos)

- Unpopular work (reliability, documentation, refactors) gets less recognized because it gets less noticed

Keep it explicitly non-monetary. No tier levels, no dollar conversion, no "top kudos-earner" awards. If someone's contributions are compensation-worthy, the comp process handles it separately.

This is the contrarian part. Most vendor recognition platforms push gamification because it's measurable. The measurable gets you vanity metrics; the unmeasurable (cultural shift) is what actually reduces attrition.

Step 5 — Review patterns quarterly, not individually

Every quarter, the EM and the HR business partner (HRBP) review aggregate patterns, not individual kudos counts. Questions to ask:

- Are certain people consistently invisible to peers? (may signal isolation, not low performance)

- Are certain behaviors under-recognized? (e.g., nobody is getting thanked for documentation — is nobody doing it, or is it being missed?)

- Is recognition equitable across demographics? (bias flag)

The right output is an org-level insight, not a "who got the most kudos" leaderboard. Skip this step and the recognition signal decays without you noticing.
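As a rough illustration of the first two questions, the Python sketch below turns a quarter of recognition records into pattern-level output. The record shape (`giver`, `recipient`, `behavior`), the function name, and the sample data are assumptions made for the example; the equity question needs demographic data and is not covered here. Note that it deliberately never ranks people by how many kudos they received.

```python
from collections import Counter


def quarterly_patterns(records, roster, behaviors):
    """Summarize a quarter of recognition without producing a leaderboard."""
    recognized = {record["recipient"] for record in records}
    counts = Counter(record["behavior"] for record in records)
    return {
        # People on the roster nobody recognized: a prompt to look closer,
        # not a performance verdict.
        "never_recognized": sorted(set(roster) - recognized),
        # Behaviors from the published list that drew no recognition.
        "unrecognized_behaviors": [b for b in behaviors if counts[b] == 0],
        # How often each listed behavior was recognized, org-wide.
        "behavior_counts": {b: counts[b] for b in behaviors},
    }


# Hypothetical sample data for one quarter.
roster = ["ana", "ben", "chi", "dev"]
behaviors = ["unblocked someone", "caught a production risk", "shared context"]
records = [
    {"giver": "ana", "recipient": "ben", "behavior": "unblocked someone"},
    {"giver": "ben", "recipient": "ana", "behavior": "caught a production risk"},
    {"giver": "chi", "recipient": "ben", "behavior": "unblocked someone"},
]

summary = quarterly_patterns(records, roster, behaviors)
print(summary["never_recognized"])        # ['chi', 'dev']
print(summary["unrecognized_behaviors"])  # ['shared context']
```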

Common mistakes

| Mistake | Why it hurts | Fix |
|---|---|---|
| Points / badges / levels | Reads as corporate, engineers disengage | Values-based, non-monetary |
| Only public at leader level | Can't see peer-to-peer dynamics | Public default, private by choice |
| Letting managers dominate posts | Becomes performance theater | Manager quota: at most one manager post per IC post |
| Using a generic platform | Doesn't match engineering vocabulary | Customize behaviors to your eng ladder |
| Tying to comp | Invites gaming | Hard separation, comp handled elsewhere |
| No quarterly review | Invisible decay | 30-min quarterly pattern review |
| "Employee of the month" | Zero-sum game, 1 winner + many losers | Multiple recognizers + multiple recipients |

The checklist

- List of 5-10 specific, observable behaviors published

- Giving mechanism embedded in Slack and/or GitHub (one-tap)

- Public channel active, with EM + IC posts

- Values-tied language, zero points/badges/dollars

- Quarterly pattern review on calendar (EM + HRBP)

- No leaderboards visible to individuals

- Manager posts limited to balance IC voice

How to measure if it's working

Don't track "kudos count per person." That's the trap. Track:

- % engineers who gave at least one recognition this month — target >50% sustained after month 6

- % engineers who received at least one this quarter — target >90%

- Time between recognizable behavior and recognition — target under 48h (latency kills feedback loops)

- Recognition channel read-rate — Slack analytics; declining read-rate signals decay
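The first three metrics are simple arithmetic over recognition records. The Python sketch below shows one way to compute them, assuming each record carries a `giver`, a `recipient`, and two timestamps; `happened_at` (when the behavior occurred) is an assumption of this example, since most tools only store when the recognition was posted. Function names and sample data are hypothetical, and read-rate is left to Slack analytics.

```python
from datetime import datetime
from statistics import median


def share_of_roster(people, roster):
    """Fraction of the roster that appears in `people` (0.0 to 1.0)."""
    roster = set(roster)
    if not roster:
        return 0.0
    return len(set(people) & roster) / len(roster)


def median_latency_hours(records):
    """Median hours between a behavior and its recognition, or None if empty."""
    gaps = [
        (record["recognized_at"] - record["happened_at"]).total_seconds() / 3600
        for record in records
    ]
    return median(gaps) if gaps else None


# Hypothetical sample: pass one month of records for the giver rate,
# one quarter of records for the receiver rate.
roster = ["ana", "ben", "chi", "dev"]
records = [
    {"giver": "ana", "recipient": "ben",
     "happened_at": datetime(2025, 3, 3, 10), "recognized_at": datetime(2025, 3, 3, 16)},
    {"giver": "ben", "recipient": "chi",
     "happened_at": datetime(2025, 3, 10, 9), "recognized_at": datetime(2025, 3, 12, 9)},
    {"giver": "ana", "recipient": "chi",
     "happened_at": datetime(2025, 3, 20, 12), "recognized_at": datetime(2025, 3, 21, 12)},
]

giver_rate = share_of_roster((r["giver"] for r in records), roster)
receiver_rate = share_of_roster((r["recipient"] for r in records), roster)
latency = median_latency_hours(records)

print(f"givers: {giver_rate:.0%} (target >50%)")        # givers: 50% (target >50%)
print(f"receivers: {receiver_rate:.0%} (target >90%)")  # receivers: 50% (target >90%)
print(f"median latency: {latency:.0f}h (target <48h)")  # median latency: 24h (target <48h)
```

The median is used for latency rather than the mean so that one very late shoutout does not dominate the number; that is a design choice of this sketch, not something the targets above prescribe.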

PanDev Metrics doesn't read your Slack or kudos data directly. What it does see: the behaviors people should be recognized for. When an engineer consistently contributes to repos or projects outside their primary scope (visible through multi-repo IDE activity), that's often invisible to management but obvious to peers — and worth naming. Teams using our performance review guide pair recognition-channel data with IDE telemetry to surface the "quiet contributors" — people doing high-value work across boundaries who rarely self-promote.

Honest limit: peer recognition systems are behavioral interventions. Their effects are observable at the team level (engagement, retention) but rarely traceable to individual productivity lifts. Anyone claiming "kudos system increased productivity 23%" is probably reading correlation as causation.

When this framework doesn't fit

- Teams under 8 engineers — too small; informal thanks in standups works better

- Heavily remote / async teams with 6+ hour timezone gaps — sync public channels lose recognition events across timezones; use async-friendly tools like written weekly team digests

- Cultures where public praise is uncomfortable — some regional cultures treat public recognition as loss of face or embarrassment; adapt to private-by-default with public opt-in