Planning Accuracy: How to Know If Your Team Overestimates or Underestimates Tasks

"This should take two days." Three weeks later, the feature is still in progress.

Steve McConnell, in Software Estimation: Demystifying the Black Art, found that software projects typically overrun initial estimates by 28-85%. The usual rescue makes things worse: Brooks's Law from The Mythical Man-Month holds that adding people to a late project makes it later, because ramp-up time and communication overhead grow faster than headcount. The PM is frustrated. The developer feels guilty. The roadmap is fiction. And the entire organization has quietly accepted that engineering estimates are unreliable.

This isn't a people problem. It's a measurement problem. And it's fixable.

{/* truncate */}

The Estimation Problem Nobody Wants to Admit

Every engineering team estimates. Story points, t-shirt sizes, hours, days — the format varies, but the outcome is remarkably consistent: estimates are wrong.

The question isn't whether estimates are wrong. It's whether they're wrong in a predictable, correctable direction.

This is what Planning Accuracy measures: not whether your team estimates perfectly (nobody does), but whether their estimation bias is consistent enough to compensate for, and whether it's improving over time.

Two types of estimation failure

| Failure mode | What it looks like | Business impact |
|---|---|---|
| Chronic underestimation | Tasks consistently take 2-3x longer than estimated | Missed deadlines, eroded stakeholder trust, death march sprints |
| Chronic overestimation | Tasks finish early but buffer time is wasted | Slow perceived velocity, sandbagged commitments, underutilized capacity |

Most teams suffer from underestimation. McConnell's 28-85% overrun range depends on how early the estimate was made: the earlier in the project an estimate is given, the larger its likely error.

But some teams — especially those burned by past deadline misses — swing the other way. They pad everything by 50-100%, delivering on time but at a pace that frustrates product teams.

Both patterns are problems. Both are fixable with data.

What Planning Accuracy Looks Like in Practice

Planning Accuracy in PanDev Metrics compares estimated effort (hours, story points, or days — whatever your team uses) against actual effort (measured through IDE activity data and task completion timestamps).

The formula is straightforward:

Planning Accuracy = 1 − |Estimated − Actual| / Estimated

A score of 1.0 means perfect estimation. A score of 0.5 means your estimates are off by 50%. A negative score means the task took more than twice its estimate (for example, a 2-day task that took more than 4 days).
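The formula translates directly into code. This is a minimal sketch, not PanDev Metrics' implementation; the function names `planning_accuracy` and `relative_error` are hypothetical. It also computes a signed error, since the score alone does not say whether a task ran over or finished early.

```python
def planning_accuracy(estimated: float, actual: float) -> float:
    """Planning Accuracy = 1 - |estimated - actual| / estimated."""
    if estimated <= 0:
        raise ValueError("estimated effort must be positive")
    return 1 - abs(estimated - actual) / estimated


def relative_error(estimated: float, actual: float) -> float:
    """Signed error: positive means the task ran over (an underestimate),
    negative means it finished early (an overestimate)."""
    if estimated <= 0:
        raise ValueError("estimated effort must be positive")
    return (actual - estimated) / estimated


# A 2-day estimate that took 5 days misses by 150% of the estimate,
# so its score drops below zero.
print(planning_accuracy(2, 5))  # -0.5
print(relative_error(2, 5))     # 1.5
```

Note the asymmetry: an early finish can never score below 0 (the worst case is a task that took no time at all), while an overrun can push the score arbitrarily far below zero.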

Example: A real sprint breakdown

| Task | Estimated (days) | Actual (days) | Planning Accuracy |
|---|---|---|---|
| User auth refactor | 3 | 5 | 0.33 |
| Search API endpoint | 2 | 2.5 | 0.75 |
| Dashboard widget | 1 | 0.5 | 0.50 |
| CSV export | 2 | 2 | 1.00 |
| Payment integration | 5 | 8 | 0.40 |
| Bug fix batch | 1 | 1 | 1.00 |
| Sprint total | 14 | 19 | 0.64 |

This sprint has a Planning Accuracy of 0.64 — not terrible, but with a clear underestimation bias. The two largest tasks (auth refactor and payment integration) drove most of the miss. This is a common pattern: large tasks have worse estimation accuracy than small tasks.
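The sprint table can be reproduced in a few lines of Python. This sketch hard-codes the six tasks above, scores the sprint on its totals as the table does, and also computes the unweighted per-task mean for comparison. The two aggregations answer different questions: the totals-based score (0.64) weights tasks by size and lets the dashboard widget's early finish offset part of the overruns, while the per-task mean (about 0.66) counts the 1-day bug fix batch as heavily as the 5-day payment integration.

```python
# (name, estimated days, actual days), taken from the sprint table above
sprint = [
    ("User auth refactor", 3, 5),
    ("Search API endpoint", 2, 2.5),
    ("Dashboard widget", 1, 0.5),
    ("CSV export", 2, 2),
    ("Payment integration", 5, 8),
    ("Bug fix batch", 1, 1),
]


def planning_accuracy(estimated, actual):
    return 1 - abs(estimated - actual) / estimated


per_task = {name: planning_accuracy(est, act) for name, est, act in sprint}

# Sprint-level score computed on totals, as in the table's last row
total_est = sum(est for _, est, _ in sprint)
total_act = sum(act for _, _, act in sprint)
sprint_score = planning_accuracy(total_est, total_act)

# Unweighted average of the per-task scores, for comparison
mean_score = sum(per_task.values()) / len(per_task)

# Overrun in days per task, largest first: shows which tasks drove the miss
overruns = sorted(((act - est, name) for name, est, act in sprint), reverse=True)

print(f"sprint total: {total_est} est, {total_act} actual, score {sprint_score:.2f}")
print(f"per-task mean score: {mean_score:.2f}")
for days, name in overruns[:2]:
    print(f"  {name}: +{days} days")
```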

Why Developers Can't Estimate (And It's Not Their Fault)

The planning fallacy

Daniel Kahneman and Amos Tversky identified the "planning fallacy" in 1979: people systematically underestimate the time needed to complete future tasks, even when they know similar tasks took longer in the past.

For developers, this manifests as:

- Remembering the coding time but forgetting the debugging time

- Assuming the happy path without accounting for edge cases

- Not factoring in code review cycles, deployment issues, or dependency delays

- Estimating based on "how long it would take if everything goes right"

Unknown unknowns

Software estimation is fundamentally harder than estimating physical tasks because the scope of unknowns is unknown. A carpenter can estimate a bookshelf because they've built hundreds. A developer building a new microservice has variables they literally cannot foresee: API quirks, library bugs, infrastructure issues, security requirements that emerge mid-development.

The anchoring effect

In sprint planning, the first estimate spoken aloud anchors all subsequent discussion. If a senior developer says "that's a 3-pointer," junior developers hesitate to disagree even when their gut says it's an 8. Planning Poker was designed to prevent this, but in practice, many teams have abandoned it for "quick" verbal estimates that are heavily anchored.
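Planning Poker counters anchoring by having everyone choose a card privately and reveal at the same moment, so no single voice sets the number. The reveal step can be sketched as follows. The card deck, the `reveal` function, and the rule that votes more than one card apart trigger discussion are assumptions for illustration; teams run several variants of the game.

```python
# A common modified-Fibonacci deck (assumed; teams vary)
DECK = [1, 2, 3, 5, 8, 13, 20]


def reveal(votes: dict[str, int]) -> tuple[str, object]:
    """Compare privately chosen cards after a simultaneous reveal.

    Returns ("agree", estimate) when all votes sit within one card of each
    other, else ("discuss", (low_voters, high_voters)) so the outliers can
    explain their reasoning before the team re-votes.
    """
    for person, card in votes.items():
        if card not in DECK:
            raise ValueError(f"{person} played {card}, which is not in the deck")
    positions = {person: DECK.index(card) for person, card in votes.items()}
    low, high = min(positions.values()), max(positions.values())
    if high - low <= 1:
        # Adjacent cards: take the higher one (hypothetical tie-break rule)
        return "agree", DECK[high]
    low_voters = sorted(p for p, i in positions.items() if i == low)
    high_voters = sorted(p for p, i in positions.items() if i == high)
    return "discuss", (low_voters, high_voters)


# The senior says 3, the junior privately thinks 8: the gap now surfaces.
print(reveal({"senior": 3, "junior": 8, "mid": 5}))
# ('discuss', (['senior'], ['junior']))
```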

Patterns We See Across Engineering Teams

Analyzing Planning Accuracy data from PanDev Metrics across B2B engineering teams reveals consistent patterns, many of them the planning fallacy described by Kahneman and Tversky in action:

Pattern 1: Small tasks are estimated well, large tasks are not

| Task size | Avg. Planning Accuracy | Direction of error |
|---|---|---|
| < 4 hours | 0.82 | Slight overestimate |
| 4–8 hours (1 day) | 0.71 | Slight underestimate |
| 1–3 days | 0.58 | Underestimate by ~40% |
| 3–5 days | 0.45 | Underestimate by ~55% |
| 5+ days | 0.31 | Underestimate by ~70% |

The lesson is clear: break tasks into pieces smaller than one day wherever possible. A 5-day task estimated as five 1-day subtasks tends to be estimated more accurately than a single 5-day block, even though the total scope is identical.
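To check whether this pattern holds for your own team, group completed tasks by estimated size and average the score per bucket. This is a minimal sketch: the bucket labels mirror the table, while the 8-hour working day, the boundary handling, the function names, and the sample history are assumptions, not data from the table.

```python
from collections import defaultdict

HOURS_PER_DAY = 8  # assumption: converts the table's day buckets to hours


def bucket_for(estimated_hours: float) -> str:
    """Map an estimate to the size buckets used in the table above.

    Boundary handling (e.g. whether exactly 8 hours counts as "4-8 hours")
    is a judgment call; the table does not specify it.
    """
    if estimated_hours < 4:
        return "< 4 hours"
    if estimated_hours <= 1 * HOURS_PER_DAY:
        return "4-8 hours"
    if estimated_hours <= 3 * HOURS_PER_DAY:
        return "1-3 days"
    if estimated_hours <= 5 * HOURS_PER_DAY:
        return "3-5 days"
    return "5+ days"


def accuracy_by_size(tasks):
    """tasks: iterable of (estimated_hours, actual_hours) pairs.

    Returns the average Planning Accuracy per size bucket.
    """
    scores = defaultdict(list)
    for est, act in tasks:
        scores[bucket_for(est)].append(1 - abs(est - act) / est)
    return {label: sum(s) / len(s) for label, s in scores.items()}


# Hypothetical history (estimated hours, actual hours), not real data
history = [(2, 2), (3, 2.5), (6, 8), (16, 22), (32, 50), (48, 100)]
for label, score in accuracy_by_size(history).items():
    print(f"{label:>10}: {score:.2f}")
```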

Pattern 2: Estimation accuracy improves with feedback loops

Teams that review their Planning Accuracy data after each sprint show measurable improvement:

| Sprint # | Avg. Planning Accuracy (no review) | Avg. Planning Accuracy (with review) |
|---|---|---|
| 1 | 0.52 | 0.51 |
| 2 | 0.49 | 0.56 |
| 3 | 0.53 | 0.61 |
| 4 | 0.50 | 0.65 |
| 5 | 0.51 | 0.68 |
| 6 | 0.48 | 0.72 |

Without feedback, teams hover around 0.50 indefinitely, meaning their estimates stay off by about half. With regular review, they improve to 0.70+ within 6 sprints. The review habit, not a change in talent, makes the difference.

Pattern 3: Tuesday velocity predicts sprint success

Tuesday is the peak coding day across our dataset. Teams that front-load complex tasks to Monday-Tuesday have better sprint completion rates than teams that distribute them evenly. A likely explanation: when Tuesday goes well, the rest of the sprint has momentum. When the hardest tasks are left to Thursday-Friday, risks accumulate with no slack left to absorb them.

Pattern 4: Language and framework affect estimation accuracy

| Primary language | Avg. Planning Accuracy | Likely cause |
|---|---|---|
| Python | 0.68 | Rapid prototyping, fewer surprises |
| TypeScript | 0.62 | Frontend complexity, design iterations |
| Java | 0.57 | Boilerplate overhead, enterprise complexity |
| Multi-language projects | 0.48 | Context switching, integration issues |

Java projects (2,107 hours in our dataset, the most of any language) tend to have lower Planning Accuracy. This likely reflects both the language's verbosity and the enterprise environments where Java dominates: more stakeholders, more compliance requirements, more "surprises" during implementation.

How to Improve Planning Accuracy: A Framework for CPOs and PMs

Step 1: Start tracking the gap

Before you can improve, you need a baseline. For every task, record:

- Estimated effort (in whatever unit your team uses)

- Actual effort (measured, not self-reported)

- Date estimated, date started, date completed

- Whether scope changed mid-task
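One way to structure these records is a small data class. The field names, the `TaskRecord` class, and the ticket ID below are hypothetical, chosen to match the list above; they are not a PanDev Metrics schema. Keeping `scope_changed` as an explicit flag lets you exclude or separately analyze tasks whose target moved mid-flight.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class TaskRecord:
    ticket: str
    estimated: float          # in the team's unit (hours, points, days)
    actual: float             # measured effort, same unit as `estimated`
    estimated_on: date
    started_on: date
    completed_on: date
    scope_changed: bool = False

    @property
    def planning_accuracy(self) -> float:
        return 1 - abs(self.estimated - self.actual) / self.estimated

    @property
    def lead_time_days(self) -> int:
        # Calendar days from start to completion (elapsed time, not effort)
        return (self.completed_on - self.started_on).days


# Hypothetical task: estimated at 5 days, took 8, scope changed mid-task
task = TaskRecord("PAY-42", estimated=5, actual=8,
                  estimated_on=date(2026, 3, 2), started_on=date(2026, 3, 3),
                  completed_on=date(2026, 3, 13), scope_changed=True)
print(task.planning_accuracy, task.lead_time_days)
```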

PanDev Metrics automates the "actual effort" part through IDE tracking. When a developer works on a task tagged to a specific ticket, the system records how much active coding time went into it.

Step 2: Identify your bias direction

After 2-3 sprints, calculate your team's average Planning Accuracy and bias direction. Most teams will find they consistently underestimate. This is normal.

| Bias direction | What to do |
|---|---|
| Consistent underestimate by 20-40% | Apply a 1.3x multiplier to estimates as a starting correction |
| Consistent underestimate by 50%+ | Tasks are too large: break them down before estimating |
| Consistent overestimate by 20%+ | Reduce padding: your team is sandbagging (possibly unconsciously) |
| Random: sometimes over, sometimes under | Estimation process is broken: try different granularity or estimation methods |
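The table above can be turned into a rough classifier. This sketch assumes "consistent" means at least 75% of tasks miss in the same direction, uses the median signed error as the size of the bias, and treats a median miss under 20% as well calibrated. Those thresholds, the `classify_bias` name, and the folding of the table's unlisted 40-50% band into the multiplier case are assumptions, not rules from the table.

```python
from statistics import median


def classify_bias(tasks, consistency=0.75):
    """Map a team's history onto the bias table above.

    tasks: list of (estimated, actual) pairs in the same unit.
    A positive signed error means the task ran over (an underestimate).
    """
    errors = [(act - est) / est for est, act in tasks]
    if median(abs(e) for e in errors) < 0.2:
        return "well calibrated: no correction needed"
    share_under = sum(e > 0 for e in errors) / len(errors)
    share_over = sum(e < 0 for e in errors) / len(errors)
    typical = median(errors)  # size and direction of the bias

    if share_under >= consistency and typical >= 0.5:
        return "underestimate 50%+: break tasks down before estimating"
    if share_under >= consistency and typical >= 0.2:
        # Multiplier derived from the data; the table's starting point is 1.3x
        return f"underestimate: apply a {1 + typical:.1f}x multiplier"
    if share_over >= consistency and typical <= -0.2:
        return "overestimate: reduce padding"
    return "random: try different granularity or estimation methods"


print(classify_bias([(2, 2.6), (3, 3.9), (1, 1.3), (4, 5.2)]))
```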

Step 3: Implement reference class forecasting

Instead of estimating from scratch, compare new tasks to completed similar tasks and use their actual duration as the baseline. PanDev Metrics maintains a historical record of task durations by type, making reference class forecasting practical.

Example: "The last three API endpoints took 1.5, 2, and 2.5 days. This one is similar in complexity. Estimate: 2 days."

This approach, recommended by Kahneman, dramatically reduces the planning fallacy because it anchors on actual outcomes rather than optimistic projections.
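The API-endpoint example reduces to a lookup and a median. A minimal sketch, assuming completed tasks are already labeled by type; the `HISTORY` data and the `reference_class_estimate` name are hypothetical. The median is used rather than the mean so that one unusually slow task does not drag the baseline.

```python
from statistics import median

# Hypothetical history: actual durations (days) of completed tasks by type
HISTORY = {
    "api_endpoint": [1.5, 2.0, 2.5],
    "dashboard_widget": [0.5, 1.0],
}


def reference_class_estimate(task_type: str, history=HISTORY):
    """Anchor a new estimate on what similar tasks actually took."""
    actuals = history.get(task_type)
    if not actuals:
        raise LookupError(f"no completed '{task_type}' tasks to compare against")
    return {
        "baseline": median(actuals),
        "range": (min(actuals), max(actuals)),
        "sample_size": len(actuals),
    }


print(reference_class_estimate("api_endpoint"))
# {'baseline': 2.0, 'range': (1.5, 2.5), 'sample_size': 3}
```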

Step 4: Make Planning Accuracy a sprint metric

Add it to your sprint retrospective dashboard alongside velocity and burndown. When the team sees their accuracy score, they naturally start to calibrate.

Don't use it punitively. Planning Accuracy is not a score that determines bonuses or performance reviews. It's a calibration tool. If a team's accuracy drops because they took on a novel technical challenge, that's expected and healthy.

Step 5: Communicate uncertainty, not dates

Instead of "this will ship on March 15," say "our Planning Accuracy is 0.65 with an underestimation bias. Based on our estimate of 10 days, the likely range is 10-16 days." Stakeholders can handle uncertainty — what they can't handle is surprise.
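The arithmetic behind such a statement: with an underestimation bias, a score of 0.65 means tasks typically run about 35% over, so a 10-day estimate points to roughly 13.5 days. The sketch below computes that point forecast and, as an assumption beyond the text, a range from the spread of the team's past actual-to-estimate ratios. The function names and the sample ratios are hypothetical.

```python
from statistics import quantiles


def point_forecast(estimate: float, accuracy: float, bias: str = "under") -> float:
    """Invert Planning Accuracy: a score of 0.65 implies a typical 35% miss."""
    if bias not in ("under", "over"):
        raise ValueError("bias must be 'under' or 'over'")
    miss = 1 - accuracy
    return estimate * (1 + miss) if bias == "under" else estimate * (1 - miss)


def forecast_range(estimate: float, past_ratios: list[float]) -> tuple[float, float]:
    """10th-90th percentile range of past actual/estimate ratios, scaled.

    Uses the 'inclusive' method so the range never extends beyond the
    ratios actually observed; needs at least two past tasks.
    """
    cuts = quantiles(past_ratios, n=10, method="inclusive")  # 9 cut points
    return estimate * cuts[0], estimate * cuts[-1]


# Hypothetical actual/estimate ratios from recent sprints
ratios = [1.0, 1.1, 1.2, 1.3, 1.35, 1.4, 1.5, 1.6]
low, high = forecast_range(10, ratios)
print(f"expected ~{point_forecast(10, 0.65):.1f} days, range {low:.1f}-{high:.1f} days")
```

With these sample ratios the sketch reports about 13.5 days expected and a 10.7-15.3 day range, close to the 10-16 days in the example statement.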

The Cost of Bad Estimates

Poor Planning Accuracy has compounding costs:

| Impact area | Cost |
|---|---|
| Missed commitments | Eroded trust with customers, sales, and leadership |
| Overtime/crunch | Burnout and attrition: our data shows coding time spikes before deadlines, followed by crashes |
| Sandbagging | Reduced throughput as teams pad estimates to protect themselves |
| Bad hiring decisions | "We need more developers" when the real problem is estimation and process |
| Product delays | Features promised to customers arrive late, affecting revenue |

The hiring row is Brooks's Law in action: "adding manpower to a late software project makes it later." One VP of Engineering we spoke with summarized it well: "We hired two developers to fix a velocity problem. It didn't help because the problem wasn't capacity — it was that our estimates were 2x wrong, so we were always behind no matter how many people we added."

Planning Accuracy as a Leading Indicator

Planning Accuracy is one of the few leading indicators available to engineering leadership. DORA metrics tend to show degradation only after the damage is done, whereas Planning Accuracy trends can give you weeks of warning:

- Dropping accuracy → team is taking on unfamiliar work or has hidden blockers

- Increasing bias toward underestimation → scope creep or growing tech debt

- Sudden accuracy improvement → team may be sandbagging to hit numbers
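These three signals can be checked mechanically at the end of each sprint. A minimal sketch: each sprint is summarized as a (Planning Accuracy, mean signed error) pair, and the latest sprint is compared with the average of the earlier ones. The `leading_indicators` name and its thresholds are hypothetical starting points, not values from PanDev Metrics.

```python
from statistics import mean


def leading_indicators(history, drop=0.10, jump=0.15, drift=0.10):
    """history: list of (accuracy, mean_signed_error) per sprint, oldest first.

    A positive signed error means work ran over its estimate.
    Returns warning strings for the latest sprint.
    """
    if len(history) < 3:
        return []  # too little history to call anything a trend
    *past, (acc, err) = history
    base_acc = mean(a for a, _ in past)
    base_err = mean(e for _, e in past)
    warnings = []
    if acc <= base_acc - drop:
        warnings.append("accuracy dropping: unfamiliar work or hidden blockers?")
    if err >= base_err + drift:
        warnings.append("bias shifting toward underestimation: scope creep or tech debt?")
    if acc >= base_acc + jump:
        warnings.append("sudden improvement: check for sandbagging")
    return warnings


# Hypothetical sprints: the latest one drops sharply and runs further over
print(leading_indicators([(0.62, 0.20), (0.60, 0.22), (0.64, 0.18), (0.45, 0.40)]))
```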

When you combine Planning Accuracy with Activity Time data (the median in our data is 78 minutes per day, which shows what is realistic), you can build roadmaps grounded in what your team actually does, not what you wish they did.

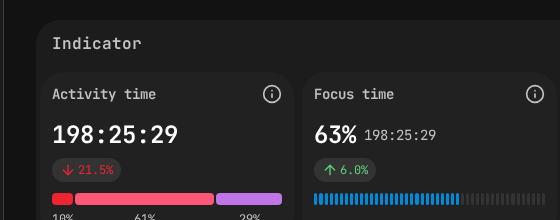

Planning Accuracy indicator showing actual vs estimated delivery.

Based on aggregated data from PanDev Metrics Cloud (April 2026). Estimation patterns observed across B2B engineering teams. References: Steve McConnell, "Software Estimation: Demystifying the Black Art" (2006); Daniel Kahneman, "Thinking, Fast and Slow" (2011); Fred Brooks, "The Mythical Man-Month" (1975).

Want to track your team's Planning Accuracy automatically? PanDev Metrics connects IDE activity to your task tracker, measuring actual effort against estimates — no manual timesheets required.