Pluralsight Flow vs Jellyfish vs LinearB in 2026: Honest Comparison

Three names get pasted into every Engineering Intelligence shortlist in 2026: Pluralsight Flow, Jellyfish, and LinearB. Three different histories, three different buyers, three completely different bets on what an EI platform should be. And yet the average mid-market engineering leader spends two weeks evaluating all three and walks away unsure which one fits.

The confusion isn't accidental. All three vendors describe themselves with overlapping language ("engineering intelligence", "DORA metrics", "data-driven engineering") while internally optimizing for very different ideal customer profiles (ICPs). The 2023 DORA State of DevOps Report (DORA, Google Cloud) flagged this exact problem: the tooling category had outpaced the buyer's mental model. Most teams pick the wrong platform not because the platforms are bad, but because the platforms aren't even competing on the same axis.

This piece untangles it. No vendor pitch. We'll name where each wins and where each is wrong for you.

{/* truncate */}

Quick verdict (TL;DR)

If you only read one paragraph:

- Pluralsight Flow wins for Atlassian-heavy shops where Jira is the operating system and you want manager-level cycle-time charts without a procurement war. Loses on pricing transparency and roadmap velocity.

- Jellyfish wins for CFO-driven engineering analytics: R&D capitalization, OKR rollup, finance-aligned reporting. Loses for teams that just want to ship faster.

- LinearB wins for DevOps-mature teams who already speak DORA fluently and want workflow automation (gitStream, WorkerB) on top of metrics. Loses on price and absence of on-prem.

And the contrarian footnote that almost no analyst will write: for engineering organizations under 100 developers, the $25K+/yr Big Three rarely beat a $5-8K/yr alternative on actual feature usage. The premium pays for per-seat enterprise plumbing (SSO depth, custom roles, dedicated CSM), not metric superiority.

Pluralsight Flow in 2026

Flow has had three names and two corporate parents. It launched in 2015 as GitPrime, the first vendor to render Git history into manager dashboards. Pluralsight acquired it in 2019, rebranded to Pluralsight Flow, and in 2024 spun it out to Appfire as part of a portfolio divestiture. Each handoff cost momentum. We've spoken to three teams whose Flow renewals went sideways in the Appfire transition: pricing tightened, support response slowed, and the public roadmap visibly thinned.

What Flow still does well:

- Native Atlassian integration. Appfire is an Atlassian Marketplace giant. If your org runs on Jira + Bitbucket, Flow's PR cycle-time charts and code-review heatmaps require almost zero setup.

- Manager-friendly UI. The cycle-time and code-review dashboards are mature. EMs don't need DORA literacy to extract value.

- Cohort comparisons. Flow's strongest unique feature, comparing a team's metrics against an industry cohort, still works and still has buyers.

Where Flow is weaker in 2026:

- No IDE-level telemetry. Flow is Git-and-tracker only. It can't distinguish AI-assisted commits from human ones, and senior ICs who spend most of their day reading code look idle.

- Pricing opacity. No public pricing. Customer-reported numbers on G2 cluster around $25-50/user/month with annual commitments. Discounts from the Pluralsight era reportedly tightened post-Appfire.

- Slowed integration cadence. Support for newer trackers (Linear, Height), new AI coding tools, and new Git providers lands slowly. We covered this in detail in our best Pluralsight Flow alternative writeup.

ICP for Flow in 2026: mid-market Atlassian-loyal shops, 100-500 engineers, EM-led adoption, low DORA literacy, no on-prem requirement.

Jellyfish in 2026

Jellyfish takes a completely different bet: the buyer of an EI platform isn't the VP of Engineering. It's the CFO. The platform was built from day one around engineering operations management (EOM) language: R&D capitalization, allocation by initiative, OKR-to-effort mapping, finance-friendly reporting.

What Jellyfish does well:

- R&D capitalization reporting. For US public companies with capitalizable software development costs, Jellyfish's "investment categories" feature reduces what was an internal audit nightmare to a generated report. We've seen Finance teams sign Jellyfish over the EM's objections specifically for this.

- OKR and initiative alignment. Jellyfish ties tracker work back to declared business initiatives. The dashboards a CFO opens look like FP&A reports, not DevOps dashboards.

- Custom allocation models. "How much engineering investment went into Customer X's roadmap requests last quarter?" Jellyfish answers this well.

Where Jellyfish is weaker:

- DORA depth is shallower. Jellyfish ships DORA, but it's a checkbox feature next to cycle time and capitalization. Teams who actually run DORA reviews tend to graduate to LinearB.

- Cost. Public reports on TrustRadius and G2 indicate enterprise contracts starting at $40-60K/yr for 50-100 engineers. R&D capitalization buyers absorb the cost. Pure engineering buyers balk.

- No on-prem. Cloud-only. Regulated industries (fintech, gov, defense) can't deploy it.

ICP for Jellyfish in 2026: US-domiciled mid-market and enterprise with serious capitalization or board-level reporting needs. CFO co-signs the purchase order. 200+ engineers typical.

LinearB in 2026

LinearB came to the category late (founded 2019) and built a thesis around DORA + workflow automation. Product hooks include gitStream (PR routing rules), WorkerB (Slack-bot reminders for stale reviews), and DORA dashboards backed by what they cite as analysis of 8.1 million pull requests.

What LinearB does well:

- DORA-native UI. Lead Time for Changes, Deployment Frequency, MTTR, Change Failure Rate are first-class objects, not derived charts.

- Workflow automation. gitStream routes PRs based on file paths and reviewer expertise; WorkerB nudges reviewers. Teams that adopt these features see measurable cycle-time improvement (LinearB publishes case-study deltas; treat them as directional).

- DevOps-mature buyer fit. If your team already runs DORA reviews, LinearB's vocabulary matches yours from minute one.
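For readers who have not computed the four DORA metrics by hand, here is a minimal sketch of what "first-class" means in practice. It assumes a hypothetical `Deployment` record with commit, deploy, and restore timestamps; the field names and the choice of median for lead time are our illustration, not LinearB's data model.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """One production deployment (hypothetical record shape)."""
    commit_at: datetime                      # earliest commit included in the deploy
    deployed_at: datetime                    # when it reached production
    failed: bool = False                     # did it trigger a production incident?
    restored_at: Optional[datetime] = None   # when service was restored, if it failed


def dora_summary(deploys: list[Deployment], window_days: int) -> dict:
    """Compute the four DORA metrics over a fixed observation window."""
    if not deploys:
        raise ValueError("need at least one deployment")

    lead_times = [d.deployed_at - d.commit_at for d in deploys]
    failures = [d for d in deploys if d.failed]
    restores = [d.restored_at - d.deployed_at for d in failures if d.restored_at]

    return {
        "deployment_frequency_per_day": len(deploys) / window_days,
        "median_lead_time_hours": median(lt.total_seconds() for lt in lead_times) / 3600,
        "change_failure_rate": len(failures) / len(deploys),
        # None when nothing failed (or nothing has been restored yet)
        "mean_time_to_restore_hours": (
            sum(r.total_seconds() for r in restores) / len(restores) / 3600
            if restores else None
        ),
    }


# Made-up sample: four deploys in one week, one of which failed.
t0 = datetime(2026, 1, 5, 9, 0)
sample = [
    Deployment(t0, t0 + timedelta(hours=4)),
    Deployment(t0, t0 + timedelta(hours=8), failed=True,
               restored_at=t0 + timedelta(hours=10)),
    Deployment(t0, t0 + timedelta(hours=24)),
    Deployment(t0, t0 + timedelta(hours=2)),
]
print(dora_summary(sample, window_days=7))
```

If your team cannot produce the inputs to a function like this today (deploy timestamps, incident links), a DORA-native UI will not fix that on its own.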

Where LinearB is weaker:

- No IDE-level data. Same blind spot as Flow. Coding time and AI-tool usage are invisible.

- Pricing. Customer-reported numbers cluster around $35-50/user/month in the mid-tier. For a 50-developer team, seat math alone gives $21-30K/yr; customer-reported contract totals run higher, starting at $30-40K/yr.

- No on-prem. Cloud-only. Some regulated buyers report this as a hard blocker.

ICP for LinearB in 2026: DevOps-mature mid-market engineering teams who treat DORA as their primary measurement model, have a release engineering function, and don't need on-prem.

Side-by-side: 18 criteria

This is the table most evaluations skip and most buyers wish they had. Pricing data is customer-reported (G2, TrustRadius, sales-call leaks). Treat ±20% as the realistic uncertainty.

| Criterion | Pluralsight Flow | Jellyfish | LinearB |
|---|---|---|---|
| DORA metrics | Partial | Yes (basic) | Yes (deep) |
| IDE heartbeat telemetry | No | No | No |
| Cycle time / PR analytics | Yes (strong) | Yes | Yes (strong) |
| R&D capitalization | No | Yes (best-in-class) | No |
| OKR / initiative mapping | Partial | Yes | Partial |
| Workflow automation (gitStream-style) | No | No | Yes |
| AI coding-tool detection | No | No | Partial |
| Cohort benchmarks | Yes (unique) | Partial | Yes |
| Burnout / health signals | Partial | No | Partial |
| On-prem Docker | No | No | No |
| On-prem Kubernetes | No | No | No |
| Air-gapped deployment | No | No | No |
| GitHub / GitLab / Bitbucket | All | All | All |
| Jira integration depth | Best | Yes (deep) | Yes |
| Russian / non-English UI | No | No | No |
| Multi-tenant org structure | Partial | Yes | Yes |
| Public pricing | No | No | No |
| Customer-reported start price (50 devs) | $25-30K/yr | $40-60K/yr | $30-40K/yr |

Three things stand out from that table.

First: none of the three offer on-prem. This is the most consistent gap in the entire category. Every regulated-industry buyer we've spoken to in the last twelve months listed on-prem as a hard requirement and ended up looking outside the Big Three.

Second: none of the three ingest IDE telemetry. All three rely on Git + tracker events. That means none can answer "how much active coding time does a senior engineer actually spend?" with editor-accurate numbers, the metric that, per Microsoft Research (Forsgren, Storey, et al., 2020), correlates with self-reported productivity better than PR cycle time does.

Third: gitStream is LinearB's actual moat. Of the differentiating features, only LinearB's PR-routing automation is genuinely hard to replicate. Cohort benchmarks (Flow) and capitalization (Jellyfish) are configuration features any vendor can ship. gitStream is rules-engine product surface that took years to build.
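To make "PR routing by file path" concrete, here is a toy sketch of the idea in plain Python. This is not gitStream's configuration syntax or API; the rule table, reviewer names, and the `route_pr` function are all hypothetical, and a real rules engine also has to handle expertise scoring, load balancing, and escalation.

```python
from fnmatch import fnmatchcase

# Hypothetical rules: (glob pattern, reviewers who own matching paths).
# Note: fnmatch-style "*" also matches "/" so "infra/*.tf" covers nested dirs.
ROUTING_RULES = [
    ("services/billing/*", ["alice", "bob"]),
    ("infra/*.tf", ["carol"]),
    ("docs/*", ["dave"]),
]
DEFAULT_REVIEWERS = ["team-lead"]  # fallback when no rule matches


def route_pr(changed_files, author, rules=ROUTING_RULES, default=DEFAULT_REVIEWERS):
    """Return a sorted list of reviewers for a PR, never including its author."""
    reviewers = set()
    for path in changed_files:
        for pattern, owners in rules:
            if fnmatchcase(path, pattern):
                reviewers.update(owners)
    reviewers.discard(author)  # authors should not review their own PR
    return sorted(reviewers) if reviewers else list(default)


print(route_pr(["services/billing/api/invoice.py", "README.md"], author="alice"))
print(route_pr(["README.md"], author="erin"))
```

The twenty lines above are the easy part. The years of product surface go into everything around them, which is why we call this the moat.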

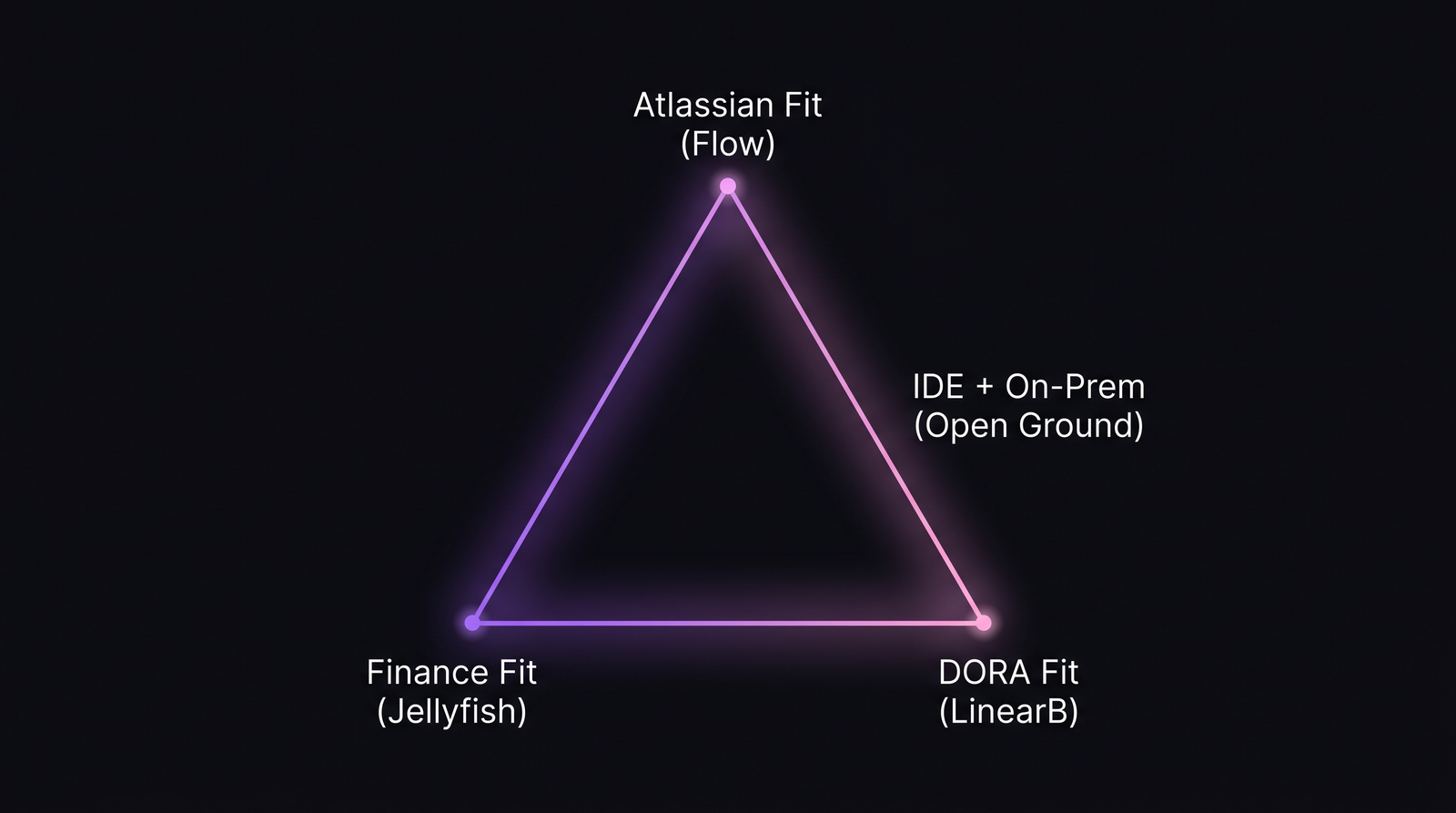

Three corners of the same category. The fourth axis (IDE telemetry plus on-prem) is open ground the Big Three haven't entered.

Decision tree: which fits your team

Three questions in order. Answer them honestly and you'll have your shortlist of one.

1. Does Finance need R&D capitalization or board-level allocation reports?

If yes → Jellyfish. No other platform in the category will satisfy a CFO without heavy custom work. If no, continue.

2. Does your team run DORA reviews today, in a real meeting cadence?

If yes → LinearB. The vocabulary match alone saves three weeks of onboarding. If you also want gitStream-style PR automation, this is a near-automatic pick. If no, continue.

3. Is your day-to-day operating system Jira + Bitbucket + Confluence, and is procurement easier inside the Atlassian ecosystem?

If yes → Pluralsight Flow via the Atlassian Marketplace path. The friction savings are real.

If you answered "no" to all three, or if you answered "yes" to any of them but on-prem deployment is also a hard requirement, none of the Big Three are a clean fit. That's the alternative-tier conversation, which we walk through in our top 15 engineering intelligence platforms 2026 market overview.
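The three questions plus the on-prem override can be written down as a short function. This is only a sketch of the decision tree in this article: the `TeamProfile` fields and the "alternative tier" label are our own naming, and real evaluations have more inputs than four booleans.

```python
from dataclasses import dataclass


@dataclass
class TeamProfile:
    needs_rd_capitalization: bool    # question 1: Finance needs capitalization/allocation
    runs_dora_reviews: bool          # question 2: DORA reviews in a real meeting cadence
    atlassian_centric: bool          # question 3: Jira + Bitbucket + Confluence shop
    requires_on_prem: bool = False   # hard requirement that overrides any "yes"


def shortlist(team: TeamProfile) -> str:
    """Walk the questions in order and return a shortlist of one."""
    if team.requires_on_prem:
        return "alternative tier"    # none of the Big Three deploy on-prem
    if team.needs_rd_capitalization:
        return "Jellyfish"
    if team.runs_dora_reviews:
        return "LinearB"
    if team.atlassian_centric:
        return "Pluralsight Flow"
    return "alternative tier"        # "no" to all three


print(shortlist(TeamProfile(False, True, True)))                         # LinearB
print(shortlist(TeamProfile(True, False, False, requires_on_prem=True))) # alternative tier
```

Order matters: a team that answers "yes" to both question 2 and question 3 lands on LinearB, because the questions are asked in sequence.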

Migration costs (if you switch)

Switching EI platforms is more painful than buyers expect. From migrations we've supported, the cost shape repeats:

| Cost category | Typical effort | Notes |
|---|---|---|
| Historical data export | 1-3 weeks | Most platforms export PR/cycle-time CSV but not custom dashboards |
| Integration remapping | 2-4 weeks | Repository connections, tracker projects, identity merge |
| Custom dashboard rebuild | 1-4 weeks | The longest tail. Dashboards rarely port 1:1 |
| Stakeholder retraining | Ongoing | Especially EM-led teams that built habits on old UI |
| Parallel-run period | 30-60 days | Comparing old and new before cutover |

The honest take: if a platform you already use is delivering signal your team acts on, switching for a 15% metric improvement isn't worth the migration tax. Switch when the existing platform is failing a hard requirement (pricing, on-prem, IDE telemetry, finance reporting), not when it's merely "below the new shiny option."

The IDE-data gap (and the alternative tier)

We track IDE heartbeat data across 100+ B2B companies. At PanDev Metrics, every heartbeat ping records editor, language, project, and timestamp at roughly 1-2 minute resolution. Across our dataset, median active coding time is around 78 minutes per workday. None of Flow, Jellyfish, or LinearB can measure this. The data simply isn't in their pipeline.
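For intuition on how heartbeat pings become "active coding time", here is a minimal sketch of the usual gap-summing approach. It is an illustration, not our production pipeline: the 5-minute `idle_timeout` is an assumed threshold, and the function name is hypothetical.

```python
from datetime import datetime, timedelta


def active_coding_minutes(heartbeats, idle_timeout=timedelta(minutes=5)):
    """Sum the gaps between consecutive heartbeats, ignoring idle gaps.

    A gap longer than idle_timeout is treated as time away from the editor
    and contributes nothing. The 5-minute default is an assumption.
    """
    ordered = sorted(heartbeats)
    active = timedelta()
    for prev, curr in zip(ordered, ordered[1:]):
        gap = curr - prev
        if gap <= idle_timeout:
            active += gap
    return active.total_seconds() / 60


# Made-up session: a short burst, a 34-minute break, then another burst.
start = datetime(2026, 3, 2, 9, 0)
pings = [start + timedelta(minutes=m) for m in (0, 2, 4, 6, 40, 41, 43)]
print(active_coding_minutes(pings))  # 9.0
```

A Git-only pipeline would see this session as, at most, one commit timestamp. The 34-minute gap and the two bursts around it are invisible without editor events.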

That gap matters more in 2026 than it did in 2022. The 2024 Stack Overflow Developer Survey reported that 76% of respondents use or plan to use AI tools in their development process. A Git-only EI platform sees a 200-line PR; it cannot tell whether that PR took 4 hours of human typing or 20 minutes of human review of Copilot output. Editor telemetry can.

PanDev Metrics is also the only platform in this comparison set that ships production-grade on-prem Docker and Kubernetes deployment. For regulated buyers (fintech, telecom, government), that's not a feature, it's a procurement gate. We sit in the "alternative tier" intentionally: lower price point ($5-15K/yr typical for 50-developer teams), narrower enterprise plumbing than Jellyfish, but a structurally different data foundation. See the head-to-heads: PanDev vs Pluralsight Flow, PanDev vs Jellyfish, and PanDev vs LinearB.

Honest limit: pricing data in this article is customer-reported from G2 and TrustRadius. None of the three vendors publish list prices. Treat the numbers as directional. We've validated ranges with a sample of buyers, but a single procurement cycle can move a number 30% in either direction depending on commit length and seat count.
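If you want to sanity-check a quote against the per-seat ranges in this article, the arithmetic is simple enough to script. The sketch below is a rough estimator under stated assumptions: it multiplies seats by a monthly per-seat range, annualizes, and widens the band by the 30% swing mentioned above. The function name and parameters are ours, and the example uses the customer-reported $25-50/user/month range for Flow.

```python
def annual_cost_range(seats, per_seat_monthly_low, per_seat_monthly_high,
                      uncertainty=0.30):
    """Annualize a per-seat monthly price range and widen it by +/- uncertainty.

    Returns whole-dollar (low, high) tuples. uncertainty=0.30 mirrors the
    "30% in either direction" procurement swing; it is not a vendor figure.
    """
    base_low = seats * per_seat_monthly_low * 12
    base_high = seats * per_seat_monthly_high * 12
    return {
        "base": (base_low, base_high),
        "with_uncertainty": (
            round(base_low * (1 - uncertainty)),
            round(base_high * (1 + uncertainty)),
        ),
    }


# 50 developers at a customer-reported $25-50/user/month
print(annual_cost_range(50, 25, 50))
# {'base': (15000, 30000), 'with_uncertainty': (10500, 39000)}
```

The width of that band is the point: a quote anywhere inside it is plausible, so negotiate on commit length and seat count rather than anchoring on a single number.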

FAQ

What's the difference between Pluralsight Flow and Jellyfish?

Flow optimizes for engineering managers in Atlassian-heavy shops who want manager-level cycle-time visibility. Jellyfish optimizes for CFOs and engineering operations leaders who need R&D capitalization, OKR alignment, and finance-grade reporting. Flow's natural buyer is a VP Engineering; Jellyfish's is a CFO co-signing with the CTO. The product surface barely overlaps once you look past the homepage marketing.

Is LinearB better than Pluralsight Flow?

For a DevOps-mature team that runs DORA reviews and wants PR-automation features, LinearB is structurally a better fit than Flow. For an Atlassian-loyal shop with low DORA literacy that wants the easiest manager UI, Flow remains competitive. "Better" depends on which axis you're measuring on. There's no universal answer.

Which is best for DORA metrics: Flow, Jellyfish, or LinearB?

LinearB. They built the platform around DORA as the primary measurement model. Flow ships DORA as a secondary feature next to its older cycle-time charts. Jellyfish ships DORA but the company's center of gravity is capitalization and allocation, not DevOps performance.

Do any of them support on-prem deployment?

No. None of Pluralsight Flow, Jellyfish, or LinearB offer production-grade on-prem in 2026. All three are cloud-only SaaS. Regulated buyers (fintech, telecom, government, defense) consistently report this as their primary disqualification. The on-prem alternative tier exists but lives outside the Big Three; PanDev Metrics is the main contender there with Docker and Kubernetes packages.

Which has the best AI features in 2026?

LinearB ships the most AI surface today: gitStream rules can incorporate AI-driven reviewer suggestions, and they've published roadmap items for AI-assisted DORA insights. Jellyfish is heavier on natural-language querying of their existing data. Flow's AI roadmap is the thinnest of the three, post-Appfire. None of the three can detect AI-assisted commits at the IDE level. That requires editor telemetry, which is the open ground the alternative tier (including PanDev Metrics) has moved into.

The sharpest claim, restated

The Big Three Engineering Intelligence platforms aren't actually competing with each other. They're competing for different buyers: the EM who lives in Jira (Flow), the CFO who needs capitalization (Jellyfish), the DevOps lead who runs DORA reviews (LinearB). The lazy "best EI platform 2026" article ranks them on a single axis and is wrong by construction.

Pick the corner of the triangle your organization actually lives in. If your corner is "regulated industry, IDE-level visibility, on-prem deployment", none of the three are home. That's not a failure of evaluation; that's a signal to look one tier down.