Principal Engineer: How to Measure Your Real Impact

A principal engineer at a 200-person fintech spent Q3 writing 180 lines of code. Her team shipped 340,000 lines in the same period. When her CTO pulled up coding-time dashboards for her performance review, she was nearly flagged as underperforming. What actually happened in Q3: she rewrote the payment reconciliation spec that unblocked two teams, mentored three senior engineers into tech-lead roles, and killed a six-month project that would have shipped something the market didn't want. Her measurable output was tiny. Her impact was the largest of any engineer in the company that quarter.

This is the principal engineer measurement paradox. Every staff-plus framework (Will Larson's Staff Engineer, Tanya Reilly's The Staff Engineer's Path, the Google internal engineering ladder) acknowledges it: principal engineers are paid for judgment and force multiplication, not throughput. But most engineering orgs measure them like senior engineers with a bigger title. This article covers how to measure principal impact honestly, and how principals should measure their own impact before the review conversation comes.

{/* truncate */}

What principal engineers really do

The confusion starts with the job description. "Principal" usually means one of three very different roles:

Archetype 1 — Deep specialist. The domain expert. Distributed systems principal who owns consensus code. Database principal who owns query optimization. Impact is measured in correctness and scale: the systems they own don't fail in ways that matter.

Archetype 2 — Tech lead at scale. The cross-team force multiplier. Sets architecture for 5-10 teams, reviews the hardest PRs, writes RFCs that unblock initiatives. Impact is measured in what those teams ship, not in personal commits.

Archetype 3 — Solver. The person handed the hardest problems. Rotates across teams, lands on the critical fire, writes the 800 lines that unblock 80,000. Impact is measured in avoided disasters.

Tanya Reilly's 2022 book The Staff Engineer's Path (based on interviews with 50+ principal and staff engineers at companies from startups to Google) identified these three archetypes explicitly and noted that measuring a principal by metrics that belong to a different archetype always distorts the signal. A specialist measured on throughput looks bad. A solver measured on retention looks bad. A tech lead at scale measured on individual code volume looks bad. Every time.

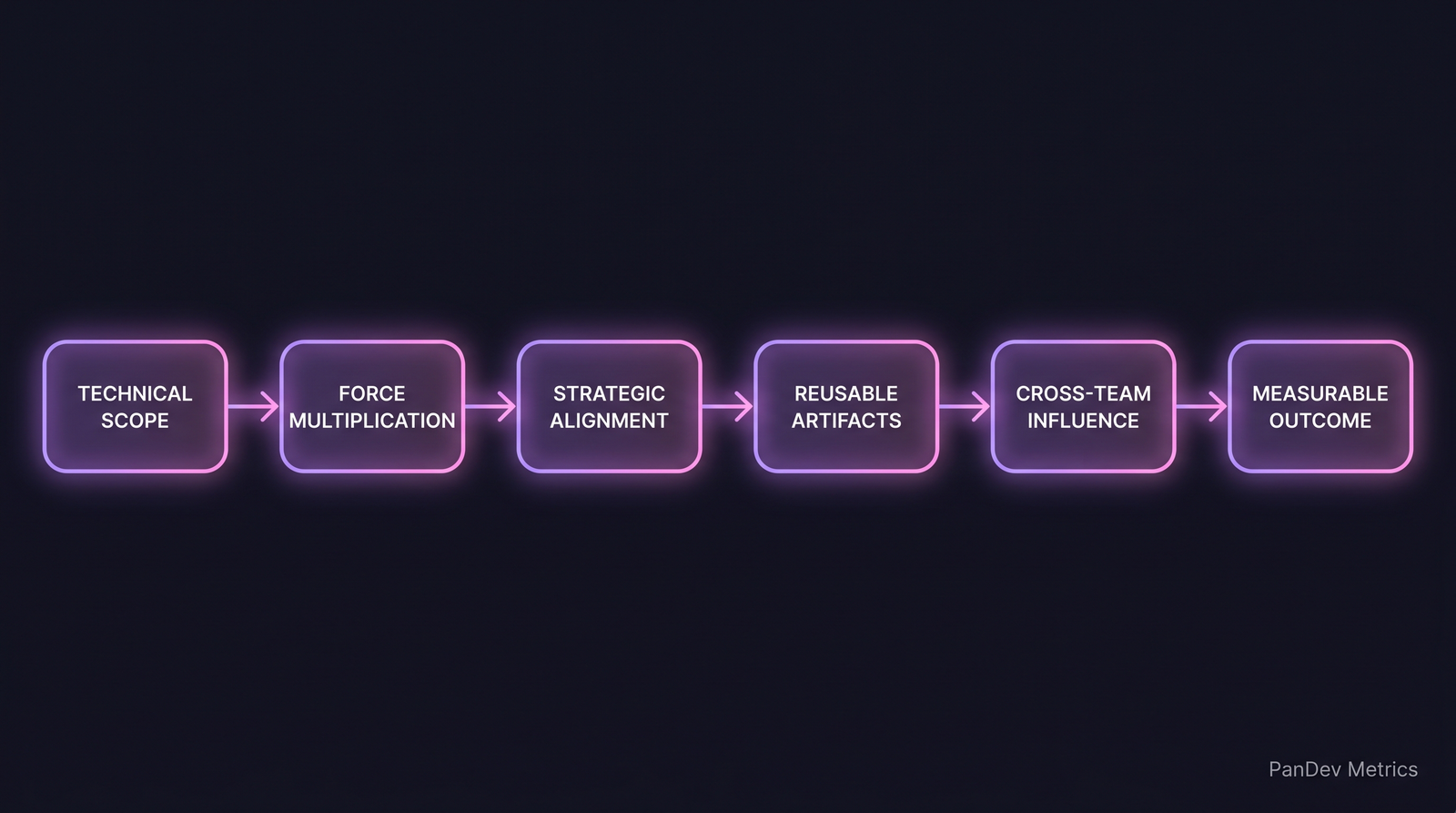

The principal impact chain. Notice that "measurable outcome" is the last step, not the first. Most impact metrics try to measure it directly and end up measuring activity instead.

The 5 questions a principal should be asking

1. Whose work did I unblock this quarter?

The clearest single signal. A principal who can't name the 3-5 people or teams whose work they unblocked is either invisible or genuinely not multiplying force. Ask yourself this monthly. Keep a list.

Microsoft Research's 2023 survey of 4,000+ senior engineers found that force-multiplication (measured through peer-review of unblocking contribution) correlated better with promotion-to-principal decisions than any code-based metric. It's the signal that actually moves careers in the upper band.

2. What decisions did I make that the organization will still be living with in 2 years?

Principal engineers make architectural decisions that outlive their own tenure at the company. Track them explicitly: a principal who made zero 2-year-durable decisions this year is doing senior-engineer work, not principal work.

Examples of durable decisions:

- Database choice for a new product line

- API shape for a platform that's now used by 4 teams

- Sunsetting a service that was costing $80k/year

- RFC that shaped on-call philosophy
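
One lightweight way to track this explicitly is a personal decision log. The sketch below is only an illustration: the `Decision` fields, the `durable_decisions` helper, and the sample entries (including their dates and lifetime estimates) are hypothetical, not a format any of the frameworks cited here prescribes. It counts the decisions from a given year that you expect the org to still be living with two years on.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Decision:
    """One entry in a personal decision log (hypothetical schema)."""
    title: str
    decided_on: date
    expected_lifetime_years: float  # your own estimate, revisited at review time


def durable_decisions(log, year, min_years=2.0):
    """Return decisions made in `year` that are expected to last at least `min_years`."""
    return [
        d for d in log
        if d.decided_on.year == year and d.expected_lifetime_years >= min_years
    ]


# Made-up sample entries, loosely modelled on the examples above.
log = [
    Decision("Database choice for a new product line", date(2024, 2, 12), 5.0),
    Decision("Renamed internal CLI flags", date(2024, 3, 3), 0.5),
    Decision("Sunset a service costing $80k/year", date(2024, 9, 20), 3.0),
]

durable = durable_decisions(log, 2024)
for d in durable:
    print(f"{d.decided_on.isoformat()}  {d.title}")
```

An empty result for the year is the signal described above: the work may have been valuable, but it was probably senior-engineer work. The lifetime estimate is a judgment call, so it is worth having a peer sanity-check the entries before the review.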

3. How many people did I make meaningfully better at their job?

Not "reports" (principals usually don't have them). Mentees, collaborators, code-review students. If three senior engineers point at you and say "she taught me how to think about distributed transactions", that's measurable impact on org capability.

Will Larson's Staff Engineer frames this as "developing other engineers" and notes it's the hardest kind of impact to quantify in a review — which is why principals who neglect it get promoted slower and fired faster during reorgs.

4. What did I stop from happening?

Negative impact is real impact. Killed projects that shouldn't have shipped. Architectures that didn't get built. Acquisitions that weren't done. These are invisible on every dashboard.

One principal we interviewed during product research phrased it sharply: "My biggest Q2 contribution was killing a six-month migration that would have cost us $400k and delivered nothing. There's no Jira ticket for that." The impact is real. The measurement challenge is that it requires peer testimony or explicit tracking.

5. What would break if I left tomorrow?

A principal whose departure would barely inconvenience the org is either not in the right role or is over-abstracting their work. The healthy answer isn't "everything breaks" (bus factor = 1, you've failed at force multiplication) — it's "three specific initiatives lose their best advocate and would ship 6-8 weeks later".

The metrics principal engineers should track for themselves

This is the practical toolkit. Track these quarterly, not to put on a dashboard, but to have data when the review conversation happens.

| Metric | What it measures | Healthy range |
|---|---|---|
| Cross-team RFC contributions (as author or primary reviewer) | Architectural influence | 3-8 per quarter |
| Engineers you've explicitly mentored | Force multiplication | 3-5 ongoing |
| Code review comments on PRs you didn't author | Technical leadership | 20-40% of your code activity |
| Projects killed or descoped on your recommendation | Judgment value | 1-3 per year |
| Junior/mid engineers promoted after your direct mentorship | Org capability growth | 2-4 per year |
| External visibility (conference talks, open-source commits, written artifacts) | Signal to market | 1-3 per year |

A principal who only scores high on personal coding-time metrics but low on all six of these is doing senior-engineer work with a bigger title. That's a real problem for both the engineer (stalled career) and the company (overpaying for throughput).
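
If you keep these numbers in a notes file or spreadsheet, a few lines of Python can turn them into a quick self-check. This is a minimal sketch under stated assumptions: the metric key names, the `score` and `looks_like_senior_work` helpers, and the sample quarter are all hypothetical, and the ranges are simply copied from the table above. It labels each metric as low, healthy, or high, and flags the "low on all six" pattern.

```python
# Healthy ranges copied from the table above, as inclusive (low, high) pairs.
# The key names are hypothetical; rename them to match your own notes.
HEALTHY_RANGES = {
    "rfc_contributions_per_quarter": (3, 8),
    "engineers_mentored": (3, 5),
    "review_share_pct": (20, 40),
    "projects_killed_per_year": (1, 3),
    "mentees_promoted_per_year": (2, 4),
    "external_artifacts_per_year": (1, 3),
}


def score(metrics):
    """Label each metric 'low', 'healthy', or 'high' against its range."""
    result = {}
    for name, (low, high) in HEALTHY_RANGES.items():
        value = metrics[name]
        if value < low:
            result[name] = "low"
        elif value > high:
            result[name] = "high"
        else:
            result[name] = "healthy"
    return result


def looks_like_senior_work(metrics):
    """True when all six metrics fall below their healthy range."""
    return all(label == "low" for label in score(metrics).values())


# Made-up numbers for one principal's self-review.
quarter = {
    "rfc_contributions_per_quarter": 4,
    "engineers_mentored": 2,
    "review_share_pct": 35,
    "projects_killed_per_year": 1,
    "mentees_promoted_per_year": 0,
    "external_artifacts_per_year": 2,
}

report = score(quarter)
for name, label in report.items():
    print(f"{name}: {label}")
print("senior-work pattern:", looks_like_senior_work(quarter))
```

Treat the labels as conversation starters rather than verdicts. A "high" is not automatically good (eight mentees is probably too many to mentor well), and the ranges will need adjusting for your archetype.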

What PanDev Metrics can and can't measure

Our IDE heartbeat data captures coding activity across an engineer's workstation — how much time in which codebase, which languages, which files. For a principal, this is misleading as a primary signal and we say so explicitly. A principal with low coding-hours but high code-review signal and high cross-team commit-touches is probably force-multiplying well. A principal with high coding-hours and low cross-team activity may be doing senior work that should be delegated.

Where our data does help principals: detecting when they're being pulled into tactical work that shouldn't be theirs. If a principal's IDE time on any single project exceeds 60% for a full quarter, they've effectively been reduced to a senior engineer on that project. That pattern is visible and fixable.
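
The 60% rule is simple enough to check by hand. The sketch below assumes you can export a quarter of IDE hours per project as a plain mapping; the `dominant_project` function, the input shape, and the sample numbers are assumptions for illustration, not the PanDev Metrics API.

```python
def dominant_project(hours_by_project, threshold=0.60):
    """Return (project, share) if one project exceeds `threshold` of total
    IDE time for the quarter, otherwise None."""
    total = sum(hours_by_project.values())
    if total <= 0:
        return None  # no recorded IDE time, nothing to flag
    project, hours = max(hours_by_project.items(), key=lambda kv: kv[1])
    share = hours / total
    return (project, share) if share > threshold else None


# Made-up quarterly IDE hours per project for one principal.
q3_hours = {"payments-recon": 130, "platform-api": 40, "infra": 30}

flag = dominant_project(q3_hours)
if flag is not None:
    project, share = flag
    print(f"{share:.0%} of IDE time went to {project}: check the allocation")
```

A flag here is a prompt for a conversation with the principal's manager, not proof of a problem: a solver dropped onto a critical fire may legitimately spend a quarter in one codebase.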

Where our data doesn't help: measuring decisions, killed projects, mentorship depth, external influence. Those require peer signals (360s, structured testimony, documented artifacts). We're honest about this — force multiplication is hard to measure from telemetry alone, and any vendor claiming to solve it in one metric is selling you a story.

Red flags for principals (self-diagnostic)

- You're the only one who understands a critical system. You've created a bus factor, not built force multiplication. Document and delegate before the review.

- Your quarter's highlights are all personal shipping. You're doing senior-engineer work. Ask your manager why the force-multiplication opportunities aren't landing on your desk.

- Nobody is reaching out to you for architecture review. Either you're not visible enough, or the organization has routed around you. Both need a conversation.

- Your mentees are stagnating. If three engineers you've mentored haven't grown in a year, the mentorship isn't working — time to change approach or mentees.

Red flags for managers of principals

- You can't name three things your principal accomplished last quarter without looking at their commits. You're not seeing their real work.

- Your principal is in standup with a team every morning. They're being used as a senior engineer. Fix the allocation.

- Reviewers can't agree on what your principal is good at. Role clarity failure. Define the archetype explicitly.

The contrarian claim

Principal engineers are the most mis-measured role in engineering orgs. The bias is structural: throughput is easy to measure, judgment is hard. Every org that measures principals on the same dashboards as senior engineers is systematically underrating the force-multipliers and overrating the high-output specialists. Over 3-5 years, this compounds into promoting the wrong people into staff+ roles and losing the right ones.

Honest limit: we don't have data on what "fixes" this. The teams we see getting principal impact right use 360-style peer testimony, structured quarterly artifact reviews, and explicit archetype assignment in hiring. Those are process fixes, not metric fixes. Telemetry can flag "this principal is being used wrong" but can't alone measure "this principal is doing it right".

Related reading

- Performance Reviews Based on Data: Templates and Anti-Patterns

- The 10x Developer: What the Data Actually Shows

- VP of Engineering First 90 Days: A Data-Driven Playbook

If you're a principal engineer and your performance review reads like a senior engineer's review with a bigger number, something is wrong with how your impact is being seen.