PropTech Development Velocity: Real Estate SaaS Engineering

A PropTech team I worked with last year ships 4.2 deploys per week across their flagship product. Their CEO benchmarks that against a reference SaaS portfolio and concludes velocity is "mediocre." It's not. A fintech of similar headcount ships 7.1; a pure B2B SaaS ships 9.4. PropTech lives at the intersection of regulated data, geospatial complexity, and 1990s MLS integrations — the raw deploy-frequency number hides what engineering is actually fighting.

Stack Overflow's 2024 Developer Survey places real-estate software in the bottom third of all industries for reported build and integration-testing speed. Microsoft Research's 2024 DevEx benchmarks show regulated industries losing an average 23% of engineering throughput to compliance friction alone. PropTech layers geospatial complexity on top of that.

{/* truncate */}

Why PropTech engineering is different

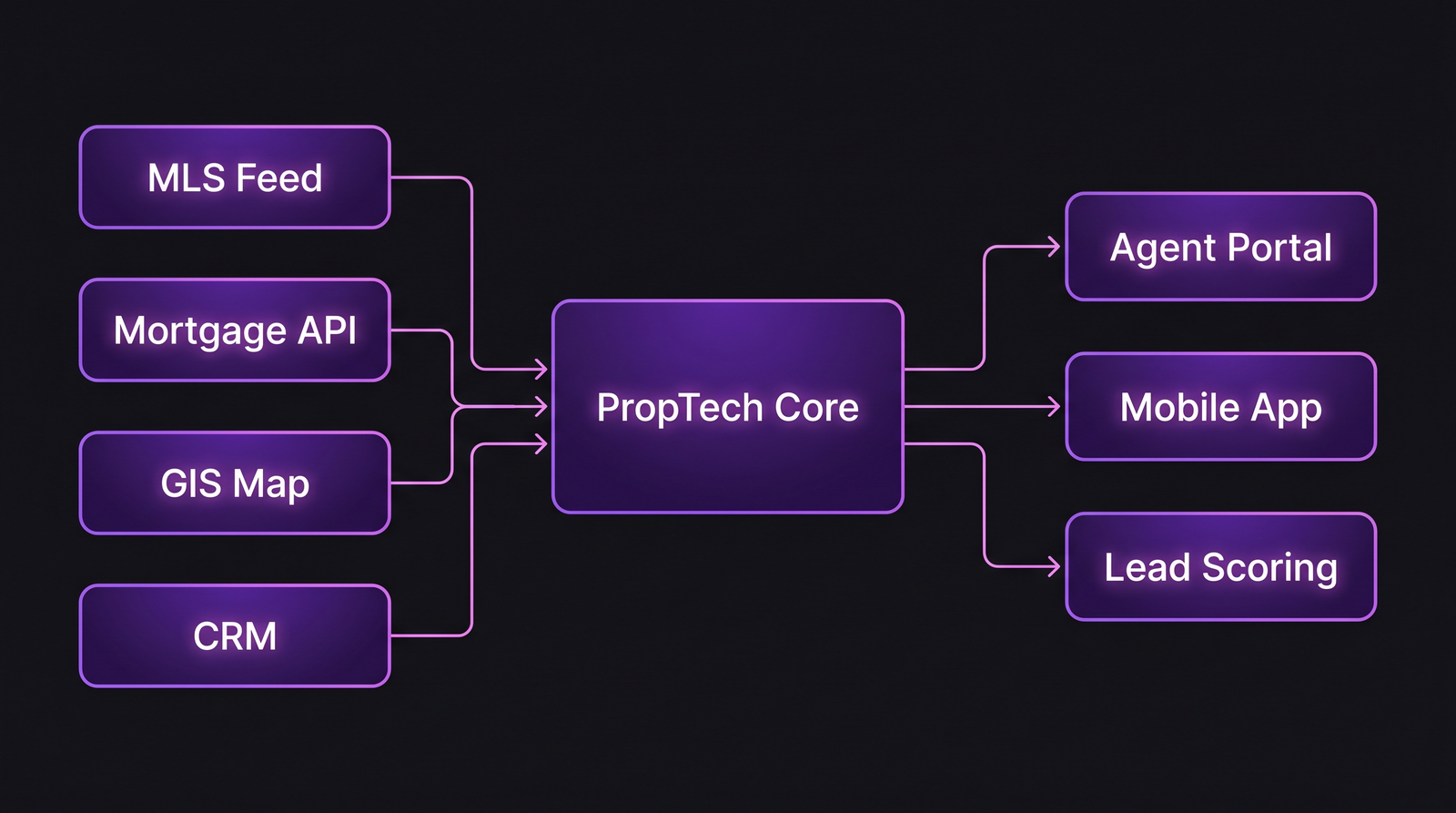

Real-estate software touches ten data sources that no other industry combines in one product, including MLS feeds with their own XML dialects, mortgage-rate APIs, GIS map tiles, county tax databases, CRMs, e-signature vendors, and often a 20-year-old on-prem system at a brokerage. Each integration has its own SLA, its own compliance posture, and its own "contact your partner manager" update cadence.

| Constraint | What it requires | Where it hits engineering |
|---|---|---|
| RESO Data Dictionary compliance | Normalize MLS fields across 600+ US MLSes | Each new market is weeks of schema mapping |
| Fair Housing / disparate-impact | Audit ML ranking, personalization features | Model governance, extra QA gates |
| State-level e-sign laws | Notarization workflows differ by state | Feature flags per jurisdiction |
| Financial / mortgage data (GLBA) | Secure pipeline for borrower PII | Separate ingestion, encryption, data-loc constraints |
| Geospatial accuracy (plat boundaries) | Map layers per county recorder | Tile rendering, cache invalidation complexity |
| Photo / 3D-tour storage | Multi-GB asset serving per listing | CDN cost, pre-signed URLs, copyright checks |

Add a mobile app, an agent portal, a consumer portal, and an internal CMS — which is the typical PropTech product surface — and a single user flow crosses five of the above constraints.

The metrics that matter in PropTech

1. Integration test pass rate — the real velocity proxy

Unit test pass rate tells you the code you wrote works. Integration test pass rate tells you your 14 external dependencies still exist in the shape you expected.

Target benchmark: ≥ 92% passing on main branch, measured daily. PropTech teams below 85% consistently miss sprint commitments because flaky MLS tests get re-run until they pass, which masks real regressions.

Why self-report fails for this: developers routinely mark flakes as "known issue" and filter them out. The real pass rate is what the nightly full-suite shows, not what engineers see in PR checks.
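
To make the gap concrete, here is a minimal sketch that scores the same nightly run twice: once as recorded, once with "known issue" tests filtered out. The `SuiteResult` type, the `known_flake` tag, and the test names are hypothetical; a real CI system will expose results in its own format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuiteResult:
    name: str
    passed: bool
    known_flake: bool = False  # hypothetical "known issue" tag applied by engineers


def pass_rate(results: list[SuiteResult], exclude_known_flakes: bool = False) -> float:
    """Fraction of passing tests, optionally hiding tests tagged as known flakes."""
    considered = [r for r in results if not (exclude_known_flakes and r.known_flake)]
    if not considered:
        raise ValueError("no test results to score")
    return sum(r.passed for r in considered) / len(considered)


# Illustrative nightly run (made-up data).
nightly = [
    SuiteResult("mls_feed_parse", True),
    SuiteResult("mls_photo_sync", False, known_flake=True),
    SuiteResult("tax_lookup", True),
    SuiteResult("esign_callback", False, known_flake=True),
    SuiteResult("geo_tile_fetch", True),
]

real_rate = pass_rate(nightly)                                 # 3 of 5 tests
filtered_rate = pass_rate(nightly, exclude_known_flakes=True)  # 3 of 3 tests
print(f"nightly: {real_rate:.0%}, as seen after filtering: {filtered_rate:.0%}")
```

In this made-up run the filtered view reports a clean suite while the nightly number sits far below the 92% target, which is the distortion described above.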

2. Feature lead time per jurisdiction

A feature isn't done when it merges. It's done when it's enabled in every market where it was promised. A PropTech team measuring merge-to-deploy gets a rosy number. Measuring merge-to-enabled-in-all-promised-markets often adds 2-6 weeks per feature.

Target: measure both. The gap between them is a legal / ops tax, not a code tax.
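
A small sketch of measuring both numbers from timestamps, assuming you can record when a feature merged, when it deployed, and when it was switched on in each promised market. The function name, market codes, and dates are illustrative only.

```python
from datetime import datetime, timedelta
from typing import Optional


def lead_times(
    merged_at: datetime,
    deployed_at: datetime,
    enabled_at: dict[str, datetime],
    promised_markets: set[str],
) -> tuple[timedelta, Optional[timedelta]]:
    """Return (merge-to-deploy, merge-to-enabled-in-all-promised-markets).

    The second value is None while any promised market is still not enabled.
    """
    if not promised_markets:
        raise ValueError("promised_markets must not be empty")
    merge_to_deploy = deployed_at - merged_at
    if not promised_markets.issubset(enabled_at):
        return merge_to_deploy, None
    last_enabled = max(enabled_at[m] for m in promised_markets)
    return merge_to_deploy, last_enabled - merged_at


# Illustrative feature: deployed in a day, fully enabled four weeks after merge.
to_deploy, to_enabled = lead_times(
    merged_at=datetime(2025, 3, 1),
    deployed_at=datetime(2025, 3, 2),
    enabled_at={"TX": datetime(2025, 3, 5), "CA": datetime(2025, 3, 29)},
    promised_markets={"TX", "CA"},
)
gap = to_enabled - to_deploy  # the legal / ops tax for this feature
print(to_deploy.days, to_enabled.days, gap.days)
```

Returning `None` for a feature that is not yet live everywhere keeps unfinished rollouts from silently flattering the average.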

3. Map render p99

If your product has a map, the map is the product. A p99 render time above 1.8 seconds predicts user drop-off more reliably than any other perf metric.

Target: < 1.2 s p99 on the primary map surface.
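
For reference, a sketch of how a p99 can be computed from raw render timings. It uses the nearest-rank definition of a percentile, which is an assumption here; monitoring tools often interpolate or estimate from histograms, so their numbers may differ slightly. The sample data is made up.

```python
import math


def percentile(samples: list[float], pct: int) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


# Illustrative render times in seconds: most maps are fast, a few are very slow.
render_times = [0.9] * 98 + [2.5] * 2

mean = sum(render_times) / len(render_times)
p50 = percentile(render_times, 50)
p99 = percentile(render_times, 99)
print(f"mean={mean:.3f}s p50={p50}s p99={p99}s target_met={p99 < 1.2}")
```

The mean and median both look healthy in this example while the p99 misses the 1.2 s target, which is why the tail is the number to track.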

4. MLS sync lag

The time between a listing changing at the source MLS and the change appearing in your system. Agents notice a 2-hour lag. Consumers notice a 30-minute lag. The industry average is 90 minutes; top teams sit at 15-30 minutes.

Target: < 30 min p95 for hot markets, < 2 hours p95 for tail markets.
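
A sketch of checking observed lag against these tiered targets. The tier names `hot` and `tail` mirror the targets above; the nearest-rank p95 and the sample lags are assumptions for illustration.

```python
import math
from datetime import timedelta

# Targets taken from the text above, keyed by market tier.
TARGET_P95 = {"hot": timedelta(minutes=30), "tail": timedelta(hours=2)}


def p95(lags: list[timedelta]) -> timedelta:
    """Nearest-rank 95th percentile of observed sync lags."""
    if not lags:
        raise ValueError("no lag samples")
    ordered = sorted(lags)
    return ordered[math.ceil(95 * len(ordered) / 100) - 1]


def meets_target(tier: str, lags: list[timedelta]) -> bool:
    return p95(lags) < TARGET_P95[tier]


# Illustrative day: 18 listing updates synced in 12 minutes, 2 took 45 minutes.
observed = [timedelta(minutes=12)] * 18 + [timedelta(minutes=45)] * 2

print(p95(observed), meets_target("hot", observed), meets_target("tail", observed))
```

The same lag profile passes as a tail market and fails as a hot one, so the tier assignment matters as much as the measurement.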

5. Photo-pipeline throughput

How many new listing photo-sets per hour your pipeline processes. During spring inventory surge in North America, this metric spikes 4-6x. Teams that don't capacity-plan for this discover it as an incident, not a roadmap item.
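
The capacity arithmetic is simple enough to sketch. The baseline and capacity figures below are invented; the 4-6x multipliers come from the text, and the 2-hour backlog threshold is the alerting rule suggested later in this guide.

```python
from typing import Optional


def surge_headroom(baseline_per_hour: int, capacity_per_hour: int, multiplier: int) -> int:
    """Spare photo-sets per hour during a surge; negative means the backlog grows."""
    return capacity_per_hour - baseline_per_hour * multiplier


def hours_until_backlog_alert(
    capacity_per_hour: int, inbound_per_hour: int, threshold_hours: float = 2.0
) -> Optional[float]:
    """Hours until the queue holds `threshold_hours` of inbound volume, or None if it never does."""
    deficit = inbound_per_hour - capacity_per_hour
    if deficit <= 0:
        return None
    return threshold_hours * inbound_per_hour / deficit


BASELINE = 200   # photo-sets/hour off-season (made-up figure)
CAPACITY = 900   # photo-sets/hour the pipeline can process (made-up figure)

headroom_4x = surge_headroom(BASELINE, CAPACITY, 4)
headroom_6x = surge_headroom(BASELINE, CAPACITY, 6)
alert_in = hours_until_backlog_alert(CAPACITY, BASELINE * 6)
print(headroom_4x, headroom_6x, alert_in)
```

With these numbers the pipeline survives a 4x surge with little room to spare and falls behind at 6x, crossing the backlog threshold within a working day.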

The integration surface a PropTech engineering team actually maintains. Every line is a contract you don't own.

How regulation and scale change measurement

Fair Housing and disparate-impact rules mean any ML feature that ranks listings, agents, or buyers needs an audit log of inputs and outputs going back 2-7 years depending on jurisdiction. That's not a feature you bolt on later; it's a data-architecture decision you make before the first ML experiment.
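
As a rough illustration of what "an audit log of inputs and outputs" could look like, here is a sketch of one append-only record per ranking request. Every field name is hypothetical, and the 2-7 year bound simply mirrors the range quoted above; actual retention requirements are a question for counsel.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class RankingAuditRecord:
    request_id: str
    model_version: str
    inputs: dict              # features the ranker saw (hypothetical shape)
    ranked_listing_ids: list  # output order returned to the user
    recorded_at: str          # ISO 8601 timestamp, UTC
    retention_years: int

    def __post_init__(self) -> None:
        if not 2 <= self.retention_years <= 7:
            raise ValueError("retention_years must be within the 2-7 year range")


def to_log_line(record: RankingAuditRecord) -> str:
    """Serialize to one JSON line with stable key order, suitable for append-only storage."""
    return json.dumps(asdict(record), sort_keys=True)


record = RankingAuditRecord(
    request_id="req-001",
    model_version="ranker-v3",
    inputs={"query": "3 bed", "max_price": 450000},
    ranked_listing_ids=["L-17", "L-04", "L-29"],
    recorded_at=datetime(2025, 1, 15, tzinfo=timezone.utc).isoformat(),
    retention_years=7,
)
line = to_log_line(record)
print(line)
```

Capturing the model version next to inputs and outputs is the design choice that matters most: without it, a later audit cannot tell which model produced a given ranking.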

GLBA changes how you stage data. Anything touching mortgage borrower PII can't live in the same warehouse as listing data without extra controls. A PropTech team that treats all data as one pool will fail the first CISO review from an enterprise brokerage customer.
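
One way to picture the staging split is a routing step at ingestion that separates borrower fields from listing fields before anything reaches the shared warehouse. The field names and the classification set below are hypothetical, and this sketch says nothing about which controls GLBA actually requires.

```python
# Hypothetical classification of fields that count as borrower PII.
BORROWER_PII_FIELDS = frozenset({"borrower_name", "ssn_last4", "annual_income", "loan_amount"})


def split_record(record: dict) -> tuple[dict, dict]:
    """Split an inbound record into (listing_part, restricted_part).

    listing_part can go to the general warehouse; restricted_part is meant
    for a separately controlled store.
    """
    listing = {k: v for k, v in record.items() if k not in BORROWER_PII_FIELDS}
    restricted = {k: v for k, v in record.items() if k in BORROWER_PII_FIELDS}
    return listing, restricted


inbound = {
    "listing_id": "L-17",
    "list_price": 425000,
    "borrower_name": "Jane Doe",
    "loan_amount": 340000,
}
listing_part, restricted_part = split_record(inbound)
print(listing_part)
print(sorted(restricted_part))
```

In practice the restricted part would also need a join key and its own access policy; the sketch only shows that the split happens before storage, not after.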

The practical consequence: a PropTech team needs 25-35% more platform engineering than a comparable B2B SaaS just to maintain the compliance substrate. Headcount benchmarks from LinearB and similar tools don't capture this.

Typical PropTech engineering team profile

| Parameter | Typical range |
|---|---|
| Team size | 15-40 engineers |
| Tech stack | TypeScript frontend, Python/Go/Java backend, PostGIS for geo, Elasticsearch for listing search |
| Deploy cadence | 3-6 deploys/week per service (11-18 services typical) |
| Primary pressure | Data freshness + regulation |
| Toolchain | Multiple MLSes via RESO Web API, Mapbox or alternatives, Plaid or similar for financial data, Twilio/SendGrid for agent comms |
| DORA posture | Lead time 3-8 days; deploy freq 3-6/week; MTTR 2-6h; CFR 10-18% |

DORA's 2024 State of DevOps report shows medians across industries — real estate scored in the "medium" cluster on delivery performance but in the "low" cluster on operational stability. That split is specific to PropTech: deploy speed is fine; what breaks is integrations.

What to track differently from a standard SaaS team

- External-dependency uptime tracking. Treat every MLS and external API as a dependency with its own SLA. A PropTech team without a dependency dashboard is flying blind — when a state's MLS goes down, you need to know before your support team does.

- Jurisdiction-scoped feature flags. Flag-by-state, flag-by-MLS, flag-by-license-type. A generic feature flag system collapses under this; you need hierarchical flags or you end up with hundreds of boolean flags.

- Map rendering as a tracked metric. Separate from API p99. Map tiles, clustering, search-within-bounds are perf domains of their own.

- Photo-pipeline queue depth. Alert-worthy when the backlog exceeds 2 hours of inbound photo volume.
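
To illustrate the hierarchical-flag idea from the list above, here is a minimal resolver in which the most specific matching scope wins. The precedence order (license type over MLS over state), the rule format, and the example codes are all assumptions for illustration, not a description of any particular flag product.

```python
from typing import Optional

# Most specific scope first. This precedence order is an assumption.
PRECEDENCE = ("license_type", "mls", "state")


def resolve_flag(
    rules: dict[tuple[str, str], bool],
    default: bool,
    *,
    state: str,
    mls: Optional[str] = None,
    license_type: Optional[str] = None,
) -> bool:
    """Return the value from the most specific rule that matches, else the default."""
    context = {"state": state, "mls": mls, "license_type": license_type}
    for scope in PRECEDENCE:
        value = context[scope]
        if value is not None and (scope, value) in rules:
            return rules[(scope, value)]
    return default


# One flag, two rules: on for Texas, off for one MLS inside Texas.
remote_notary = {("state", "TX"): True, ("mls", "HAR"): False}

print(resolve_flag(remote_notary, False, state="TX"))             # state rule applies
print(resolve_flag(remote_notary, False, state="TX", mls="HAR"))  # MLS rule overrides state
print(resolve_flag(remote_notary, False, state="CA"))             # falls back to default
```

Two rules cover what would otherwise be a separate boolean per state and per MLS, which is how the flag count stays manageable.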

Common pitfalls

- Treating MLS data as authoritative. It isn't. MLSes have outages, schema changes without notice, and delayed corrections. Your system needs to handle "listing was active yesterday, gone today with no delete event."

- Optimizing for national velocity when your customers are local. A top-10 metro broker cares about their metro's data quality, not your global uptime. Per-market SLOs matter.

- Skipping the data-residency conversation early. Enterprise brokerages ask about data residency in the first security questionnaire. Retrofitting residency after launch is expensive. Teams in EU markets also hit GDPR + local real-estate registries with their own constraints.

- Assuming the agent and consumer surfaces share a roadmap. They don't. Agents live in the product 6+ hours/day. Consumers visit 3 times before closing. Shared code is fine; shared priorities are a trap.
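
The first pitfall, listings that vanish without a delete event, can be caught by reconciling consecutive snapshots. A minimal sketch, assuming you keep the set of active listing IDs per sync and the IDs of explicit delete events; the IDs are made up.

```python
def silently_missing(
    previous_active: set[str], current_active: set[str], deleted_events: set[str]
) -> set[str]:
    """Listings active in the previous sync, absent now, with no delete event to explain it."""
    return previous_active - current_active - deleted_events


yesterday = {"A1", "A2", "A3", "A4"}
today = {"A1", "A4", "A5"}
deletes_received = {"A2"}

suspects = silently_missing(yesterday, today, deletes_received)
print(suspects)
```

What to do with the suspects (hold them as "unverified", re-query the MLS, or expire them after a grace period) is a product decision the sketch leaves open.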

Where PanDev Metrics fits

PropTech engineering leaders already juggle several separate metrics surfaces. PanDev Metrics plugs in as a single view of actual developer time across the services a typical PropTech org runs: listing ingestion, map service, agent portal, consumer app. For teams using GitLab on-prem (common in regulated markets), the on-prem Docker deployment runs alongside internal infra without exporting source data. The IDE heartbeat approach answers the most annoying PropTech-engineering question: "who's actually working on the Q2 MLS expansion vs the consumer-facing redesign?"

The one Git convention that makes all PropTech tracking work: name branches with task IDs (e.g. feature/MLS-2187). Coding time per initiative, lead time per jurisdiction, cost per new market — everything derives from that one naming rule.
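
A sketch of how that naming rule turns into data, assuming task IDs look like the example (uppercase project key, hyphen, digits) and sit at the start of a branch-name path segment. The regex, the `untracked` bucket, and the session data are illustrative and do not describe how PanDev Metrics itself parses branches.

```python
import re
from collections import defaultdict
from typing import Optional

# Assumed ID shape: KEY-123 at the start of a path segment, e.g. feature/MLS-2187.
TASK_ID = re.compile(r"(?:^|/)([A-Z][A-Z0-9]*-\d+)(?=$|[-_/])")


def task_id(branch: str) -> Optional[str]:
    """Extract the task ID from a branch name, or None if the branch has none."""
    match = TASK_ID.search(branch)
    return match.group(1) if match else None


def minutes_per_task(sessions: list[tuple[str, int]]) -> dict[str, int]:
    """Sum coding minutes per task ID; branches without an ID land in 'untracked'."""
    totals: dict[str, int] = defaultdict(int)
    for branch, minutes in sessions:
        totals[task_id(branch) or "untracked"] += minutes
    return dict(totals)


sessions = [
    ("feature/MLS-2187", 90),
    ("feature/MLS-2187-photo-sync", 30),
    ("bugfix/WEB-12", 45),
    ("main", 15),
]
totals = minutes_per_task(sessions)
print(totals)
```

The size of the `untracked` bucket is a useful signal in its own right: it shows how consistently the convention is being followed.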

Related reading

- Engineering Metrics in Fintech: Compliance, Speed, and Security

- DORA Metrics: The Complete Guide for Engineering Leaders (2026)

- E-Commerce: How to Accelerate Feature Delivery Before High Season

This guide's honest constraint: our dataset is B2B-heavy and skews toward English-speaking markets. The patterns above fit the US, Canada, the UK, and Australia well. LATAM PropTech operates under notarial-code systems that change the data model significantly; we don't have strong signal there. If you're building in a market with a different property-registry regime, treat this guide as a starting point, not a map.