Release Management Playbook for Software Teams (2026)

A production release at a 60-engineer SaaS I worked with in 2025 went out at 16:48 on a Friday. The on-call pager fired at 17:22 — a 34-minute latent failure in a feature the release manager had approved "because CI was green." Rollback took 71 minutes because the automation had never been rehearsed with real traffic. Total cost: one customer refund, two engineers' weekends, and a policy change that should've existed from day one.

Release management is the unglamorous half of delivery. DORA's 2024 State of DevOps report ties change failure rate and mean time to restore directly to release discipline — not to engineer talent, not to test coverage. This playbook is the concrete set of rules and rituals that pushed two teams I worked with from monthly pain-releases to daily confident ones.

{/* truncate */}

Why release management is undervalued

Engineering leaders over-index on throughput metrics — deploy frequency, PR count, story points — and under-invest in the operational discipline that makes throughput safe. Google's DORA research has shown since 2019 that the high-performing quartile isn't faster because of heroics; it's faster because the release pathway is rehearsed, instrumented, and boring.

The four release-health metrics to track together:

| Metric | Elite benchmark (DORA 2024) | Low-performer signal | What it catches |

|---|---|---|---|

| Deployment frequency | On-demand / multiple per day | Weekly or less | Integration friction, batch size |

| Lead time for changes | < 1 day | > 1 week | Review + deploy pipeline drag |

| Change failure rate | 0-15% | > 30% | Release discipline, rollback readiness |

| MTTR | < 1 hour | > 24 hours | Observability + runbook maturity |

Tracked alone, any one of these lies. Teams gaming deploy frequency ship bad releases. Teams gaming failure rate ship nothing. You measure all four or you measure none.

The playbook

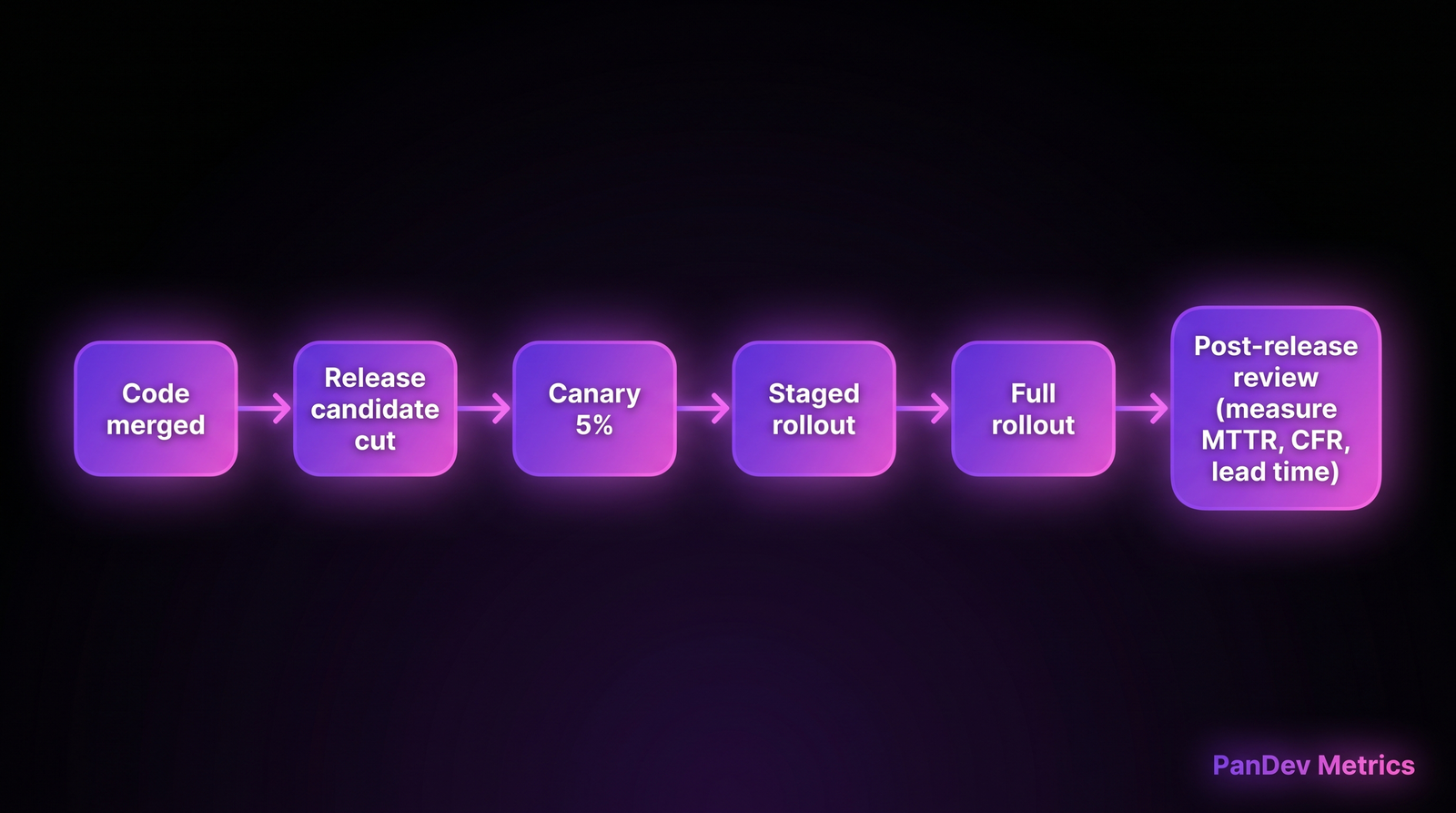

The pipeline most teams already have on paper. The playbook below is the written rules that make each stage actually execute.

The pipeline most teams already have on paper. The playbook below is the written rules that make each stage actually execute.

Step 1 — Release train, not release heroics

Pick a cadence and never skip it. Weekly on Wednesday, or daily at 14:00, or hourly on main — anything except "when it's ready."

The cadence is the commitment device. Teams without one ship when they feel confident; confidence is a lagging indicator of how bad the last release was. A train forces smaller batches, and small batches cut change failure rate by the most reliable mechanism in software: less risk per release.

Concretely, for each release train:

- Cut-off time for merging (e.g. 13:00 for 14:00 deploy) — everything after waits for the next train

- A tagged release candidate (

rc-2026.05.22.1) that runs in pre-prod for ≥ 30 minutes - A named release manager on duty who can abort — not the engineer who wrote the code

Step 2 — Canary by percent, not by prayer

A canary release isn't "we deploy and hope no one notices." It's an explicit routing rule that sends 5% of traffic to the new version for a fixed window, monitored on three signals:

- Error rate delta (new vs old, must not increase > 2%)

- p99 latency delta (must not increase > 10%)

- Business metric delta (checkout success, sign-up completion — must not decrease)

If any of the three flips red, automation halts the rollout. If all three stay green for the window (15-30 min typical), expand to 25% → 50% → 100%.

Most 50-200 engineer orgs don't have this. They have kubectl rollout and a Grafana dashboard someone eyeballs. That's not a canary, that's optimism.

Step 3 — Rollback is a first-class feature

Rollback must be:

- Rehearsed weekly in production (literally: roll forward, then roll back, on purpose)

- Under 10 minutes from decision to green-traffic-restored

- A button, not a PR — any on-call can execute, no approval needed

If your rollback requires writing a reverse migration, you don't have rollback. You have "we rolled forward with a fix, eventually." Migrations should be expand-contract: ship the new schema alongside, let both old and new code read it, remove the old schema after a successful bake window of ≥ 7 days.

Step 4 — The release manager rotation

A named release manager for each release train, rotating weekly. Not the engineer shipping the feature; a different person, accountable for the pipeline not the code. Responsibilities:

- Sign off that pre-prod bake passed

- Monitor canary dashboards during rollout

- Own the rollback decision (without escalation)

- Run the 15-minute post-release review

Rotation means every senior engineer on the team gains release-pathway literacy within a quarter. That literacy is what makes the team resilient when the regular release manager is on vacation.

Step 5 — Post-release review in 15 minutes

After every release train, the release manager writes a three-line note:

- What shipped

- What anomalies appeared (if any)

- What we'd change in the pipeline next time

Fifteen minutes, stored in a dedicated Slack channel or a markdown file in the release repo. Monthly, the team reviews the accumulated notes for patterns. This is how you find the silent process rot — the fact that the last 6 releases all had a "canary alerted but we proceeded" note means the canary thresholds are wrong, not the canary process.

The release checklist (copy-ready)

| Check | Required before cut? | Owner |

|---|---|---|

| All CI checks green (unit + integration + e2e) | Yes | CI |

| Migrations marked expand-contract | Yes | Author |

| Feature flags configured (default-off for risky features) | Yes | Author |

| Release notes drafted (one paragraph minimum) | Yes | Author |

| Release candidate tagged + deployed to pre-prod | Yes | Release manager |

| Pre-prod bake ≥ 30 min with synthetic traffic | Yes | Release manager |

| Rollback procedure verified for this release (button works) | Yes | Release manager |

| Dashboards open (errors, p99, business metrics) | Yes | Release manager |

| On-call engineer aware + in channel | Yes | Release manager |

If any row is "No," the release doesn't go. This isn't bureaucracy — it's the difference between a 71-minute Friday rollback and a 4-minute Tuesday one.

Common mistakes to avoid

- "We'll test this in staging and then production." No. Staging traffic is never production traffic. Canary on real users, with tight percentages and tight thresholds, or you haven't tested it.

- "We'll roll forward with a fix." Sometimes true, mostly cope. Rollback beats roll-forward every time the bug is unclear. Decide roll-forward in < 5 minutes, or roll back.

- "The release manager is whoever is free." This is how release discipline decays. Named, rotated, recognized — or you have no release manager.

- Releasing on Fridays "because we're ready." You're not ready. The people who'd fix the outage are AFK in 2 hours. Ship Monday-Thursday. The cadence saves you from yourself.

- Post-release reviews as optional. They are the flywheel. Without them the team never learns why it shipped 30% change failure rate for two quarters.

Measuring the playbook's effect

Track these four, weekly, and you'll see the playbook working within 8-12 weeks:

- Deployment frequency (is it trending up, at stable or improving failure rate?)

- Change failure rate (should settle in the 5-15% band — zero is suspicious, above 30% means discipline gap)

- MTTR (should trend below 60 min as rollback gets rehearsed)

- Lead time for changes (should trend below 24 hours as batch size shrinks)

PanDev Metrics pulls lead time from Git events (commit → PR → merge → deploy) and pairs it with IDE heartbeat data to show whether teams are actually coding less or reviewing slower. For release discipline, the lead-time stage we watch most is PR-merged → deployed: that number reveals the pipeline health you can't see in CI logs. Our data across 100+ B2B companies: teams with written release playbooks have a median merged-to-deployed time of 4 hours; teams without have 34 hours. That's an 8x delta, and it's the cleanest signal of release discipline we can measure.

The contrarian claim

Release frequency isn't what separates high-performers from low-performers. Rollback readiness is. A team that ships monthly but can roll back any release in 8 minutes outperforms a team that ships daily but takes 3 hours to restore service. DORA's data supports this: MTTR is the metric that moves most when teams invest in release discipline, and MTTR improvements unlock the confidence to ship more often — not the reverse.

If you can't roll back in under 10 minutes, don't try to ship faster. Rehearse rollback first.

Honest limits

This playbook assumes a web/backend service with deployable artifacts and traffic routing. Mobile releases (app-store review cycles), embedded firmware (OTA update mechanics), and on-prem customer installs all have different constraints — the shape of the playbook transfers, the timing doesn't. Our data is heaviest on cloud SaaS B2B; we see less signal on desktop-software release cadences and almost none on embedded.

Related reading

- Change Failure Rate: Why 15% Is Normal and 0% Is Suspicious — the counter-intuitive target for CFR

- MTTR: Why Speed of Recovery Matters More Than Preventing All Outages — why rollback investment pays back faster than prevention

- From Monthly Releases to Daily Deploys: A Practical Roadmap — the staged rollout of the cadence increase itself

- External: DORA State of DevOps 2024 — the benchmark ranges cited above