Remote vs Office Developers: What Thousands of Hours of Real IDE Data Tell Us

According to McKinsey's research on developer productivity, software engineers spend only 25-30% of their time actually writing code. So where developers work should matter far less than how their time is structured. Yet the remote vs. office debate has been running for six years, with CEOs citing "collaboration" and developers citing "focus" — both arguing from conviction, not evidence.

We have thousands of hours of tracked IDE activity across 100+ B2B companies. The data tells a more nuanced story than either side wants to hear.

{/* truncate */}

Why Most Remote Work Studies Are Unreliable

Before presenting our data, let's address why the existing research is so contradictory.

The measurement problem

Most "remote productivity" studies measure one of two things:

| Study type | What they measure | Why it's flawed |
|---|---|---|
| Survey-based | Self-reported productivity perception | People overestimate their own output by 20-40% |
| Output-based (LoC, PRs) | Raw volume metrics | Quantity ≠ quality; gaming is trivial |

Neither approach captures what actually matters: sustained, high-quality coding effort measured objectively, at the individual level, across diverse companies.

The selection bias

Companies that embraced remote work early tend to be tech-forward, well-managed, and already good at async communication. Companies that mandate office presence tend to have different management styles. Comparing their outcomes tells you about management culture, not about where butts sit.

The survivorship problem

Remote developers who couldn't thrive remotely have already returned to offices or left for different roles. The remote population in any study is therefore pre-filtered for people who work well remotely, which makes remote work look better than it would be for the average developer.

Our Data: What IDE Activity Actually Shows

PanDev Metrics collects IDE heartbeat data regardless of where the developer is located. We don't track GPS or location — we track coding activity. This means our data measures the same thing for remote and office developers: active time in the IDE, Focus Time sessions, project switches, and coding patterns.
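To make "heartbeats" and "Focus Time sessions" concrete, here is a minimal sketch of how a stream of heartbeat timestamps could be grouped into sessions. It is not PanDev's actual algorithm, which this article does not describe. The function names and the 5-minute gap threshold are assumptions chosen for illustration.

```python
from datetime import datetime, timedelta

# Assumption: heartbeats no more than 5 minutes apart belong to the same session.
MAX_GAP = timedelta(minutes=5)

def focus_sessions(heartbeats, max_gap=MAX_GAP):
    """Group heartbeat timestamps into (start, end) sessions."""
    sessions = []
    for ts in sorted(heartbeats):
        if sessions and ts - sessions[-1][1] <= max_gap:
            sessions[-1][1] = ts        # extend the current session
        else:
            sessions.append([ts, ts])   # gap too large: start a new session
    return [(start, end) for start, end in sessions]

def session_minutes(sessions):
    """Length of each session in minutes."""
    return [(end - start).total_seconds() / 60 for start, end in sessions]

# Hypothetical heartbeats: one every 2 minutes for 40 minutes,
# a 30-minute pause, then 10 more minutes of activity.
base = datetime(2026, 4, 7, 9, 0)
beats = [base + timedelta(minutes=m) for m in range(0, 41, 2)]
beats += [base + timedelta(minutes=m) for m in range(70, 81, 2)]

sessions = focus_sessions(beats)
minutes = session_minutes(sessions)
print(minutes)  # [40.0, 10.0]
```

The gap threshold matters: a larger value merges short pauses into one long session and inflates session length, so any cross-team comparison needs the same threshold everywhere.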

Here's what we observe across 100+ B2B companies:

Coding time: Similar totals, different distributions

| Metric | Remote-first companies | Office-first companies | Hybrid |
|---|---|---|---|
| Median daily coding time | 82 min | 71 min | 78 min |
| Mean daily coding time | 118 min | 102 min | 111 min |
| Std. deviation | 68 min | 74 min | 71 min |

Remote-first developers show slightly higher median coding time (82 min vs 71 min for office-first). But the difference is modest — 15% higher median, not the 2x-3x difference that remote work advocates sometimes claim.

The more interesting signal is in the standard deviation: office-first companies show a larger spread (74 min vs 68 min), meaning a wider gap between developers who code very little and those who code a lot. One plausible reading is that office environments help some developers (through osmotic learning and easy collaboration) while hindering others (through interruptions and meetings).
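The arithmetic behind these figures is simple enough to check directly. The sketch below uses only the numbers from the table; the helper name `relative_gap` is ours, not a PanDev API.

```python
def relative_gap(a, b):
    """Percent by which a exceeds b."""
    return (a - b) / b * 100

# Values copied from the coding-time table (minutes per day)
median_minutes = {"remote": 82, "office": 71, "hybrid": 78}
std_minutes = {"remote": 68, "office": 74, "hybrid": 71}

gap_min = median_minutes["remote"] - median_minutes["office"]
gap_pct = relative_gap(median_minutes["remote"], median_minutes["office"])
gap_vs_spread = gap_min / std_minutes["office"]

print(f"Remote median is {gap_min} min ({gap_pct:.1f}%) higher")
print(f"Gap relative to office std. deviation: {gap_vs_spread:.2f}")
```

The second ratio is the useful one: an 11-minute gap is small next to a spread of roughly 70 minutes within each group.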

Focus Time: Remote wins clearly

| Focus Time metric | Remote-first | Office-first | Hybrid |
|---|---|---|---|
| Avg. Focus session length | 68 min | 42 min | 53 min |
| Sessions > 90 min (% of all sessions) | 22% | 11% | 16% |
| Longest daily session (avg.) | 94 min | 61 min | 74 min |

This is where remote work shows its strongest advantage. Remote developers achieve Focus Time sessions that are 62% longer on average than office developers. The percentage of deep work sessions (90+ minutes) is double for remote-first companies.
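As a rough illustration of how the Focus Time metrics in the table could be derived from a list of session lengths, consider the sketch below. The session list is hypothetical, and the 90-minute threshold follows the table's "Sessions > 90 min" row.

```python
from statistics import mean

def deep_work_share(session_minutes, threshold=90):
    """Percent of sessions strictly longer than `threshold` minutes."""
    long_sessions = [m for m in session_minutes if m > threshold]
    return 100 * len(long_sessions) / len(session_minutes)

# Hypothetical session lengths (minutes) for one developer over a week
sessions = [25, 40, 95, 60, 120, 30, 45, 15, 70, 100]

avg_length = mean(sessions)          # average session length
share = deep_work_share(sessions)    # share of sessions over 90 minutes
longest = max(sessions)              # longest single session

# The "62% longer" figure, recomputed from the table averages (68 vs 42 min)
longer_pct = (68 - 42) / 42 * 100

print(avg_length, share, longest, round(longer_pct, 1))
```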

The reason is straightforward: offices generate interruptions. Tap-on-the-shoulder questions, overheard conversations, ambient noise, and "got a minute?" requests all fragment focus. Remote developers can close Slack, put on headphones, and disappear into code. Office developers cannot.

Day-of-week patterns: The Tuesday effect persists

Both remote and office developers show Tuesday as the peak coding day, but the pattern differs:

| Day | Remote-first productivity | Office-first productivity |
|---|---|---|
| Monday | Medium-High | Medium (more meetings post-weekend) |
| Tuesday | Peak | Peak |
| Wednesday | High | Medium-High |
| Thursday | Medium-High | Medium (meeting-heavy) |
| Friday | Medium | Low-Medium |

Office-first companies show a steeper decline from Tuesday to Friday, likely due to accumulating meeting overhead through the week. Remote companies maintain more consistent daily productivity.

Late-hour coding: Remote developers work different hours

| Time window | Remote-first activity share | Office-first activity share |
|---|---|---|
| 6–9 AM | 12% | 4% |
| 9 AM–12 PM | 32% | 38% |
| 12–2 PM | 8% | 12% |
| 2–5 PM | 24% | 34% |
| 5–8 PM | 16% | 9% |
| 8 PM–12 AM | 8% | 3% |

Remote developers spread their work across a wider time window. They start earlier, take longer midday breaks, and code more in the evening. Office developers concentrate work in the traditional 9-5 window.
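An activity-share breakdown like the one above can be sketched as a bucketing step over active minutes per hour of day. The window boundaries follow the table; the input format and function names are assumptions made for this example.

```python
from collections import Counter

# (label, start hour inclusive, end hour exclusive), matching the table rows
WINDOWS = [
    ("6-9 AM", 6, 9),
    ("9 AM-12 PM", 9, 12),
    ("12-2 PM", 12, 14),
    ("2-5 PM", 14, 17),
    ("5-8 PM", 17, 20),
    ("8 PM-12 AM", 20, 24),
]

def window_for(hour):
    """Return the table window containing `hour`, or None if outside 6 AM-12 AM."""
    for label, start, end in WINDOWS:
        if start <= hour < end:
            return label
    return None

def activity_share(active_minutes_by_hour):
    """Map {hour of day: active minutes} to {window: percent of total}."""
    totals = Counter()
    for hour, minutes in active_minutes_by_hour.items():
        label = window_for(hour)
        if label is not None:
            totals[label] += minutes
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {label: 0.0 for label, _, _ in WINDOWS}
    return {label: 100 * totals[label] / grand_total for label, _, _ in WINDOWS}

# Hypothetical day for one developer: active minutes keyed by local hour
sample = {7: 30, 10: 60, 11: 30, 13: 20, 15: 40, 18: 20}
shares = activity_share(sample)
print(shares)
```

One design choice worth noting: hours should be in each developer's local time zone, otherwise a distributed team's early-morning coding shows up as someone else's evening.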

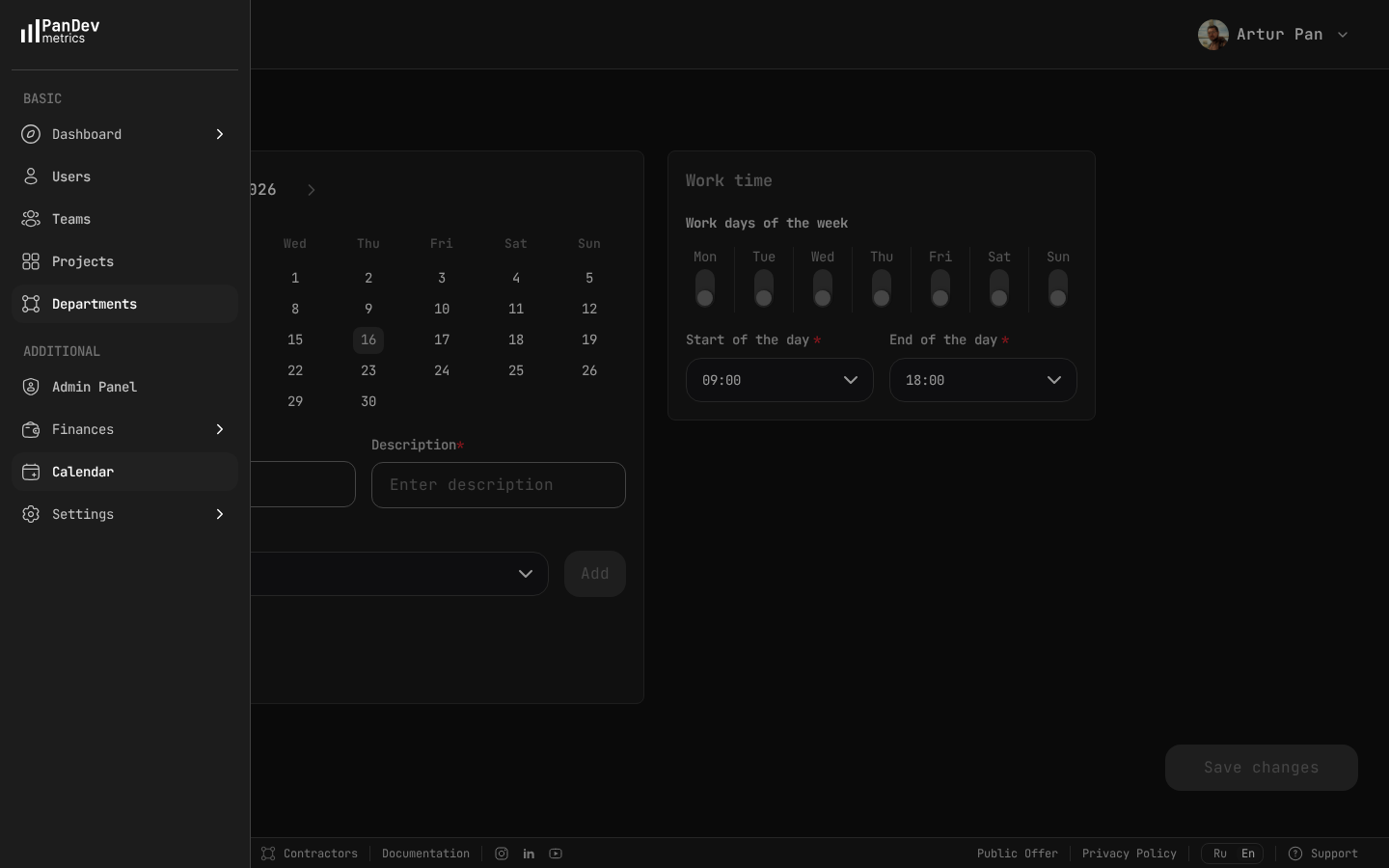

PanDev's calendar settings let you define standard working hours for each team — critical for comparing remote vs office patterns against the expected 09:00-18:00 baseline.


This pattern is consistent with findings from the Accelerate research (Forsgren, Humble, Kim), which shows that high-performing teams tend to optimize for flow over rigid schedules. Companies that force remote developers into 9-5 meeting schedules negate much of the remote Focus Time advantage.

IDE and Language Patterns by Work Mode

IDE adoption differs

| IDE | Remote-first share | Office-first share |
|---|---|---|
| VS Code | 62% | 54% |
| Cursor | 18% | 8% |
| IntelliJ IDEA | 12% | 22% |
| Other JetBrains | 5% | 11% |
| Visual Studio | 3% | 5% |

Remote-first companies show notably higher adoption of Cursor (18% vs 8%). This aligns with a broader pattern: remote teams tend to adopt AI-assisted development tools earlier. The AI assistant partially compensates for the loss of "ask a colleague" moments that office developers rely on.

Our overall data shows Cursor adoption growing rapidly, with usage disproportionately driven by remote-first organizations. The Stack Overflow Developer Survey has similarly documented faster AI tooling adoption among remote-heavy teams.

Language distribution

| Language | Remote-first hours share | Office-first hours share |
|---|---|---|
| TypeScript | 32% | 21% |
| Python | 24% | 16% |
| Java | 14% | 28% |
| C# | 4% | 12% |
| Other | 26% | 23% |

Remote-first companies lean heavily toward TypeScript and Python — languages associated with startups, web applications, and cloud-native development. Office-first companies have more Java and C# — languages dominant in enterprise and regulated industries.

This is a confounding factor: the industries that favor remote work also favor different tech stacks. Some of the "remote productivity advantage" may actually be a "TypeScript/Python productivity advantage" — these languages have faster feedback loops, less boilerplate, and quicker iteration cycles.
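One common way to probe a confounder like this is to compare work modes within each language rather than across the pooled population. The sketch below uses invented records purely to show the mechanics. The numbers are not from our dataset, and the record layout is an assumption.

```python
from collections import defaultdict
from statistics import median

# Hypothetical records: (work mode, primary language, daily coding minutes).
# Remote skews TypeScript and office skews Java, mirroring the table above.
records = [
    ("remote", "TypeScript", 90), ("remote", "TypeScript", 86),
    ("remote", "TypeScript", 88), ("remote", "Java", 68),
    ("office", "TypeScript", 86), ("office", "Java", 66),
    ("office", "Java", 64), ("office", "Java", 68),
]

def median_by(rows, key):
    """Median coding minutes per group, where key(row) names the group."""
    groups = defaultdict(list)
    for row in rows:
        groups[key(row)].append(row[2])
    return {name: median(values) for name, values in groups.items()}

pooled = median_by(records, key=lambda r: r[0])
stratified = median_by(records, key=lambda r: (r[1], r[0]))

pooled_gap = pooled["remote"] - pooled["office"]
ts_gap = stratified[("TypeScript", "remote")] - stratified[("TypeScript", "office")]
java_gap = stratified[("Java", "remote")] - stratified[("Java", "office")]

print(pooled_gap, ts_gap, java_gap)
```

In this toy data the pooled gap is 20 minutes, but within each language the gap is only 2 minutes: most of the apparent remote advantage comes from the language mix, not the work mode.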

What the Data Does NOT Show

It doesn't show that remote is "better" for everyone

The 15% median coding time advantage for remote-first companies is real but modest. For some developers — especially juniors who benefit from mentorship, or those in noisy home environments — office work may be genuinely more productive.

It doesn't show causation

Companies that go remote-first may already have better engineering practices, stronger async cultures, and more disciplined meeting hygiene. The remote work may be a symptom of good management, not a cause of high productivity.

It doesn't measure collaboration quality

IDE data captures individual coding productivity. It doesn't capture the quality of design discussions, the speed of knowledge transfer, or the serendipitous conversations that sometimes produce breakthrough ideas. These are real benefits of co-location, even if they're hard to measure.

It doesn't account for time zones

Distributed remote teams spanning multiple time zones face coordination challenges that co-located teams don't. Our data doesn't isolate this variable, but it's a significant factor for remote-first companies with global teams.

The Real Question: What Are You Optimizing For?

The remote vs. office debate is often framed as a binary. The data suggests a more useful framework:

| Priority | Favors | Why |
|---|---|---|
| Individual Focus Time | Remote | 62% longer focus sessions, fewer interruptions |
| Junior developer onboarding | Office (or structured hybrid) | Osmotic learning, immediate feedback |
| Synchronous collaboration | Office | Same-time, same-room discussions are faster |
| Async documentation culture | Remote | Forces writing things down, which scales |
| Developer satisfaction | Flexible/hybrid | Most developers prefer choice |
| Cost optimization | Remote | No office overhead, broader talent pool |

The most effective approach for most organizations is structured hybrid — not "come in 3 days because we said so," but purposeful in-office time for activities that genuinely benefit from co-location (design sprints, retrospectives, team bonding) with remote time protected for focus work.

Five Recommendations Based on the Data

1. Protect remote Focus Time religiously

If you have remote developers, their biggest advantage is Focus Time. Don't destroy it with mandatory 9-5 availability, excessive Slack responsiveness expectations, or back-to-back video calls. Our data shows that remote developers who are treated like "office developers with cameras" lose their productivity advantage entirely.

2. Invest in async communication

The companies in our data with the highest remote developer productivity have strong async cultures: written RFCs, recorded decision logs, detailed PR descriptions, and Slack threads instead of huddles. This takes discipline but pays dividends.

3. Don't compare raw numbers across modes

A remote developer coding 82 minutes/day and an office developer coding 71 minutes/day may be delivering identical business value — the office developer might get more done in shorter sessions due to quick in-person clarifications, or the remote developer might spend more time on rework due to miscommunication.

Compare outcomes (features shipped, quality metrics, planning accuracy) not just activity.

4. Use data, not ideology

Too many return-to-office mandates are driven by executive belief, not measurement. If you're going to change work policy, measure before and after. Track Focus Time, coding time, and Delivery Index before the policy change, then compare 60 days later. Let the data decide.

PanDev Metrics provides consistent measurement regardless of where developers work — the same IDE plugins, the same metrics, the same dashboards. This makes before/after comparisons methodologically sound.
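A before/after comparison of this kind can be sketched in a few lines. The example below assumes you have exported daily coding minutes keyed by date. The function name, the equal 60-day windows on both sides, and the sample data are illustrative assumptions rather than a PanDev feature.

```python
from datetime import date, timedelta
from statistics import median

def before_after(daily_minutes, policy_date, window_days=60):
    """Median daily coding minutes in equal windows before and after policy_date."""
    window = timedelta(days=window_days)
    before = [m for d, m in daily_minutes.items()
              if policy_date - window <= d < policy_date]
    after = [m for d, m in daily_minutes.items()
             if policy_date <= d < policy_date + window]
    return median(before), median(after)

# Hypothetical team data: about 81 min/day before the change, about 71 after
policy_date = date(2026, 3, 1)
daily = {}
for offset in range(-60, 60):
    day = policy_date + timedelta(days=offset)
    baseline = 80 if offset < 0 else 70
    daily[day] = baseline + (offset % 3)   # small day-to-day variation

median_before, median_after = before_after(daily, policy_date)
change_pct = (median_after - median_before) / median_before * 100
print(median_before, median_after, round(change_pct, 1))
```

Using the median rather than the mean keeps a few unusually long or short days from dominating the result. A real analysis would also want to exclude holidays and check that the team's composition did not change between the two windows.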

5. Optimize the calendar, not the location

Our data suggests that meeting load is a bigger determinant of productivity than location. A remote developer with 5 hours of Zoom calls is less productive than an office developer with 1 hour of meetings. Fix the calendar first, then worry about geography.

| Meeting load | Remote coding time | Office coding time |
|---|---|---|
| < 1 hr/day | 105 min | 92 min |
| 1–2 hr/day | 78 min | 72 min |
| 2–3 hr/day | 52 min | 54 min |
| 3+ hr/day | 28 min | 31 min |

At high meeting loads (3+ hours), remote and office coding time converge to a similarly low level (28 min vs 31 min). The location advantage disappears entirely when the calendar is full.
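The convergence is easy to see by computing the remote-minus-office gap per meeting-load bucket. The sketch below uses the table values directly. The `meeting_bucket` helper is a hypothetical way to assign a developer-day to a row, with boundaries read off the table labels.

```python
def meeting_bucket(meeting_hours):
    """Assign daily meeting hours to one of the table's four buckets."""
    if meeting_hours < 1:
        return "< 1 hr/day"
    if meeting_hours < 2:
        return "1-2 hr/day"
    if meeting_hours < 3:
        return "2-3 hr/day"
    return "3+ hr/day"

# (remote minutes, office minutes) copied from the table
coding_minutes = {
    "< 1 hr/day": (105, 92),
    "1-2 hr/day": (78, 72),
    "2-3 hr/day": (52, 54),
    "3+ hr/day": (28, 31),
}

gaps = {bucket: remote - office
        for bucket, (remote, office) in coding_minutes.items()}

for bucket, gap in gaps.items():
    print(f"{bucket:>10}: remote minus office = {gap:+d} min")
```

The gap shrinks from +13 minutes to -3 minutes as meeting load rises, while the drop from the lightest to the heaviest bucket is 77 minutes for remote and 61 minutes for office. Meeting load moves the number far more than location does.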

The Hybrid Reality

The data paints a nuanced picture that neither remote absolutists nor office mandators want to accept:

- Remote work provides a real Focus Time advantage (62% longer sessions on average)

- Total coding time differences are small (15% median gap)

- The biggest productivity driver is meeting load, not location

- Tech stack, company culture, and management practices confound simple remote-vs-office comparisons

- Individual variation within each mode exceeds variation between modes — some office developers outperform most remote developers, and vice versa

The future of engineering productivity isn't about where developers sit. It's about whether they have the uninterrupted time, clear objectives, and proper tooling to do their best work — regardless of location. This conclusion aligns with the SPACE framework (Forsgren et al., 2021), which argues that productivity is multidimensional and cannot be reduced to a single environmental factor.

Based on aggregated, anonymized data from PanDev Metrics Cloud (April 2026): thousands of hours of IDE activity across 100+ B2B companies. The analysis uses company-level work mode policies (remote-first, office-first, hybrid); individual developer locations were not tracked.

Want to measure your team's real productivity — remote, office, or hybrid? PanDev Metrics tracks IDE activity consistently across all work modes. Same plugins, same metrics, same truth — regardless of where your developers code.