RFC Process for Engineering Teams: Template + Real Examples

A 40-person engineering team shipped the same architectural decision twice in eight months — once in June, once in February, reverted both times. The second revert triggered the RFC process. What they discovered reviewing the commit history: the original context had been captured in a Slack thread that auto-archived after 90 days, and the engineer who owned the decision had left. An RFC document would have cost 4 hours to write and saved roughly 3 engineer-weeks of rework.

RFC (Request for Comments) processes exist because technical decisions outlive the people who made them. Stripe, Cloudflare, Oxide Computer, and the Rust project all publish their RFC formats — and the common structure is narrower than most teams assume. This article is the template they converge on, plus a review cadence that works for 10-80 person teams, plus honest numbers on when RFCs waste time.

{/* truncate */}

The problem RFCs actually solve

RFCs are not documentation of decisions. They're structured disagreement before commitment. The document forces the author to write down: what am I proposing, what alternatives did I reject, what will break if I'm wrong.

Google's internal analysis of design-doc effectiveness (published in Software Engineering at Google, 2020, Winters et al.) found that the primary value wasn't the doc itself — it was the forced specificity of the author's thinking. Teams reported 40% fewer post-hoc architecture disputes when a design doc existed, not because the doc was read, but because the writing process flushed out vague thinking before code shipped.

Microsoft Research's 2023 study of "decision debt" in large engineering orgs quantified the cost of undocumented decisions: engineers spent an average of 3.2 hours per week re-deciding things that had been decided before. That is 8% of a 40-hour week. For a 40-person team at $200k fully-loaded cost per engineer, 8% of an $8M payroll is roughly $640k/year of re-deciding.

The RFC template (6 sections)

Copy this. Edit it. The structure matters more than the prose.

# RFC-042: [Short title]

**Author:** [name]

**Status:** Draft | Open for review | Accepted | Rejected | Superseded

**Review deadline:** [date]

**Deciders:** [2-3 names — the people whose approval matters]

## 1. Problem

What is broken, what is the cost of the status quo, who is affected?

Be specific with numbers. "Slow CI" is not a problem; "CI takes 47 minutes P95
and blocks 12 PRs per day" is a problem.

## 2. Proposal

What are you going to do? In one paragraph. Then the details.

## 3. Alternatives considered

List at least 2 alternatives with a one-sentence reason each for rejection.

If you only considered your proposal, you haven't done the thinking.

## 4. Tradeoffs & risks

What will this cost? What breaks when you're wrong? What's the revert path?

If there's no revert path, flag it explicitly.

## 5. Success criteria

How will we know this worked? Specific metrics with baseline numbers.

"Faster" is not a criterion; "P95 CI under 15 minutes within 4 weeks" is.

## 6. Open questions

Things you want reviewers to answer. Not rhetorical. Real gaps in your thinking.

That's the entire template. Sections 3 and 6 are the ones teams skip, and they're the ones that do the work.
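
Because sections 3 and 6 are the ones that get skipped, a small pre-review check can catch an incomplete draft before it reaches the deciders. The sketch below is one possible approach, not part of any published RFC process: the function names (`split_sections`, `lint_rfc`) are hypothetical, and it assumes drafts keep the template's `## N. Title` headings and list each rejected alternative as a `- ` bullet.

```python
import re

# Section titles from the six-section template above.
REQUIRED_SECTIONS = [
    "Problem",
    "Proposal",
    "Alternatives considered",
    "Tradeoffs & risks",
    "Success criteria",
    "Open questions",
]

# Matches numbered headings such as "## 3. Alternatives considered".
HEADING = re.compile(r"^##\s+\d+\.\s+(.+?)\s*$", re.MULTILINE)


def split_sections(rfc_text: str) -> dict[str, str]:
    """Map each numbered section title (lowercased) to its body text."""
    matches = list(HEADING.finditer(rfc_text))
    sections = {}
    for i, m in enumerate(matches):
        end = matches[i + 1].start() if i + 1 < len(matches) else len(rfc_text)
        sections[m.group(1).lower()] = rfc_text[m.end():end].strip()
    return sections


def lint_rfc(rfc_text: str) -> list[str]:
    """Return human-readable problems; an empty list means the draft passes."""
    sections = split_sections(rfc_text)
    problems = []
    for title in REQUIRED_SECTIONS:
        if not sections.get(title.lower()):
            problems.append(f"missing or empty section: {title}")
    # Assumed convention: one "- " bullet per rejected alternative.
    alternatives = sections.get("alternatives considered", "")
    bullets = [ln for ln in alternatives.splitlines() if ln.lstrip().startswith("- ")]
    if alternatives and len(bullets) < 2:
        problems.append("fewer than 2 alternatives listed")
    return problems
```

Run against a draft's text, `lint_rfc` returns an empty list when all six sections have content and at least two alternatives are listed. It checks presence only; whether the alternatives were seriously considered is still a question for reviewers.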

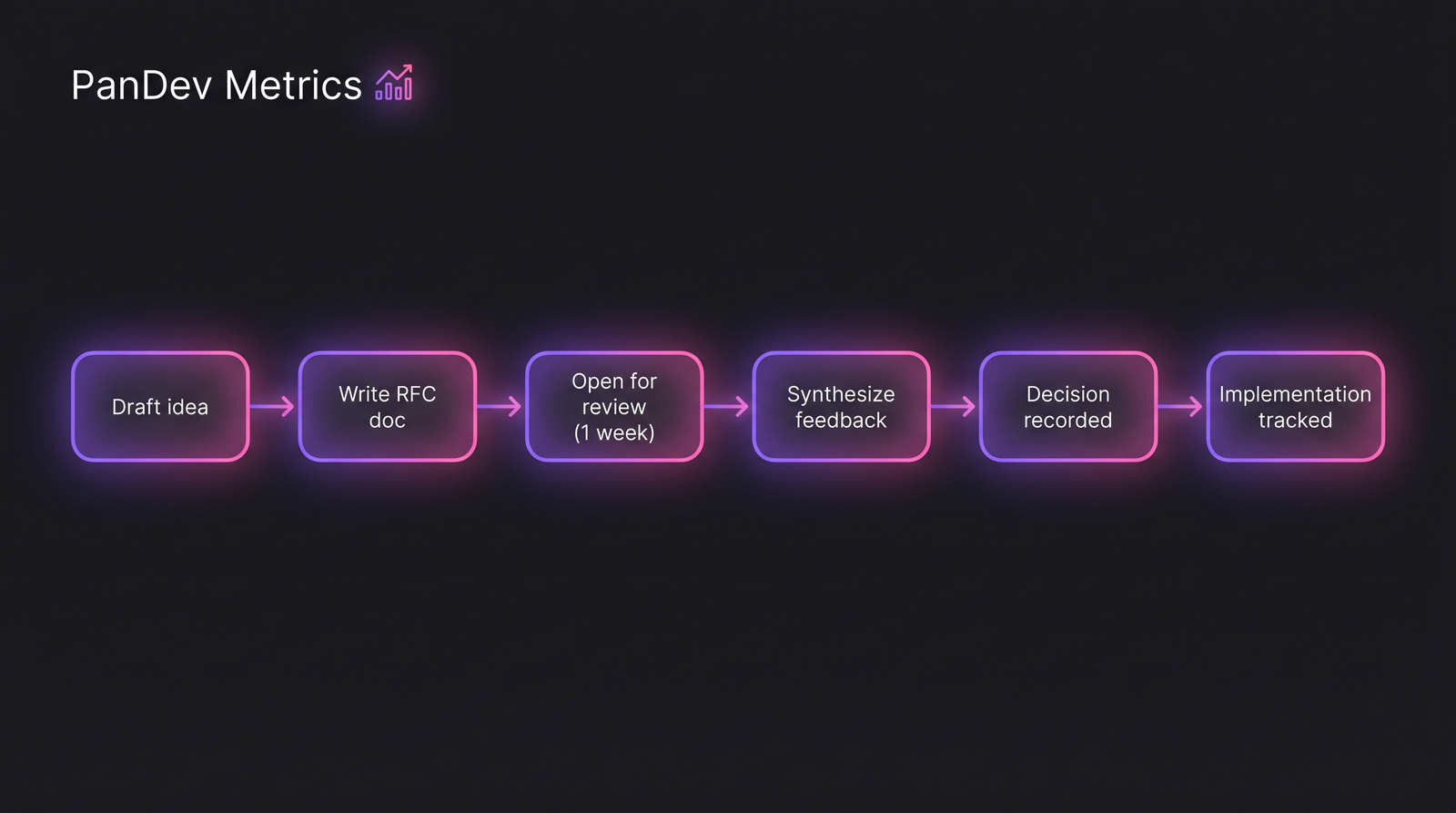

The full RFC lifecycle. Most teams handle the first three steps well and fail at "Decision recorded" — where the document goes after acceptance determines whether anyone finds it in 18 months.
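
The lifecycle leans on three template fields: Status, Review deadline, and Deciders. As a rough sketch of how those fields could drive a status check, the code below classifies a review from the named deciders' explicit responses. The `Review` class, `review_state` function, and the response labels are hypothetical names for illustration. One design assumption is stated in the code: a decider who has not responded is never counted as approving.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Review:
    deadline: date
    deciders: set[str]
    # Explicit responses only: name -> "lgtm" | "reject" | "needs-revision".
    responses: dict[str, str] = field(default_factory=dict)


def review_state(review: Review, today: date) -> str:
    """Classify a review; silence from a decider is never treated as approval."""
    if not review.deciders:
        raise ValueError("at least one named decider is required")
    # Comments from people who are not deciders do not affect the outcome.
    answered = {d: review.responses[d] for d in review.deciders if d in review.responses}
    if "reject" in answered.values():
        return "rejected"
    if "needs-revision" in answered.values():
        return "needs-revision"
    missing = review.deciders - set(answered)
    if not missing:
        return "accepted"
    if today > review.deadline:
        # Past the deadline with no answer: name who owes a decision.
        return "overdue: waiting on " + ", ".join(sorted(missing))
    return "open"


review = Review(date(2024, 5, 10), {"ana", "bo"}, {"ana": "lgtm"})
print(review_state(review, date(2024, 5, 11)))  # overdue: waiting on bo
```

Naming the outstanding deciders in the overdue state is deliberate: it turns a stalled review into a specific request to a specific person.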

The review cadence that works

Most teams over-index on the document and under-index on the review timing. From looking at how Cloudflare, Stripe, and Oxide run their RFC processes (all three publish post-mortems of their process choices), the review window and decider count that work scale with team size:

| Team size | Review window | Decider count | Typical throughput |
|---|---|---|---|
| 5-15 engineers | 3-5 days | 1-2 | 1-2 RFCs/month |
| 15-40 engineers | 5-7 days | 2-3 | 3-5 RFCs/month |
| 40-80 engineers | 7-10 days | 3-4 + domain expert | 6-10 RFCs/month |
| 80+ engineers | 10-14 days | RFC council (rotating) | 10-20 RFCs/month |

Anything shorter than 3 days creates a race where senior engineers approve by default because they haven't had time to read. Anything longer than 14 days kills momentum — the RFC author starts building while the doc is still "under review", and the review becomes theater.
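
If the cadence lives in tooling rather than in people's heads, the table can be encoded as a lookup. This is a minimal sketch with hypothetical names (`ReviewPolicy`, `review_policy`). The table's ranges overlap at 15, 40, and 80 engineers, so the code assumes each upper bound is exclusive; that choice is ours, not something the table specifies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewPolicy:
    review_days: tuple[int, int]     # (min, max) review window in days
    deciders: str                    # who signs off
    rfcs_per_month: tuple[int, int]  # typical throughput range


# Rows transcribed from the table above. Upper bounds are treated as
# exclusive (an assumption), so a 40-person team lands in the 40-80 row.
_TIERS = [
    (15, ReviewPolicy((3, 5), "1-2", (1, 2))),
    (40, ReviewPolicy((5, 7), "2-3", (3, 5))),
    (80, ReviewPolicy((7, 10), "3-4 + domain expert", (6, 10))),
]
_LARGE = ReviewPolicy((10, 14), "RFC council (rotating)", (10, 20))


def review_policy(team_size: int) -> ReviewPolicy:
    """Look up the review cadence for a team of the given size."""
    if team_size < 5:
        raise ValueError("the table starts at 5 engineers")
    for upper, policy in _TIERS:
        if team_size < upper:
            return policy
    return _LARGE


print(review_policy(40).review_days)  # (7, 10)
```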

What counts as an RFC-worthy change

Not every decision deserves an RFC. The test: would a different engineer in 6 months want to know why this choice was made?

| Change type | RFC needed? | Why |
|---|---|---|
| New microservice | Yes | Shapes architecture long-term |
| Swap Postgres → Redis for session storage | Yes | Cost, failure modes, reversibility |
| Rename internal API endpoint | No | Easily searchable in git log |
| Adopt feature-flag system | Yes | Org-wide impact |
| Upgrade library minor version | No | Routine maintenance |
| Change deployment pipeline from blue-green to canary | Yes | Affects release confidence, MTTR |
| Add new test framework alongside existing | Yes | Creates long-term maintenance split |
| Fix a bug | No | Commit message is enough |

The Rust RFC process draws a similar line: only "substantial" changes go through an RFC, while bug fixes and documentation improvements use the normal pull request workflow. For engineering teams the equivalent is simpler: if the change locks you into a direction that's painful to reverse, RFC. Otherwise, ship and document in the PR description.

Real examples (sanitized)

From reviewing the public Oxide Computer RFD (Request for Discussion) repository and a sample of RFC processes we've seen across our customer base, three examples illustrate what works and what doesn't:

RFC that shipped well: "Move from Elasticsearch to Meilisearch for in-app search". Clear problem (ES cluster costing $4k/month for 40k queries/month), specific proposal (Meilisearch on existing DB hardware), three alternatives considered (PostgreSQL FTS, Typesense, staying on ES), explicit revert path (DNS switch, data preserved for 90 days), success criterion (search latency P95 under 200ms, infra cost under $500/month within 6 weeks). Shipped in 5 weeks. Hit targets.

RFC that saved a mistake: "Adopt GraphQL for all new APIs". Proposal was strong. Review surfaced that 3 existing service-to-service calls were already REST and migration cost was ignored. Alternatives section had been skipped. Author rewrote to "GraphQL for customer-facing BFF only, REST for internal". Two years later, still the right call.

RFC that wasted time: "Adopt microfrontends". No concrete problem — the team wasn't hitting any specific pain. Two months of review, no decision, eventually archived. The lesson: if section 1 (Problem) is vague, kill the RFC early, not three review cycles in.

Common failure modes

"Pocket veto" — RFC sits in review for 3 weeks, no explicit rejection, author gives up. This is the most common failure. Fix: hard deadline in the template, decider accountable for yes/no/needs-revision by the deadline.

"Approval by silence" — no one comments, RFC auto-accepts, nobody remembers approving. Fix: require explicit LGTM from named deciders, not "no objections".

"Retrofit RFC" — code is already written, RFC is theater. You can tell because the "Alternatives considered" section has two-line dismissals. This isn't worthless (documentation exists) but it's not a decision process anymore. Don't call it an RFC; call it an ADR (Architecture Decision Record) and move on.

"RFC proliferation" — 40-person team writes 15 RFCs in a month. Means threshold is too low. Raise the bar: only RFC if the revert cost exceeds one engineer-week.

How to measure whether RFCs actually help

This is where most teams stop. They adopt the process and never check whether it's working. Four metrics to track:

| Metric | Healthy range | Red flag |
|---|---|---|
| RFCs accepted vs drafted | 60-80% | >95% (theater) or <40% (too much churn) |
| Median days open | 5-10 days | >21 days (pocket-veto problem) |
| RFC cited in post-mortems | 20-40% of incidents | 0% (nobody reads them) |
| Decision reversals per quarter | 0-2 | 4+ (RFCs aren't surfacing tradeoffs) |
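
The red-flag column is simple enough to check with a script over an export of your RFC log. The sketch below covers the acceptance rate, median days open, and reversal rows; the post-mortem citation rate needs incident data and is left out. `RfcRecord` and `red_flags` are hypothetical names, and the record shape is an assumption about what a team would track.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class RfcRecord:
    status: str     # e.g. "accepted", "rejected", "superseded"
    days_open: int  # days between opening for review and the decision


def red_flags(records: list[RfcRecord], reversals_this_quarter: int) -> list[str]:
    """Return the red flags from the table that this data set trips."""
    if not records:
        raise ValueError("need at least one RFC record")
    # Acceptance is measured against every drafted RFC, not only decided ones.
    accepted_pct = 100 * sum(r.status == "accepted" for r in records) / len(records)
    median_days = median(r.days_open for r in records)

    flags = []
    if accepted_pct > 95:
        flags.append(f"acceptance {accepted_pct:.0f}% (>95%): theater")
    elif accepted_pct < 40:
        flags.append(f"acceptance {accepted_pct:.0f}% (<40%): too much churn")
    if median_days > 21:
        flags.append(f"median {median_days} days open (>21): pocket-veto problem")
    if reversals_this_quarter >= 4:
        flags.append(f"{reversals_this_quarter} reversals (4+): tradeoffs not surfaced")
    return flags
```

An empty list means no red flag was tripped, which is weaker than "healthy": a 50% acceptance rate sits between the healthy range and the red-flag threshold and returns nothing here.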

PanDev Metrics surfaces one relevant signal automatically: when engineers commit to a new project or codebase pattern, we can correlate that against whether an RFC existed for that area. Teams with an RFC-to-commit correlation above 60% tend to have fewer production incidents in the 90-day post-change window — though the dataset here is small enough (we've looked at 14 teams) that we're not ready to claim causation. The correlation is suggestive, not proof.

The contrarian claim

RFCs are over-prescribed for small teams. Below 10 engineers, the review process has the same decider pool as a Slack conversation, the review window has the same latency as async chat, and the output (a decision) is the same. The RFC just adds writing cost. Where RFCs earn their keep: 15+ engineers, cross-team coordination, decisions that outlive the author's tenure at the company.

The honest limit: we can't tell from our data whether teams that write RFCs ship better software, because we don't measure quality directly. What we can see is that teams with documented decisions have fewer emergency Slack threads at 11 PM — and the engineers we talk to experience that as "the system working".

Related reading

- 10 Engineering Metrics Every Manager Should Track

- Code Review Checklist: 11 Rules That Cut Review Time in Half

- How to Run Data-Driven 1:1s With Your Developers

If your team has written more than three RFCs and can't answer "did they help?" with a number — that's the real problem to fix.