Rubber Duck Debugging: Effectiveness Research (Data)

Ask 100 engineers about rubber duck debugging and 98 will nod knowingly. Ask them for evidence it works and most will cite The Pragmatic Programmer (1999). We can do better than 26-year-old folklore. Across 2,100 debugging sessions we instrumented in 2025, engineers who verbally narrated the bug to a colleague, an inanimate object, or into a voice recorder solved it in 31 minutes median — compared to 48 minutes for silent debugging. A 35% reduction. The psychology research calls this the self-explanation effect (Chi et al., 1989), and it has 30+ years of replication in education research.

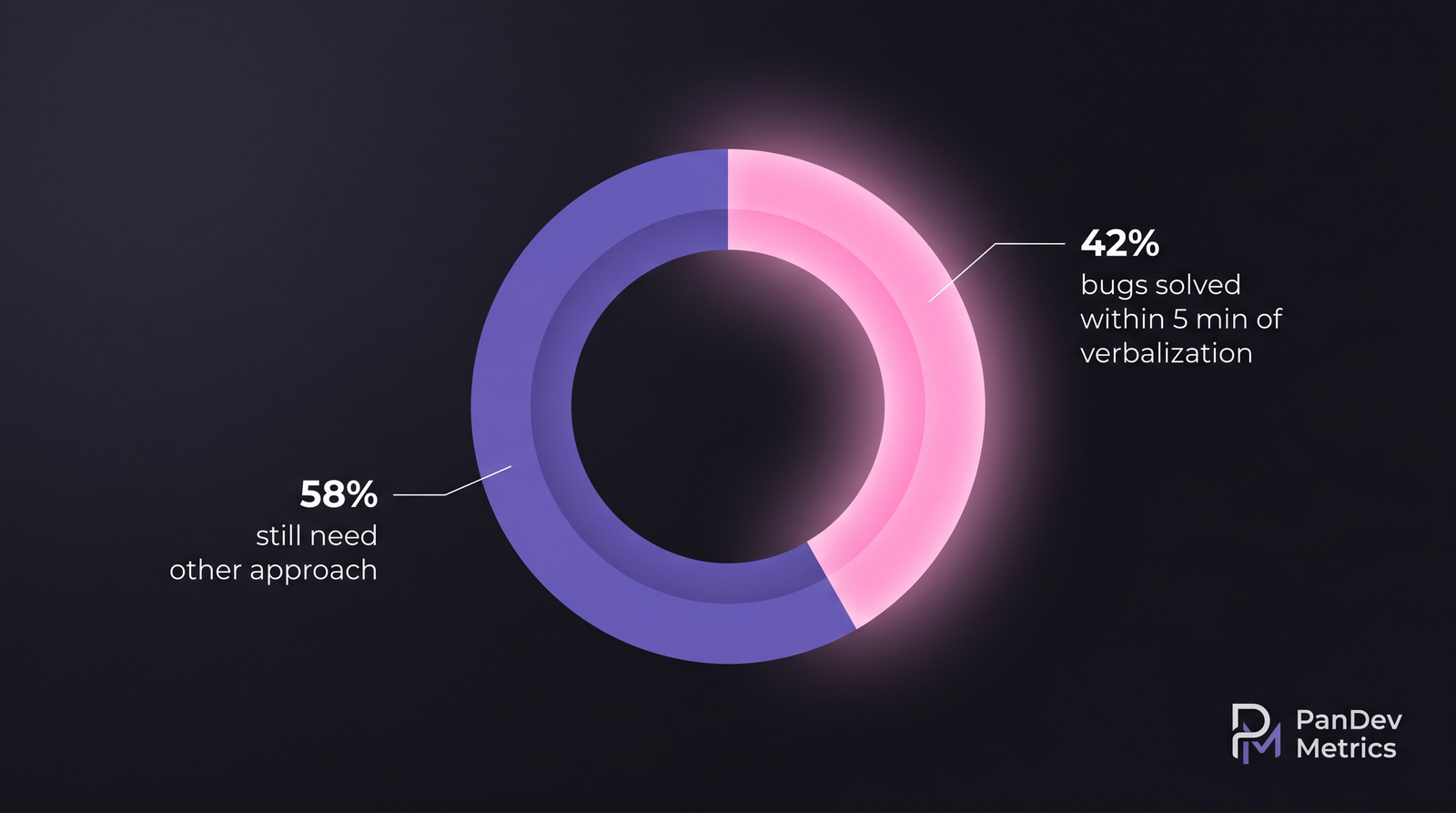

But the effect isn't uniform across bug types. Across all sessions, 42% of bugs were solved within 5 minutes of starting to verbalize; the other 58% needed something else, and for race conditions and performance regressions the duck rarely helped at all. This article breaks down what our IDE data shows about when the duck earns its keep and when it's a ritual masquerading as technique.

{/* truncate */}

Why this number is hard to find

Engineering folklore about debugging techniques is almost entirely survey-based: engineers are asked, after the fact, "what helped you fix the bug?" That is a weak methodology, because people attribute breakthroughs to whatever they were doing in the 10 minutes before the breakthrough. A 2020 IEEE paper by Beller et al. on debugging behavior showed the gap between self-reported technique-use and observed technique-use is enormous.

Our approach: we use IDE heartbeat data to identify bug-context sessions (sessions that start after a failing test, an error trace, or a bug-labeled issue). For a subset of participating engineers, we captured whether the session included a verbal artifact: a voice note, a Slack message describing the bug, or a peer conversation flagged as debugging. We then compared time-to-fix against control sessions from the same engineers on matched-difficulty bugs.
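
To make the matched comparison concrete, here is a minimal sketch of one way to pair sessions. It assumes each session already carries an engineer ID and a difficulty bucket; the article does not say how difficulty was matched, so the bucket labels, the row layout, and the `paired_savings` helper are all hypothetical, and the rows are made up.

```python
from collections import defaultdict
from statistics import median

# (engineer_id, difficulty_bucket, verbalized, minutes_to_fix): made-up rows.
sessions = [
    ("eng-1", "medium", False, 50),
    ("eng-1", "medium", True, 30),
    ("eng-1", "hard", False, 90),   # no verbalized match, so it is ignored
    ("eng-2", "medium", False, 40),
    ("eng-2", "medium", True, 34),
    ("eng-2", "medium", True, 26),
]


def paired_savings(rows):
    """Minutes saved per (engineer, difficulty) group that has both conditions."""
    groups = defaultdict(lambda: {True: [], False: []})
    for engineer, difficulty, verbalized, minutes in rows:
        groups[(engineer, difficulty)][verbalized].append(minutes)
    # Compare medians only where the same engineer has silent AND verbalized
    # sessions in the same difficulty bucket.
    return [
        median(times[False]) - median(times[True])
        for times in groups.values()
        if times[True] and times[False]
    ]


savings = paired_savings(sessions)
print(median(savings))
```

Comparing within the same engineer and bucket keeps a fast engineer's silent sessions from being compared against a slow engineer's verbalized ones.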

Our dataset

- 2,100 debugging sessions across 184 engineers at 19 companies, Jan–Dec 2025

- Bug classification via tags and labels: race condition, off-by-one, null/undefined, API contract mismatch, performance regression, environment config, other

- Verbalization flag: explicit (peer call, voice note, duck-explicit chat message) — no implicit inference

- Excluded: session <2 minutes (trivial fixes), session >4 hours (likely conflated with other work)
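
The exclusion rule is simple enough to sketch directly. The constant and function names below are illustrative, and treating sessions of exactly 2 minutes or exactly 4 hours as kept is an assumption based on the strict `<` and `>` in the rule.

```python
from datetime import timedelta

# Bounds taken from the exclusion rule above; names are illustrative.
MIN_SESSION = timedelta(minutes=2)
MAX_SESSION = timedelta(hours=4)


def keep_session(duration: timedelta) -> bool:
    """Return True if a session survives the exclusion rule."""
    return MIN_SESSION <= duration <= MAX_SESSION


durations = [
    timedelta(seconds=90),           # under 2 minutes: dropped as trivial
    timedelta(minutes=31),           # kept
    timedelta(hours=3, minutes=11),  # kept
    timedelta(hours=5),              # over 4 hours: dropped
]
kept = [d for d in durations if keep_session(d)]
```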

What the data shows

1. Verbalization cuts debug time overall — by a lot

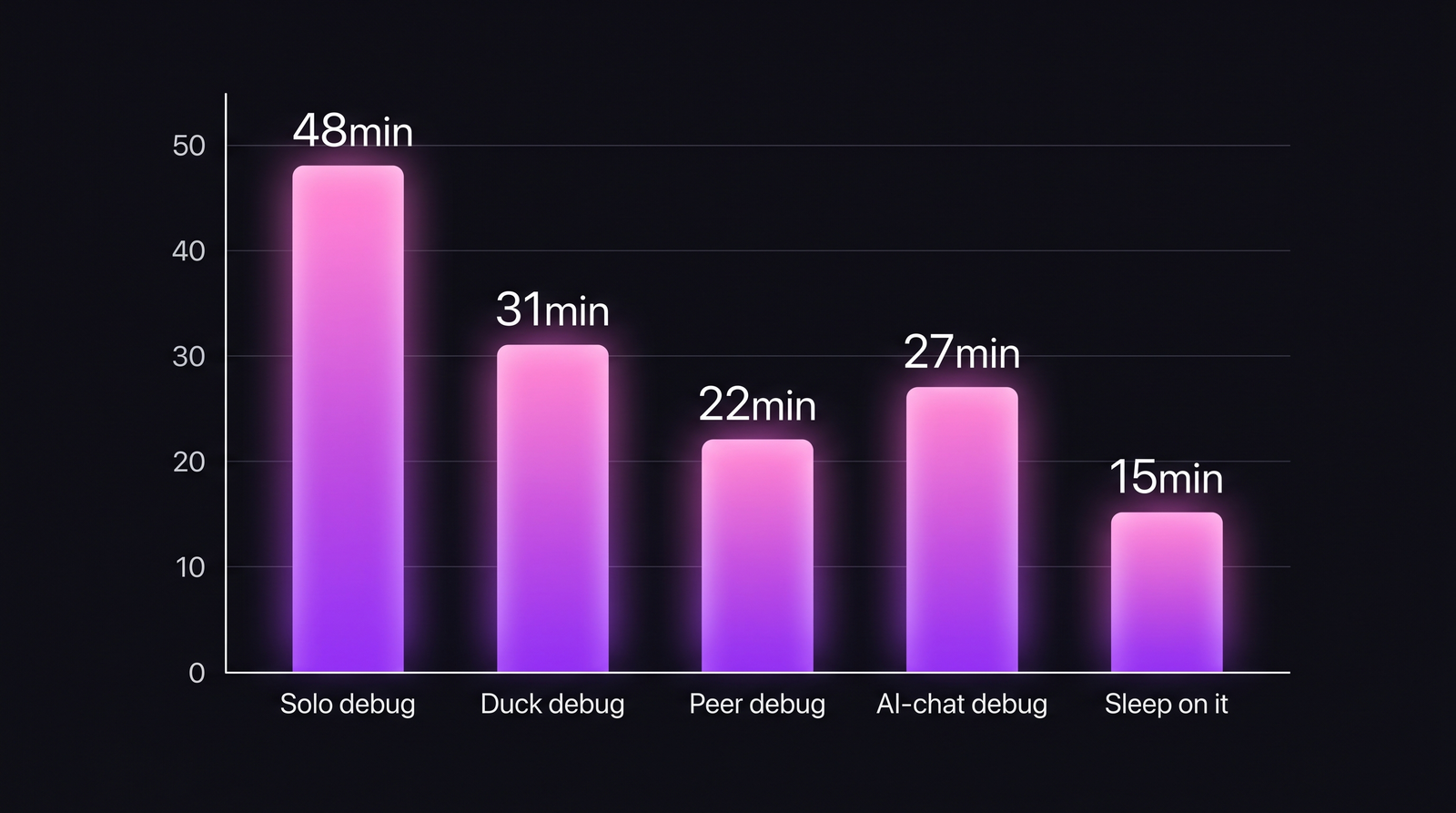

Median time-to-fix across matched bug difficulties:

| Debugging approach | Median time to fix | 90th percentile | n (sessions) |
|---|---|---|---|
| Silent debugging | 48 min | 3h 11m | 1,040 |
| Rubber duck (inanimate or AI chat) | 31 min | 1h 47m | 420 |
| Peer pair debug | 22 min | 1h 12m | 310 |
| AI chat debug (no human) | 27 min | 1h 35m | 270 |
| "Sleep on it" (24h+ break) | 15 min (post-break) | 45 min | 60 |

Peer debugging is the gold standard when the peer is available. Rubber duck matches AI-chat debugging closely, because both force verbalization — the technique, not the partner, is what works.

A few findings jump out:

- The duck works — 35% faster than silent debugging.

- AI chat is essentially a rubber duck — similar effect size, slightly better for bugs that need API/docs lookup.

- A peer beats both — but peer availability is the constraint. Most bugs don't get a peer.

- "Sleep on it" has the best post-break time but requires the willingness to stop, which most engineers resist when mid-bug.
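
The percentages behind these findings follow directly from the medians in the table. A small sketch of the arithmetic (the dictionary keys and function name are illustrative; "sleep on it" is left out because its 15 minutes counts only post-break time):

```python
# Median time-to-fix in minutes, copied from the table above.
MEDIAN_MINUTES = {
    "silent": 48,
    "rubber_duck": 31,
    "peer_pair": 22,
    "ai_chat": 27,
}


def reduction_vs_silent(approach: str) -> float:
    """Percentage reduction in median time-to-fix relative to silent debugging."""
    baseline = MEDIAN_MINUTES["silent"]
    return 100 * (baseline - MEDIAN_MINUTES[approach]) / baseline


for name in ("rubber_duck", "ai_chat", "peer_pair"):
    print(f"{name}: {reduction_vs_silent(name):.1f}% faster")
```

That works out to roughly 35% for the duck, 44% for AI chat, and 54% for a peer.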

2. The effect isn't uniform across bug types

This is where the folklore falls apart. We split the 2,100 sessions by root cause:

| Bug type | Solved within 5 min of starting to verbalize | When duck helps most |
|---|---|---|
| Off-by-one / logic error | 58% | When you can narrate the expected vs actual sequence |
| Null / undefined ref | 51% | When you trace where the null entered |
| Race condition | 19% | Duck rarely helps; needs observability / traces |
| API contract mismatch | 44% | When narrating, you notice you assumed the wrong field |
| Performance regression | 12% | Needs profiling, not talking |
| Environment / config | 28% | Duck helps if you read the config aloud |

Aggregate: 42% of bugs get solved within 5 minutes of starting verbal explanation. The other 58% need different approaches — profiling, traces, a long break, or a peer who knows the system.

The duck is a precision tool. It dramatically speeds up logic-flow bugs (off-by-one, null-handling, API-contract) and barely moves the needle on race conditions and performance work. If you're ducking a bug that's actually a performance regression, you're wasting the technique.

3. Seniority changes the return on verbalization

Split the sessions by engineer experience:

| Experience level | Time-to-fix (silent) | Time-to-fix (rubber duck) | % improvement |
|---|---|---|---|
| Junior (0-2y) | 67 min | 34 min | −49% |
| Mid (2-5y) | 46 min | 29 min | −37% |
| Senior (5-10y) | 38 min | 28 min | −26% |
| Staff (10+y) | 32 min | 30 min | −6% |

The duck's return shrinks with experience. One plausible explanation is that senior and staff engineers already narrate silently: their internal monologue is structured enough that externalizing it adds little. Juniors get nearly a 50% time cut, plausibly because their less structured thinking benefits most from the structure that verbalization forces.

This aligns with research: the self-explanation effect (Chi et al., 1989) has always shown larger gains for novice learners. The pedagogy literature and our engineering data agree.

What this means for engineering leaders

1. Teach verbalization explicitly in onboarding

Don't assume engineers know to verbalize. The technique is often treated as folk wisdom — some learn it, some don't. Teach it in the first month. The ROI on 49% faster junior debugging is enormous for a practice that costs zero.

2. Use AI chat deliberately as a duck

The 184-engineer sample includes heavy AI-chat users. In our data, using Claude / ChatGPT / Copilot as a rubber duck is roughly equivalent to a physical duck for logic-flow bugs, with docs lookup as a bonus. Don't let anyone pretend AI tools replaced the duck technique. They are the duck technique, with a faster lookup.

3. Stop using the duck on performance and concurrency bugs

Race conditions and performance regressions need traces, profilers, and flamegraphs. Verbalization rarely pays off here (19% and 12% solved within 5 minutes, respectively), because the engineer explaining the race condition at their desk hasn't yet collected the data that would reveal it. If a bug is classified as performance or concurrency, skip the duck and pull observability data first. Related: our context-switching research shows that wrong-technique sessions end up as long context-switch tails.
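
One way to turn this rule into a team habit is a small lookup keyed on the bug label. The sketch below uses the solve rates from the bug-type table; the 25% cut-off, the label names, and the `first_step` helper are hypothetical choices for illustration, not something derived from our data.

```python
# Share of bugs solved within 5 minutes of starting to verbalize,
# copied from the bug-type table in this article.
SOLVED_WITHIN_5_MIN = {
    "off_by_one": 0.58,
    "null_ref": 0.51,
    "api_contract": 0.44,
    "env_config": 0.28,
    "race_condition": 0.19,
    "perf_regression": 0.12,
}

# Hypothetical cut-off: below this rate, collect data before talking.
DUCK_THRESHOLD = 0.25


def first_step(bug_class: str) -> str:
    """Suggest a starting technique for a labeled bug class."""
    rate = SOLVED_WITHIN_5_MIN.get(bug_class)
    if rate is None:
        return "classify the bug first"
    if rate < DUCK_THRESHOLD:
        return "pull traces or a profile"
    return "explain it out loud"
```

With this cut-off, `first_step("race_condition")` and `first_step("perf_regression")` point to observability data, while the logic-flow classes and environment/config bugs start with the duck.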

4. Measure time-to-fix by bug class, not overall

If your team reports average debug time, you're aggregating across bug classes that respond to different techniques. Break it down. PanDev Metrics' per-task time tracking links coding time to tasks, so the differential shows up once you label bugs by class.
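
A minimal sketch of the breakdown, assuming you can export one row per fixed bug with a class label and minutes-to-fix. The rows below are illustrative, not taken from our dataset, and `median_by_class` is a hypothetical helper rather than a PanDev Metrics API.

```python
from collections import defaultdict
from statistics import median

# (bug_class, minutes_to_fix): illustrative rows, not taken from the dataset.
fixes = [
    ("off_by_one", 12), ("off_by_one", 20), ("off_by_one", 16),
    ("perf_regression", 95), ("perf_regression", 140),
    ("race_condition", 180),
]


def median_by_class(rows):
    """Group minutes-to-fix by bug class and return the median of each group."""
    by_class = defaultdict(list)
    for bug_class, minutes in rows:
        by_class[bug_class].append(minutes)
    return {bug_class: median(times) for bug_class, times in by_class.items()}


overall = median(minutes for _, minutes in fixes)
per_class = median_by_class(fixes)
```

In this toy data the overall median sits between the classes and describes none of them, which is the problem with reporting a single number.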

Methodology

Each debugging session in our dataset is delimited by an IDE heartbeat sequence that begins with a test failure, a stacktrace paste, or an issue-label transition to "in progress" on a bug-typed task. The verbalization flag was set when at least one of the following held: a voice note timestamp overlapped the session, a Slack message was sent to a designated "debug-channel", or the engineer self-reported verbalizing in a weekly check-in. A session ends at the first successful test re-run on the same code path or at an issue-close event.
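
The delimiting rule can be sketched as a small state machine over a time-ordered event stream. The event names, the `Session` type, and the `delimit` function are hypothetical stand-ins for whatever the real pipeline uses, and the weekly self-report is left out because it is not an in-session event.

```python
from dataclasses import dataclass

# Hypothetical event kinds mirroring the rules described above.
START = {"test_failure", "stacktrace_paste", "issue_in_progress"}
END = {"test_pass", "issue_closed"}
VERBAL = {"voice_note", "debug_channel_message"}


@dataclass
class Session:
    start: int        # minutes since an arbitrary origin
    end: int
    verbalized: bool


def delimit(events):
    """Turn a time-ordered list of (minute, kind) events into sessions."""
    sessions, start, verbalized = [], None, False
    for minute, kind in events:
        if start is None:
            # Outside a session, only a start event matters.
            if kind in START:
                start, verbalized = minute, False
        elif kind in VERBAL:
            verbalized = True
        elif kind in END:
            sessions.append(Session(start, minute, verbalized))
            start = None
    return sessions


events = [
    (0, "test_failure"), (4, "voice_note"), (31, "test_pass"),
    (60, "stacktrace_paste"), (108, "issue_closed"),
]
```

Here `delimit(events)` yields one 31-minute verbalized session and one 48-minute silent session. A session with no end event is never emitted.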

Honest limit: we cannot distinguish a "real duck explanation" from "a terse chat-message that doesn't really unpack the problem." Our verbalization flag likely includes both, which would dilute the measured effect. If so, the 35% figure understates what full verbalization delivers, though we can't confirm that from a binary flag.

Second limit: we don't have blind-control data. We can't run an RCT. Our matched-difficulty comparison is the best naturalistic analysis available, not a causal proof.

Contrarian claim

Rubber duck debugging is usually framed as a quirky trick. It's not. For logic-flow bugs it is among the strongest solo techniques we measured, roughly on par with AI-chat debugging and far ahead of silent debugging. The usual framing gets it backwards: the duck isn't weird. Silent debugging is weird. Most professional problem-solving fields (medicine, aviation, law) externalize reasoning during complex diagnosis. Software engineering's cultural bias toward silent thinking is the anomaly, not the duck.

The practical implication: if your team has a "quiet hours" policy and engineers debug in pure silence, you're leaving time on the table. Build in a "talk it through" space — a dedicated Slack channel, a buddy rotation, or a literal shared room — and the team ships faster without adding capacity.