Sprint Retrospectives That Don't Waste Time: Data-Driven Framework

The average engineering retro runs 60 minutes, produces five sticky notes, and ships zero action items into the next sprint. The Scrum Alliance's 2023 practitioner survey put "retros feel performative" as the #1 complaint from senior engineers. That's not a meeting problem. It's a measurement problem. Teams debate feelings because nobody pulled the data before the call.

This article gives you a 30-minute retrospective that opens with numbers, ends with named owners, and works on any team between 5 and 25 engineers.

{/* truncate */}

The problem with "what went well / what didn't / what to improve"

That 3-column board encourages narrative, and narrative bends toward the loudest voice. A VP Engineering we spoke with at a 40-person SaaS team put it bluntly: "After the fifth retro, I realized we were solving whoever-complained-most, not whatever-actually-hurt-the-sprint."

Atlassian's 2024 Agile Practitioner Report found that 62% of teams never revisit action items from the previous retro. Items are written, agreed on, then forgotten by Wednesday. The rituals of agile outlive the discipline.

Three failure modes show up in every unproductive retro:

| Failure mode | What it looks like | What it actually costs |
|---|---|---|
| Feelings-first | Team vents; no data backs any claim | 45 minutes, zero decisions |
| Loudest-voice bias | One engineer dominates the "improve" column | Junior devs stop participating |
| No follow-through | Actions written but not tracked | Same issue re-raised 3 sprints later |

The fix is not a better Miro board. It's bringing the sprint's actual signal into the room before anyone opens their mouth.

The 30-minute framework

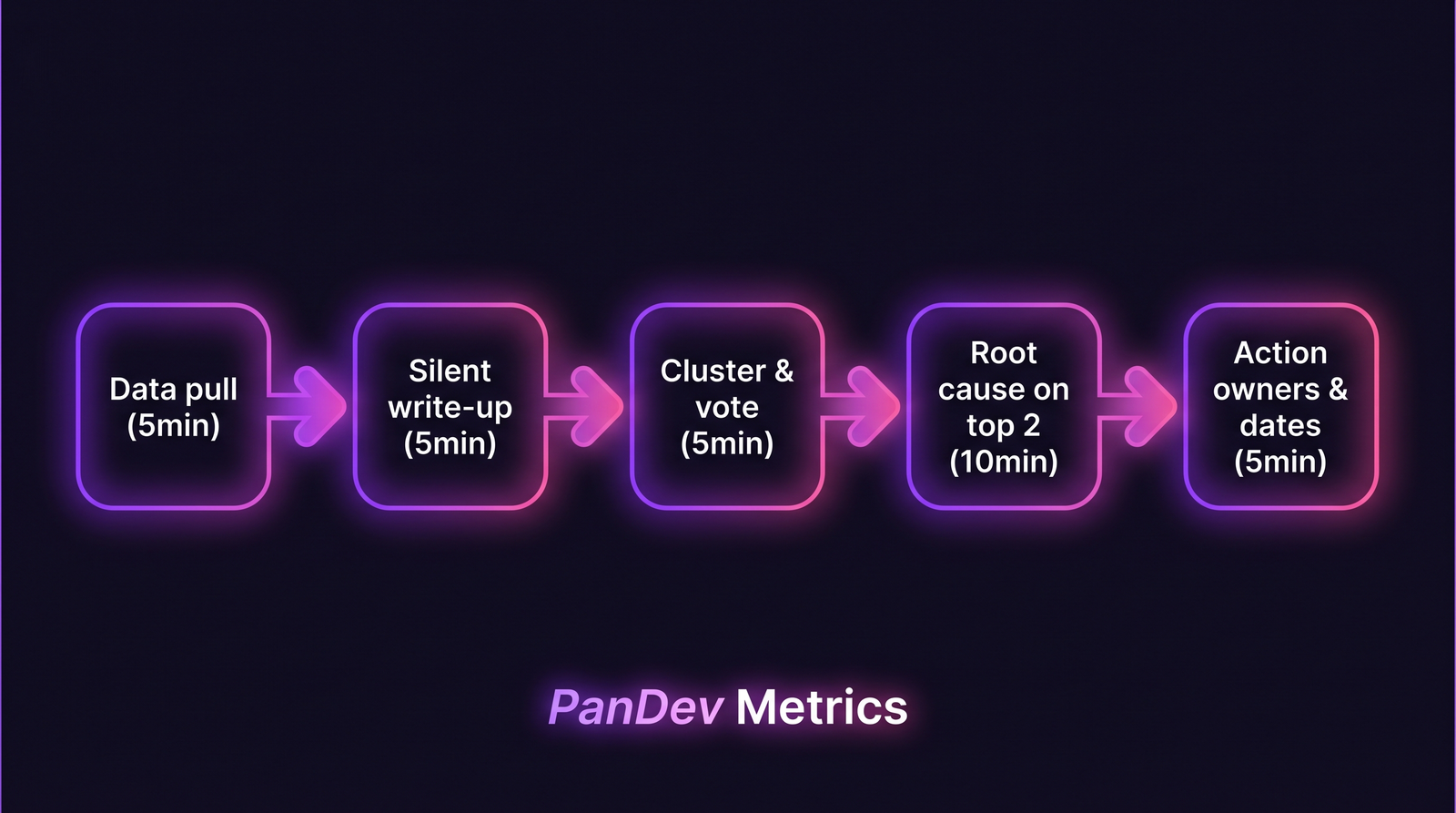

Five blocks, timed strictly. A meeting facilitator with a timer runs it. No slack.

Step 1. Data pull (5 min, before the meeting)

The facilitator drops four numbers into the retro doc 15 minutes before start:

- Planned vs delivered story points (or tickets)

- Cycle time median for the sprint (commit to merge)

- Incidents / rollbacks / hotfixes count

- Unplanned-work ratio (ad-hoc tickets that entered mid-sprint ÷ total)

If your team uses PanDev Metrics, the sprint report pulls those four automatically from IDE heartbeat data and Git events, so no one spends 20 minutes manually scraping Jira. If you don't have tooling, the scrum master spends 10 minutes in Jira + GitHub before the call. Either way, numbers on the screen before anyone talks.
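If you're doing the manual version, the arithmetic behind the four numbers is small enough to script. The sketch below is one way to do it, assuming you've already exported tickets and PR durations from your tracker; the `Ticket` fields and the `sprint_numbers` function are hypothetical names for illustration, not any tool's API. It also assumes "delivered" means planned work that got finished, which your team may define differently.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Ticket:
    points: int
    planned: bool    # True if the ticket was in the sprint at planning time
    delivered: bool  # True if the ticket was done by sprint end


def sprint_numbers(tickets, cycle_hours, incident_count):
    """Return the four retro numbers as a dict.

    cycle_hours: commit-to-merge durations in hours, one per merged PR.
    incident_count: incidents + rollbacks + hotfixes, counted by hand.
    """
    planned_points = sum(t.points for t in tickets if t.planned)
    # Assumption: only planned work counts toward "delivered".
    delivered_points = sum(t.points for t in tickets if t.planned and t.delivered)
    unplanned_tickets = sum(1 for t in tickets if not t.planned)
    return {
        "delivered_vs_planned": delivered_points / planned_points if planned_points else None,
        "cycle_time_median_h": median(cycle_hours) if cycle_hours else None,
        "incidents": incident_count,
        "unplanned_ratio": unplanned_tickets / len(tickets) if tickets else None,
    }


if __name__ == "__main__":
    tickets = [
        Ticket(5, planned=True, delivered=True),
        Ticket(3, planned=True, delivered=False),
        Ticket(2, planned=True, delivered=True),
        Ticket(1, planned=False, delivered=True),  # entered mid-sprint
    ]
    print(sprint_numbers(tickets, cycle_hours=[4, 30, 10], incident_count=2))
```

Paste the resulting dict into the top of the retro doc; the point is that the numbers exist before the conversation does.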

Step 2. Silent written reflection (5 min)

Everyone writes in the doc, muted, for 5 minutes. Two prompts:

- "One thing the data surprises me about."

- "One specific thing I'd change next sprint."

No "what went well" column. Engineers are bad at positive retrospection and good at specific complaints, so lean into the strength. A specific complaint is an actionable signal; a vague "team felt good" is noise.

Step 3. Cluster and dot-vote (5 min)

The facilitator clusters similar notes in real time. Everyone gets 3 dots. Only the top 2 clusters move forward. Ignore the rest; they'll resurface if they matter.

This is where you cut the typical 60-minute retro. Most teams try to address every sticky note. You're picking the 2 that actually show up in the numbers.
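If the retro runs in a shared doc rather than a board with built-in voting, the tally can be sketched in a few lines. This is an illustrative example, not a prescribed tool: `top_clusters` and the cluster names are hypothetical, and breaking ties alphabetically is an assumption (the facilitator may prefer to break ties by looking at the data).

```python
from collections import Counter


def top_clusters(votes, keep=2, dots_per_person=3):
    """Tally dot votes and return the `keep` clusters with the most dots.

    votes: dict mapping voter name -> list of cluster names they dotted.
    A voter may put more than one dot on the same cluster.
    """
    tally = Counter()
    for voter, dots in votes.items():
        if len(dots) > dots_per_person:
            raise ValueError(f"{voter} used {len(dots)} dots, limit is {dots_per_person}")
        tally.update(dots)
    # Sort by dot count (descending), then by name so ties break predictably.
    ranked = sorted(tally.items(), key=lambda item: (-item[1], item[0]))
    return [name for name, _ in ranked[:keep]]


if __name__ == "__main__":
    votes = {
        "ana": ["slow review", "slow review", "flaky CI"],
        "ben": ["slow review", "scope creep", "flaky CI"],
        "chi": ["flaky CI", "scope creep", "slow review"],
    }
    print(top_clusters(votes))
```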

Step 4. Root cause on the top 2 (10 min)

For each of the 2 winners, ask "why" three times. Not five. Five is a PowerPoint ritual; three reaches the cause without turning into therapy.

Example from a real retro we observed (anonymized Kazakh fintech, 12-person team):

- Symptom: "Cycle time doubled this sprint"

- Why 1: "PRs sat for 2 days before review"

- Why 2: "Two senior reviewers were on the incident response"

- Why 3: "Our on-call rotation has no backup reviewer coverage"

The action wrote itself: add a "backup reviewer" rotation slot. Not a workshop on "improving collaboration."

Step 5. Action owners and dates (5 min)

Each action gets a single named owner and a due date that falls inside the next sprint. If a proposed action can't get a named owner, it dies in the meeting. If it can't fit in one sprint, it's an epic, not a retro action; kick it to planning.

Write actions as @owner will ship X by sprint-end Friday. No "the team will explore". "Exploring" is how retro debt accumulates.
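The two rules above (single named owner, due date inside the next sprint) are mechanical enough to check before the meeting closes. Here is a minimal sketch, assuming actions are captured as simple records; `RetroAction`, `review_action`, and the dates are hypothetical and only illustrate the rule, not any tracker's data model.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class RetroAction:
    owner: Optional[str]
    deliverable: str
    due: date


def review_action(action, sprint_start, sprint_end):
    """Apply the owner and date rules; return the formatted action or a verdict."""
    owner = (action.owner or "").strip()
    # "The team" is not an owner: the action dies in the meeting.
    if not owner or owner.lower() in {"team", "the team"}:
        return "drop: no single named owner"
    # Anything that cannot land inside the next sprint goes to planning.
    if not (sprint_start <= action.due <= sprint_end):
        return "move to planning: due date falls outside the next sprint"
    return f"@{owner} will ship {action.deliverable} by {action.due.isoformat()}"


if __name__ == "__main__":
    start, end = date(2025, 3, 3), date(2025, 3, 14)  # example sprint dates
    print(review_action(RetroAction("dana", "a backup reviewer rotation slot", end), start, end))
    print(review_action(RetroAction("the team", "explore review tooling", end), start, end))
```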

Five timed blocks, 30 minutes total. Data arrives before feelings do.


The numbers to pull, with an interpretation cheat sheet

| Metric | Healthy signal | Worth a conversation | Red-flag territory |
|---|---|---|---|
| Delivered / planned | 80–100% | 60–80% | Below 60% two sprints in a row |
| Cycle time median | Stable or trending down | +20% sprint over sprint | Doubled |
| Incidents during sprint | 0–1 | 2–3 | 4+ (every other day) |
| Unplanned work ratio | Under 20% | 20–35% | Over 35% (you're not sprinting, you're firefighting) |

These aren't universal targets. They're conversation starters. A team with 40% unplanned work might have a broken prioritization loop, or might be a platform team that plans that way on purpose. The retro's job is to surface the gap between the number and the story.
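If you want the retro doc to flag each number automatically, the cheat sheet translates into a handful of threshold checks. The sketch below is one possible reading of the table, with the gaps filled by assumption: a single sprint below 60% delivery is treated as "worth a conversation", and a cycle-time increase under 20% is treated as stable. The function names are hypothetical, and the thresholds should be tuned to your team rather than taken as targets.

```python
HEALTHY = "healthy"
TALK = "worth a conversation"
RED = "red flag"


def rate_delivery(current, previous):
    """current/previous: delivered-to-planned ratios for the last two sprints."""
    if current >= 0.80:
        return HEALTHY
    if current < 0.60 and previous < 0.60:
        return RED  # below 60% two sprints in a row
    return TALK     # assumption: one sprint below 60% is only a conversation


def rate_cycle_time(current, previous):
    """current/previous: median cycle times in the same unit; previous must be > 0."""
    change = current / previous
    if change >= 2.0:
        return RED
    if change >= 1.2:
        return TALK
    return HEALTHY  # assumption: under +20% counts as stable


def rate_incidents(count):
    if count <= 1:
        return HEALTHY
    return TALK if count <= 3 else RED


def rate_unplanned(ratio):
    if ratio < 0.20:
        return HEALTHY
    return TALK if ratio <= 0.35 else RED


if __name__ == "__main__":
    print("delivery:  ", rate_delivery(0.55, 0.90))
    print("cycle time:", rate_cycle_time(30, 14))
    print("incidents: ", rate_incidents(2))
    print("unplanned: ", rate_unplanned(0.40))
```

A "red flag" here is a prompt for Step 4, not a verdict; the platform-team example above would trip the unplanned-work check every sprint by design.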

Common mistakes to avoid

| Mistake | Why it hurts | Fix |
|---|---|---|
| Running retros with no data | Loudest voice wins; you optimize for vibes | Pull the 4 numbers BEFORE the meeting |
| Trying to fix everything | 6 actions = 0 shipped | Max 2 actions per retro |
| Orphaned actions | "The team will look into…" | Named owner + sprint-end date |
| Retros weekly at sprint end | Everyone's tired; attention tanks | Mid-morning mid-week after sprint end |
| Skipping retros when sprint went well | You lose the learning signal | Good sprints have more teachable data than bad ones |

That fifth one surprises people. We've watched teams hold retros only after bad sprints, then wonder why their improvement curve plateaus. Good sprints are where you reverse-engineer what to protect.

The checklist (copy and use)

- Facilitator pulls 4 sprint numbers into retro doc, 15 min before call

- 5-min silent written reflection, data visible on screen

- Cluster + 3-dot vote, top 2 only

- 3-whys on each top-2 cluster, 10 min total

- Each action has named owner + due date inside next sprint

- Previous retro's actions are reviewed at the top of the retro, not the end

- Retro ends on time, no overflow

Reviewing the previous retro's actions at the top of the next retro, not the bottom, is the single change that fixes the "62% of teams never revisit action items" problem from the Atlassian data. People show up having done the homework because they know it gets called out first.

How to measure if the retro is working

Tracking whether a meeting works is meta, but it's the only way out of ritual theater. Watch these over 4–6 sprints:

- Action closure rate. The percentage of retro actions closed within the sprint they were assigned to. Target: >80%. Under 50% means you're generating commitments you can't keep.

- Issue recurrence rate. How often the same theme re-appears. If "code review is slow" shows up in 3 consecutive retros, the retro itself is broken, not the code review.

- Sprint-over-sprint cycle time. Slow but real improvement signal. If cycle time hasn't moved in 6 sprints, the retros aren't producing usable learning.
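The first two of these can be computed from a plain log of past retros. The following is a rough sketch under stated assumptions: actions are recorded as `(target_sprint, closed_sprint)` pairs with sprint numbers, themes are hand-labelled per retro, and both function names are hypothetical.

```python
def action_closure_rate(actions):
    """Share of actions closed by the end of the sprint they were assigned to.

    actions: list of (target_sprint, closed_sprint) pairs; closed_sprint is
    None while the action is still open.
    """
    if not actions:
        return None
    on_time = sum(
        1 for target, closed in actions
        if closed is not None and closed <= target
    )
    return on_time / len(actions)


def recurring_themes(retros, streak=3):
    """Return themes that appear in at least `streak` consecutive retros.

    retros: list of sets of theme labels, oldest retro first.
    """
    flagged = set()
    run_lengths = {}
    for themes in retros:
        # Rebuilding the dict drops any theme absent from this retro,
        # which resets its consecutive count.
        run_lengths = {t: run_lengths.get(t, 0) + 1 for t in themes}
        flagged.update(t for t, n in run_lengths.items() if n >= streak)
    return flagged


if __name__ == "__main__":
    actions = [(12, 12), (12, 13), (13, None), (13, 13)]
    print(action_closure_rate(actions))

    retros = [
        {"slow review", "flaky CI"},
        {"slow review"},
        {"slow review", "scope creep"},
        {"flaky CI"},
    ]
    print(recurring_themes(retros))
```

Theme labels need to be consistent across retros for the recurrence check to mean anything, so it helps if the facilitator reuses cluster names from earlier sprints.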

PanDev Metrics tracks cycle time and incident count as first-class metrics across sprints, which makes the Step 1 "pull the numbers" work take seconds. If you're doing it manually, budget 10 minutes of pre-meeting prep. It's the highest-ROI 10 minutes of the week.

When this framework doesn't fit

Two cases: very small teams and distributed async-only teams.

For a 2–3 person team, the whole ritual is overhead. Swap it for a weekly 15-minute sync on one number that matters and one action. The formal framework adds structure you don't need at that scale.

For async-only teams across 6+ timezones, the 30-minute synchronous block is the wrong shape. Run the same 5 steps over 24 hours on a shared doc, with the facilitator driving each block's deadline. Less fun, same output.

The hardest part isn't the meeting

Writing actions is easy. Closing them is where teams die. The teams we've seen get retros right share one habit: retro actions are treated as real tickets in the tracker, with the same scrutiny as customer-facing work. The moment they become "nice-to-have improvement items in a Notion page," they're dead.