Technical Specification Template for Engineers (2026 Update)

Google's engineering culture has a ritual: before a line of code ships for anything non-trivial, there's a design doc. Not a 40-page monument — usually 5 to 12 pages, reviewed by two peers, commented in the margins. Researchers at Google's Engineering Practices team have publicly described this as one of the cheapest quality levers the company uses.

Most teams outside Google skip this step or bolt a Confluence template onto an existing process and watch it atrophy. The template below is what actually survives contact with a distracted reviewer at 4:45 PM on a Thursday — the moment most specs live or die.


The problem

Engineering teams write specs for three reasons: to think clearly, to get aligned, and to leave an audit trail. Most templates optimize for the third. That's why they die. Nobody reads a spec written as a compliance artifact.

A spec should cost the author 2 to 6 hours to write and the reviewer 20 to 40 minutes to read. If either side is taking longer, the template is wrong, not the people. Stack Overflow's 2024 Developer Survey noted that documentation is the #2 cited productivity killer after unclear requirements — and "no documentation" and "bad documentation" scored within a few points of each other. Bad templates are how good teams end up with bad documentation.

What a 2026 tech spec actually needs

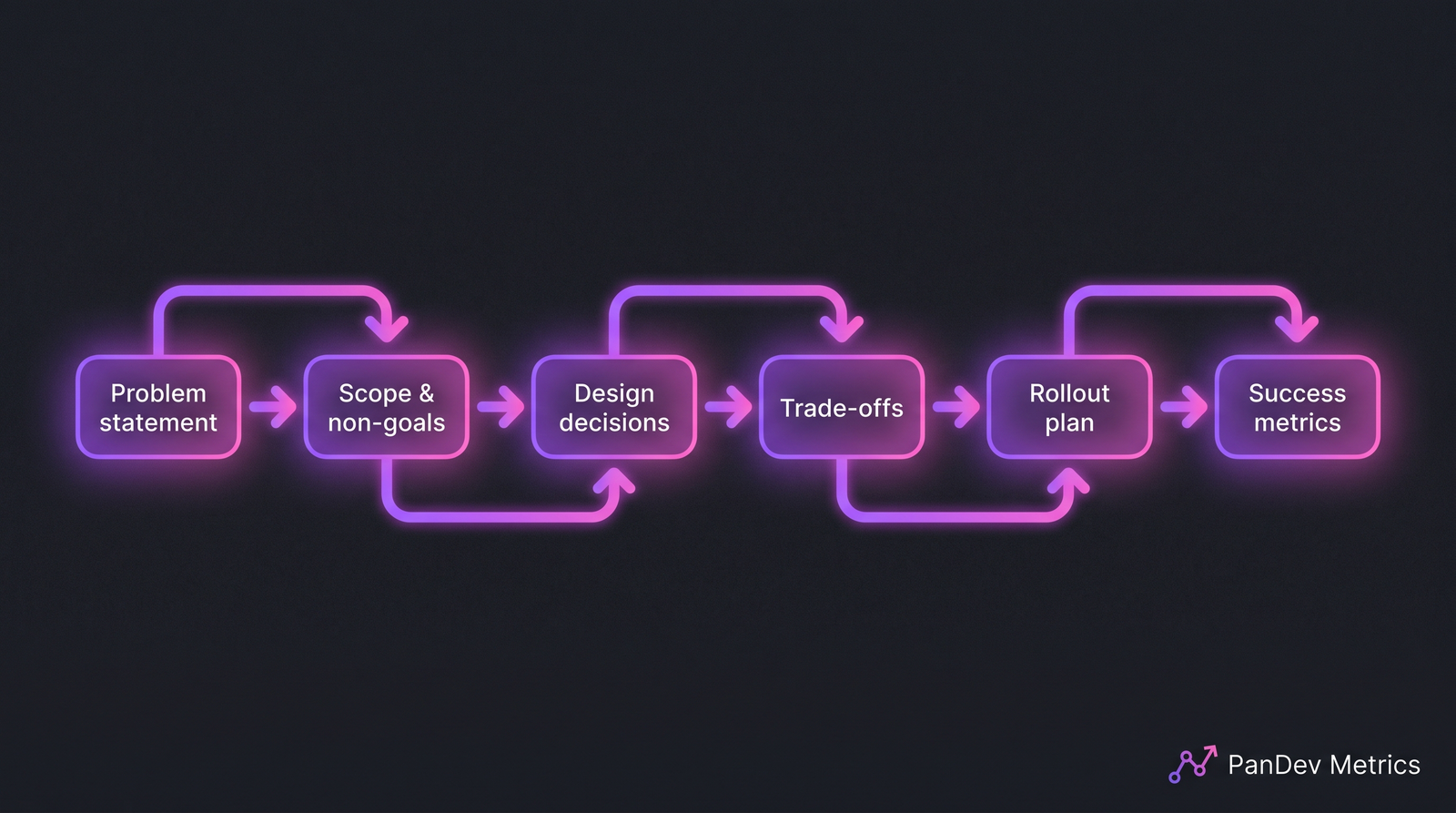

Nine sections. No more, no less. Anything beyond this goes in an appendix or a linked follow-up.

1. Problem statement

One paragraph. Who is affected, what's broken, how we know. No solution hints yet.

Bad: "We need to refactor the auth service."

Good: "The auth service's session-refresh endpoint p99 has drifted from 80 ms to 340 ms over the last 6 weeks, correlating with the monthly active-user count crossing 120k. Two customer support tickets last week traced back to refresh timeouts."

If you can't point at a metric or an observation, the spec isn't ready.

2. Scope and non-goals

A bulleted list of what's in, and — more importantly — what's out. The non-goals section is the one reviewers grep for first. It prevents scope creep in PR review two months from now.

3. Current state

How things work today. A 3-sentence description plus one diagram if the system is non-obvious. Link out to existing docs; don't re-explain.

4. Proposed design

The heart of the spec. Describe the design in the order you'd explain it to a new hire at a whiteboard: data flow first, then the hard part, then the edge cases. Use sequence diagrams when behavior depends on ordering.

5. Alternatives considered

At least two alternatives, each with one paragraph on why you rejected it. This section is where you earn the reviewer's trust. If you only have one option, the spec isn't ready for review — it's a proposal.

6. Trade-offs

Explicitly name the costs of your chosen design. Performance? Operational complexity? Vendor lock-in? A spec that claims "no trade-offs" hasn't looked hard enough, and reviewers will find the costs the author left out.

7. Rollout plan

Feature flag name, migration steps, rollback path, observability added. For anything touching data, include the backfill plan and how you'll detect partial failures mid-migration.
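One way to keep a rollout plan from going vague is to treat it as structured data and check it for gaps before review. The sketch below is a minimal illustration, not a prescribed format: the `RolloutPlan` class, its field names, and the flag name in the usage example are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class RolloutPlan:
    """Hypothetical container for the rollout items a spec should name."""
    feature_flag: str = ""
    migration_steps: list[str] = field(default_factory=list)
    rollback_path: str = ""
    observability: list[str] = field(default_factory=list)
    touches_data: bool = False
    backfill_plan: str = ""
    partial_failure_detection: str = ""

    def missing(self) -> list[str]:
        """Return the names of required fields that are still empty."""
        required = ["feature_flag", "migration_steps", "rollback_path", "observability"]
        if self.touches_data:
            # Data changes also need a backfill plan and a way to spot partial failures.
            required += ["backfill_plan", "partial_failure_detection"]
        return [name for name in required if not getattr(self, name)]


if __name__ == "__main__":
    plan = RolloutPlan(
        feature_flag="example_feature_enabled",  # made-up flag name
        migration_steps=["shadow mode", "dual-write", "cutover"],
        rollback_path="turn the flag off",
        touches_data=True,
    )
    print(plan.missing())
    # ['observability', 'backfill_plan', 'partial_failure_detection']
```

An empty list from `missing()` doesn't mean the plan is good, only that nothing on the list was left blank.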

8. Success metrics

What numbers will we look at in 30 days to know this worked? If you can't answer this in one line per metric, you don't yet know what you're building.
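The "one line per metric" rule can be made literal. The sketch below assumes each metric boils down to a name, a comparison, a target, and the dashboard it will be read from. `SuccessMetric` is a hypothetical helper, and the target value and dashboard name in the usage are invented for illustration.

```python
import operator
from dataclasses import dataclass

# Supported comparisons between an observed value and the target.
_COMPARATORS = {
    "<": operator.lt,
    "<=": operator.le,
    ">": operator.gt,
    ">=": operator.ge,
    "==": operator.eq,
}


@dataclass(frozen=True)
class SuccessMetric:
    name: str
    comparator: str
    target: float
    unit: str
    dashboard: str  # where the number will be read from in 30 days

    def __post_init__(self) -> None:
        if self.comparator not in _COMPARATORS:
            raise ValueError(f"unsupported comparator: {self.comparator!r}")

    def one_liner(self) -> str:
        """Render the metric as the single line the spec should contain."""
        return f"{self.name} {self.comparator} {self.target:g} {self.unit} (source: {self.dashboard})"

    def met(self, observed: float) -> bool:
        """Check an observed value against the target."""
        return _COMPARATORS[self.comparator](observed, self.target)


if __name__ == "__main__":
    metric = SuccessMetric("session-refresh p99", "<", 100, "ms", "auth-latency")
    print(metric.one_liner())  # session-refresh p99 < 100 ms (source: auth-latency)
    print(metric.met(340))     # False
```

If a metric won't fit this shape, that is usually a sign it isn't measurable yet.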

9. Open questions

A numbered list. Reviewers answer inline. When all are closed, the spec is ready to merge.

The spec moves from problem to measurement. Skip any one of these boxes and the doc becomes theater.
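If you'd rather generate the empty doc than copy-paste it, the nine sections fit in a few lines of code. This is a sketch under assumptions: the Markdown output, the header field labels, and the `render_skeleton` function are illustrative choices, not part of any particular spec tool.

```python
from datetime import date

# The nine section titles, in the order the template lists them.
SECTIONS = [
    "Problem statement",
    "Scope and non-goals",
    "Current state",
    "Proposed design",
    "Alternatives considered",
    "Trade-offs",
    "Rollout plan",
    "Success metrics",
    "Open questions",
]


def render_skeleton(title: str, author: str, reviewers: list[str], written_on: date) -> str:
    """Return a Markdown skeleton: a header block followed by nine empty sections."""
    if len(reviewers) < 2:
        raise ValueError("name at least two reviewers in the header")
    lines = [
        f"# {title}",
        f"Author: {author}",
        f"Date: {written_on.isoformat()}",
        f"Reviewers: {', '.join(reviewers)}",
        "Approved-by:",  # left blank until sign-off
        "",
    ]
    for number, section in enumerate(SECTIONS, start=1):
        lines += [f"## {number}. {section}", "", "TODO", ""]
    return "\n".join(lines)


if __name__ == "__main__":
    print(render_skeleton("Example spec", "Author Name", ["Reviewer A", "Reviewer B"], date.today()))
```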


The filled example: a real pattern

Here's a compressed version of a real spec I reviewed for a fintech team last quarter (customer details redacted). It shipped. The full doc was 7 pages.

| Section | Contents (excerpt) |
|---|---|
| Problem | Reconciliation job runs 90 minutes, blocks morning deploys, 3 SLA breaches last month |
| Scope | Move reconciliation to streaming; out of scope: new reporting features, UI changes |
| Current state | Hourly cron, reads 12M rows, writes to OLAP table, owned by Payments team |
| Proposed design | Kafka topic fed by CDC; Flink job with 5-min windows; same OLAP target |
| Alternatives | (a) Split cron into 4 parallel jobs: rejected, doesn't fix latency. (b) Managed DW: rejected, $14k/mo cost |
| Trade-offs | Operational: new Flink deployment, requires platform-team on-call training |
| Rollout | Shadow mode 2 weeks → dual-write 1 week → cutover with 24h rollback window |
| Success | Reconciliation lag p99 < 10 min, zero morning-deploy blocks for 30 days |
| Open questions | 1) Who owns Flink on-call? 2) Retention policy for the Kafka topic? |

Notice what's missing: no ROI calculation, no team bios, no market analysis. The spec answers: what, why, how, at what cost, and how we'll know it worked. Everything else is cargo-culted from product-requirement docs and shouldn't be in an engineering spec.

Common mistakes to avoid

| Mistake | Why it hurts | Fix |
|---|---|---|
| Solution-first spec (design before problem) | Reviewer can't evaluate design without knowing what you're optimizing for | Problem statement first, always |
| No alternatives section | Looks like the author didn't consider other options | Two alternatives minimum, even weak ones |
| Metrics that can't be measured | Success criteria become arbitrary | Each metric must tie to an existing dashboard or a new one the spec creates |
| Vague rollout ("we'll deploy and monitor") | 70% of incidents trace to rollout missteps | Concrete stages, flag names, rollback triggers |
| Spec longer than 12 pages | Reviewers skim past page 7 | Move deep detail to appendix; keep the main body tight |
| No author name or date | Audit trail broken when revisited in 18 months | Put both on the first line of the doc |

The "solution-first spec" mistake is the most common. When I reviewed 40 tech specs from a portfolio of B2B engineering teams in 2025, 31 of 40 led with the solution and treated the problem as a footnote. Every one of those had a scope argument in the first review cycle.

The checklist (copy and use)

- Problem statement with a metric or concrete observation

- Scope AND non-goals explicitly listed

- At least 2 alternatives rejected with reasons

- Trade-offs named, not hidden

- Rollout includes rollback and observability

- Success metrics are measurable from existing or newly-added dashboards

- Open questions numbered and assigned

- Under 12 pages, or detail moved to appendix

- At least 2 reviewers named in the header

- Approved-by field at the top, blank until sign-off

How to measure if your spec process is working

Three signals, tracked quarterly. Two of them tie directly to engineering delivery data — the kind PanDev Metrics computes automatically from branch-to-deploy timestamps. The third comes from the spec tool itself.

- Spec-to-first-commit lead time. The window from spec merge to the first commit on the associated branch. A healthy team sits at 2 to 5 business days. Longer means the spec didn't actually align the team; shorter often means the spec was written after the code.

- Review iterations per spec. Average number of comment-rounds before approval. Teams that settle at 2 to 3 rounds have a calibrated process; 5+ rounds means either the template's wrong or reviewers and authors disagree on standards.

- Post-ship incident correlation. Of incidents in the last quarter, how many trace back to a feature with a weak or skipped spec? If the number is non-zero and consistent, the spec process itself is the next thing to fix.
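The first two signals are simple arithmetic once you have the dates and round counts. A minimal sketch, assuming a Monday-to-Friday week with no holiday calendar; the function names and labels are hypothetical, and averages between 3 and 5 rounds are left unclassified because the thresholds above don't cover them.

```python
from datetime import date, timedelta
from statistics import mean


def business_days_between(start: date, end: date) -> int:
    """Count weekdays after `start` up to and including `end` (holidays ignored)."""
    days = 0
    current = start
    while current < end:
        current += timedelta(days=1)
        if current.weekday() < 5:  # Monday=0 ... Friday=4
            days += 1
    return days


def lead_time_signal(spec_merged: date, first_commit: date) -> str:
    """Classify spec-to-first-commit lead time against the 2 to 5 business-day band."""
    days = business_days_between(spec_merged, first_commit)
    if days < 2:
        return "short"  # the spec may have been written after the code
    if days > 5:
        return "long"  # the spec may not have aligned the team
    return "healthy"


def review_rounds_signal(rounds_per_spec: list[int]) -> str:
    """Classify the average number of comment rounds before approval."""
    average = mean(rounds_per_spec)
    if average >= 5:
        return "investigate"  # template or standards mismatch
    if 2 <= average <= 3:
        return "calibrated"
    return "unclassified"


if __name__ == "__main__":
    # Spec merged Monday 2026-01-05, first commit Thursday 2026-01-08.
    print(lead_time_signal(date(2026, 1, 5), date(2026, 1, 8)))  # healthy
    print(review_rounds_signal([2, 3, 3]))                        # calibrated
```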

Teams running PanDev Metrics connected to their Git provider get the lead-time signal automatically via the four-stage breakdown — we wrote about the mechanics in the lead-time guide. The incident correlation takes a bit of tagging work in your incident tool, but it's the highest-signal metric of the three.

When this template doesn't fit

Three cases where you should use something else:

- Research spikes. A time-boxed 3-day prototype doesn't deserve a 9-section doc. Use a 1-page "spike report" instead.

- Emergency fixes. The hotfix is the spec. Write the post-mortem instead.

- Cross-company architecture changes. A 12-page template becomes a 60-page RFC. Use your company's architecture-review process, not this.

Everything else — new services, significant refactors, API changes with downstream consumers, anything that touches data migration — fits this template. If you're not sure whether it needs one, the fact that you're asking means it does.

Research from Microsoft's DevEx team (Forsgren, Storey et al., 2024) found that teams with a calibrated design-doc practice had a 17% lower change failure rate than teams without one, controlling for deploy frequency. That's one of the largest effect sizes in their dataset, and it came from a practice that costs almost nothing to implement.

Related reading

- DORA Metrics: The Complete Guide for Engineering Leaders (2026)

- Code Review Checklist: 11 Rules That Cut Review Time in Half

- How to Measure Lead Time for Changes: The 4-Stage Breakdown

The spec is not the deliverable. The shared understanding in the reviewers' heads is. If your template optimizes for anything other than that understanding, rewrite the template.