Brooks's Law in 2026: Does AI Break the Mythical Man-Month?

Frederick Brooks published The Mythical Man-Month in 1975. His core claim, "adding manpower to a late software project makes it later", survived five decades of methodology fashion: waterfall, agile, DevOps. In 2026, AI coding assistants have lifted individual engineer throughput by 30-55% in controlled studies (GitHub/Microsoft Research, 2024-2025). The natural question: did AI finally break Brooks's Law?

Short answer: no. Slightly longer answer: AI accelerated the part of engineering work that was never the bottleneck, and barely touched the part that actually is.

{/* truncate */}

What Brooks Actually Claimed

Brooks's Law is one sentence; the reasoning behind it has two parts.

First, ramp-up cost. A new engineer doesn't ship code on day one. They consume the time of existing engineers (questions, code review, pairing) before they contribute net-positive output. On a late project, this drag arrives exactly when the team can least afford it.

Second, communication overhead scales quadratically. The number of unique pairwise channels in a team of n people is n × (n-1) / 2. Brooks observed that as n grows, coordination overhead grows faster than capacity.
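The formula is the binomial coefficient "n choose 2". A one-line difference shows why each newcomer adds exactly n channels even though the total grows quadratically. C(n) is notation introduced here for the channel count:

```latex
\begin{aligned}
C(n) &= \binom{n}{2} = \frac{n(n-1)}{2} \\
C(n+1) - C(n) &= \frac{(n+1)\,n}{2} - \frac{n(n-1)}{2}
  = \frac{n\,\bigl[(n+1) - (n-1)\bigr]}{2} = n
\end{aligned}
```

Each new person opens one channel to every existing teammate, so a 10-person team goes from C(10) = 45 to C(11) = 55.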

The two compound. Add a person to a late 10-person team, and you import a ramp-up cost while simultaneously raising the communication channel count by 10, from 45 to 55. The project gets slower.
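Here is a deliberately crude toy model showing how ramp-up and channel overhead compound. Every parameter is a hypothetical assumption for illustration, not a measurement. Each engineer produces 1.0 unit of work per week. Each pairwise channel costs 0.01 units of team capacity per week. A newcomer produces nothing for four weeks, and each ramping newcomer costs half a veteran's week of mentoring.

```python
def pairwise_channels(n: int) -> int:
    """Unique pairwise communication channels in a full-mesh team of n people."""
    return n * (n - 1) // 2


def weeks_to_finish(
    remaining_work: float,
    team: int,
    added: int = 0,
    ramp_weeks: int = 4,         # hypothetical: weeks before a newcomer produces anything
    mentor_cost: float = 0.5,    # hypothetical: veteran capacity lost per ramping newcomer per week
    channel_cost: float = 0.01,  # hypothetical: capacity lost per pairwise channel per week
) -> float:
    """Toy model: calendar weeks needed to finish `remaining_work` units of work.

    A productive engineer delivers 1.0 unit per week. Newcomers join on week 0,
    add communication channels immediately, and only produce after ramp_weeks.
    """
    overhead = channel_cost * pairwise_channels(team + added)
    done, week = 0.0, 0
    while True:
        ramping = week < ramp_weeks
        productive = team if ramping else team + added
        drag = added * mentor_cost if ramping else 0.0
        rate = productive - drag - overhead
        if rate <= 0:
            raise ValueError("no net progress under these parameters")
        if done + rate >= remaining_work:
            # The work finishes partway through this week.
            return week + (remaining_work - done) / rate
        done += rate
        week += 1


# Hypothetical scenarios: 10 engineers with about 4 or about 20 weeks of work left.
for label, work in (("late", 40.0), ("early", 200.0)):
    base = weeks_to_finish(work, team=10)
    more = weeks_to_finish(work, team=10, added=2)
    print(f"{label}: {base:.1f} weeks with 10 people, {more:.1f} weeks with 12")
```

With these made-up numbers, adding two people stretches the late project from about 4.2 to about 4.6 weeks. The same hires shorten the early project from about 20.9 to about 18.7 weeks. That dependence on timing is the point Brooks made about *late* projects. The specific figures mean nothing outside the model.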

The Math of Communication Overhead

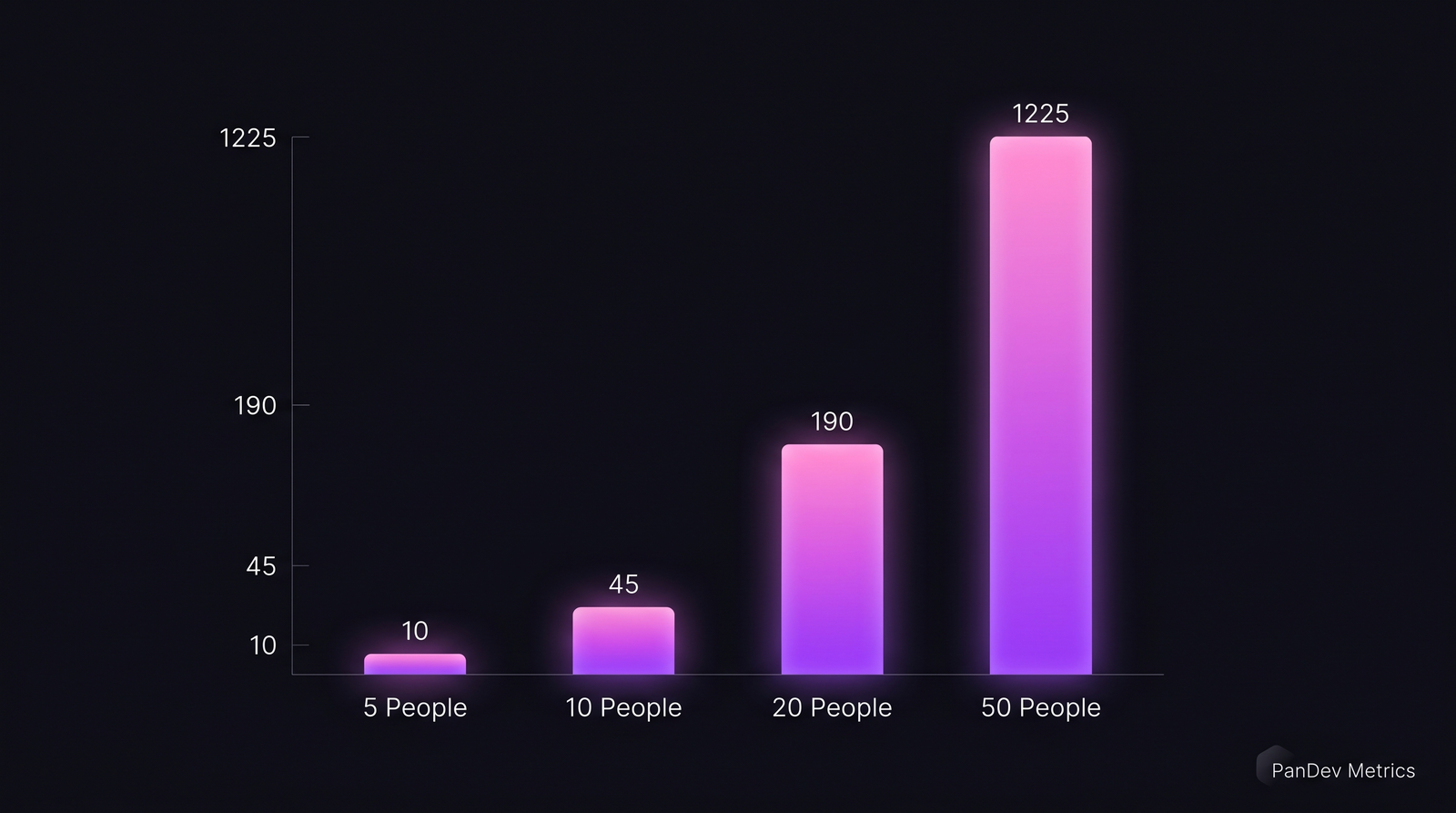

This is the table every engineering leader has seen and forgotten:

| Team size | Pairwise channels | Channels added by +1 person |
|---|---|---|
| 5 | 10 | 5 |
| 10 | 45 | 10 |
| 20 | 190 | 20 |
| 50 | 1,225 | 50 |
| 100 | 4,950 | 100 |

Going from 20 to 50 people adds 1,035 new pairwise channels: a 6.4x increase in coordination surface for a 2.5x increase in headcount.
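The table and the 20-to-50 comparison can be reproduced in a few lines. The helper names are chosen here for illustration and are not an established API.

```python
def pairwise_channels(n: int) -> int:
    """Unique pairwise channels in a full-mesh team of n people: n(n-1)/2."""
    return n * (n - 1) // 2


def channels_added_by_one(n: int) -> int:
    """Channels created when one person joins an n-person team."""
    return pairwise_channels(n + 1) - pairwise_channels(n)


for n in (5, 10, 20, 50, 100):
    print(f"{n:>3} people: {pairwise_channels(n):>5,} channels, +{channels_added_by_one(n)} for one more")

added = pairwise_channels(50) - pairwise_channels(20)
growth = pairwise_channels(50) / pairwise_channels(20)
print(f"20 -> 50 people: +{added:,} channels, {growth:.1f}x the coordination surface for 2.5x the headcount")
```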


Real teams don't operate on full mesh. They cluster into squads, tribes, guilds. But the underlying gravity still pulls. Conway's Law turns this from a thought experiment into an architectural constraint: org structure leaks into system design, and a team that can't coordinate cleanly ships code that doesn't compose cleanly either.

What AI Actually Changed

The productivity gains from AI assistants are real, and they're well-documented across multiple credible sources.

GitHub Research (2024) ran a controlled study with 95 professional developers writing an HTTP server in JavaScript. Copilot users completed the task 55.8% faster. Microsoft's productivity study across 4,867 developers at Accenture, a large consumer SaaS, and ANZ Bank measured a 26% increase in completed tasks per week. Stanford and MIT economists working with Mercado Libre reported a 30-40% lift in pull-request volume after Copilot rollout.

Different methodologies, different orgs, different programming languages, same direction. AI demonstrably accelerates the slice of engineering work that involves an individual engineer typing code into an editor.

That's exactly the slice that wasn't the bottleneck.

What AI Did Not Change

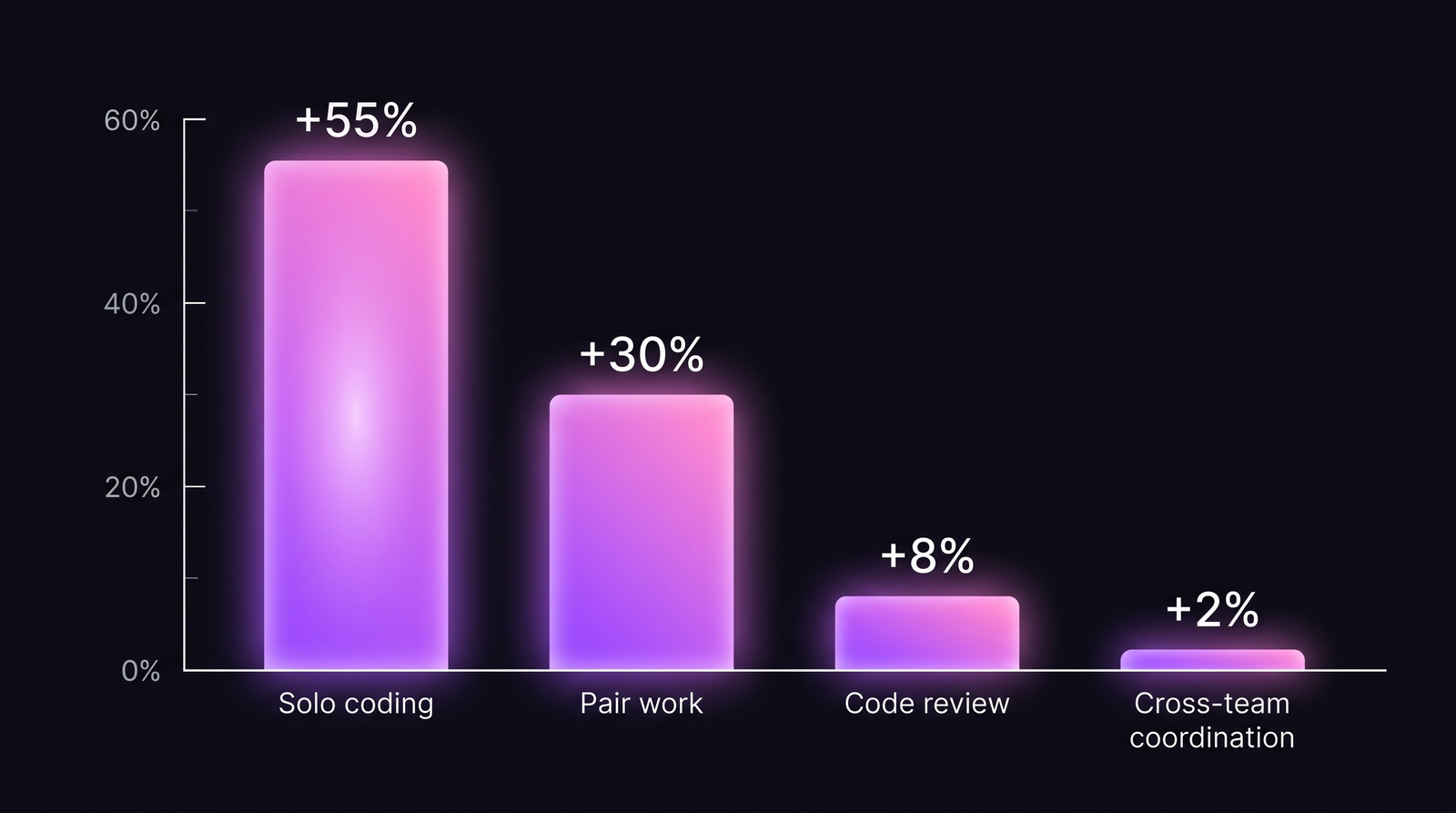

Five categories of engineering work, and how much AI assistants have helped with each. Outside solo and paired coding, the measured impact is small or absent:

| Activity | AI impact (2024-2025 research) | Why the impact differs |
|---|---|---|
| Solo coding (greenfield) | +30-55% | Well-defined task, narrow context, AI excels |
| Pair programming | +20-30% | Some boilerplate accelerated, talk-time unchanged |
| Code review (multi-reviewer) | +5-10% | Reading and judgment dominate, not typing |
| Cross-team architectural decisions | ~0% | Requires context AI can't see (politics, history, constraints) |
| Onboarding a new engineer to an existing codebase | +5-15% | Doc generation helps; tacit knowledge transfer doesn't |

The productivity lift concentrates in solo, well-scoped coding. Cross-team coordination, the actual bottleneck in late projects, barely moves.


The DORA 2024 State of DevOps Report points the same way: in teams adopting AI coding tools, individual throughput rose, but delivery stability and team-level throughput showed mixed signals. Some teams shipped slightly slower because increased PR volume overwhelmed code review capacity downstream.
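A deterministic fluid model of a review queue shows the mechanism. It is a deliberate simplification, not a model taken from the DORA report. The rates are hypothetical: 20 PRs per week before AI and 26 after (a 30% lift, within the range of the figures cited earlier), against reviewers who can clear 24 per week.

```python
def review_backlog(arrivals_per_week: float, reviews_per_week: float, weeks: int) -> list[float]:
    """Deterministic fluid model of a review queue.

    Each week new PRs arrive, then reviewers clear up to `reviews_per_week`
    of whatever is waiting. Returns the backlog at the end of each week.
    """
    backlog = 0.0
    history = []
    for _ in range(weeks):
        backlog += arrivals_per_week
        backlog -= min(backlog, reviews_per_week)
        history.append(backlog)
    return history


capacity = 24  # hypothetical review capacity, PRs per week
before = review_backlog(arrivals_per_week=20, reviews_per_week=capacity, weeks=12)
after = review_backlog(arrivals_per_week=26, reviews_per_week=capacity, weeks=12)
print(f"backlog after 12 weeks: {before[-1]:.0f} PRs before AI, {after[-1]:.0f} after")
# A PR joining the back of the queue waits roughly backlog / capacity weeks.
print(f"wait for a newly opened PR: ~{after[-1] / capacity:.1f} weeks")
```

Before AI, the queue empties every week. After AI, it grows by two PRs a week with no end in sight, so a PR opened after twelve weeks waits about a week before review starts. Real arrivals are bursty, and variability builds waits even below full utilization, so if anything this sketch understates the effect.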

The Late-Project Bottleneck Isn't Coding

Across ten years of working with engineering teams, we've seen the same pattern in late projects again and again. The schedule didn't slip because engineers couldn't type fast enough. It slipped because:

- A dependency on another team materialized two months late

- A product decision was reversed three times mid-build

- An undocumented integration constraint surfaced in week 8

- A senior engineer left and took a critical mental model with them

- A regulatory review got queued behind 11 other reviews

DORA, Stripe, and McKinsey research converges on a similar split: in projects that miss deadlines, 60-70% of the lost time goes to coordination, decision-making, rework from changed requirements, and review queues. Not to writing code. McKinsey's 2024 "Developer Velocity" follow-up estimated developers spend roughly 30-40% of their time actually writing or modifying code.

AI compresses the 30-40%. It does not compress the 60-70%.
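Amdahl's law makes the ceiling explicit. It is borrowed here from parallel computing as a framing and is not taken from the studies above. Assume coding is a fraction p of project time and AI makes that fraction s times faster, while the rest runs at its old speed:

```python
import math


def project_speedup(coding_share: float, coding_speedup: float) -> float:
    """Amdahl's law: overall speedup when only `coding_share` of project time gets faster."""
    if not 0.0 <= coding_share <= 1.0:
        raise ValueError("coding_share must be between 0 and 1")
    if coding_speedup <= 0:
        raise ValueError("coding_speedup must be positive")
    # The untouched share (coordination, decisions, review queues) keeps its old duration.
    return 1.0 / ((1.0 - coding_share) + coding_share / coding_speedup)


for p in (0.3, 0.4):  # the 30-40% coding share cited above
    for s in (1.5, 2.0, math.inf):
        s_label = "unlimited" if math.isinf(s) else f"{s:g}x"
        print(f"coding share {p:.0%}, coding speedup {s_label}: project {project_speedup(p, s):.2f}x faster")
```

Even an unlimited coding speedup caps the project at about 1.4x (30% coding share) to 1.7x (40% coding share), because the remaining 60-70% sets the ceiling.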

When AI Partially Sidesteps Brooks

Brooks's Law is weaker in conditions where coordination cost is structurally low to begin with.

Greenfield projects with clear modular boundaries. A new person joins a microservice with strict APIs and ships value in week one. AI accelerates typing; architecture removes most of the need to coordinate.

Async-first cultures with strong written documentation. Channels exist, but the bandwidth-per-channel is high because everything is written down. New engineers self-onboard from docs while AI fills in code-level questions.

Well-tested codebases. AI-generated changes get validated by the test suite before they touch a human reviewer. Review queues stay short.

Teams that cap coordination surface. Squads of 6-8 people, clear ownership boundaries, no cross-squad PR reviews unless an interface changes. Brooks's quadratic growth stays close to linear because the team refuses to operate as a full mesh.
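Here is a rough sketch of why squads cap the coordination surface. The structure is a simplifying assumption introduced for illustration: everyone talks freely inside their own squad, and squads coordinate only through one lead each, with the leads forming a full mesh among themselves.

```python
import math


def pairwise_channels(n: int) -> int:
    """Full-mesh channels among n people."""
    return n * (n - 1) // 2


def squad_channels(headcount: int, squad_size: int) -> int:
    """Channels when people talk freely inside a squad and squads talk only via leads.

    Assumes headcount is split into full squads plus one smaller remainder squad.
    """
    squads = math.ceil(headcount / squad_size)
    full, remainder = divmod(headcount, squad_size)
    intra = full * pairwise_channels(squad_size) + pairwise_channels(remainder)
    inter = pairwise_channels(squads)  # one lead per squad, leads in full mesh
    return intra + inter


for n in (20, 50, 100):
    print(f"{n:>3} people: full mesh {pairwise_channels(n):>5,}, squads of 7 {squad_channels(n, 7):>4}")
```

Intra-squad channels grow linearly with headcount, about (k-1)/2 per person for squads of size k. Only the leads' mesh stays quadratic, and it grows with the number of squads rather than the number of people. At 100 people, this gives 400 channels instead of 4,950.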

In these conditions, adding people to a late project is less catastrophic than Brooks predicted. AI helps the new person ramp up on the parts AI can read.

When Brooks Still Wins, Hard

Conversely, AI does not save you when:

- The late project is a monolith with no test coverage, so new engineers can't ship safely

- The bottleneck is a single domain expert whose knowledge isn't written down

- The team has synchronous decision-making rituals (large standups, design review committees)

- The integration surface is regulated (fintech, medtech, telecom), so review queues are external and immovable

- The codebase has deep tacit conventions that AI can mimic syntactically but not semantically

Our work with regulated-industry customers consistently shows this pattern. Adding three engineers to a late fintech project doesn't speed it up. It adds three more code reviews to the compliance officer's already-saturated queue.

What Real 2026 Data Suggests

We track IDE heartbeat telemetry across 100+ B2B companies on PanDev Metrics: coding time, focus blocks, project-switching rate, and per-engineer activity patterns. Across our dataset, two things are visible.

First, AI adoption (measured as active time inside Cursor, Copilot Chat, or AI-assisted IDE flows) increased the share of "active coding" time for individual developers by an average of 18% between Q3 2024 and Q1 2026. Engineers using AI assistants log more focused coding hours per day.

Second, in teams above 20 engineers, the ratio of coding time to coordination time (meetings, review wait, async threads) barely moved. Individual coding became more productive, but the cap on team output stayed where Brooks predicted it would.

The honest caveat: we don't yet have rigorous longitudinal data showing the second-order effect on team coordination overhead. Our current window, Q3 2024 through Q1 2026, isn't long enough. The hypothesis that AI shifts the cost from coding to the review queue is plausible and consistent with DORA 2024, but we'd need 24-36 month traces to confirm it.

PanDev Metrics surfaces this split directly in the CTO Dashboard: the time-allocation view shows how much of each engineer's tracked day went to active coding versus context-switching, review wait, and idle periods. For leaders who want to test the Brooks-vs-AI hypothesis on their own team, that's where the signal lives.

The Contrarian Take

Here's what most "AI broke Brooks's Law" articles miss: AI assistants made the coding-time problem less interesting, which means coordination overhead now dominates an even larger share of total project time than it did in 1975.

If coding used to be 40% of project time and coordination 60%, and AI cuts coding time by a third, then coding becomes ~30% and coordination becomes ~70%. Brooks's Law didn't get weaker. Its diagnostic power got stronger, because the ratio shifted toward the part Brooks was actually warning about.
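Here is the arithmetic spelled out with the same illustrative 40/60 split and a one-third cut to coding time. Exact fractions avoid rounding noise:

```python
from fractions import Fraction

# Illustrative split, in percent of the original project time.
coding, coordination = Fraction(40), Fraction(60)
coding_after = coding * (1 - Fraction(1, 3))  # AI cuts coding time by a third
total_after = coding_after + coordination     # coordination time is unchanged

print(f"project time: {float(total_after):.1f} of the original 100")
print(f"coding share: {float(coding_after / total_after):.1%}")
print(f"coordination share: {float(coordination / total_after):.1%}")
```

Coding falls to about 31% and coordination rises to about 69%, matching the ~30/70 split above. Meanwhile, the project as a whole gets only about 13% shorter.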

What This Means for Engineering Leaders

Three operational implications:

- For late projects, stop measuring "engineering throughput" in isolation. Track the queue depth at review, deployment, and approval stages. That's where 2026 slippage actually accumulates.

- AI tools are a per-engineer multiplier, not a team multiplier. Budget AI productivity gains against individual KPIs. Do not assume team-level lead time will drop by the same percentage.

- Onboarding cost is still real. AI helps new hires read code faster; it does not give them political context, historical decisions, or the unwritten "we tried that in 2023" knowledge. Plan for ramp-up the way Brooks did.
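Here is a minimal sketch of the first implication. It assumes a hypothetical event log in which each work item records when it entered and left a stage such as `review`, `deploy`, or `approval`. The field and stage names are illustrative, not any particular tool's schema. The code reports current queue depth per stage and the mean wait of items that have already cleared it.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class StageVisit:
    item_id: str
    stage: str                   # hypothetical stage names: "review", "deploy", "approval"
    entered: datetime
    exited: Optional[datetime]   # None while the item is still waiting in this stage


def stage_report(visits: list[StageVisit]) -> dict[str, dict[str, float]]:
    """Per stage: items still queued, and mean wait in hours for items that left."""
    depth: dict[str, int] = defaultdict(int)
    waits: dict[str, list[timedelta]] = defaultdict(list)
    for v in visits:
        if v.exited is None:
            depth[v.stage] += 1
        else:
            waits[v.stage].append(v.exited - v.entered)
    report = {}
    for stage in set(depth) | set(waits):
        done = waits[stage]
        mean = sum(done, timedelta()) / len(done) if done else timedelta()
        report[stage] = {"queue_depth": depth[stage], "mean_wait_hours": mean.total_seconds() / 3600}
    return report


# Hypothetical sample data.
t0 = datetime(2026, 1, 5, 9, 0)
visits = [
    StageVisit("PR-1", "review", t0, t0 + timedelta(hours=4)),
    StageVisit("PR-2", "review", t0, t0 + timedelta(hours=20)),
    StageVisit("PR-3", "review", t0, None),
    StageVisit("PR-1", "deploy", t0 + timedelta(hours=4), t0 + timedelta(hours=5)),
]
print(stage_report(visits))
```

Depth and wait are linked by Little's law: the average number of items in a stage equals the arrival rate times the average time spent there. A depth that keeps rising while throughput stays flat therefore means waits are rising too.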

FAQ

What does Brooks's Law say? Adding manpower to a late software project makes it later. Frederick Brooks formulated it in The Mythical Man-Month (1975), based on leading IBM's OS/360 project.

Is Brooks's Law still relevant in the AI era? Yes, possibly more so. AI accelerates individual coding by 30-55%, but coordination overhead barely moved. The ratio of coordination time to coding time has likely increased.

How many communication channels does a team of 10 have? Forty-five. The formula is n × (n-1) / 2, so for n=10: 10 × 9 / 2 = 45 pairwise channels.

What is the "mythical man-month"? Brooks's argument that man-months are not interchangeable as a planning unit. Adding more people doesn't proportionally reduce calendar time, because of ramp-up cost and communication overhead.

Can you actually speed up a late project in 2026? Sometimes. Sidestep Brooks by reducing scope, removing dependencies, or improving the coordination surface (better docs, smaller review batches, async decisions). AI helps existing engineers ship faster, but adding new people to a late project usually still slows it down.